16 Tree Methods

Load the required packages:

## Loading required package: Matrix

## Loaded glmnet 4.1-8

## randomForest 4.7-1.2

## Type rfNews() to see new features/changes/bug fixes.

## Loaded gbm 2.2.2

## This version of gbm is no longer under development. Consider transitioning to gbm3, https://github.com/gbm-developers/gbm3

16.1 Decision Trees

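The decision tree method divides the training set into groups hierarchically, according to the values of different predictors. Each split uses a single variable, so the resulting grouping can be represented as a binary tree. To predict the class of an observation, find the group it belongs to and predict with the class of that group, i.e., the class held by most of its observations. Such a method is called a decision tree. Decision trees can be used for both classification and regression problems.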

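The advantages of decision trees are that they are easy to interpret and that categorical predictors pose no extra difficulty. However, their prediction accuracy may be worse than that of other supervised learning methods.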

Improvements include bagging, random forests, and boosting. All of them combine many trees; this usually improves accuracy at the cost of interpretability.

16.1.1 Regression Trees

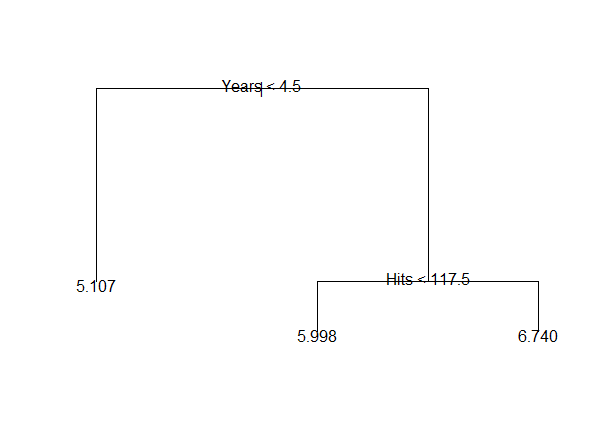

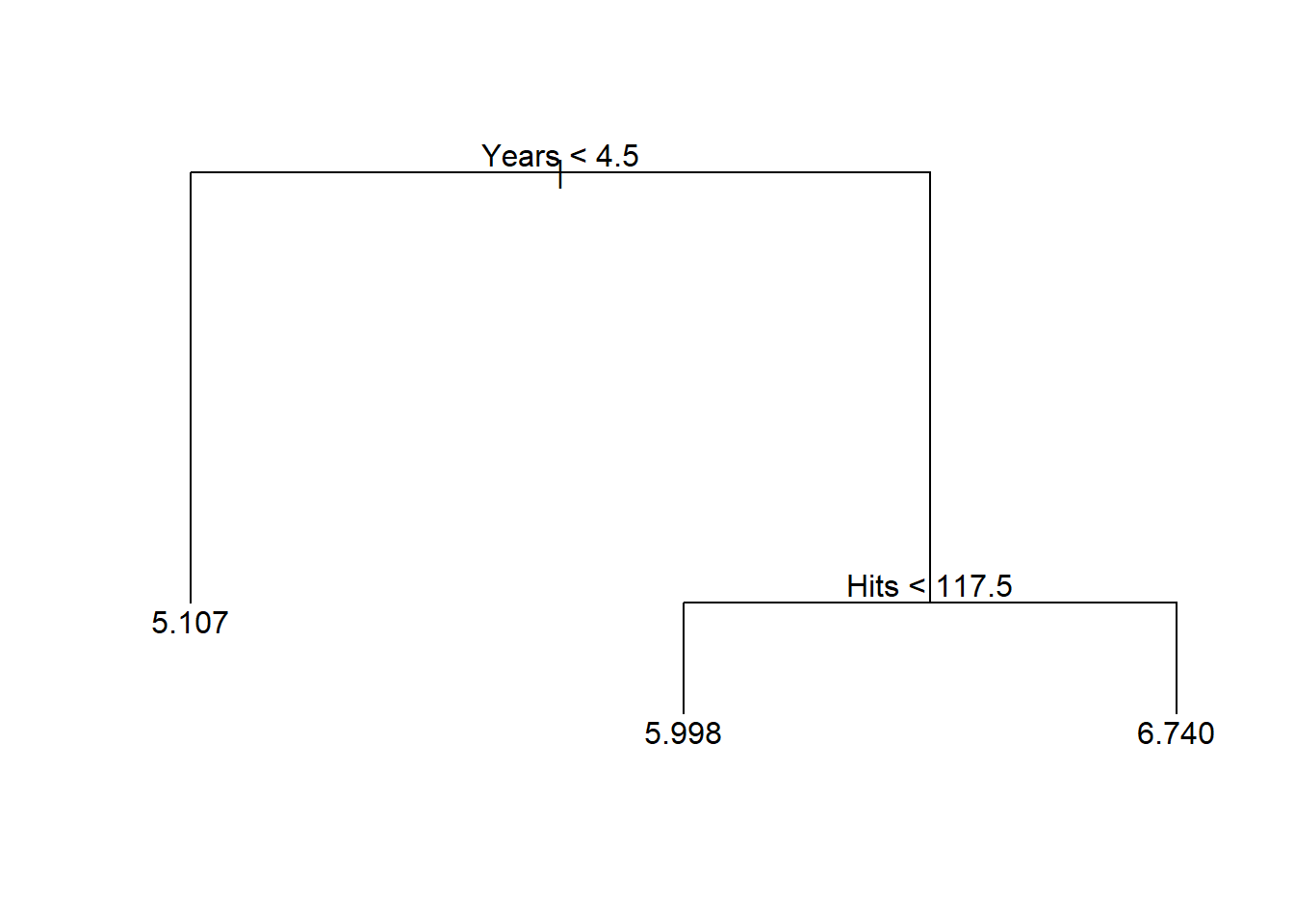

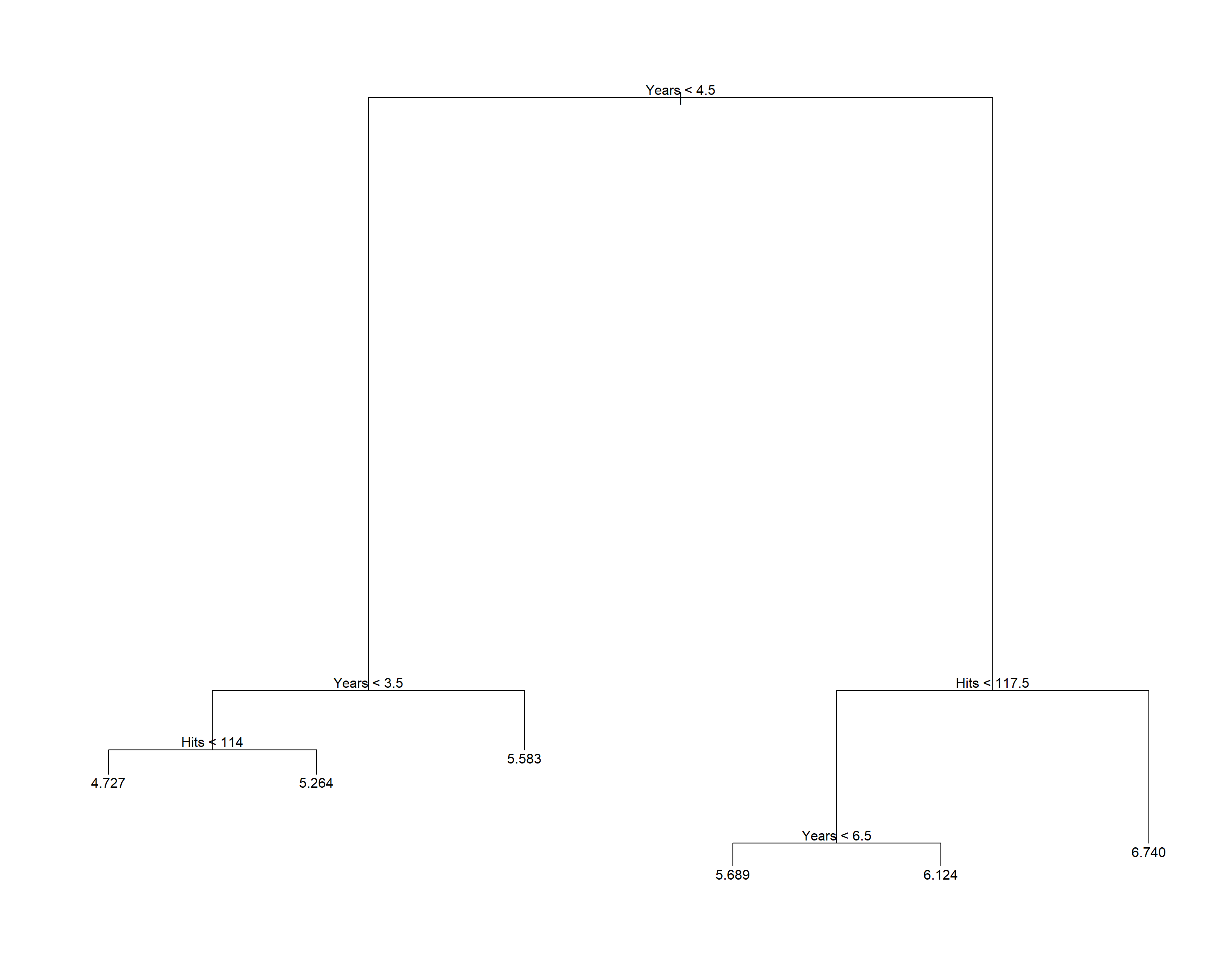

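Take the Hitters data as an example: Salary is the response and the other variables are predictors, and the goal is to predict salary. For simplicity, only two predictors are considered: Years (number of years in the major leagues) and Hits (number of hits in the previous year). Observations with missing values are removed, and the natural logarithm of Salary is used as the response. This gives the following tree (see Figure 16.1):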

Figure 16.1: A simple tree for the Hitters data

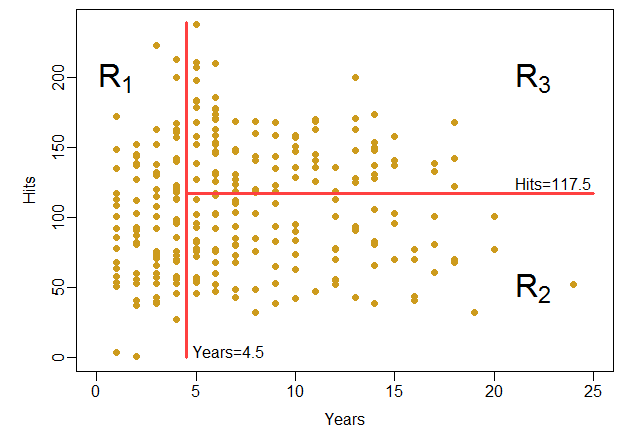

This tree partitions the space \(\mathbb R^2\) of (Years, Hits) values into three regions: \[\begin{aligned} R_1 =& \{ (\text{Years}, \text{Hits}):\; \text{Years} < 4.5 \}; \\ R_2 =& \{ (\text{Years}, \text{Hits}):\; \text{Years} \geq 4.5 \text{ and } \text{Hits} < 117.5 \}; \\ R_3 =& \{ (\text{Years}, \text{Hits}):\; \text{Years} \geq 4.5 \text{ and } \text{Hits} \geq 117.5 \} . \end{aligned}\] Within each region, the response is predicted by the mean response of the training observations in that region. See Figure 16.2.

Figure 16.2: Partition of the predictor space by the simple tree for the Hitters data

The text representation of this tree is:

node), split, n, deviance, yval

* denotes terminal node

1) root 263 207.20 5.927

2) Years < 4.5 90 42.35 5.107 *

3) Years > 4.5 173 72.71 6.354

6) Hits < 117.5 90 28.09 5.998 *

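7) Hits > 117.5 83 20.88 6.740 *

A node that is split into two branches according to the value of a variable is called an internal node; a node that is not split further is called a terminal node (leaf).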

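The split \(\text{Years}<4.5\) at the root and the split \(\text{Hits}<117.5\) below the \(\text{Years} \geq 4.5\) branch are the two internal nodes. \(R_1, R_2, R_3\) are the three leaves. At each leaf, the prediction is the mean response of the training data in that leaf.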

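These results show that, for predicting Salary, Years is more important than Hits: players with more years in the league earn more. Among players with more than 4.5 years, those with few Hits earn only moderately more than players with fewer than 4.5 years, whereas those with many Hits earn much more.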
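To read the three leaf values on the salary scale, one can back-transform them. A minimal R sketch, assuming (as in the ISLR documentation of Hitters) that Salary is measured in thousands of dollars; the names leaf_log_salary and leaf_salary are hypothetical. Since each leaf value is a mean of log(Salary), exp() gives the leaf's geometric mean salary, not its arithmetic mean.

```r
# Leaf predictions (yval) of log(Salary) from the tree text output above
leaf_log_salary <- c(R1 = 5.107, R2 = 5.998, R3 = 6.740)

# Back-transform to the Salary scale (assumed: thousands of dollars).
# exp() of a mean log value is the geometric mean salary of the leaf.
leaf_salary <- exp(leaf_log_salary)
print(round(leaf_salary, 1))

# Ratio of the predictions for the high-Hits leaf R3 and the low-Hits leaf R2
print(round(leaf_salary[["R3"]] / leaf_salary[["R2"]], 2))
```

This gives roughly 165, 403 and 846 (thousand dollars) for \(R_1\), \(R_2\), \(R_3\), so players in \(R_3\) are predicted to earn about 2.1 times as much as those in \(R_2\).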

A tree model may be oversimplified, but it is easy to interpret and to plot.

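Tree regression uses variable values as thresholds to partition the predictor space \(\mathbb R^p\) into \(J\) disjoint regions \(R_1, R_2, \dots, R_J\). For each observation to be predicted, if its predictor values fall in \(R_j\), its response is predicted by the mean response of the training observations in \(R_j\).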

16.1.1.1 How Regression Trees Are Split

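The regions produced by tree regression are high-dimensional rectangles, or boxes. We seek boxes \(R_1, \dots, R_J\) whose union is the predictor space and which minimize the residual sum of squares \[\begin{align} \text{RSS} = \sum_{j=1}^J \sum_{i \in R_j} (y_i - \hat y_{R_j})^2, \tag{16.1} \end{align}\] where \(\hat y_{R_j}\) is the mean response of the training observations in \(R_j\).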

To do this, a top-down, greedy method of recursive binary splitting is used.

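Top-down: start with all training observations in a single group and split successively, each time dividing one group into two according to the value of one predictor.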

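Greedy: at each step, only the split that reduces the RSS the most at that step is considered, without regard to its effect on the tree as a whole.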

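Before any split has been made, find a variable \(X_j\) and a value \(s_j\) such that the regions \[\begin{aligned} R_1(j, s_j) =\{ \boldsymbol x | x_j < s_j \}, \quad R_2(j, s_j) =\{ \boldsymbol x | x_j \geq s_j \} \end{aligned}\] have the smallest total RSS. Every variable is tested, and the variable and cut point that give the smallest total RSS over the two resulting regions are chosen.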

For a variable \(X_j\), finding \(s_j\) only requires enumerating all distinct sample values of \(X_j\).
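One greedy step can be sketched in base R: for each predictor, enumerate its distinct values as candidate cut points, compute the total RSS of the two regions, and keep the best \((j, s_j)\). The functions best_cut() and best_split() and the toy data are hypothetical illustrations, not code from the tree package.

```r
# Best cut point s for one numeric predictor x: try every distinct value as a
# candidate and return the one minimizing the RSS of {x < s} and {x >= s}
best_cut <- function(x, y) {
  cuts <- sort(unique(x))[-1]  # cutting at the smallest value would leave R1 empty
  if (length(cuts) == 0) return(list(s = NA, rss = Inf))
  rss <- vapply(cuts, function(s) {
    left <- y[x < s]
    right <- y[x >= s]
    sum((left - mean(left))^2) + sum((right - mean(right))^2)
  }, numeric(1))
  list(s = cuts[which.min(rss)], rss = min(rss))
}

# One greedy step: test every predictor (column of X) and keep the (j, s_j)
# whose two regions have the smallest total RSS
best_split <- function(X, y) {
  res <- lapply(seq_len(ncol(X)), function(j) best_cut(X[, j], y))
  rss <- vapply(res, function(r) r$rss, numeric(1))
  j <- which.min(rss)
  list(var = colnames(X)[j], s = res[[j]]$s, rss = rss[[j]])
}

# Hypothetical toy data with two predictors
X <- cbind(Years = c(1, 2, 3, 5, 6, 7, 8, 10),
           Hits  = c(80, 150, 60, 90, 100, 160, 170, 50))
y <- c(5.0, 5.1, 4.9, 6.0, 6.1, 6.5, 6.6, 6.0)
sp <- best_split(X, y)
str(sp)
```

On these toy data the chosen split is Years < 5 with total RSS 0.352; growing a tree repeats this search inside each current region.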

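Once the data have been split into two groups \(R_1\) and \(R_2\), find the best splitting variable and cut point \(s\) within each group, and keep only the one that gives the smaller total RSS. This yields three groups. Then find the best split of each group and keep only the one with the smallest total RSS, yielding four groups. Repeat until some stopping rule is met, e.g., all response values within each group are equal, or each group contains at most 5 observations. After splitting, the response of a test observation is predicted by the mean response of the region its predictor values fall into.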

16.1.1.2 Pruning

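A tree may become overly complex and overfit. Pruning some branches reduces model complexity, which improves interpretability and lowers variance at the cost of some increase in bias; overall, appropriate pruning can reduce the mean squared error on the test set.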

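Although complexity could be reduced by stopping the splitting early, this may miss good splits further down the tree. It is therefore better to grow a large tree first and then prune it.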

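The goal of pruning is to minimize the test mean squared error. The MSE of different subtrees can be estimated by cross-validation or with a test set. However, estimating the MSE of every possible subtree is too expensive, so only some subtrees are considered.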

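One pruning method is cost complexity pruning, also called weakest link pruning. It considers only a sequence of subtrees indexed by a tuning parameter \(\alpha\). For each \(\alpha>0\) there is a subtree \(T\) that minimizes \[\begin{aligned} \sum_{m=1}^{|T|} \sum_{i:\; x_i \in R_m} (y_i - \hat y_{R_m})^2 + \alpha |T| \end{aligned}\] where \(|T|\) is the number of terminal nodes of \(T\), \(R_m\) is the region corresponding to the \(m\)-th terminal node, and \(\hat y_{R_m}\) is the predicted response computed from the observations in \(R_m\).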

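\(\alpha=0\) corresponds to the unpruned tree. As \(\alpha\) increases, larger trees are penalized more, so smaller subtrees are selected; moreover, each change of subtree removes one split from the subtree chosen for the previous, smaller \(\alpha\), so the subtrees are nested.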

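The sequence of subtrees for all values of \(\alpha\) is easy to obtain. A suitable \(\alpha\), and hence a suitable subtree, can be chosen by cross-validation or with a test set. Once the optimal \(\alpha\) has been found, the pruned tree can be produced from all observations using that \(\alpha\).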
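To make the penalized criterion concrete, here is a small R sketch that applies it to the three nested subtrees of the simple Hitters tree, using the rounded node deviances printed in its text output as training RSS values. It only reuses those printed numbers; the name cc_best_size() is hypothetical and this is not how prune.tree() is implemented.

```r
# Training RSS of the nested subtrees of the simple Hitters tree, from the
# printed node deviances: root only; Years split; Years split + Hits split
size <- c(1, 2, 3)
rss  <- c(207.20, 42.35 + 72.71, 42.35 + 28.09 + 20.88)

# Size of the subtree minimizing RSS + alpha * |T|
cc_best_size <- function(alpha) size[which.min(rss + alpha * size)]
chosen <- sapply(c(0, 20, 50, 100), cc_best_size)
print(chosen)

# Weakest-link thresholds: the alpha above which removing one more split pays off
thresholds <- -diff(rss) / diff(size)
print(thresholds)
```

The 3-leaf tree is kept for \(\alpha\) below about 23.74, the 2-leaf tree for \(\alpha\) between about 23.74 and 92.14, and only the root above that, which illustrates the nesting described above.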

16.1.2 A Simple Regression Tree Demonstration with the Hitters Data

For the Hitters data, use Years and Hits as predictors to predict log(Salary).

Keep only the three variables Salary, Years and Hits of the Hitters data set, and only complete observations:

## 'data.frame': 263 obs. of 3 variables:

## $ Salary: num 475 480 500 91.5 750 ...

## $ Years : int 14 3 11 2 11 2 3 2 13 10 ...

## $ Hits : int 81 130 141 87 169 37 73 81 92 159 ...

## - attr(*, "na.action")= 'omit' Named int [1:59] 1 16 19 23 31 33 37 39 40 42 ...

## ..- attr(*, "names")= chr [1:59] "-Andy Allanson" "-Billy Beane" "-Bruce Bochte" "-Bob Boone" ...

## NULL

Grow the full tree:

Prune it to only 3 leaves:

Display the tree:

## node), split, n, deviance, yval

## * denotes terminal node

##

## 1) root 263 207.20 5.927

## 2) Years < 4.5 90 42.35 5.107 *

## 3) Years > 4.5 173 72.71 6.354

## 6) Hits < 117.5 90 28.09 5.998 *

## 7) Hits > 117.5 83 20.88 6.740 *

Display the summary:

##

## Regression tree:

## snip.tree(tree = tr1, nodes = c(6L, 2L))

## Number of terminal nodes: 3

## Residual mean deviance: 0.3513 = 91.33 / 260

## Distribution of residuals:

## Min. 1st Qu. Median Mean 3rd Qu. Max.

## -2.24000 -0.39580 -0.03162 0.00000 0.33380 2.55600

Plot the tree:

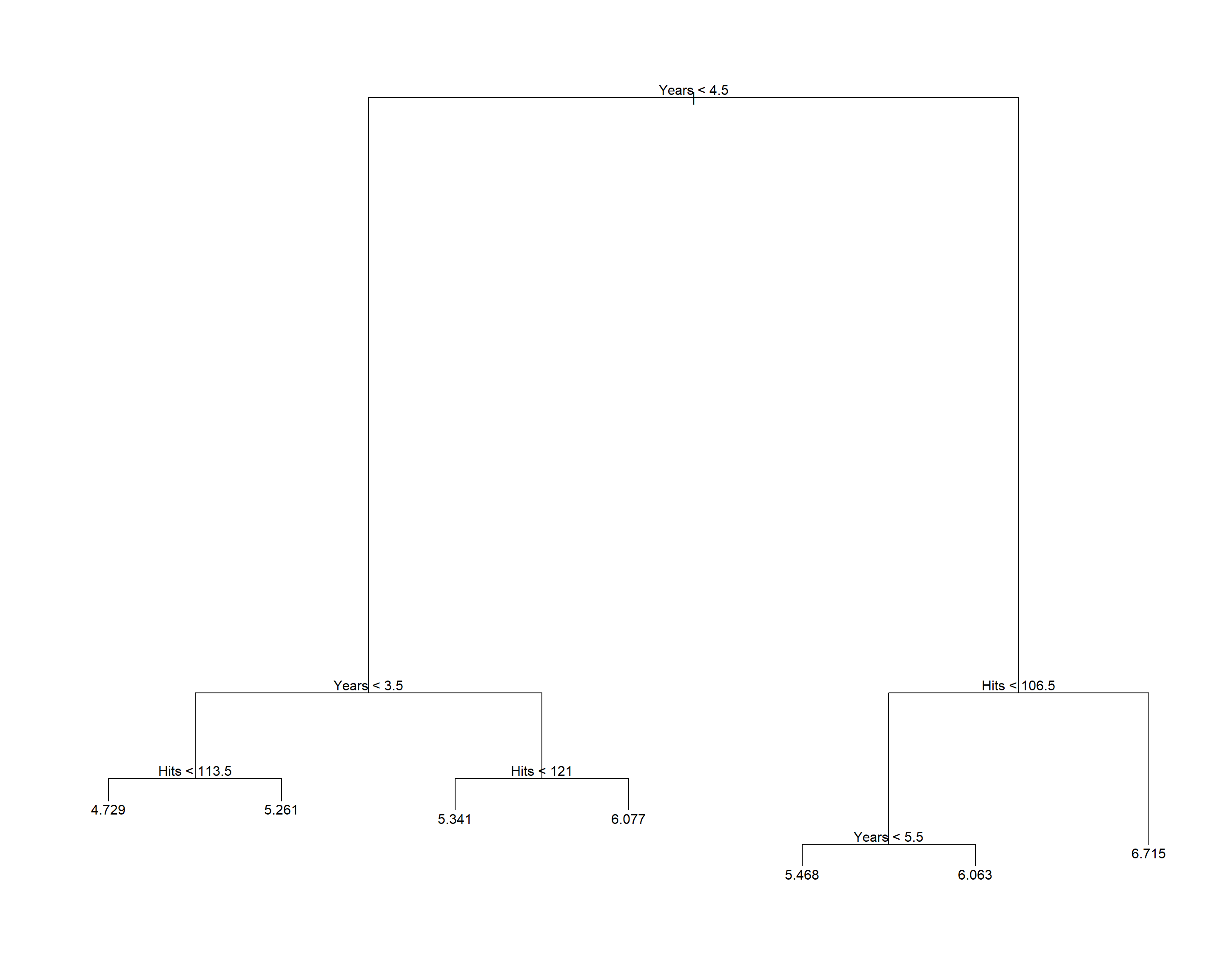

16.1.3 A Complete Regression Tree Demonstration with the Hitters Data

Randomly split the data into halves, one half as the training set and the other as the test set:

d <- na.omit(Hitters[,c('Salary', 'Years', 'Hits')])

set.seed(1)

train_id <- sample(nrow(d), size=round(0.5*nrow(d)))

train <- rep(FALSE, nrow(d))

train[train_id] <- TRUE

test <- (!train)

Grow an unpruned tree on the training set:

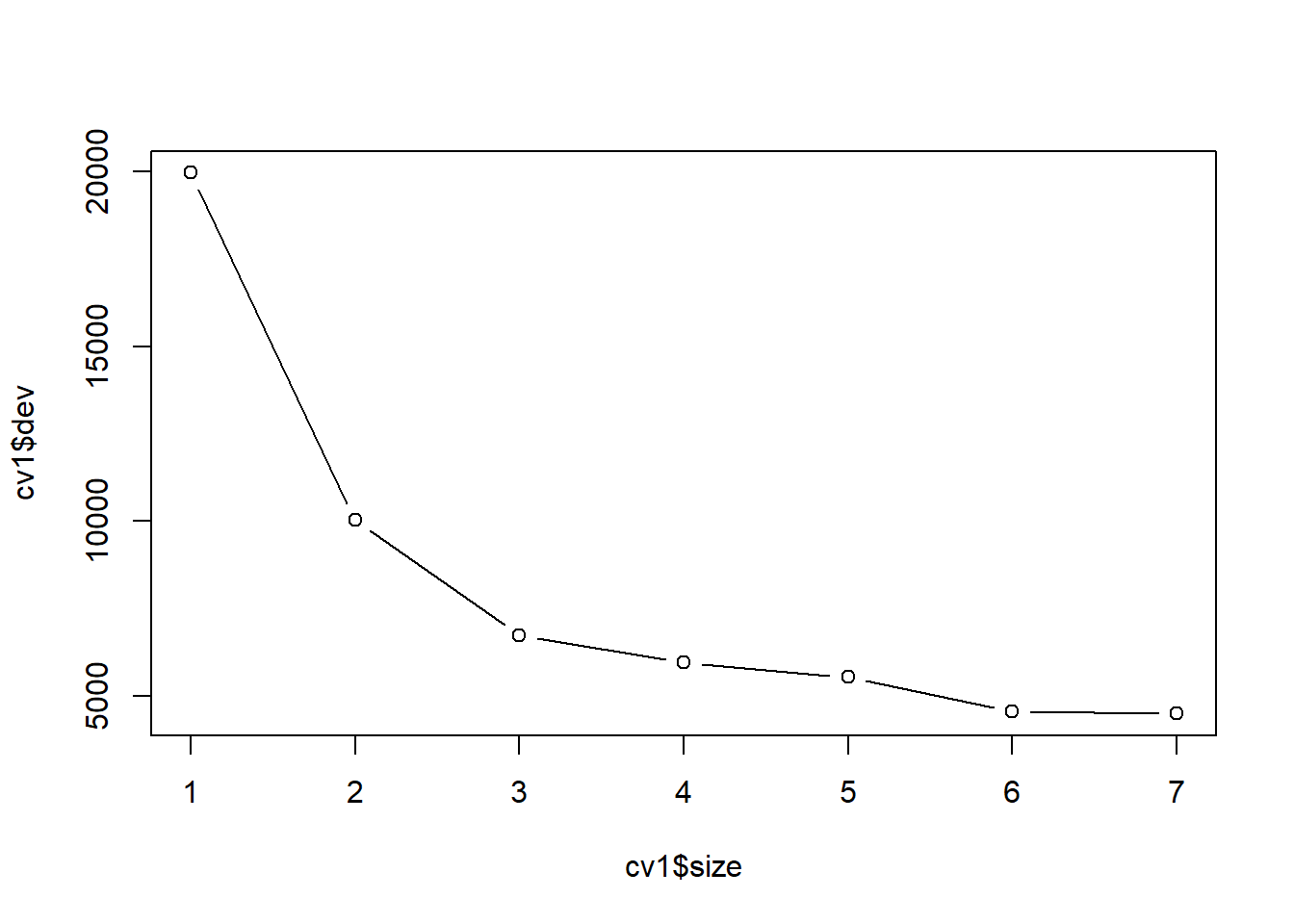

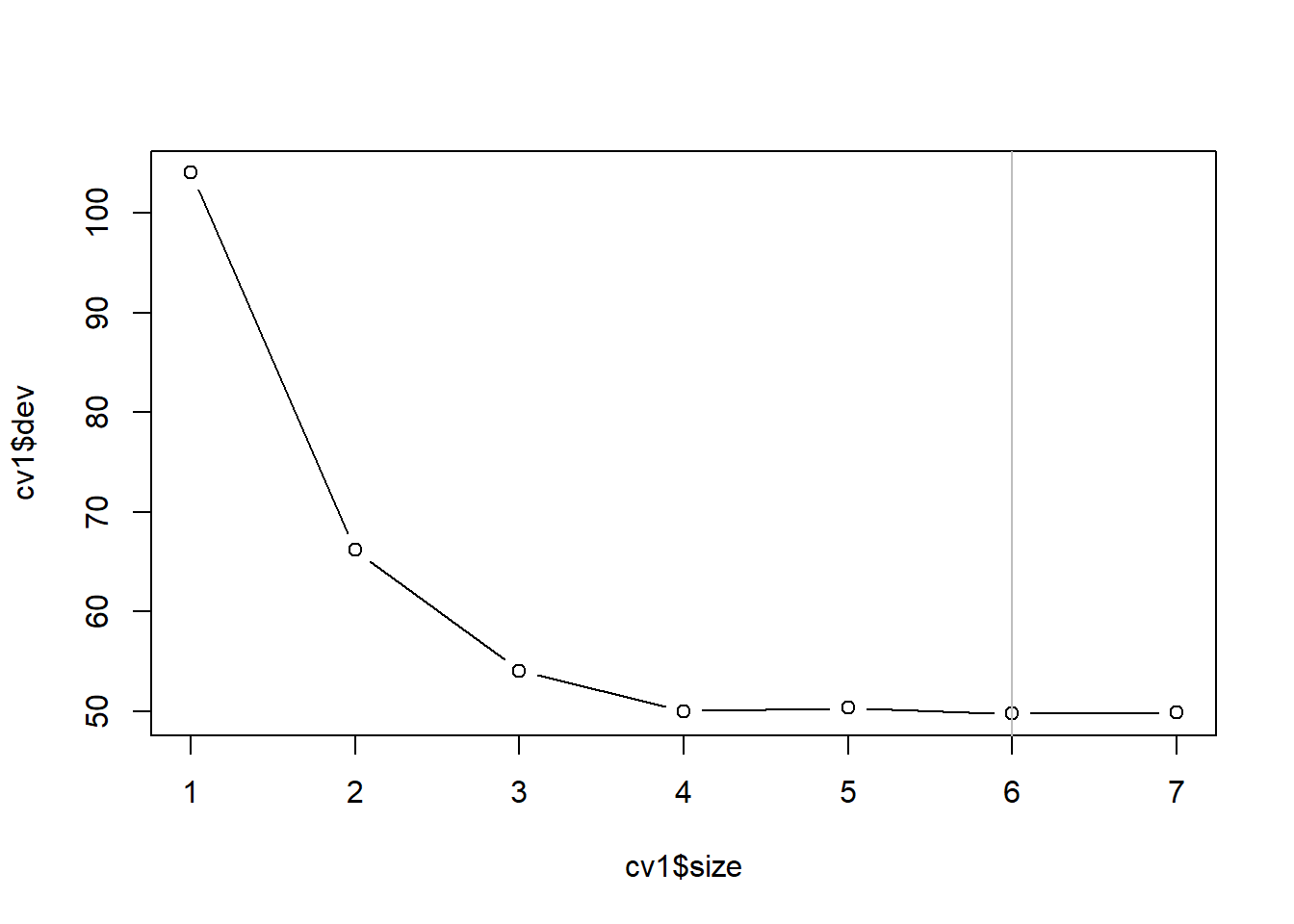

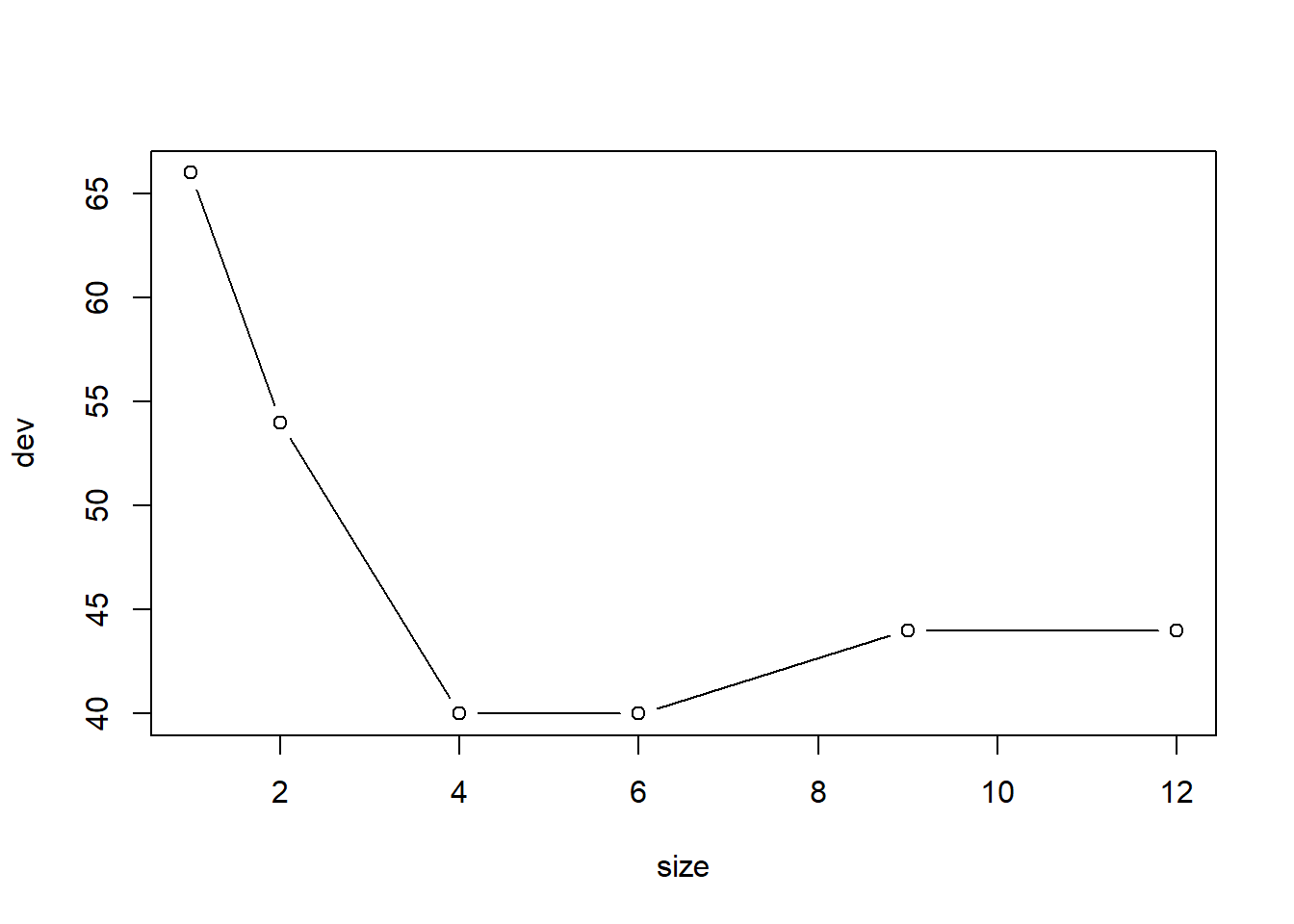

Use cross-validation on the unpruned training-set tree to find the optimal size:

## $size

## [1] 7 6 5 4 3 2 1

##

## $dev

## [1] 49.88386 49.78504 50.36061 50.00678 54.07967 66.20924 104.06611

##

## $k

## [1] -Inf 1.558635 1.642895 2.319001 6.275232 11.126931 43.728005

##

## $method

## [1] "deviance"

##

## attr(,"class")

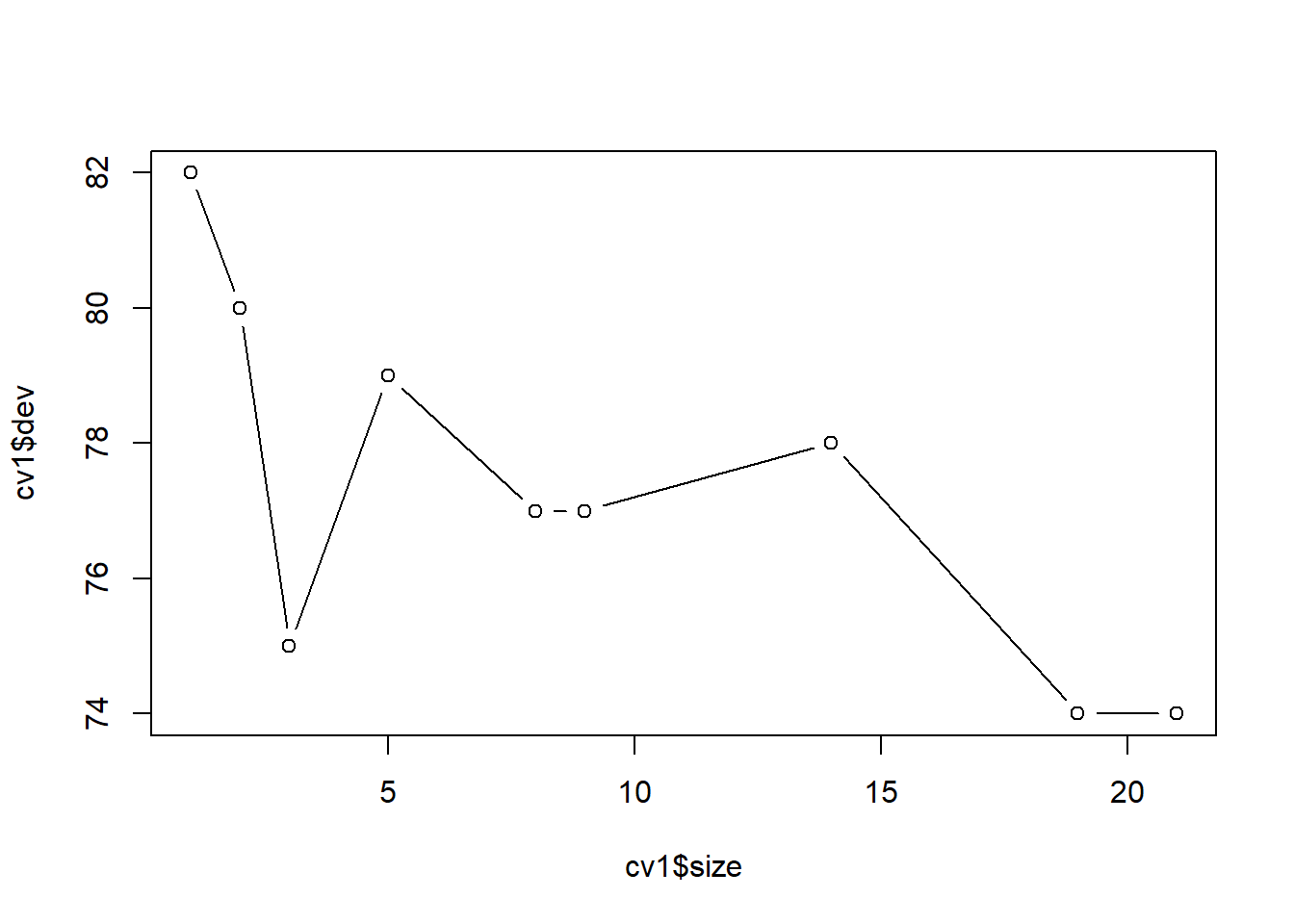

## [1] "prune" "tree.sequence"plot(cv1$size, cv1$dev, type='b')

best.size <- cv1$size[which.min(cv1$dev)[1]]

abline(v=best.size, col='gray')

The optimal size is 6. Prune the tree built on the training set to this size:

Compute the mean squared prediction error on the test set:

pred.test <- predict(tr1b, newdata=d[test,])

test.mse <- mean( (d[test, 'Salary'] - exp(pred.test))^2 )

test.mse

## [1] 135635.4

If the test-set responses are instead predicted by the mean response of the training set, the MSE is:

## [1] 224692.1

Grow an unpruned tree using all the data:

Prune it to the subtree size found on the training set:

16.1.4 Classification Trees

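Here a classification tree means a tree model whose response is categorical. A regression tree predicts in each region by the mean response of the observations in that region, whereas a classification tree predicts by the most common response class in the region. The fitted model contains not only the partition into regions and the most common class in each region, but also the class distribution within each region.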

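When constructing splits, the objective is no longer to minimize the RSS but to minimize the classification error rate. The error rate of a region is the proportion of its observations that do not belong to its most common class.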

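Let \(\hat p_{mk}\) denote the proportion of training observations in the \(m\)-th region that belong to the \(k\)-th class of the response. Then \[\begin{aligned} E = 1 - \max_{k} \hat p_{mk} \end{aligned}\] is the error rate of the \(m\)-th region. However, the error rate is not sensitive enough to distinguish well between the performance of different trees, so the Gini index or cross-entropy is generally used instead.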

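The Gini index is defined as \[\begin{aligned} G = \sum_{k=1}^K \hat p_{mk} (1 - \hat p_{mk}). \end{aligned}\] Clearly \(G\) is small when one of the proportions \(\hat p_{mk}\) is close to 1 and the others are close to 0; region \(m\) then consists almost entirely of one class of the response. \(G\) can therefore be seen as the class impurity of a region: a small \(G\) means the region is pure, while a large \(G\) means its classes are mixed and it should be split further.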

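Another measure of the class distribution in a region is the cross-entropy \[\begin{aligned} D = - \sum_{k=1}^K \hat p_{mk} \log \hat p_{mk} . \end{aligned}\] Clearly \(D \geq 0\), and \(D\) is small when one of the proportions \(\hat p_{mk}\) is close to 1 and the others are close to 0; region \(m\) then consists almost entirely of one class. So \(D\) also measures the class impurity of a region: a small \(D\) means the region is pure, while a large \(D\) means its classes are mixed and it should be split further. In fact, \(G\) and \(D\) take very similar values.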

When splitting, the Gini index or cross-entropy gives better splits.
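The point that the error rate is less sensitive can be checked on a small, hypothetical example: two splits of a node with 400 + 400 observations that have the same weighted error rate, where the Gini index and cross-entropy both favour the split producing a pure node. The functions below are a base-R sketch with hypothetical names, not the internals of the tree package; cross-entropy uses the natural logarithm as in \(D\) above.

```r
# Node impurity measures for a vector p of class proportions (summing to 1)
err_rate <- function(p) 1 - max(p)
gini     <- function(p) sum(p * (1 - p))
entropy  <- function(p) { p <- p[p > 0]; -sum(p * log(p)) }  # 0 * log 0 taken as 0

# Weighted impurity of a split, given class counts (one row per child node)
split_impurity <- function(counts, measure) {
  n_child <- rowSums(counts)
  props <- counts / n_child                 # divides each row by its total
  sum(n_child / sum(n_child) * apply(props, 1, measure))
}

# Two hypothetical candidate splits of a node with 400 + 400 observations
split_A <- rbind(c(300, 100), c(100, 300))
split_B <- rbind(c(200, 400), c(200, 0))   # second child is pure

imp <- sapply(list(A = split_A, B = split_B), function(s)
  c(error   = split_impurity(s, err_rate),
    gini    = split_impurity(s, gini),
    entropy = split_impurity(s, entropy)))
print(imp)
```

Both splits have weighted error rate 0.25, but the Gini index is 0.375 for A and 1/3 for B, so Gini (and likewise cross-entropy) prefers B.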

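When pruning, the Gini index or cross-entropy can still be used, but if the prediction accuracy of the pruned tree is the main concern, pruning by the error rate is more appropriate.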

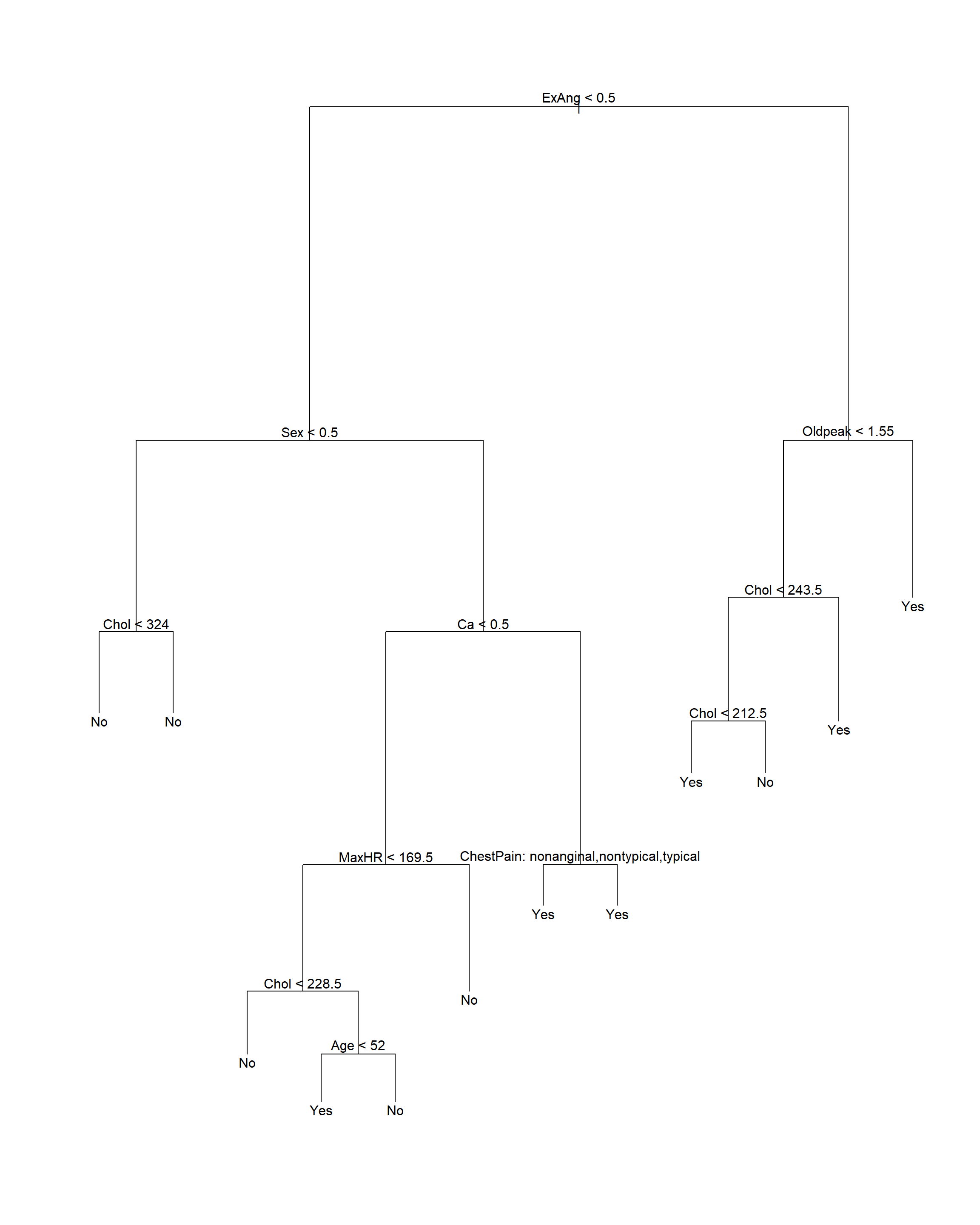

16.1.5 Classification Tree Demonstration with the Heart Data

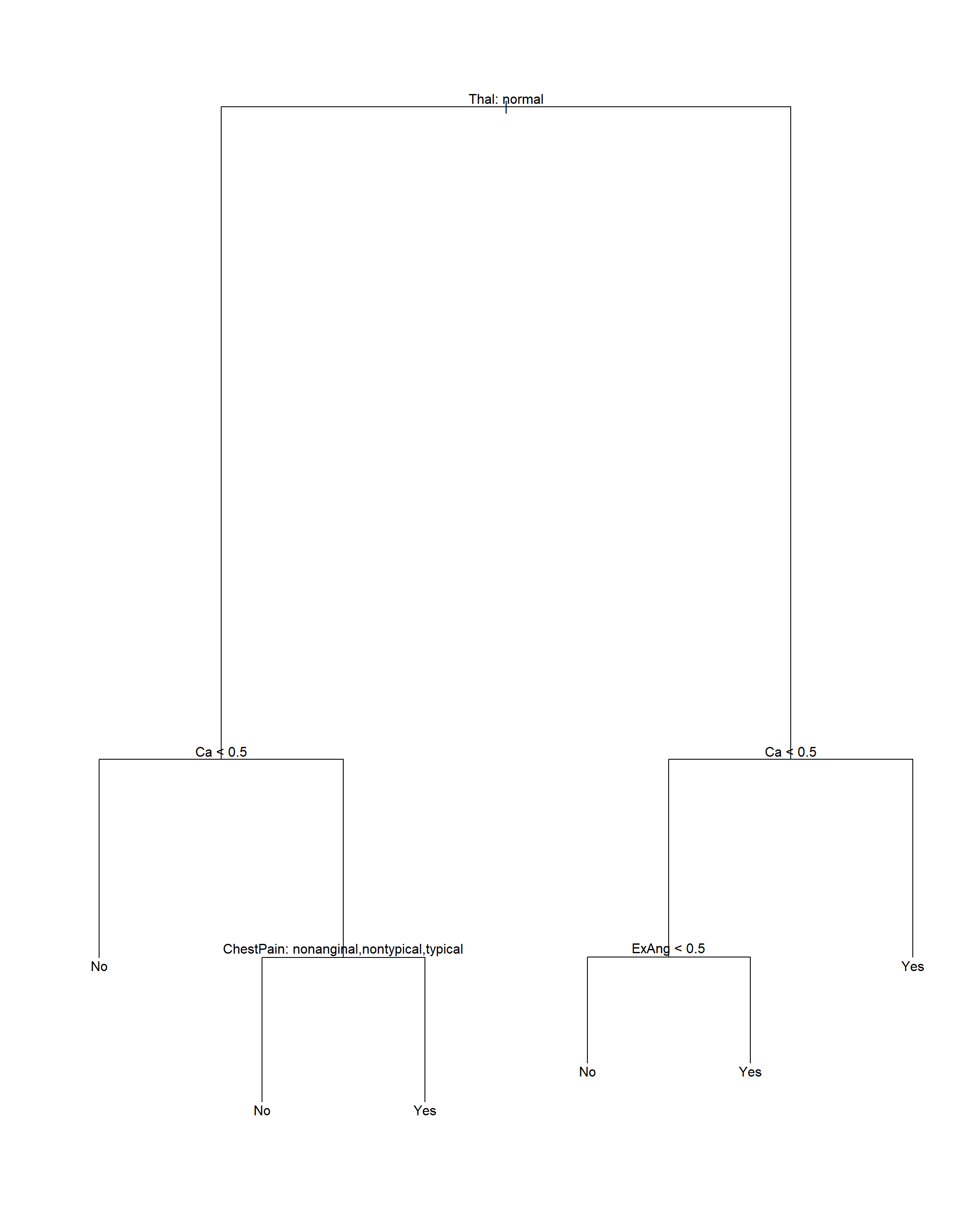

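The Heart data set contains data on 303 patients. The variable AHD is binary: Yes means heart disease confirmed by angiography, and No means no heart disease. There are 13 predictors, including Age, Sex and Chol (a cholesterol measurement).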

Read the Heart data set and remove observations with missing values:

Heart <- read.csv("Heart.csv", header=TRUE,

row.names=1,

stringsAsFactors=TRUE)

Heart <- na.omit(Heart)

str(Heart)

## 'data.frame': 297 obs. of 14 variables:

## $ Age : int 63 67 67 37 41 56 62 57 63 53 ...

## $ Sex : int 1 1 1 1 0 1 0 0 1 1 ...

## $ ChestPain: Factor w/ 4 levels "asymptomatic",..: 4 1 1 2 3 3 1 1 1 1 ...

## $ RestBP : int 145 160 120 130 130 120 140 120 130 140 ...

## $ Chol : int 233 286 229 250 204 236 268 354 254 203 ...

## $ Fbs : int 1 0 0 0 0 0 0 0 0 1 ...

## $ RestECG : int 2 2 2 0 2 0 2 0 2 2 ...

## $ MaxHR : int 150 108 129 187 172 178 160 163 147 155 ...

## $ ExAng : int 0 1 1 0 0 0 0 1 0 1 ...

## $ Oldpeak : num 2.3 1.5 2.6 3.5 1.4 0.8 3.6 0.6 1.4 3.1 ...

## $ Slope : int 3 2 2 3 1 1 3 1 2 3 ...

## $ Ca : int 0 3 2 0 0 0 2 0 1 0 ...

## $ Thal : Factor w/ 3 levels "fixed","normal",..: 1 2 3 2 2 2 2 2 3 3 ...

## $ AHD : Factor w/ 2 levels "No","Yes": 1 2 2 1 1 1 2 1 2 2 ...

## - attr(*, "na.action")= 'omit' Named int [1:6] 88 167 193 267 288 303

## ..- attr(*, "names")= chr [1:6] "88" "167" "193" "267" ...

| Variable | Min. | 1st Qu. | Median | Mean | 3rd Qu. | Max. |
|---|---|---|---|---|---|---|
| Age | 29.00 | 48.00 | 56.00 | 54.54 | 61.00 | 77.00 |
| Sex | 0.0000 | 0.0000 | 1.0000 | 0.6768 | 1.0000 | 1.0000 |
| RestBP | 94.0 | 120.0 | 130.0 | 131.7 | 140.0 | 200.0 |
| Chol | 126.0 | 211.0 | 243.0 | 247.4 | 276.0 | 564.0 |
| Fbs | 0.0000 | 0.0000 | 0.0000 | 0.1448 | 0.0000 | 1.0000 |
| RestECG | 0.0000 | 0.0000 | 1.0000 | 0.9966 | 2.0000 | 2.0000 |
| MaxHR | 71.0 | 133.0 | 153.0 | 149.6 | 166.0 | 202.0 |
| ExAng | 0.0000 | 0.0000 | 0.0000 | 0.3266 | 1.0000 | 1.0000 |
| Oldpeak | 0.000 | 0.000 | 0.800 | 1.056 | 1.600 | 6.200 |
| Slope | 1.000 | 1.000 | 2.000 | 1.603 | 2.000 | 3.000 |
| Ca | 0.0000 | 0.0000 | 0.0000 | 0.6768 | 1.0000 | 3.0000 |

| Factor | Level counts |
|---|---|
| ChestPain | asymptomatic: 142, nonanginal: 83, nontypical: 49, typical: 23 |
| Thal | fixed: 18, normal: 164, reversable: 115 |
| AHD | No: 160, Yes: 137 |

16.1.5.1 Splitting into Training and Test Sets

Simply split the observations into halves, a training set and a test set:

set.seed(1)

train_id <- sample(nrow(Heart), size=round(0.5*nrow(Heart)))

train <- rep(FALSE, nrow(Heart))

train[train_id] <- TRUE

test <- (!train)

test.y <- Heart[["AHD"]][test]

Grow an unpruned classification tree on the training set:

Note: the tree() function requires categorical variables to be factors; character data cannot be used directly as predictors.

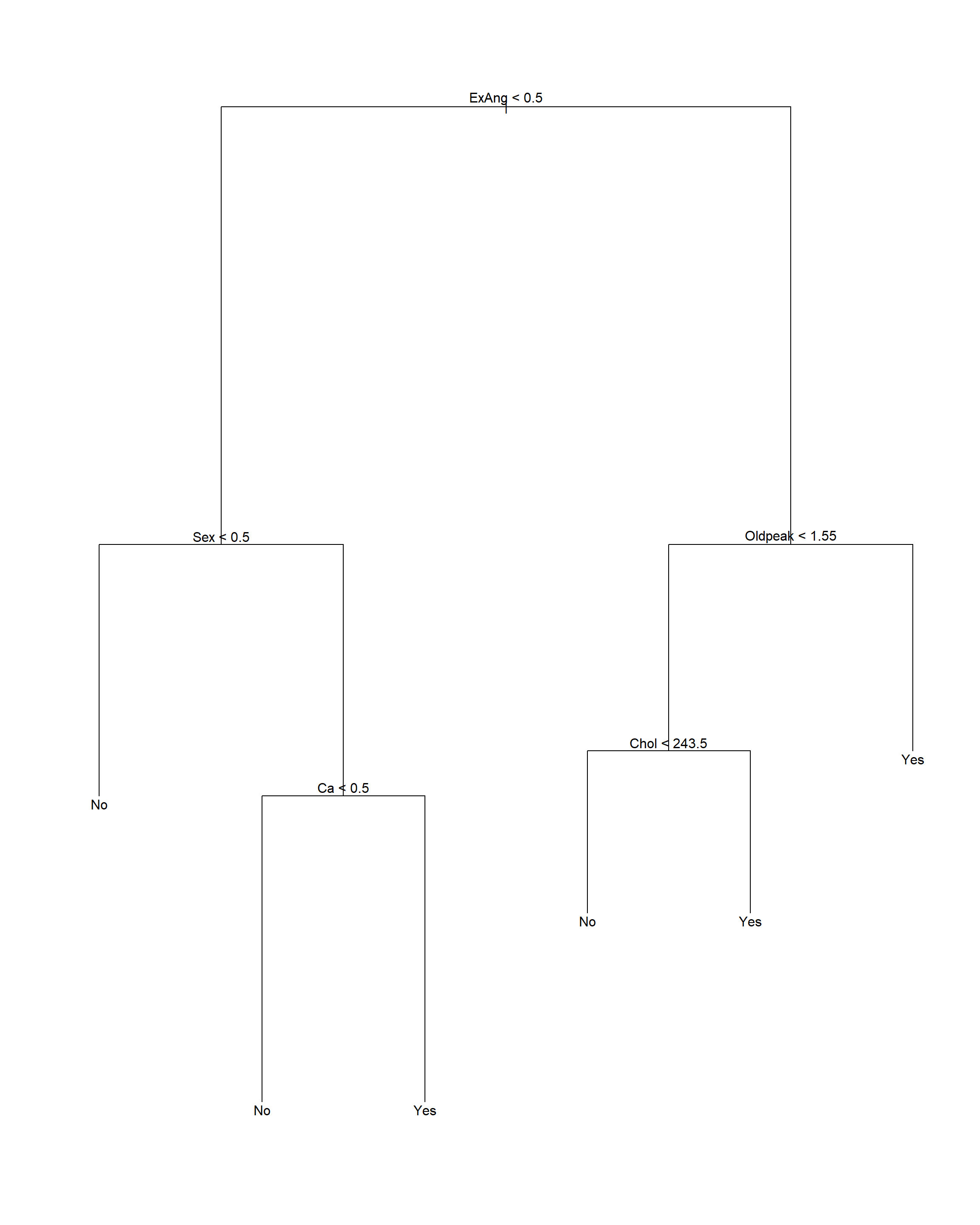

16.1.5.2 Appropriate Pruning

Use cross-validation to determine how many leaves to keep, pruning by the misclassification rate:

## $size

## [1] 12 9 6 4 2 1

##

## $dev

## [1] 44 44 40 40 54 66

##

## $k

## [1] -Inf 0.000000 1.666667 3.000000 7.000000 26.000000

##

## $method

## [1] "misclass"

##

## attr(,"class")

## [1] "prune" "tree.sequence"

The optimal size is 6 (sizes 6 and 4 tie with 40 cross-validated misclassifications).

Prune the training-set tree accordingly:

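Note that in the display of the pruned tree, some internal nodes split on categorical predictors. For such a split, observations taking certain specified categories go to the Yes branch, and those taking the other categories go to the No branch.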

16.2 Bagging and Random Forests

16.2.1 Bagging

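A classification tree can change a great deal across different training/test splits, which shows that its predictions have high variance. Following the bootstrap idea, many training sets can be drawn at random and the models fitted to them averaged, which reduces the variance of the predictions.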

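The approach is to draw \(B\) training sets from the training set by sampling with replacement. Let \(\hat f^{*b}(\cdot)\) be the regression function obtained from the \(b\)-th bootstrap training set; the final regression function is their average: \[\begin{aligned} \hat f_{\text{bagging}}(x) = \frac{1}{B} \sum_{b=1}^B \hat f^{*b}(x) \end{aligned}\] This is called bagging. Bagging is very effective at improving the accuracy of classification and regression trees.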

The steps of bagging are:

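- Draw \(B\) bootstrap training sets from the training set by random sampling with replacement, with \(B\) between a few hundred and a few thousand.

- Fit an unpruned tree to each bootstrap training set.

- If the response is continuous, predict a test observation by the average of the predictions of all trees.

- If the response is categorical, predict a test observation by a majority vote over the classes predicted by all trees.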
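A minimal base-R sketch of these steps for a continuous response. To stay self-contained it bags a one-split regression stump on a single predictor instead of an unpruned tree (a simplification of the method described above), and fit_stump(), predict_stump() and bag_predict() are hypothetical names.

```r
# One-split regression tree (stump) on a single predictor x, chosen by minimal RSS
fit_stump <- function(x, y) {
  cuts <- sort(unique(x))[-1]
  if (length(cuts) == 0) return(list(s = Inf, left = mean(y), right = mean(y)))
  rss <- vapply(cuts, function(s) {
    l <- y[x < s]; r <- y[x >= s]
    sum((l - mean(l))^2) + sum((r - mean(r))^2)
  }, numeric(1))
  s <- cuts[which.min(rss)]
  list(s = s, left = mean(y[x < s]), right = mean(y[x >= s]))
}
predict_stump <- function(fit, newx) ifelse(newx < fit$s, fit$left, fit$right)

# Bagging: fit the base learner on B bootstrap samples and average the predictions
bag_predict <- function(x, y, newx, B = 200) {
  n <- length(y)
  preds <- vapply(seq_len(B), function(b) {
    idx <- sample.int(n, n, replace = TRUE)       # bootstrap sample of size n
    predict_stump(fit_stump(x[idx], y[idx]), newx)
  }, numeric(length(newx)))
  if (is.null(dim(preds))) preds <- matrix(preds, nrow = 1)  # single newx case
  rowMeans(preds)                                 # (1/B) * sum_b f^{*b}(newx)
}

set.seed(1)
x <- runif(100)
y <- ifelse(x < 0.5, 1, 3) + rnorm(100, sd = 0.3)
p <- bag_predict(x, y, newx = c(0.2, 0.8))
print(p)
```

For a categorical response, the averaging line would be replaced by a majority vote over the predicted classes.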

Bagging can also be used to improve other regression and classification methods.

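After bagging, the model can no longer be displayed as a tree diagram, so it is harder to interpret. However, the importance of each predictor in prediction can still be measured. In regression problems, the decrease in RSS due to splits on each predictor, averaged over all \(B\) trees, measures its importance. In classification problems, the decrease in the Gini index due to splits on each predictor, averaged over all \(B\) trees, is used instead.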

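Besides a test set or cross-validation, the out-of-bag prediction error can also be used. Each bootstrap training set obtained by resampling leaves out some observations; on average, about one third of the observations are left out of each bootstrap training set. For each observation, about \(B/3\) trees did not use it, and the average of these trees' predictions can be used to predict it, giving an error estimate. The mean squared error or misclassification rate obtained in this way is called the out-of-bag (OOB) estimate. Its advantage is that it requires little extra work.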
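The "about one third" figure comes from the probability that a given observation is missed by all \(n\) draws of a bootstrap sample, \((1 - 1/n)^n\), which tends to \(e^{-1} \approx 0.368\). A short R sketch checking this by simulation; \(n = 263\) is chosen only to match the size of the Hitters data, and p_oob() is a hypothetical name.

```r
# Probability that a fixed observation is missed by all n draws of a bootstrap sample
p_oob <- function(n) (1 - 1/n)^n
print(c(p_oob_263 = p_oob(263), limit = exp(-1)))

# Monte Carlo check: average fraction of observations left out of a bootstrap sample
set.seed(1)
n <- 263
left_out <- replicate(2000, {
  idx <- sample.int(n, n, replace = TRUE)
  mean(!(seq_len(n) %in% idx))
})
print(mean(left_out))
```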

16.2.1.1 Bagging Demonstration with the Hitters Data

Apply bagging to the training set:

set.seed(1)

d <- na.omit(Hitters)

train_id <- sample(nrow(d), size=round(0.5*nrow(d)))

train <- rep(FALSE, nrow(d))

train[train_id] <- TRUE

test <- (!train)

bag1 <- randomForest(log(Salary) ~ ., data=d,

subset=train, mtry=ncol(d)-1, importance=TRUE)

bag1

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = d, mtry = ncol(d) - 1, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 19

##

## Mean of squared residuals: 0.2488363

## % Var explained: 68.1

Note that randomForest() actually implements random forests, but when mtry equals the number of predictors, it performs bagging.

Predict on the test set:

pred2 <- predict(bag1, newdata=d[test,])

test.mse2 <- mean( (d[test, 'Salary'] - exp(pred2))^2 )

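test.mse2

## [1] 90171.44

This test MSE is clearly lower than that of the pruned single tree (135635.4), although the single tree used only Years and Hits, while bagging uses all 19 predictors.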

Apply bagging to the full data set:

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = d, mtry = ncol(d) - 1, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 19

##

## Mean of squared residuals: 0.1873377

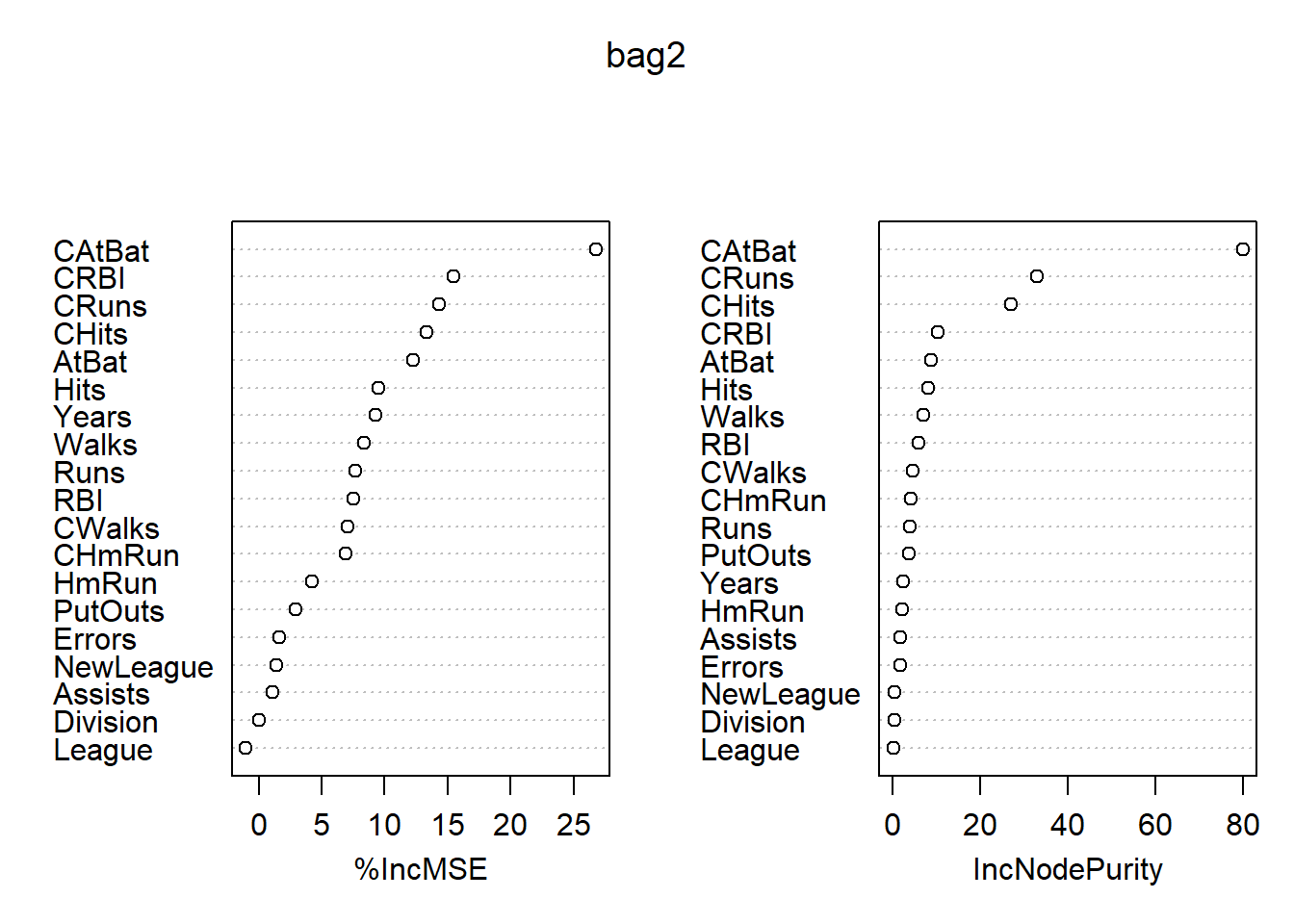

## % Var explained: 76.22变量的重要度数值和图形: 各变量的重要度数值及其图形:

## %IncMSE IncNodePurity

## AtBat 12.24054066 8.5996425

## Hits 9.47716109 8.0146504

## HmRun 4.19762724 2.1570060

## Runs 7.68565733 3.9542718

## RBI 7.51172719 5.7689615

## Walks 8.34560927 6.8880129

## Years 9.25986753 2.2505365

## CAtBat 26.74337522 79.8379721

## CHits 13.34178783 27.0067167

## CHmRun 6.93413792 3.9886086

## CRuns 14.33934038 32.9637351

## CRBI 15.42782700 10.2720711

## CWalks 7.02126823 4.6201072

## League -1.00937275 0.1668663

## Division 0.03884951 0.2590991

## PutOuts 2.96962100 3.7135650

## Assists 1.06380884 1.7233765

## Errors 1.62377900 1.6439786

## NewLeague 1.42880310 0.3362676

The most important predictor is CAtBat, followed by CRuns, CHits, CRBI, etc.

16.2.1.2 Bagging Demonstration with the Heart Data

Apply bagging to the training set:

set.seed(1)

train_id <- sample(nrow(Heart), size=round(0.5*nrow(Heart)))

train <- rep(FALSE, nrow(Heart))

train[train_id] <- TRUE

test <- (!train)

test.y <- Heart[["AHD"]][test]

bag1 <- randomForest(AHD ~ ., data=Heart,

subset=train, mtry=13, importance=TRUE)

bag1

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, mtry = 13, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 13

##

## OOB estimate of error rate: 22.97%

## Confusion matrix:

## No Yes class.error

## No 70 13 0.1566265

## Yes 21 44 0.3230769

Note that randomForest() actually implements random forests, but when mtry equals the number of predictors, it performs bagging.

The OOB error rate (22.97%) is relatively poor.

Predict on the test set:

## test.y

## pred2 No Yes

## No 66 16

## Yes 11 56

## [1] 0.1812081

The test error rate is about 18%.

Apply bagging to the full data set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, mtry = 13, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 13

##

## OOB estimate of error rate: 19.53%

## Confusion matrix:

## No Yes class.error

## No 134 26 0.1625000

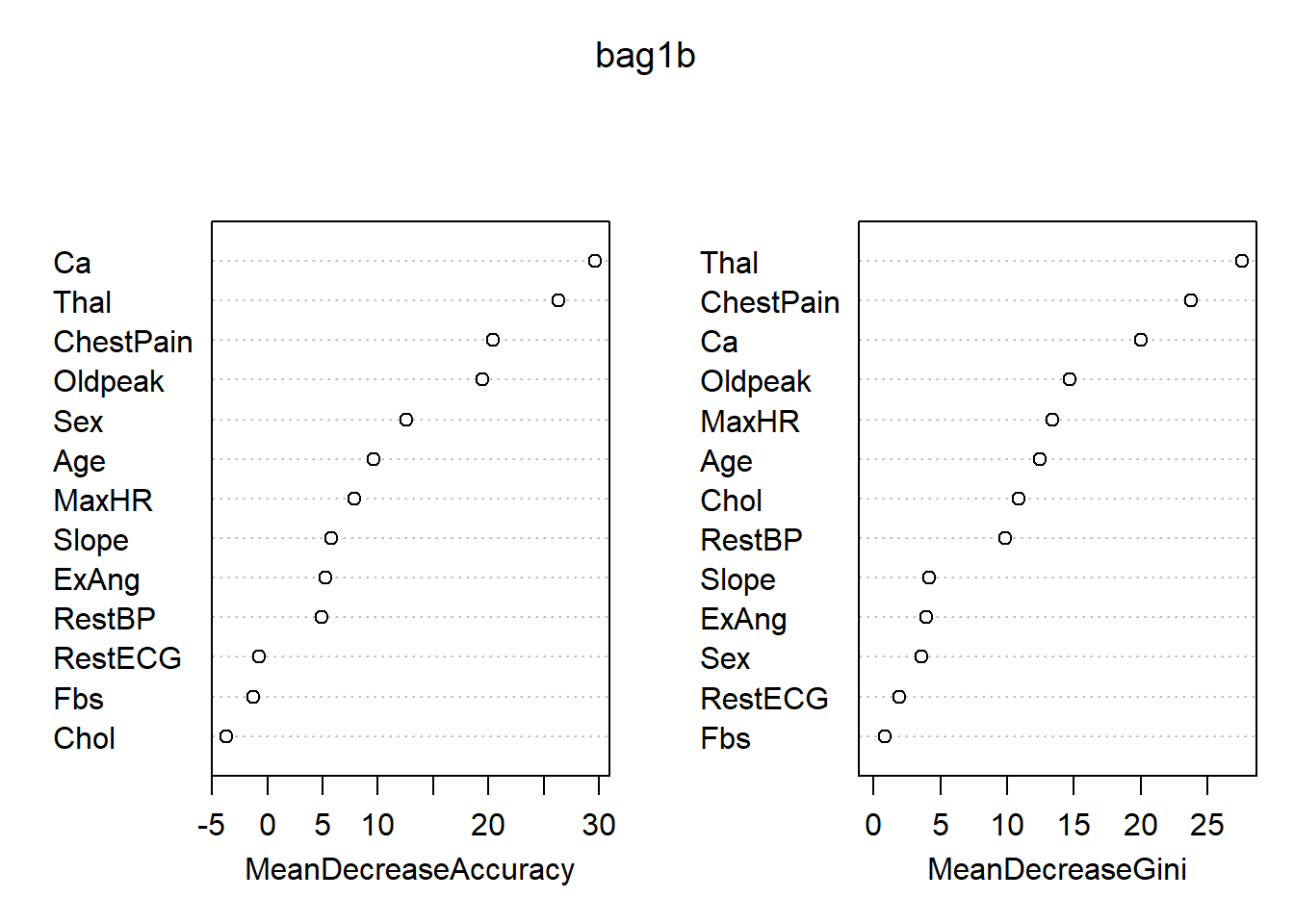

## Yes 32 105 0.2335766各变量的重要度数值及其图形:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## Age 7.5914270 5.9300895 9.5901487 12.423534

## Sex 11.1035002 4.4224309 12.5552921 3.606976

## ChestPain 11.8110968 17.8134383 20.3909869 23.737158

## RestBP 4.2851096 2.6043117 4.9076443 9.865693

## Chol -1.9750832 -3.4792229 -3.6808119 10.838036

## Fbs 0.7931423 -2.7452667 -1.2269553 0.874251

## RestECG -1.7922866 0.8798907 -0.7548197 1.895233

## MaxHR 8.5471129 1.4362163 7.8503704 13.396300

## ExAng 1.8258307 5.7732467 5.2672188 3.941524

## Oldpeak 15.2223889 13.0068694 19.4903808 14.709831

## Slope 2.9181877 5.2329194 5.8296096 4.200541

## Ca 24.3509148 18.4287501 29.6110614 20.004071

## Thal 21.0348018 18.0554814 26.3633854 27.572038

The most important variables are Thal, ChestPain and Ca.

16.2.2 Random Forests

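Random forests are similar to bagging, but they try to make the averaged trees closer to independent. Multiple bootstrap training sets are still drawn by sampling with replacement, but when the tree is grown on each bootstrap training set, each split considers not all predictors but only a randomly chosen subset of them.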

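For classification trees, the number of predictors considered at each split is usually \(m \approx \sqrt{p}\). For example, with the 13 predictors of the Heart data, \(\sqrt{13} \approx 3.6\), so only 3 or 4 randomly chosen predictors are considered at each split (randomForest() uses \(\lfloor \sqrt{13} \rfloor = 3\)).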

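The idea behind random forests is that averaging positively correlated quantities does not reduce variance very well, while averaging independent ones does. If there is one dominant predictor, then without restrictions it will almost always be used for the first split, making the \(B\) trees very similar. Random forests address this by restricting the set of variables available at each split.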
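The variance argument can be made precise: if \(B\) identically distributed predictions each have variance \(\sigma^2\) and pairwise correlation \(\rho\), their average has variance \(\rho\sigma^2 + (1-\rho)\sigma^2/B\), so increasing \(B\) cannot push the variance below \(\rho\sigma^2\); decorrelating the trees lowers this floor. An R simulation sketch checking the formula, with hypothetical helper names and a shared normal component used to induce the correlation.

```r
# Theoretical variance of the mean of B equicorrelated variables
var_of_mean <- function(rho, B, sigma2 = 1) rho * sigma2 + (1 - rho) * sigma2 / B

# Simulated version: each column has variance 1 and pairwise correlation rho
simulate_var_of_mean <- function(rho, B, reps = 20000) {
  z <- rnorm(reps)                         # shared component (added to every column)
  e <- matrix(rnorm(reps * B), reps, B)    # independent components
  x <- sqrt(rho) * z + sqrt(1 - rho) * e
  var(rowMeans(x))
}

set.seed(1)
sim_05 <- simulate_var_of_mean(0.5, 100)
sim_0  <- simulate_var_of_mean(0, 100)
print(c(theory_rho_0.5 = var_of_mean(0.5, 100), sim_rho_0.5 = sim_05,
        theory_rho_0 = var_of_mean(0, 100), sim_rho_0 = sim_0))
```

With \(\rho = 0.5\) and \(B = 100\), averaging only brings the variance down to about 0.505, compared with 0.01 for independent predictions.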

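The random-forest idea of restricting each fit to a random subset of predictors can also be used with other regression and classification methods.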

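Both bagging and random forests can be implemented with the randomForest() function of the R package randomForest. When the mtry argument is set to the number of predictors, the function performs bagging; when mtry takes its default value, it runs the random forest algorithm. In that case randomForest() considers \(m \approx p/3\) predictors at each split for regression problems and \(m \approx \sqrt{p}\) for classification problems.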
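The defaults can be written out explicitly. A small R sketch, assuming the documented randomForest() defaults floor(sqrt(p)) for classification and max(floor(p/3), 1) for regression; default_mtry() is a hypothetical helper, and the four calls reproduce the "No. of variables tried at each split" values printed in this chapter.

```r
# Default number of predictors tried at each split, following the randomForest()
# documentation: floor(sqrt(p)) for classification, max(floor(p/3), 1) for regression
default_mtry <- function(p, classification) {
  if (classification) floor(sqrt(p)) else max(floor(p / 3), 1)
}

print(default_mtry(19, classification = FALSE))  # Hitters, 19 predictors
print(default_mtry(13, classification = TRUE))   # Heart, 13 predictors
print(default_mtry(13, classification = FALSE))  # Boston, 13 predictors
print(default_mtry(10, classification = TRUE))   # Carseats (High ~ . - Sales), 10 predictors
```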

16.2.2.1 Random Forest Demonstration with the Hitters Data

Apply random forests to the training set:

set.seed(1)

d <- na.omit(Hitters)

train_id <- sample(nrow(d), size=round(0.5*nrow(d)))

train <- rep(FALSE, nrow(d))

train[train_id] <- TRUE

test <- (!train)

rf1 <- randomForest(log(Salary) ~ ., data=d,

subset=train, importance=TRUE)

rf1

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = d, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 6

##

## Mean of squared residuals: 0.2437535

## % Var explained: 68.75

With the default mtry, the random forest algorithm is performed.

Predict on the test set:

pred3 <- predict(rf1, newdata=d[test,])

test.mse3 <- mean( (d[test, 'Salary'] - exp(pred3))^2 )

test.mse3

## [1] 96229.2

The result is close to that of bagging (90171.44) and clearly better than the pruned single tree (135635.4).

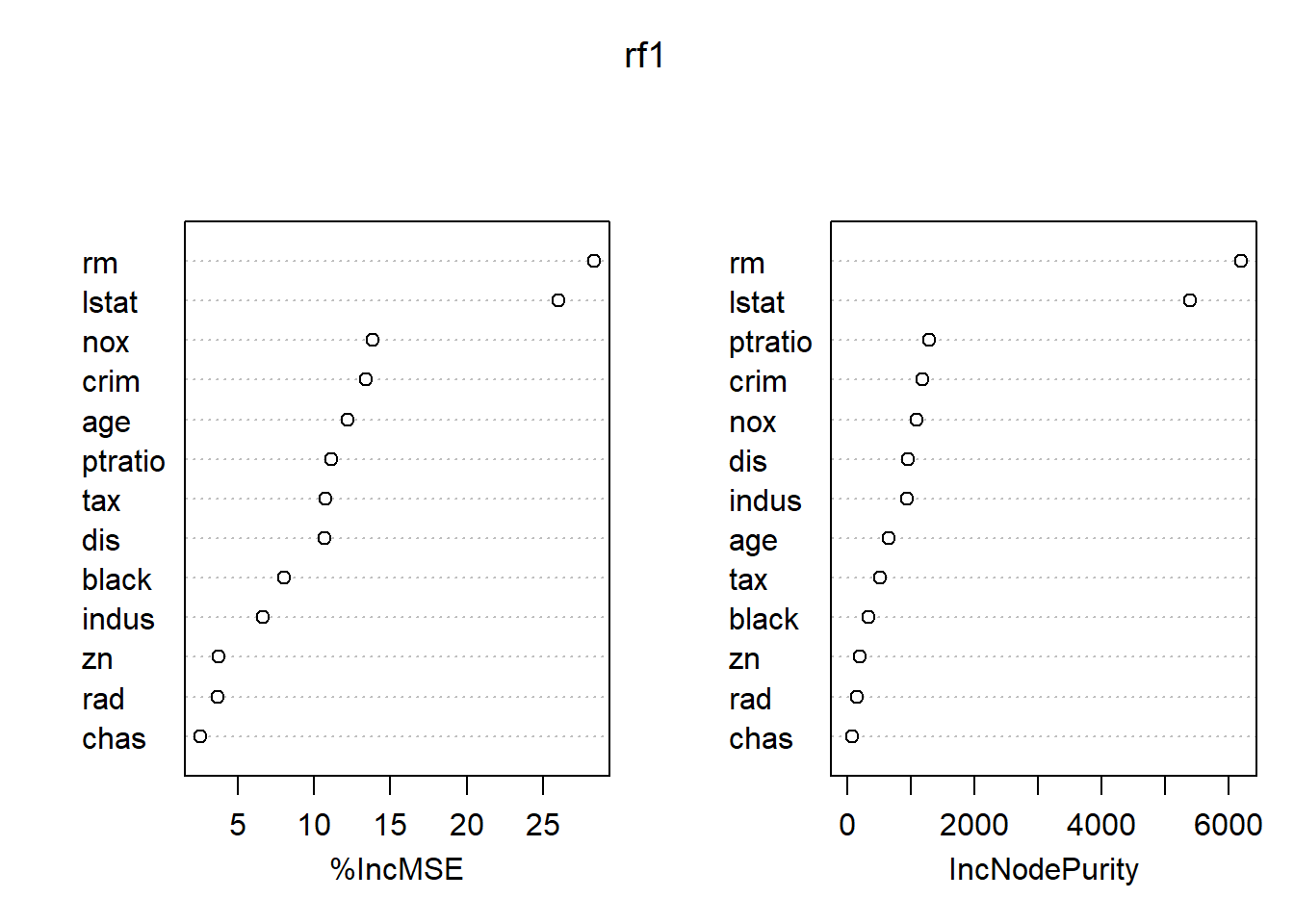

Apply random forests to the full data set:

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = d, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 6

##

## Mean of squared residuals: 0.1782609

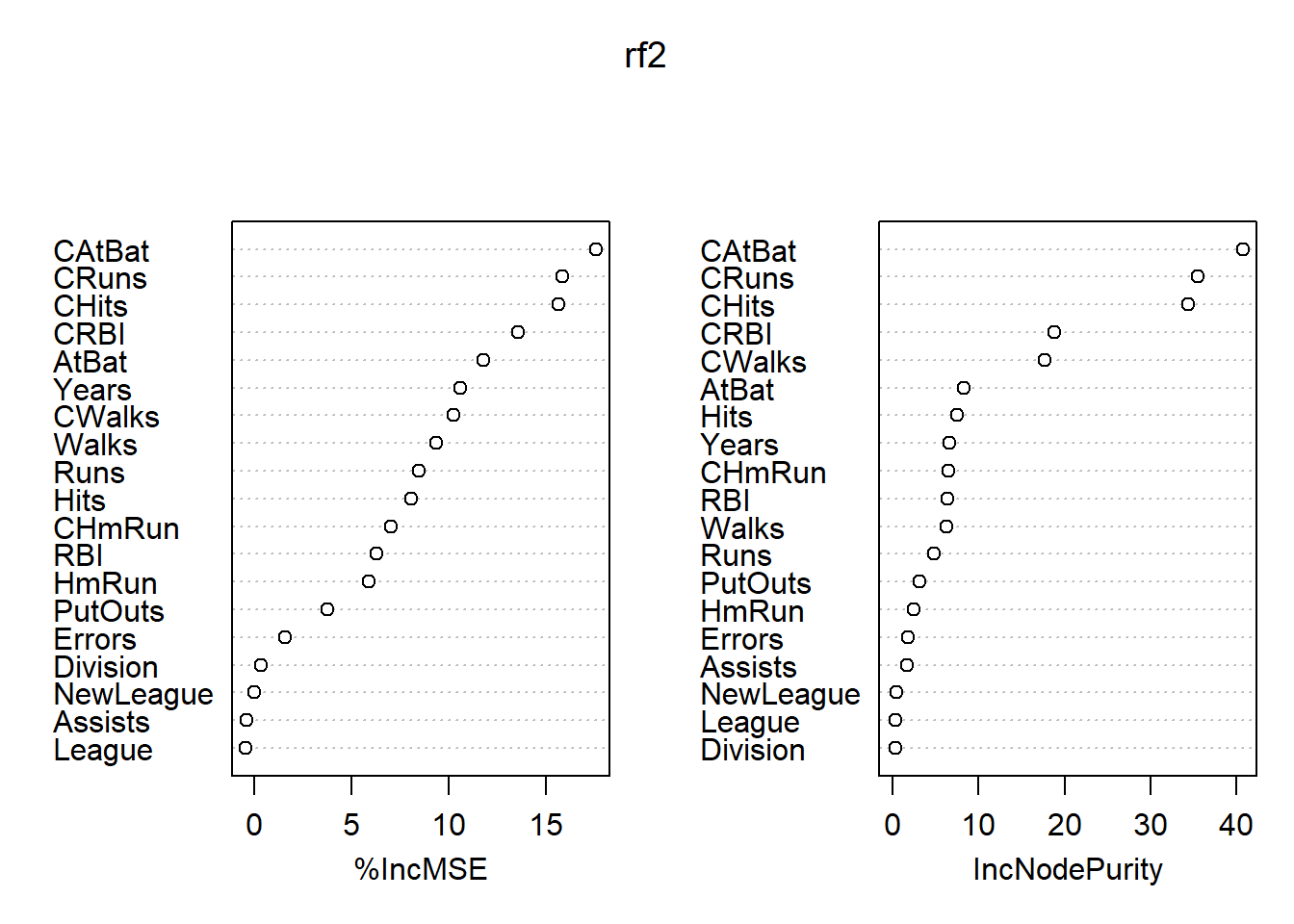

## % Var explained: 77.37各变量的重要度数值及其图形:

## %IncMSE IncNodePurity

## AtBat 11.81948475 8.2308776

## Hits 8.09787840 7.5075741

## HmRun 5.91570003 2.4320892

## Runs 8.48169025 4.8333805

## RBI 6.29673815 6.3026314

## Walks 9.36982692 6.2217510

## Years 10.61587730 6.5702146

## CAtBat 17.58354435 40.7827117

## CHits 15.64594781 34.4452277

## CHmRun 7.04523612 6.4528435

## CRuns 15.85901741 35.5844665

## CRBI 13.56732483 18.8060878

## CWalks 10.25381701 17.6924487

## League -0.44093551 0.3050351

## Division 0.32697628 0.2715000

## PutOuts 3.74477178 3.0747050

## Assists -0.43394356 1.6667284

## Errors 1.58044791 1.7246783

## NewLeague -0.01057482 0.3535943

The most important predictors are CAtBat, CRuns, CHits, CWalks, CRBI, etc.

16.2.2.2 Random Forest Demonstration with the Heart Data

Apply random forests to the training set:

set.seed(1)

train_id <- sample(nrow(Heart), size=round(0.5*nrow(Heart)))

train <- rep(FALSE, nrow(Heart))

train[train_id] <- TRUE

test <- (!train)

test.y <- Heart[["AHD"]][test]

rf1 <- randomForest(AHD ~ ., data=Heart, subset=train, importance=TRUE)

rf1

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 22.97%

## Confusion matrix:

## No Yes class.error

## No 71 12 0.1445783

## Yes 22 43 0.3384615

Here mtry takes its default value, which corresponds to random forests.

Predict on the test set:

## test.y

## pred3 No Yes

## No 70 17

## Yes 7 55

## [1] 0.1610738

The test error rate is about 16%.

Apply random forests to the full data set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 17.17%

## Confusion matrix:

## No Yes class.error

## No 137 23 0.1437500

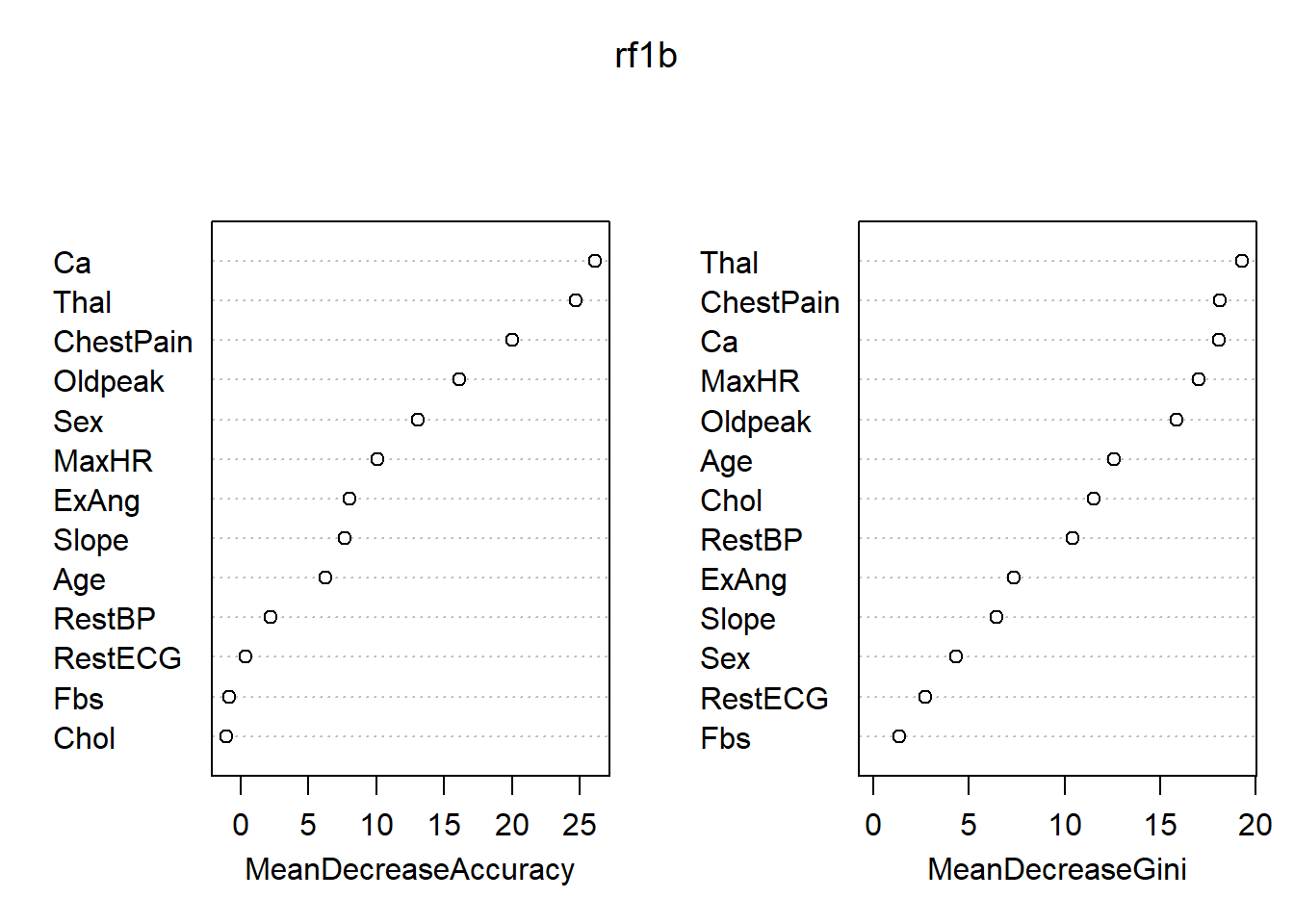

## Yes 28 109 0.2043796各变量的重要度数值及其图形:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## Age 5.0451528 4.1243916 6.2544913 12.589891

## Sex 11.8830005 5.7666976 13.0527236 4.348853

## ChestPain 12.9853182 15.9344889 20.0063334 18.140518

## RestBP 2.3860139 0.8161766 2.2560609 10.402834

## Chol 0.4441293 -1.7627423 -1.0295881 11.550493

## Fbs 1.3711348 -2.5626917 -0.8151845 1.329501

## RestECG 0.1510264 0.5408153 0.4091251 2.734730

## MaxHR 9.6540260 4.8456013 10.0807989 17.028765

## ExAng 3.8100638 7.2834823 8.0332494 7.365512

## Oldpeak 9.9336236 12.8188739 16.1137642 15.876015

## Slope 1.9767505 8.3713104 7.6713543 6.421739

## Ca 21.6441820 18.4225083 26.1245597 18.106245

## Thal 19.6662985 16.5929688 24.6866916 19.282387

The most important variables are ChestPain, Thal and Ca.

16.3 Demonstration with the Car Seat Sales Data

Carseats is a data set in the ISLR package. An overview:

## 'data.frame': 400 obs. of 11 variables:

## $ Sales : num 9.5 11.22 10.06 7.4 4.15 ...

## $ CompPrice : num 138 111 113 117 141 124 115 136 132 132 ...

## $ Income : num 73 48 35 100 64 113 105 81 110 113 ...

## $ Advertising: num 11 16 10 4 3 13 0 15 0 0 ...

## $ Population : num 276 260 269 466 340 501 45 425 108 131 ...

## $ Price : num 120 83 80 97 128 72 108 120 124 124 ...

## $ ShelveLoc : Factor w/ 3 levels "Bad","Good","Medium": 1 2 3 3 1 1 3 2 3 3 ...

## $ Age : num 42 65 59 55 38 78 71 67 76 76 ...

## $ Education : num 17 10 12 14 13 16 15 10 10 17 ...

## $ Urban : Factor w/ 2 levels "No","Yes": 2 2 2 2 2 1 2 2 1 1 ...

## $ US : Factor w/ 2 levels "No","Yes": 2 2 2 2 1 2 1 2 1 2 ...

## Sales CompPrice Income Advertising Population Price ShelveLoc Age Education Urban US

## Min. : 0.000 Min. : 77 Min. : 21.00 Min. : 0.000 Min. : 10.0 Min. : 24.0 Bad : 96 Min. :25.00 Min. :10.0 No :118 No :142

## 1st Qu.: 5.390 1st Qu.:115 1st Qu.: 42.75 1st Qu.: 0.000 1st Qu.:139.0 1st Qu.:100.0 Good : 85 1st Qu.:39.75 1st Qu.:12.0 Yes:282 Yes:258

## Median : 7.490 Median :125 Median : 69.00 Median : 5.000 Median :272.0 Median :117.0 Medium:219 Median :54.50 Median :14.0

## Mean : 7.496 Mean :125 Mean : 68.66 Mean : 6.635 Mean :264.8 Mean :115.8 Mean :53.32 Mean :13.9

## 3rd Qu.: 9.320 3rd Qu.:135 3rd Qu.: 91.00 3rd Qu.:12.000 3rd Qu.:398.5 3rd Qu.:131.0 3rd Qu.:66.00 3rd Qu.:16.0

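## Max. :16.270 Max. :175 Max. :120.00 Max. :29.000 Max. :509.0 Max. :191.0 Max. :80.00 Max. :18.0

Split the Sales variable into two groups according to whether it exceeds 8, store the result in the variable High, and use High as the response for classification: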

## [1] 400 12

16.3.1 Classification Trees

16.3.1.1 Classification Tree on the Full Data

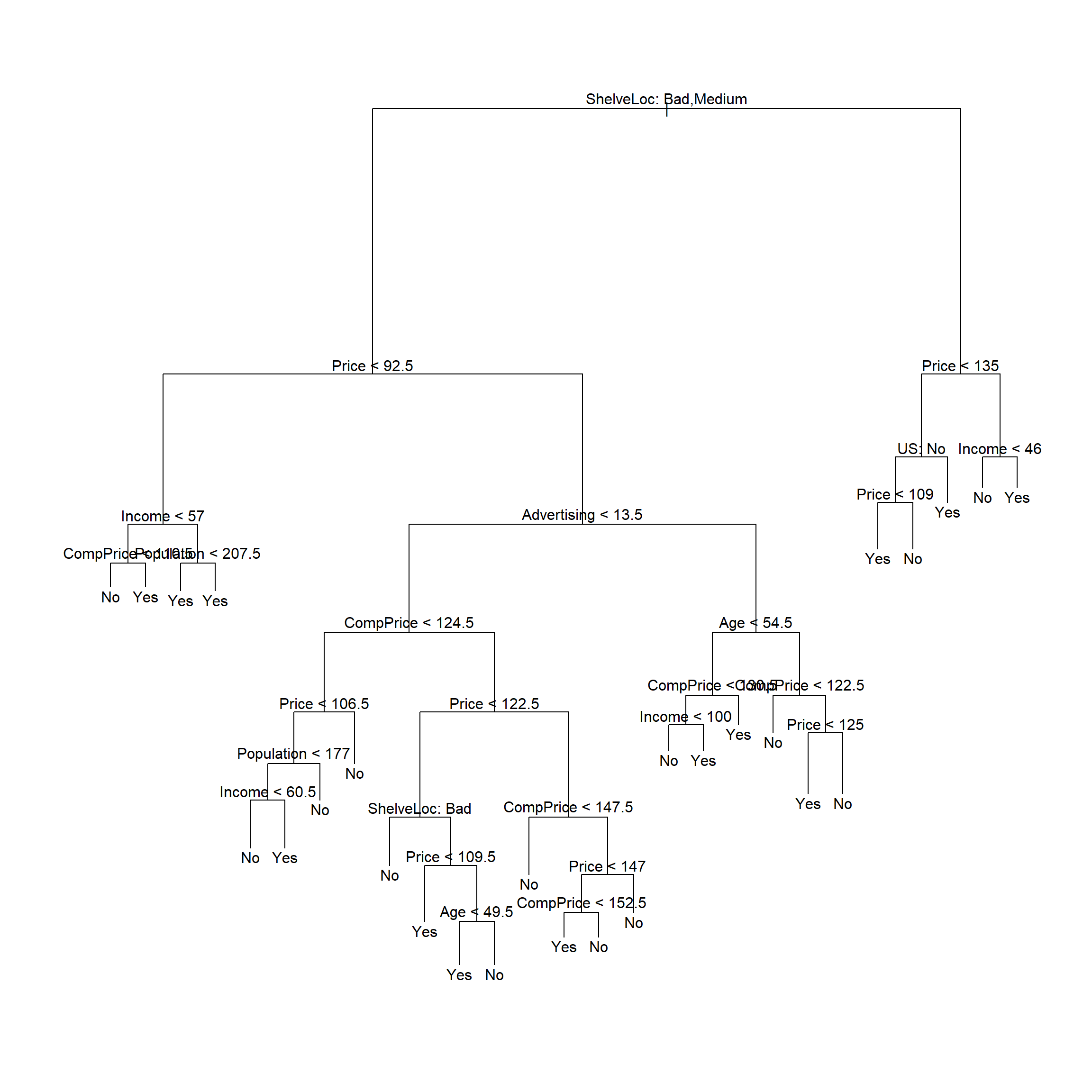

Grow an unpruned classification tree on all the data:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d)

## Variables actually used in tree construction:

## [1] "ShelveLoc" "Price" "Income" "CompPrice" "Population" "Advertising" "Age" "US"

## Number of terminal nodes: 27

## Residual mean deviance: 0.4575 = 170.7 / 373

## Misclassification error rate: 0.09 = 36 / 400

16.3.1.2 Splitting into Training and Test Sets

Randomly split the input data set into halves, one half as the training set and the other as the test set:

d <- na.omit(Carseats)

d$High <- factor(ifelse(d$Sales > 8, 'Yes', 'No'))

set.seed(2)

train_id <- sample(nrow(d), size=round(0.5*nrow(d)))

train <- rep(FALSE, nrow(d))

train[train_id] <- TRUE

test <- (!train)

test.high <- d[["High"]][test]

Grow an unpruned classification tree on the training data:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d, subset = train)

## Variables actually used in tree construction:

## [1] "Price" "Population" "ShelveLoc" "Age" "Education" "CompPrice" "Advertising" "Income" "US"

## Number of terminal nodes: 21

## Residual mean deviance: 0.5543 = 99.22 / 179

## Misclassification error rate: 0.115 = 23 / 200

Use the unpruned tree to predict the test set and compute the misclassification rate:

## test.high

## pred2 No Yes

## No 104 33

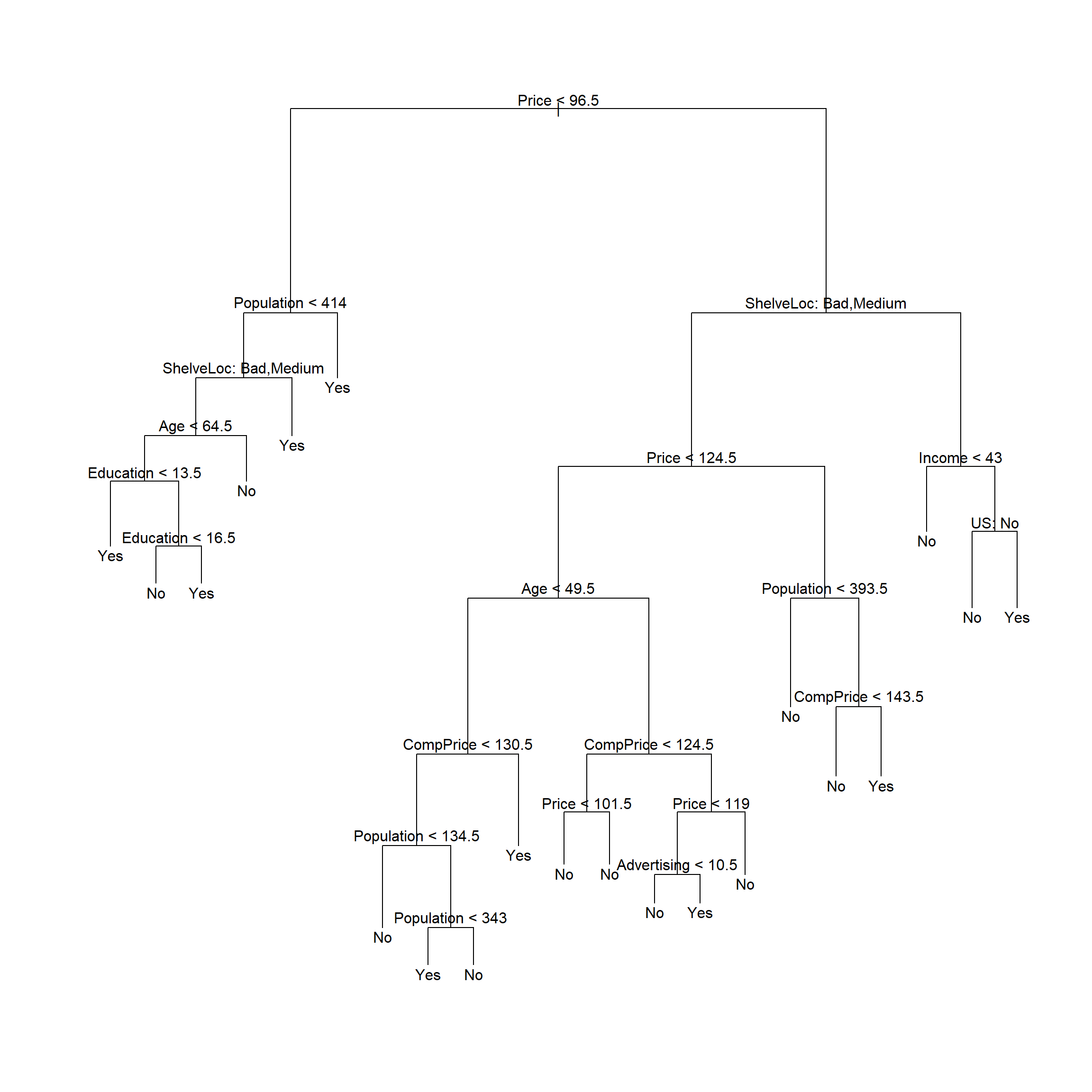

## Yes 13 50## [1] 0.2316.3.1.3 用交叉验证确定训练集的剪枝

## $size

## [1] 21 19 14 9 8 5 3 2 1

##

## $dev

## [1] 74 74 78 77 77 79 75 80 82

##

## $k

## [1] -Inf 0.0 1.0 1.4 2.0 3.0 4.0 9.0 18.0

##

## $method

## [1] "misclass"

##

## attr(,"class")

## [1] "prune" "tree.sequence"

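The best tree size chosen automatically by cross-validation is 21 (tied with 19 at 74 misclassifications), i.e., the size of the unpruned tree.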

Prune:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d, subset = train)

## Variables actually used in tree construction:

## [1] "Price" "Population" "ShelveLoc" "Age" "Education" "CompPrice" "Advertising" "Income" "US"

## Number of terminal nodes: 21

## Residual mean deviance: 0.5543 = 99.22 / 179

## Misclassification error rate: 0.115 = 23 / 200

Use the pruned tree to predict the test set and compute the misclassification rate:

## test.high

## pred3 No Yes

## No 104 32

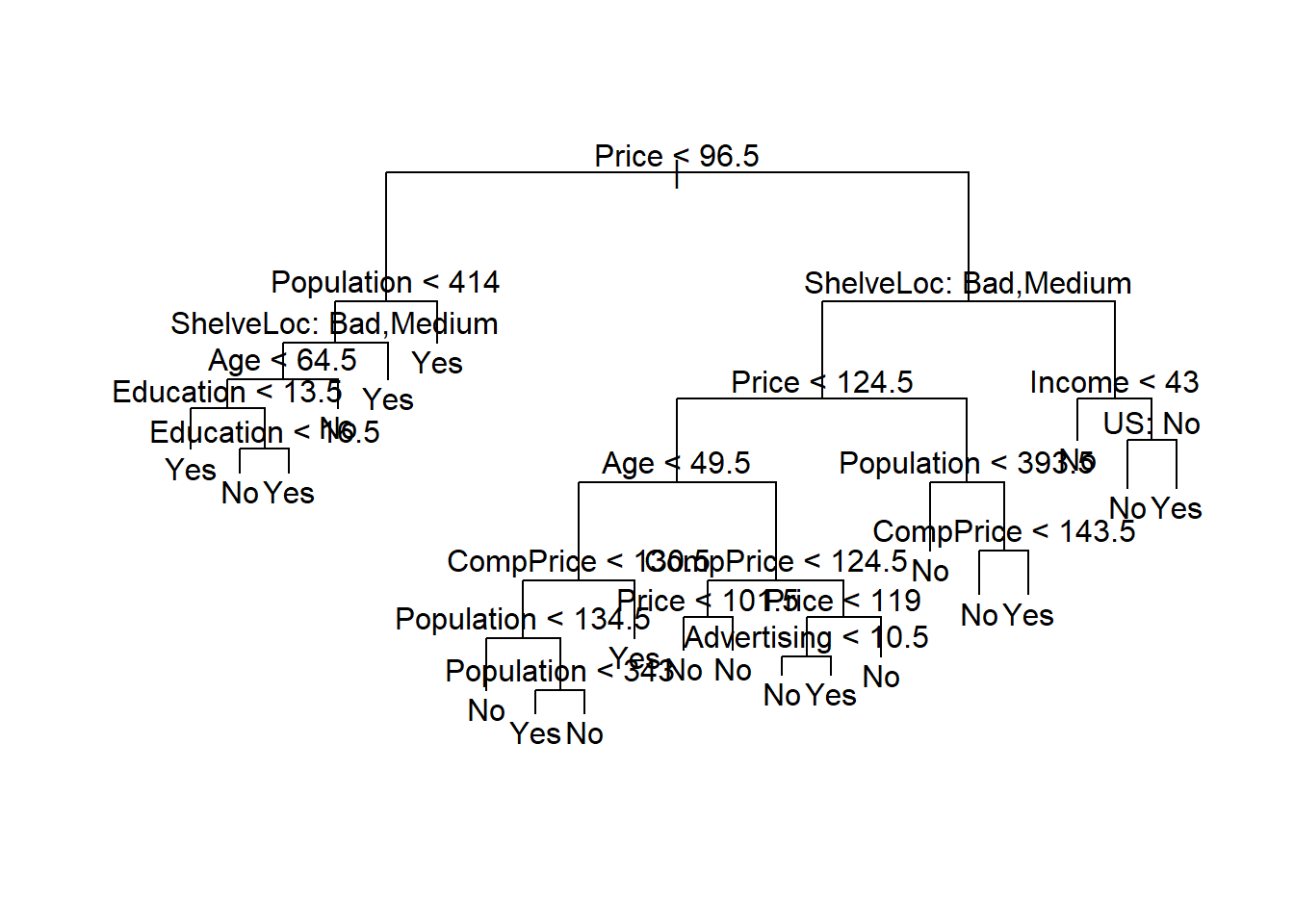

## Yes 13 51## [1] 0.22516.3.2 随机森林

Apply random forests to the training set:

##

## Call:

## randomForest(formula = High ~ . - Sales, data = d, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 27%

## Confusion matrix:

## No Yes class.error

## No 100 19 0.1596639

## Yes 35 46 0.4320988

Here mtry takes its default value, which corresponds to random forests.

Predict on the test set:

## test.high

## pred4 No Yes

## No 108 23

## Yes 9 60

## [1] 0.16

Note that the error rate depends on how the data are split into training and test sets; a different split may give a quite different error rate.

Apply random forests to the full data set:

##

## Call:

## randomForest(formula = High ~ . - Sales, data = d, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 19%

## Confusion matrix:

## No Yes class.error

## No 209 27 0.1144068

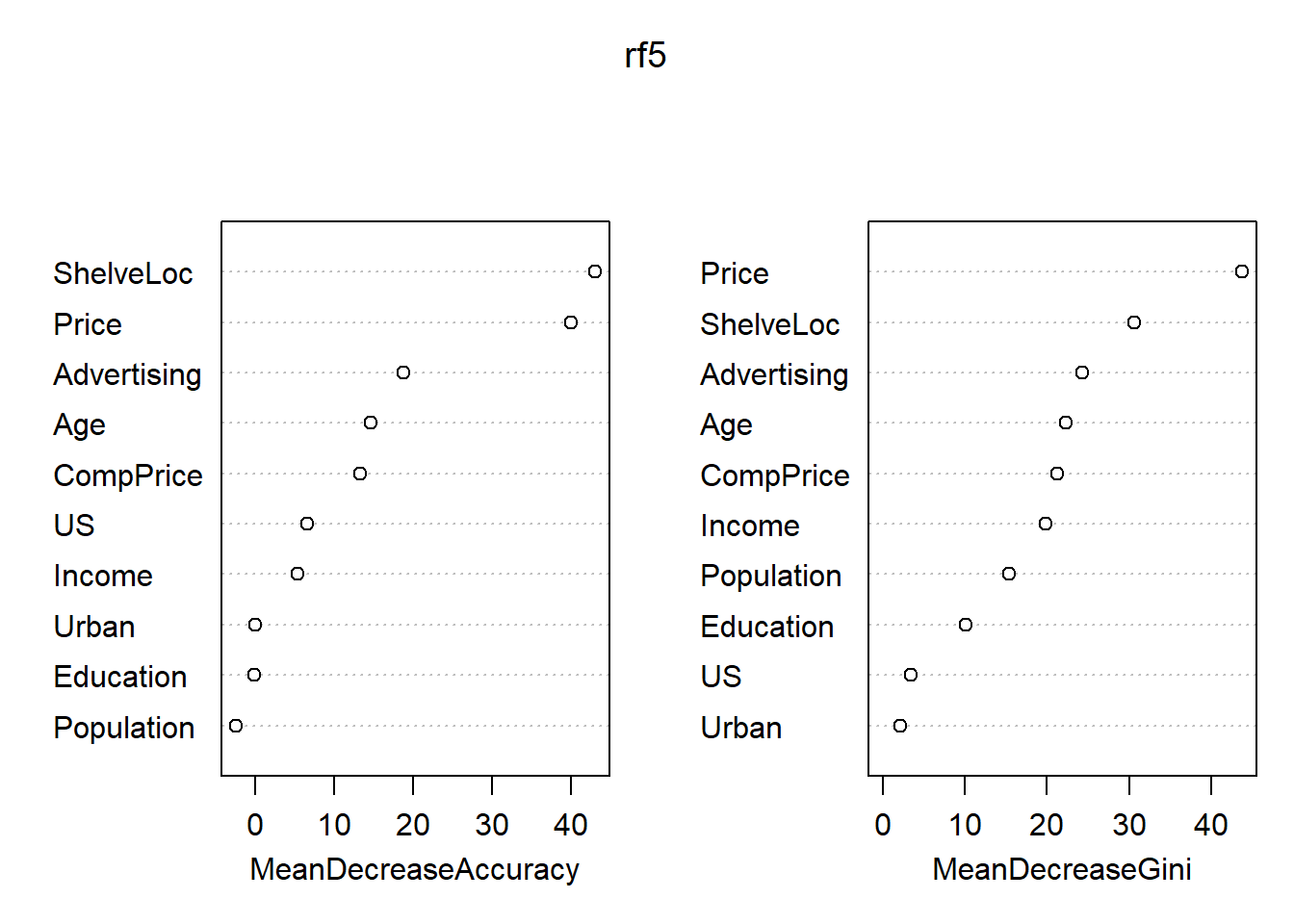

## Yes 49 115 0.2987805各变量的重要度数值及其图形:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## CompPrice 11.033758100 7.7402932 13.33895983 21.229763

## Income 3.866551200 3.5223698 5.31053942 19.861721

## Advertising 10.907742775 16.4431361 18.85477287 24.265021

## Population -1.036771688 -2.6857178 -2.48427062 15.324194

## Price 33.443509343 27.9971005 40.05127916 43.779513

## ShelveLoc 34.255084236 34.3178518 43.13153318 30.636062

## Age 9.704435069 11.0226748 14.66681661 22.262051

## Education -0.009866974 -0.1131696 -0.18869317 10.058102

## Urban 0.687787338 -0.9368078 -0.06090853 2.094715

## US 2.929166411 5.1334006 6.60885378 3.436709

The important predictors are Price and ShelveLoc, followed by Advertising, Age, CompPrice, Income, etc.

16.4 Boosting

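Boosting is another method applicable to many regression and classification problems. The idea is to fit the response with a fairly simple model and compute the residuals, then fit another simple model with the residuals as the response and add the two regression functions as a weighted sum. With the new residuals as the response, the process is repeated, updating the regression function step by step, so that the final regression function is a weighted sum of many regression functions.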

The weight is usually small, in order to slow down learning. In statistical learning, slower learning helps reduce overfitting.

The boosting algorithm:

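(1) For the training set, set \(r_i = y_i\) and let the initial regression function be \(\hat f(\cdot)=0\).

(2) For \(b=1,2,\dots,B\), repeat:

(a) With the training predictors as predictors and \(r\) as the response, fit a simple regression tree with only \(d\) splits; call it \(\hat f_b\);

(b) Update the regression function by adding a shrunken version of this tree: \[\begin{aligned} \hat f(x) \leftarrow \hat f(x) + \lambda \hat f_b(x); \end{aligned}\]

(c) Update the residuals: \[\begin{aligned} r_i \leftarrow r_i - \lambda \hat f_b(x_i). \end{aligned}\]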

(3) The boosted regression function is \[\begin{aligned} \hat f(x) = \sum_{b=1}^B \lambda \hat f_b(x) . \end{aligned}\]
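A minimal base-R sketch of this algorithm with \(d = 1\) (stumps), so each \(\hat f_b\) uses a single predictor. The helper names and the simulated data are hypothetical; production implementations such as gbm add many refinements not shown here.

```r
# Best single split (d = 1) over all columns of X for response r, by minimal RSS;
# cumulative sums over the sorted values give the RSS of every cut at once
fit_stump <- function(X, r) {
  n <- length(r)
  best <- list(rss = Inf, j = NA, s = NA)
  for (j in seq_len(ncol(X))) {
    o <- order(X[, j]); xs <- X[o, j]; rs <- r[o]
    k <- which(diff(xs) > 0)          # left region = first k sorted observations
    if (length(k) == 0) next          # constant column: no valid split
    cs <- cumsum(rs); cs2 <- cumsum(rs^2)
    rss <- (cs2[k] - cs[k]^2 / k) + (cs2[n] - cs2[k] - (cs[n] - cs[k])^2 / (n - k))
    i <- which.min(rss)
    if (rss[i] < best$rss) best <- list(rss = rss[i], j = j, s = xs[k[i] + 1])
  }
  if (is.na(best$j)) return(list(j = 1, s = -Inf, left = 0, right = mean(r)))
  in_left <- X[, best$j] < best$s
  list(j = best$j, s = best$s, left = mean(r[in_left]), right = mean(r[!in_left]))
}
predict_stump <- function(fb, X) ifelse(X[, fb$j] < fb$s, fb$left, fb$right)

boost <- function(X, y, B = 500, lambda = 0.05) {
  r <- y                                              # step (1): r_i = y_i, f = 0
  trees <- vector("list", B)
  for (b in seq_len(B)) {
    trees[[b]] <- fit_stump(X, r)                     # (2a) fit f_b to (X, r)
    r <- r - lambda * predict_stump(trees[[b]], X)    # (2c) r_i <- r_i - lambda f_b(x_i)
  }
  list(trees = trees, lambda = lambda, resid = r)
}
predict_boost <- function(model, X) {                 # step (3): sum_b lambda f_b(x)
  f <- numeric(nrow(X))
  for (fb in model$trees) f <- f + model$lambda * predict_stump(fb, X)
  f
}

set.seed(1)
n <- 300
X <- cbind(x1 = runif(n), x2 = runif(n))
y <- ifelse(X[, "x1"] > 0.5, 2, 0) + X[, "x2"] + rnorm(n, sd = 0.2)
fit <- boost(X, y, B = 500, lambda = 0.05)
train_mse <- mean((y - predict_boost(fit, X))^2)
print(c(train_mse = train_mse, var_y = var(y)))
```

Step (2b) is implicit: the fitted function is never stored as a whole, only the list of stumps, which predict_boost() sums with weight \(\lambda\).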

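Next, how many regression functions enter the weighted sum, i.e., the choice of \(B\). If \(B\) is too large, boosting can overfit, although this overfitting tends to set in slowly. \(B\) can be chosen by cross-validation.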

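The shrinkage parameter \(\lambda\) is a small positive number that controls the learning rate; values such as 0.01 or 0.001 are common, depending on the problem. A very small \(\lambda\) requires a very large \(B\).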
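The trade-off between \(\lambda\) and \(B\) can be seen with the simplest possible base learner, a constant fitted to the current residuals (a deliberately trivial, hypothetical choice). Each round then shrinks the residual mean by the factor \(1 - \lambda\), so reducing it to 1% of its starting value takes \(\lceil \log(0.01) / \log(1 - \lambda) \rceil\) rounds. The names steps_needed() and boost_constant() are hypothetical.

```r
# Rounds needed to shrink the residual mean to 1% of its initial value
steps_needed <- function(lambda) ceiling(log(0.01) / log(1 - lambda))
print(c(lambda_0.01 = steps_needed(0.01), lambda_0.001 = steps_needed(0.001)))

# Check by running the boosting updates with a constant base learner f_b = mean(r)
boost_constant <- function(y, lambda, B) {
  r <- y                                              # r_i = y_i, f = 0
  for (b in seq_len(B)) r <- r - lambda * mean(r)     # r <- r - lambda * f_b
  r
}
y <- c(3, 5, 7)
ratio <- mean(boost_constant(y, lambda = 0.01, B = steps_needed(0.01))) / mean(y)
print(ratio)
```

With \(\lambda = 0.01\) this needs 459 rounds and with \(\lambda = 0.001\) it needs 4603: a ten times smaller \(\lambda\) needs roughly ten times more rounds.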

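The parameter controlling the complexity of each regression function is, for trees, the tree size (the number of splits \(d\)). A tree with a single split often works well. With single splits the resulting model is additive, with no interaction terms, because each added regression function depends on only one predictor. When \(d>1\), interaction terms are introduced.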

16.5 A Unified Interface for Statistical Learning Algorithms

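Different statistical learning algorithms and the functions implementing them use different syntax. However, these methods usually share a common workflow: the data are split into a training set and a test set, cross-validation on the training set is used to find optimal values of the tuning parameters, and predictions on the test set then give an objective evaluation of the model.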

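The R packages caret and mlr integrate existing statistical learning functions, providing a unified interface to common functionality, including cross-validation.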

16.6 Demonstration with the Boston Suburban Housing Data

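The Boston data set in the MASS package contains data on housing values in the suburbs of Boston. Build a regression model with the median home value medv as the response. First remove missing values and store the result in the data set d: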

Overview of the data set:

## 'data.frame': 506 obs. of 14 variables:

## $ crim : num 0.00632 0.02731 0.02729 0.03237 0.06905 ...

## $ zn : num 18 0 0 0 0 0 12.5 12.5 12.5 12.5 ...

## $ indus : num 2.31 7.07 7.07 2.18 2.18 2.18 7.87 7.87 7.87 7.87 ...

## $ chas : int 0 0 0 0 0 0 0 0 0 0 ...

## $ nox : num 0.538 0.469 0.469 0.458 0.458 0.458 0.524 0.524 0.524 0.524 ...

## $ rm : num 6.58 6.42 7.18 7 7.15 ...

## $ age : num 65.2 78.9 61.1 45.8 54.2 58.7 66.6 96.1 100 85.9 ...

## $ dis : num 4.09 4.97 4.97 6.06 6.06 ...

## $ rad : int 1 2 2 3 3 3 5 5 5 5 ...

## $ tax : num 296 242 242 222 222 222 311 311 311 311 ...

## $ ptratio: num 15.3 17.8 17.8 18.7 18.7 18.7 15.2 15.2 15.2 15.2 ...

## $ black : num 397 397 393 395 397 ...

## $ lstat : num 4.98 9.14 4.03 2.94 5.33 ...

## $ medv : num 24 21.6 34.7 33.4 36.2 28.7 22.9 27.1 16.5 18.9 ...

## crim zn indus chas nox rm age dis rad tax ptratio black lstat medv

## Min. : 0.00632 Min. : 0.00 Min. : 0.46 Min. :0.00000 Min. :0.3850 Min. :3.561 Min. : 2.90 Min. : 1.130 Min. : 1.000 Min. :187.0 Min. :12.60 Min. : 0.32 Min. : 1.73 Min. : 5.00

## 1st Qu.: 0.08205 1st Qu.: 0.00 1st Qu.: 5.19 1st Qu.:0.00000 1st Qu.:0.4490 1st Qu.:5.886 1st Qu.: 45.02 1st Qu.: 2.100 1st Qu.: 4.000 1st Qu.:279.0 1st Qu.:17.40 1st Qu.:375.38 1st Qu.: 6.95 1st Qu.:17.02

## Median : 0.25651 Median : 0.00 Median : 9.69 Median :0.00000 Median :0.5380 Median :6.208 Median : 77.50 Median : 3.207 Median : 5.000 Median :330.0 Median :19.05 Median :391.44 Median :11.36 Median :21.20

## Mean : 3.61352 Mean : 11.36 Mean :11.14 Mean :0.06917 Mean :0.5547 Mean :6.285 Mean : 68.57 Mean : 3.795 Mean : 9.549 Mean :408.2 Mean :18.46 Mean :356.67 Mean :12.65 Mean :22.53

## 3rd Qu.: 3.67708 3rd Qu.: 12.50 3rd Qu.:18.10 3rd Qu.:0.00000 3rd Qu.:0.6240 3rd Qu.:6.623 3rd Qu.: 94.08 3rd Qu.: 5.188 3rd Qu.:24.000 3rd Qu.:666.0 3rd Qu.:20.20 3rd Qu.:396.23 3rd Qu.:16.95 3rd Qu.:25.00

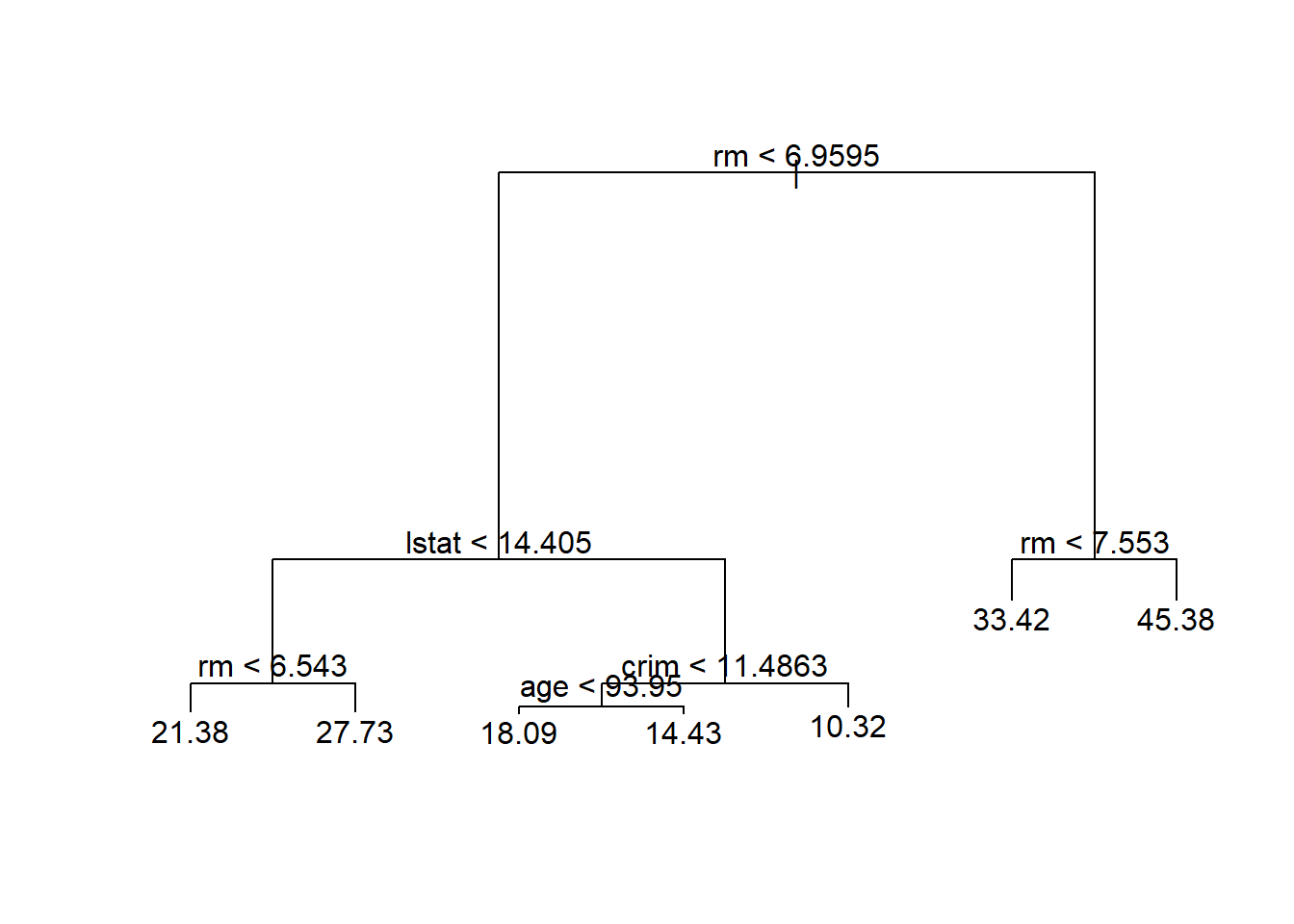

## Max. :88.97620 Max. :100.00 Max. :27.74 Max. :1.00000 Max. :0.8710 Max. :8.780 Max. :100.00 Max. :12.127 Max. :24.000 Max. :711.0 Max. :22.00 Max. :396.90 Max. :37.97 Max. :50.0016.6.1 回归树

16.6.1.1 Splitting into Training and Test Sets

set.seed(1)

d <- na.omit(Boston)

train_id <- sample(nrow(d), size=round(0.5*nrow(d)))

train <- rep(FALSE, nrow(d))

train[train_id] <- TRUE

test <- (!train)

Grow an unpruned tree on the training set:

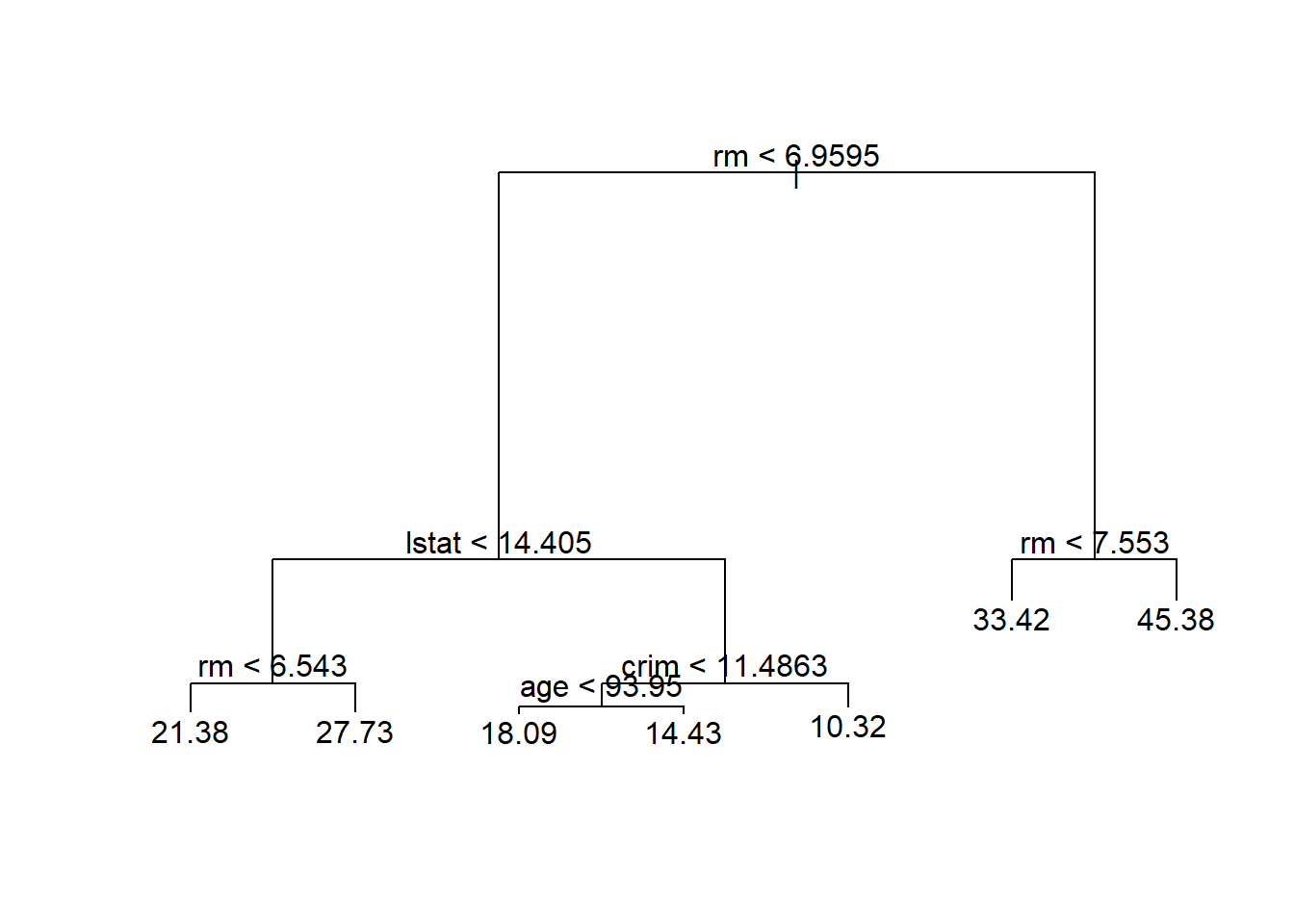

##

## Regression tree:

## tree(formula = medv ~ ., data = d, subset = train)

## Variables actually used in tree construction:

## [1] "rm" "lstat" "crim" "age"

## Number of terminal nodes: 7

## Residual mean deviance: 10.38 = 2555 / 246

## Distribution of residuals:

## Min. 1st Qu. Median Mean 3rd Qu. Max.

## -10.1800 -1.7770 -0.1775 0.0000 1.9230 16.5800

Use the unpruned tree to predict the test set and compute the mean squared error:

## [1] 35.28688

16.6.2 Bagging

Use the randomForest package. When the mtry argument is set to the number of predictors, bagging is performed.

Fit on the training set:

set.seed(1)

bag1 <- randomForest(medv ~ ., data=d,

subset=train, mtry=ncol(d)-1, importance=TRUE)

bag1

##

## Call:

## randomForest(formula = medv ~ ., data = d, mtry = ncol(d) - 1, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 13

##

## Mean of squared residuals: 11.24028

## % Var explained: 85.38

Compute the test MSE of bagging:

## [1] 23.2778

This is a clear improvement over the single tree (35.28688).

16.6.3 Random Forests

Use the randomForest package. When mtry takes its default value, the random forest method is used.

Fit on the training set:

##

## Call:

## randomForest(formula = medv ~ ., data = d, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 4

##

## Mean of squared residuals: 10.65524

## % Var explained: 86.14

Compute the test MSE of random forests:

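## [1] 18.73938

This is a clear improvement over the single tree and better than bagging. However, if the data are re-split into training and test sets, the results may be quite different.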

Variable importance values and plot:

## %IncMSE IncNodePurity

## crim 13.395947 1187.68244

## zn 3.764558 205.19223

## indus 6.634904 941.08254

## chas 2.556251 73.20277

## nox 13.848778 1092.57116

## rm 28.310083 6188.44612

## age 12.175066 659.38628

## dis 10.688898 958.36302

## rad 3.702112 151.38660

## tax 10.732676 516.77833

## ptratio 11.114057 1296.12067

## black 8.047270 336.29628

## lstat 25.983897 5396.58419

16.6.4 Boosting

16.6.4.1 Using the gbm Package

Here the gbm package is used.

Fit on the training set:

set.seed(1)

bst1 <- gbm(

medv ~ .,

data=d[train,],

distribution='gaussian',

n.trees=5000,

interaction.depth=4)

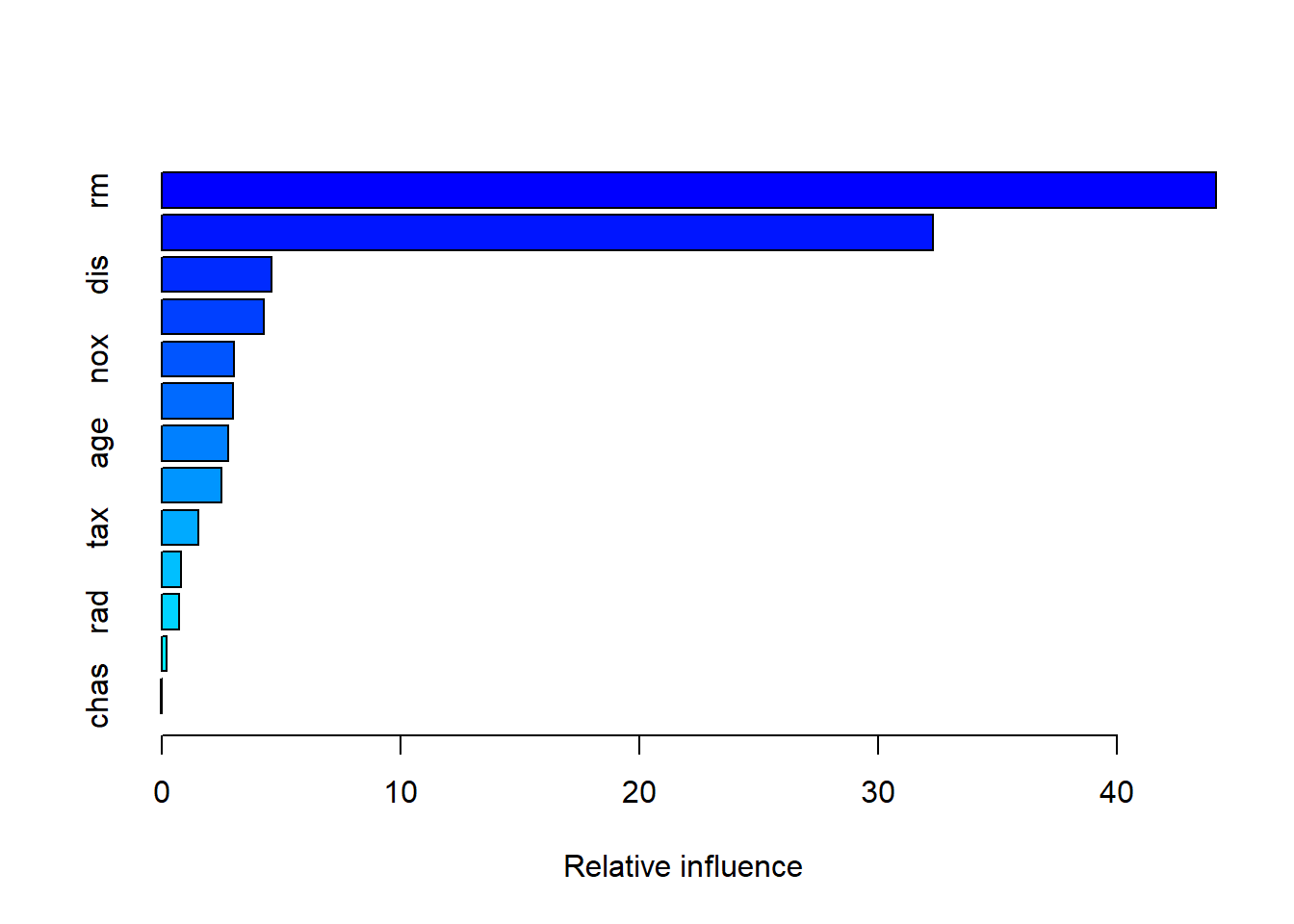

summary(bst1)

## var rel.inf

## rm rm 44.15951160

## lstat lstat 32.29267660

## dis dis 4.61448193

## crim crim 4.26335913

## nox nox 3.04501491

## black black 3.00055105

## age age 2.78125346

## ptratio ptratio 2.49234381

## tax tax 1.53209088

## indus indus 0.83074496

## rad rad 0.74565664

## zn zn 0.21988113

## chas chas 0.02243389

rm and lstat are the most important variables.

Predict on the test set and compute the mean squared error:

## [1] 18.5659

This is close to the random forest result.

If the learning rate is increased (shrinkage=0.2):

bst2 <- gbm(

medv ~ .,

data=d[train,],

distribution='gaussian',

n.trees=5000,

interaction.depth=4,

shrinkage=0.2)

yhat <- predict(

bst2,

newdata=d[test,],

n.trees=5000)

mean( (yhat - d[test, 'medv'])^2 )

## [1] 20.45657

The result is about the same. However, if the data are re-split into training and test sets, the results may be quite different.

16.6.4.2 Using the xgboost Package

Compute with xgboost. Training:

library(xgboost)

dx.train <- list(

data=as.matrix(d[train, -ncol(d)]),

label=d[["medv"]][train])

dx.test <- list(

data=as.matrix(d[test, -ncol(d)]),

label=d[["medv"]][test])

xg1 <- xgboost(

data = dx.train$data,

label = dx.train$label,

booster = "gbtree", # base learner

objective ="reg:squarederror", # objective function

max_depth = 2,

nrounds = 20) # number of boosting iterations

## [1] train-rmse:16.550534

## [2] train-rmse:12.001012

## [3] train-rmse:8.828046

## [4] train-rmse:6.666827

## [5] train-rmse:5.179067

## [6] train-rmse:4.194855

## [7] train-rmse:3.574384

## [8] train-rmse:3.186898

## [9] train-rmse:2.915877

## [10] train-rmse:2.750005

## [11] train-rmse:2.631165

## [12] train-rmse:2.553116

## [13] train-rmse:2.484000

## [14] train-rmse:2.441754

## [15] train-rmse:2.374598

## [16] train-rmse:2.297728

## [17] train-rmse:2.239265

## [18] train-rmse:2.187598

## [19] train-rmse:2.161275

## [20] train-rmse:2.134788

Predict:

## [1] 20.28769

Slightly worse than the gbm result.

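Note that xgboost requires the predictors as a matrix and does not accept data frames. All predictors in the Boston data are numeric. If the data contain factors, the Matrix::sparse.model.matrix() function should be used to convert the data frame into a numeric matrix, in which the factors are converted to dummy variables.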
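As a base-R illustration of this conversion, stats::model.matrix() produces the same kind of dummy coding as a dense matrix (for large data, the sparse version from the Matrix package is preferable). The tiny data frame is hypothetical, and xgboost itself is not called here; the resulting matrix is what would be passed as its data argument.

```r
# Hypothetical data frame with one numeric and one factor predictor
df <- data.frame(
  Price = c(120, 83, 97),
  ShelveLoc = factor(c("Bad", "Good", "Medium"))
)

# Expand the factor into dummy columns (treatment coding, first level as baseline),
# then drop the intercept column so that only predictors remain
X <- model.matrix(~ ., data = df)[, -1]
print(X)
```

The factor ShelveLoc becomes the two 0/1 columns ShelveLocGood and ShelveLocMedium, with Bad as the baseline level.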

16.6.4.3 Using the lightgbm Package

Compute with lightgbm. Training:

## Loading required package: R6

##

## Attaching package: 'lightgbm'

## The following objects are masked from 'package:xgboost':

##

## getinfo, setinfo, slice

dl.train <- list(

data=as.matrix(d[train, -ncol(d)]),

label=d[["medv"]][train])

dl.test <- list(

data=as.matrix(d[test, -ncol(d)]),

label=d[["medv"]][test])

lgbm1 <- lightgbm(

data = dl.train$data,

label = dl.train$label,

obj ="regression", # 目标函数

max_depth = 2, # 树的深度

nrounds = 50) # 迭代次数## Warning in (function (params = list(), data, nrounds = 100L, valids = list(), : lgb.train: Found the following passed through '...': max_depth. These will be used, but in future releases of lightgbm, this warning will become an error. Add these to 'params' instead. See ?lgb.train for documentation on how to call this function.## [LightGBM] [Warning] Auto-choosing col-wise multi-threading, the overhead of testing was 0.000872 seconds.

## You can set `force_col_wise=true` to remove the overhead.

## [LightGBM] [Info] Total Bins 658

## [LightGBM] [Info] Number of data points in the train set: 253, number of used features: 12

## [LightGBM] [Info] Start training from score 21.786561

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[1]: train's l2:66.2431"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[2]: train's l2:57.636"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[3]: train's l2:50.5392"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[4]: train's l2:44.7832"

## [1] "[5]: train's l2:39.0265"

## [1] "[6]: train's l2:34.3006"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[7]: train's l2:30.6794"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[8]: train's l2:27.8866"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[9]: train's l2:25.2917"

## [1] "[10]: train's l2:22.7395"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[11]: train's l2:20.8562"

## [1] "[12]: train's l2:18.9931"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[13]: train's l2:17.6703"

## [1] "[14]: train's l2:16.2959"

## [1] "[15]: train's l2:15.1774"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[16]: train's l2:14.3231"

## [1] "[17]: train's l2:13.453"

## [1] "[18]: train's l2:12.7437"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[19]: train's l2:12.1731"

## [1] "[20]: train's l2:11.6418"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[21]: train's l2:11.2383"

## [1] "[22]: train's l2:10.7768"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[23]: train's l2:10.4449"

## [1] "[24]: train's l2:10.0823"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[25]: train's l2:9.84566"

## [1] "[26]: train's l2:9.56599"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[27]: train's l2:9.38185"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[28]: train's l2:9.21302"

## [1] "[29]: train's l2:8.96784"

## [1] "[30]: train's l2:8.76156"

## [1] "[31]: train's l2:8.56684"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[32]: train's l2:8.45506"

## [1] "[33]: train's l2:8.30198"

## [1] "[34]: train's l2:8.15626"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[35]: train's l2:8.0615"

## [1] "[36]: train's l2:7.91956"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[37]: train's l2:7.8353"

## [1] "[38]: train's l2:7.71917"

## [1] "[39]: train's l2:7.58138"

## [1] "[40]: train's l2:7.48035"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[41]: train's l2:7.40417"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[42]: train's l2:7.35067"

## [1] "[43]: train's l2:7.25805"

## [1] "[44]: train's l2:7.14817"

## [1] "[45]: train's l2:7.07001"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[46]: train's l2:7.0208"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[47]: train's l2:6.97039"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[48]: train's l2:6.90809"

## [1] "[49]: train's l2:6.84257"

## [LightGBM] [Warning] No further splits with positive gain, best gain: -inf

## [1] "[50]: train's l2:6.80303"

Prediction:

## [1] 24.12621

The result is poor: the test error is relatively large. The parameters may need tuning.
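The two training logs above are on different scales. xgboost prints train-rmse, while lightgbm prints l2, which is the mean squared error. The short calculation below puts the final training losses and the test MSEs reported in this section on a common RMSE scale. All numbers are copied from the outputs above; only the square roots are new.

```r
# Final training losses from the logs above:
# xgboost reports RMSE, lightgbm reports l2 (= MSE), so take its square root.
train_rmse <- c(xgboost = 2.134788, lightgbm = sqrt(6.80303))

# Test-set MSEs printed in this section.
test_mse <- c(gbm = 18.5659, gbm_shrinkage_0.2 = 20.45657,
              xgboost = 20.28769, lightgbm = 24.12621)
test_rmse <- sqrt(test_mse)

round(train_rmse, 3)
round(test_rmse, 3)
```

lightgbm still has the largest training error after 50 rounds. One plausible reason, not verified here, is that its default learning rate (0.1) is lower than xgboost's default eta (0.3). That would leave the 50-round model underfitted, so increasing nrounds is one thing to try when tuning.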


lightgbm also has its own requirements on the format of the input data; see its documentation for details.
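The warning printed during training says that max_depth, passed directly to lightgbm(), should go into params instead. Below is a minimal sketch of that form on hypothetical simulated data, not the Boston data. It is wrapped in requireNamespace() so it still runs where lightgbm is not installed. objective, max_depth, num_leaves and learning_rate are LightGBM parameters. The values chosen here, including nrounds = 200, are illustrative assumptions rather than tuned settings.

```r
# Hypothetical simulated regression data.
set.seed(7)
n <- 400
X <- matrix(rnorm(n * 5), ncol = 5)
y <- 2 * X[, 1] - X[, 2]^2 + rnorm(n)
train <- sample(n, n / 2)

mse_lgb <- NA_real_
if (requireNamespace("lightgbm", quietly = TRUE)) {
  fit <- lightgbm::lightgbm(
    data = X[train, ],
    label = y[train],
    params = list(
      objective = "regression",
      max_depth = 2L,       # tree depth, now passed through params
      num_leaves = 4L,      # a depth-2 binary tree has at most 4 leaves
      learning_rate = 0.1
    ),
    nrounds = 200L,         # more rounds than in the example above
    verbose = -1L           # suppress the training log
  )
  yhat <- predict(fit, X[-train, ])
  mse_lgb <- mean((yhat - y[-train])^2)  # test-set MSE
}
mse_lgb
```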

16.7 Appendix: Data

16.7.1 Heart data

|  | Age | Sex | ChestPain | RestBP | Chol | Fbs | RestECG | MaxHR | ExAng | Oldpeak | Slope | Ca | Thal | AHD |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 63 | 1 | typical | 145 | 233 | 1 | 2 | 150 | 0 | 2.3 | 3 | 0 | fixed | No |

| 2 | 67 | 1 | asymptomatic | 160 | 286 | 0 | 2 | 108 | 1 | 1.5 | 2 | 3 | normal | Yes |

| 3 | 67 | 1 | asymptomatic | 120 | 229 | 0 | 2 | 129 | 1 | 2.6 | 2 | 2 | reversable | Yes |

| 4 | 37 | 1 | nonanginal | 130 | 250 | 0 | 0 | 187 | 0 | 3.5 | 3 | 0 | normal | No |

| 5 | 41 | 0 | nontypical | 130 | 204 | 0 | 2 | 172 | 0 | 1.4 | 1 | 0 | normal | No |

| 6 | 56 | 1 | nontypical | 120 | 236 | 0 | 0 | 178 | 0 | 0.8 | 1 | 0 | normal | No |

| 7 | 62 | 0 | asymptomatic | 140 | 268 | 0 | 2 | 160 | 0 | 3.6 | 3 | 2 | normal | Yes |

| 8 | 57 | 0 | asymptomatic | 120 | 354 | 0 | 0 | 163 | 1 | 0.6 | 1 | 0 | normal | No |

| 9 | 63 | 1 | asymptomatic | 130 | 254 | 0 | 2 | 147 | 0 | 1.4 | 2 | 1 | reversable | Yes |

| 10 | 53 | 1 | asymptomatic | 140 | 203 | 1 | 2 | 155 | 1 | 3.1 | 3 | 0 | reversable | Yes |

| 11 | 57 | 1 | asymptomatic | 140 | 192 | 0 | 0 | 148 | 0 | 0.4 | 2 | 0 | fixed | No |

| 12 | 56 | 0 | nontypical | 140 | 294 | 0 | 2 | 153 | 0 | 1.3 | 2 | 0 | normal | No |

| 13 | 56 | 1 | nonanginal | 130 | 256 | 1 | 2 | 142 | 1 | 0.6 | 2 | 1 | fixed | Yes |

| 14 | 44 | 1 | nontypical | 120 | 263 | 0 | 0 | 173 | 0 | 0.0 | 1 | 0 | reversable | No |

| 15 | 52 | 1 | nonanginal | 172 | 199 | 1 | 0 | 162 | 0 | 0.5 | 1 | 0 | reversable | No |

| 16 | 57 | 1 | nonanginal | 150 | 168 | 0 | 0 | 174 | 0 | 1.6 | 1 | 0 | normal | No |

| 17 | 48 | 1 | nontypical | 110 | 229 | 0 | 0 | 168 | 0 | 1.0 | 3 | 0 | reversable | Yes |

| 18 | 54 | 1 | asymptomatic | 140 | 239 | 0 | 0 | 160 | 0 | 1.2 | 1 | 0 | normal | No |

| 19 | 48 | 0 | nonanginal | 130 | 275 | 0 | 0 | 139 | 0 | 0.2 | 1 | 0 | normal | No |

| 20 | 49 | 1 | nontypical | 130 | 266 | 0 | 0 | 171 | 0 | 0.6 | 1 | 0 | normal | No |

| 21 | 64 | 1 | typical | 110 | 211 | 0 | 2 | 144 | 1 | 1.8 | 2 | 0 | normal | No |

| 22 | 58 | 0 | typical | 150 | 283 | 1 | 2 | 162 | 0 | 1.0 | 1 | 0 | normal | No |

| 23 | 58 | 1 | nontypical | 120 | 284 | 0 | 2 | 160 | 0 | 1.8 | 2 | 0 | normal | Yes |

| 24 | 58 | 1 | nonanginal | 132 | 224 | 0 | 2 | 173 | 0 | 3.2 | 1 | 2 | reversable | Yes |

| 25 | 60 | 1 | asymptomatic | 130 | 206 | 0 | 2 | 132 | 1 | 2.4 | 2 | 2 | reversable | Yes |

| 26 | 50 | 0 | nonanginal | 120 | 219 | 0 | 0 | 158 | 0 | 1.6 | 2 | 0 | normal | No |

| 27 | 58 | 0 | nonanginal | 120 | 340 | 0 | 0 | 172 | 0 | 0.0 | 1 | 0 | normal | No |

| 28 | 66 | 0 | typical | 150 | 226 | 0 | 0 | 114 | 0 | 2.6 | 3 | 0 | normal | No |

| 29 | 43 | 1 | asymptomatic | 150 | 247 | 0 | 0 | 171 | 0 | 1.5 | 1 | 0 | normal | No |

| 30 | 40 | 1 | asymptomatic | 110 | 167 | 0 | 2 | 114 | 1 | 2.0 | 2 | 0 | reversable | Yes |

| 31 | 69 | 0 | typical | 140 | 239 | 0 | 0 | 151 | 0 | 1.8 | 1 | 2 | normal | No |

| 32 | 60 | 1 | asymptomatic | 117 | 230 | 1 | 0 | 160 | 1 | 1.4 | 1 | 2 | reversable | Yes |

| 33 | 64 | 1 | nonanginal | 140 | 335 | 0 | 0 | 158 | 0 | 0.0 | 1 | 0 | normal | Yes |

| 34 | 59 | 1 | asymptomatic | 135 | 234 | 0 | 0 | 161 | 0 | 0.5 | 2 | 0 | reversable | No |

| 35 | 44 | 1 | nonanginal | 130 | 233 | 0 | 0 | 179 | 1 | 0.4 | 1 | 0 | normal | No |

| 36 | 42 | 1 | asymptomatic | 140 | 226 | 0 | 0 | 178 | 0 | 0.0 | 1 | 0 | normal | No |

| 37 | 43 | 1 | asymptomatic | 120 | 177 | 0 | 2 | 120 | 1 | 2.5 | 2 | 0 | reversable | Yes |

| 38 | 57 | 1 | asymptomatic | 150 | 276 | 0 | 2 | 112 | 1 | 0.6 | 2 | 1 | fixed | Yes |

| 39 | 55 | 1 | asymptomatic | 132 | 353 | 0 | 0 | 132 | 1 | 1.2 | 2 | 1 | reversable | Yes |

| 40 | 61 | 1 | nonanginal | 150 | 243 | 1 | 0 | 137 | 1 | 1.0 | 2 | 0 | normal | No |

| 41 | 65 | 0 | asymptomatic | 150 | 225 | 0 | 2 | 114 | 0 | 1.0 | 2 | 3 | reversable | Yes |

| 42 | 40 | 1 | typical | 140 | 199 | 0 | 0 | 178 | 1 | 1.4 | 1 | 0 | reversable | No |

| 43 | 71 | 0 | nontypical | 160 | 302 | 0 | 0 | 162 | 0 | 0.4 | 1 | 2 | normal | No |

| 44 | 59 | 1 | nonanginal | 150 | 212 | 1 | 0 | 157 | 0 | 1.6 | 1 | 0 | normal | No |

| 45 | 61 | 0 | asymptomatic | 130 | 330 | 0 | 2 | 169 | 0 | 0.0 | 1 | 0 | normal | Yes |

| 46 | 58 | 1 | nonanginal | 112 | 230 | 0 | 2 | 165 | 0 | 2.5 | 2 | 1 | reversable | Yes |

| 47 | 51 | 1 | nonanginal | 110 | 175 | 0 | 0 | 123 | 0 | 0.6 | 1 | 0 | normal | No |

| 48 | 50 | 1 | asymptomatic | 150 | 243 | 0 | 2 | 128 | 0 | 2.6 | 2 | 0 | reversable | Yes |

| 49 | 65 | 0 | nonanginal | 140 | 417 | 1 | 2 | 157 | 0 | 0.8 | 1 | 1 | normal | No |

| 50 | 53 | 1 | nonanginal | 130 | 197 | 1 | 2 | 152 | 0 | 1.2 | 3 | 0 | normal | No |

| 51 | 41 | 0 | nontypical | 105 | 198 | 0 | 0 | 168 | 0 | 0.0 | 1 | 1 | normal | No |

| 52 | 65 | 1 | asymptomatic | 120 | 177 | 0 | 0 | 140 | 0 | 0.4 | 1 | 0 | reversable | No |

| 53 | 44 | 1 | asymptomatic | 112 | 290 | 0 | 2 | 153 | 0 | 0.0 | 1 | 1 | normal | Yes |

| 54 | 44 | 1 | nontypical | 130 | 219 | 0 | 2 | 188 | 0 | 0.0 | 1 | 0 | normal | No |

| 55 | 60 | 1 | asymptomatic | 130 | 253 | 0 | 0 | 144 | 1 | 1.4 | 1 | 1 | reversable | Yes |

| 56 | 54 | 1 | asymptomatic | 124 | 266 | 0 | 2 | 109 | 1 | 2.2 | 2 | 1 | reversable | Yes |

| 57 | 50 | 1 | nonanginal | 140 | 233 | 0 | 0 | 163 | 0 | 0.6 | 2 | 1 | reversable | Yes |

| 58 | 41 | 1 | asymptomatic | 110 | 172 | 0 | 2 | 158 | 0 | 0.0 | 1 | 0 | reversable | Yes |

| 59 | 54 | 1 | nonanginal | 125 | 273 | 0 | 2 | 152 | 0 | 0.5 | 3 | 1 | normal | No |

| 60 | 51 | 1 | typical | 125 | 213 | 0 | 2 | 125 | 1 | 1.4 | 1 | 1 | normal | No |

| 61 | 51 | 0 | asymptomatic | 130 | 305 | 0 | 0 | 142 | 1 | 1.2 | 2 | 0 | reversable | Yes |

| 62 | 46 | 0 | nonanginal | 142 | 177 | 0 | 2 | 160 | 1 | 1.4 | 3 | 0 | normal | No |

| 63 | 58 | 1 | asymptomatic | 128 | 216 | 0 | 2 | 131 | 1 | 2.2 | 2 | 3 | reversable | Yes |

| 64 | 54 | 0 | nonanginal | 135 | 304 | 1 | 0 | 170 | 0 | 0.0 | 1 | 0 | normal | No |

| 65 | 54 | 1 | asymptomatic | 120 | 188 | 0 | 0 | 113 | 0 | 1.4 | 2 | 1 | reversable | Yes |

| 66 | 60 | 1 | asymptomatic | 145 | 282 | 0 | 2 | 142 | 1 | 2.8 | 2 | 2 | reversable | Yes |

| 67 | 60 | 1 | nonanginal | 140 | 185 | 0 | 2 | 155 | 0 | 3.0 | 2 | 0 | normal | Yes |

| 68 | 54 | 1 | nonanginal | 150 | 232 | 0 | 2 | 165 | 0 | 1.6 | 1 | 0 | reversable | No |

| 69 | 59 | 1 | asymptomatic | 170 | 326 | 0 | 2 | 140 | 1 | 3.4 | 3 | 0 | reversable | Yes |

| 70 | 46 | 1 | nonanginal | 150 | 231 | 0 | 0 | 147 | 0 | 3.6 | 2 | 0 | normal | Yes |

| 71 | 65 | 0 | nonanginal | 155 | 269 | 0 | 0 | 148 | 0 | 0.8 | 1 | 0 | normal | No |

| 72 | 67 | 1 | asymptomatic | 125 | 254 | 1 | 0 | 163 | 0 | 0.2 | 2 | 2 | reversable | Yes |

| 73 | 62 | 1 | asymptomatic | 120 | 267 | 0 | 0 | 99 | 1 | 1.8 | 2 | 2 | reversable | Yes |

| 74 | 65 | 1 | asymptomatic | 110 | 248 | 0 | 2 | 158 | 0 | 0.6 | 1 | 2 | fixed | Yes |

| 75 | 44 | 1 | asymptomatic | 110 | 197 | 0 | 2 | 177 | 0 | 0.0 | 1 | 1 | normal | Yes |

| 76 | 65 | 0 | nonanginal | 160 | 360 | 0 | 2 | 151 | 0 | 0.8 | 1 | 0 | normal | No |

| 77 | 60 | 1 | asymptomatic | 125 | 258 | 0 | 2 | 141 | 1 | 2.8 | 2 | 1 | reversable | Yes |

| 78 | 51 | 0 | nonanginal | 140 | 308 | 0 | 2 | 142 | 0 | 1.5 | 1 | 1 | normal | No |

| 79 | 48 | 1 | nontypical | 130 | 245 | 0 | 2 | 180 | 0 | 0.2 | 2 | 0 | normal | No |

| 80 | 58 | 1 | asymptomatic | 150 | 270 | 0 | 2 | 111 | 1 | 0.8 | 1 | 0 | reversable | Yes |

| 81 | 45 | 1 | asymptomatic | 104 | 208 | 0 | 2 | 148 | 1 | 3.0 | 2 | 0 | normal | No |

| 82 | 53 | 0 | asymptomatic | 130 | 264 | 0 | 2 | 143 | 0 | 0.4 | 2 | 0 | normal | No |

| 83 | 39 | 1 | nonanginal | 140 | 321 | 0 | 2 | 182 | 0 | 0.0 | 1 | 0 | normal | No |

| 84 | 68 | 1 | nonanginal | 180 | 274 | 1 | 2 | 150 | 1 | 1.6 | 2 | 0 | reversable | Yes |

| 85 | 52 | 1 | nontypical | 120 | 325 | 0 | 0 | 172 | 0 | 0.2 | 1 | 0 | normal | No |

| 86 | 44 | 1 | nonanginal | 140 | 235 | 0 | 2 | 180 | 0 | 0.0 | 1 | 0 | normal | No |

| 87 | 47 | 1 | nonanginal | 138 | 257 | 0 | 2 | 156 | 0 | 0.0 | 1 | 0 | normal | No |

| 89 | 53 | 0 | asymptomatic | 138 | 234 | 0 | 2 | 160 | 0 | 0.0 | 1 | 0 | normal | No |

| 90 | 51 | 0 | nonanginal | 130 | 256 | 0 | 2 | 149 | 0 | 0.5 | 1 | 0 | normal | No |

| 91 | 66 | 1 | asymptomatic | 120 | 302 | 0 | 2 | 151 | 0 | 0.4 | 2 | 0 | normal | No |

| 92 | 62 | 0 | asymptomatic | 160 | 164 | 0 | 2 | 145 | 0 | 6.2 | 3 | 3 | reversable | Yes |

| 93 | 62 | 1 | nonanginal | 130 | 231 | 0 | 0 | 146 | 0 | 1.8 | 2 | 3 | reversable | No |

| 94 | 44 | 0 | nonanginal | 108 | 141 | 0 | 0 | 175 | 0 | 0.6 | 2 | 0 | normal | No |

| 95 | 63 | 0 | nonanginal | 135 | 252 | 0 | 2 | 172 | 0 | 0.0 | 1 | 0 | normal | No |

| 96 | 52 | 1 | asymptomatic | 128 | 255 | 0 | 0 | 161 | 1 | 0.0 | 1 | 1 | reversable | Yes |

| 97 | 59 | 1 | asymptomatic | 110 | 239 | 0 | 2 | 142 | 1 | 1.2 | 2 | 1 | reversable | Yes |

| 98 | 60 | 0 | asymptomatic | 150 | 258 | 0 | 2 | 157 | 0 | 2.6 | 2 | 2 | reversable | Yes |

| 99 | 52 | 1 | nontypical | 134 | 201 | 0 | 0 | 158 | 0 | 0.8 | 1 | 1 | normal | No |

| 100 | 48 | 1 | asymptomatic | 122 | 222 | 0 | 2 | 186 | 0 | 0.0 | 1 | 0 | normal | No |

| 101 | 45 | 1 | asymptomatic | 115 | 260 | 0 | 2 | 185 | 0 | 0.0 | 1 | 0 | normal | No |

| 102 | 34 | 1 | typical | 118 | 182 | 0 | 2 | 174 | 0 | 0.0 | 1 | 0 | normal | No |

| 103 | 57 | 0 | asymptomatic | 128 | 303 | 0 | 2 | 159 | 0 | 0.0 | 1 | 1 | normal | No |

| 104 | 71 | 0 | nonanginal | 110 | 265 | 1 | 2 | 130 | 0 | 0.0 | 1 | 1 | normal | No |

| 105 | 49 | 1 | nonanginal | 120 | 188 | 0 | 0 | 139 | 0 | 2.0 | 2 | 3 | reversable | Yes |

| 106 | 54 | 1 | nontypical | 108 | 309 | 0 | 0 | 156 | 0 | 0.0 | 1 | 0 | reversable | No |

| 107 | 59 | 1 | asymptomatic | 140 | 177 | 0 | 0 | 162 | 1 | 0.0 | 1 | 1 | reversable | Yes |

| 108 | 57 | 1 | nonanginal | 128 | 229 | 0 | 2 | 150 | 0 | 0.4 | 2 | 1 | reversable | Yes |

| 109 | 61 | 1 | asymptomatic | 120 | 260 | 0 | 0 | 140 | 1 | 3.6 | 2 | 1 | reversable | Yes |

| 110 | 39 | 1 | asymptomatic | 118 | 219 | 0 | 0 | 140 | 0 | 1.2 | 2 | 0 | reversable | Yes |

| 111 | 61 | 0 | asymptomatic | 145 | 307 | 0 | 2 | 146 | 1 | 1.0 | 2 | 0 | reversable | Yes |

| 112 | 56 | 1 | asymptomatic | 125 | 249 | 1 | 2 | 144 | 1 | 1.2 | 2 | 1 | normal | Yes |

| 113 | 52 | 1 | typical | 118 | 186 | 0 | 2 | 190 | 0 | 0.0 | 2 | 0 | fixed | No |

| 114 | 43 | 0 | asymptomatic | 132 | 341 | 1 | 2 | 136 | 1 | 3.0 | 2 | 0 | reversable | Yes |

| 115 | 62 | 0 | nonanginal | 130 | 263 | 0 | 0 | 97 | 0 | 1.2 | 2 | 1 | reversable | Yes |

| 116 | 41 | 1 | nontypical | 135 | 203 | 0 | 0 | 132 | 0 | 0.0 | 2 | 0 | fixed | No |

| 117 | 58 | 1 | nonanginal | 140 | 211 | 1 | 2 | 165 | 0 | 0.0 | 1 | 0 | normal | No |

| 118 | 35 | 0 | asymptomatic | 138 | 183 | 0 | 0 | 182 | 0 | 1.4 | 1 | 0 | normal | No |

| 119 | 63 | 1 | asymptomatic | 130 | 330 | 1 | 2 | 132 | 1 | 1.8 | 1 | 3 | reversable | Yes |

| 120 | 65 | 1 | asymptomatic | 135 | 254 | 0 | 2 | 127 | 0 | 2.8 | 2 | 1 | reversable | Yes |

| 121 | 48 | 1 | asymptomatic | 130 | 256 | 1 | 2 | 150 | 1 | 0.0 | 1 | 2 | reversable | Yes |

| 122 | 63 | 0 | asymptomatic | 150 | 407 | 0 | 2 | 154 | 0 | 4.0 | 2 | 3 | reversable | Yes |

| 123 | 51 | 1 | nonanginal | 100 | 222 | 0 | 0 | 143 | 1 | 1.2 | 2 | 0 | normal | No |

| 124 | 55 | 1 | asymptomatic | 140 | 217 | 0 | 0 | 111 | 1 | 5.6 | 3 | 0 | reversable | Yes |

| 125 | 65 | 1 | typical | 138 | 282 | 1 | 2 | 174 | 0 | 1.4 | 2 | 1 | normal | Yes |

| 126 | 45 | 0 | nontypical | 130 | 234 | 0 | 2 | 175 | 0 | 0.6 | 2 | 0 | normal | No |

| 127 | 56 | 0 | asymptomatic | 200 | 288 | 1 | 2 | 133 | 1 | 4.0 | 3 | 2 | reversable | Yes |

| 128 | 54 | 1 | asymptomatic | 110 | 239 | 0 | 0 | 126 | 1 | 2.8 | 2 | 1 | reversable | Yes |

| 129 | 44 | 1 | nontypical | 120 | 220 | 0 | 0 | 170 | 0 | 0.0 | 1 | 0 | normal | No |

| 130 | 62 | 0 | asymptomatic | 124 | 209 | 0 | 0 | 163 | 0 | 0.0 | 1 | 0 | normal | No |

| 131 | 54 | 1 | nonanginal | 120 | 258 | 0 | 2 | 147 | 0 | 0.4 | 2 | 0 | reversable | No |

| 132 | 51 | 1 | nonanginal | 94 | 227 | 0 | 0 | 154 | 1 | 0.0 | 1 | 1 | reversable | No |

| 133 | 29 | 1 | nontypical | 130 | 204 | 0 | 2 | 202 | 0 | 0.0 | 1 | 0 | normal | No |

| 134 | 51 | 1 | asymptomatic | 140 | 261 | 0 | 2 | 186 | 1 | 0.0 | 1 | 0 | normal | No |

| 135 | 43 | 0 | nonanginal | 122 | 213 | 0 | 0 | 165 | 0 | 0.2 | 2 | 0 | normal | No |

| 136 | 55 | 0 | nontypical | 135 | 250 | 0 | 2 | 161 | 0 | 1.4 | 2 | 0 | normal | No |

| 137 | 70 | 1 | asymptomatic | 145 | 174 | 0 | 0 | 125 | 1 | 2.6 | 3 | 0 | reversable | Yes |

| 138 | 62 | 1 | nontypical | 120 | 281 | 0 | 2 | 103 | 0 | 1.4 | 2 | 1 | reversable | Yes |

| 139 | 35 | 1 | asymptomatic | 120 | 198 | 0 | 0 | 130 | 1 | 1.6 | 2 | 0 | reversable | Yes |

| 140 | 51 | 1 | nonanginal | 125 | 245 | 1 | 2 | 166 | 0 | 2.4 | 2 | 0 | normal | No |

| 141 | 59 | 1 | nontypical | 140 | 221 | 0 | 0 | 164 | 1 | 0.0 | 1 | 0 | normal | No |

| 142 | 59 | 1 | typical | 170 | 288 | 0 | 2 | 159 | 0 | 0.2 | 2 | 0 | reversable | Yes |

| 143 | 52 | 1 | nontypical | 128 | 205 | 1 | 0 | 184 | 0 | 0.0 | 1 | 0 | normal | No |

| 144 | 64 | 1 | nonanginal | 125 | 309 | 0 | 0 | 131 | 1 | 1.8 | 2 | 0 | reversable | Yes |

| 145 | 58 | 1 | nonanginal | 105 | 240 | 0 | 2 | 154 | 1 | 0.6 | 2 | 0 | reversable | No |

| 146 | 47 | 1 | nonanginal | 108 | 243 | 0 | 0 | 152 | 0 | 0.0 | 1 | 0 | normal | Yes |

| 147 | 57 | 1 | asymptomatic | 165 | 289 | 1 | 2 | 124 | 0 | 1.0 | 2 | 3 | reversable | Yes |

| 148 | 41 | 1 | nonanginal | 112 | 250 | 0 | 0 | 179 | 0 | 0.0 | 1 | 0 | normal | No |

| 149 | 45 | 1 | nontypical | 128 | 308 | 0 | 2 | 170 | 0 | 0.0 | 1 | 0 | normal | No |

| 150 | 60 | 0 | nonanginal | 102 | 318 | 0 | 0 | 160 | 0 | 0.0 | 1 | 1 | normal | No |

| 151 | 52 | 1 | typical | 152 | 298 | 1 | 0 | 178 | 0 | 1.2 | 2 | 0 | reversable | No |

| 152 | 42 | 0 | asymptomatic | 102 | 265 | 0 | 2 | 122 | 0 | 0.6 | 2 | 0 | normal | No |

| 153 | 67 | 0 | nonanginal | 115 | 564 | 0 | 2 | 160 | 0 | 1.6 | 2 | 0 | reversable | No |

| 154 | 55 | 1 | asymptomatic | 160 | 289 | 0 | 2 | 145 | 1 | 0.8 | 2 | 1 | reversable | Yes |

| 155 | 64 | 1 | asymptomatic | 120 | 246 | 0 | 2 | 96 | 1 | 2.2 | 3 | 1 | normal | Yes |

| 156 | 70 | 1 | asymptomatic | 130 | 322 | 0 | 2 | 109 | 0 | 2.4 | 2 | 3 | normal | Yes |

| 157 | 51 | 1 | asymptomatic | 140 | 299 | 0 | 0 | 173 | 1 | 1.6 | 1 | 0 | reversable | Yes |

| 158 | 58 | 1 | asymptomatic | 125 | 300 | 0 | 2 | 171 | 0 | 0.0 | 1 | 2 | reversable | Yes |

| 159 | 60 | 1 | asymptomatic | 140 | 293 | 0 | 2 | 170 | 0 | 1.2 | 2 | 2 | reversable | Yes |

| 160 | 68 | 1 | nonanginal | 118 | 277 | 0 | 0 | 151 | 0 | 1.0 | 1 | 1 | reversable | No |

| 161 | 46 | 1 | nontypical | 101 | 197 | 1 | 0 | 156 | 0 | 0.0 | 1 | 0 | reversable | No |

| 162 | 77 | 1 | asymptomatic | 125 | 304 | 0 | 2 | 162 | 1 | 0.0 | 1 | 3 | normal | Yes |

| 163 | 54 | 0 | nonanginal | 110 | 214 | 0 | 0 | 158 | 0 | 1.6 | 2 | 0 | normal | No |

| 164 | 58 | 0 | asymptomatic | 100 | 248 | 0 | 2 | 122 | 0 | 1.0 | 2 | 0 | normal | No |

| 165 | 48 | 1 | nonanginal | 124 | 255 | 1 | 0 | 175 | 0 | 0.0 | 1 | 2 | normal | No |

| 166 | 57 | 1 | asymptomatic | 132 | 207 | 0 | 0 | 168 | 1 | 0.0 | 1 | 0 | reversable | No |

| 168 | 54 | 0 | nontypical | 132 | 288 | 1 | 2 | 159 | 1 | 0.0 | 1 | 1 | normal | No |

| 169 | 35 | 1 | asymptomatic | 126 | 282 | 0 | 2 | 156 | 1 | 0.0 | 1 | 0 | reversable | Yes |

| 170 | 45 | 0 | nontypical | 112 | 160 | 0 | 0 | 138 | 0 | 0.0 | 2 | 0 | normal | No |

| 171 | 70 | 1 | nonanginal | 160 | 269 | 0 | 0 | 112 | 1 | 2.9 | 2 | 1 | reversable | Yes |

| 172 | 53 | 1 | asymptomatic | 142 | 226 | 0 | 2 | 111 | 1 | 0.0 | 1 | 0 | reversable | No |

| 173 | 59 | 0 | asymptomatic | 174 | 249 | 0 | 0 | 143 | 1 | 0.0 | 2 | 0 | normal | Yes |

| 174 | 62 | 0 | asymptomatic | 140 | 394 | 0 | 2 | 157 | 0 | 1.2 | 2 | 0 | normal | No |

| 175 | 64 | 1 | asymptomatic | 145 | 212 | 0 | 2 | 132 | 0 | 2.0 | 2 | 2 | fixed | Yes |

| 176 | 57 | 1 | asymptomatic | 152 | 274 | 0 | 0 | 88 | 1 | 1.2 | 2 | 1 | reversable | Yes |

| 177 | 52 | 1 | asymptomatic | 108 | 233 | 1 | 0 | 147 | 0 | 0.1 | 1 | 3 | reversable | No |

| 178 | 56 | 1 | asymptomatic | 132 | 184 | 0 | 2 | 105 | 1 | 2.1 | 2 | 1 | fixed | Yes |

| 179 | 43 | 1 | nonanginal | 130 | 315 | 0 | 0 | 162 | 0 | 1.9 | 1 | 1 | normal | No |

| 180 | 53 | 1 | nonanginal | 130 | 246 | 1 | 2 | 173 | 0 | 0.0 | 1 | 3 | normal | No |

| 181 | 48 | 1 | asymptomatic | 124 | 274 | 0 | 2 | 166 | 0 | 0.5 | 2 | 0 | reversable | Yes |

| 182 | 56 | 0 | asymptomatic | 134 | 409 | 0 | 2 | 150 | 1 | 1.9 | 2 | 2 | reversable | Yes |

| 183 | 42 | 1 | typical | 148 | 244 | 0 | 2 | 178 | 0 | 0.8 | 1 | 2 | normal | No |

| 184 | 59 | 1 | typical | 178 | 270 | 0 | 2 | 145 | 0 | 4.2 | 3 | 0 | reversable | No |

| 185 | 60 | 0 | asymptomatic | 158 | 305 | 0 | 2 | 161 | 0 | 0.0 | 1 | 0 | normal | Yes |

| 186 | 63 | 0 | nontypical | 140 | 195 | 0 | 0 | 179 | 0 | 0.0 | 1 | 2 | normal | No |

| 187 | 42 | 1 | nonanginal | 120 | 240 | 1 | 0 | 194 | 0 | 0.8 | 3 | 0 | reversable | No |

| 188 | 66 | 1 | nontypical | 160 | 246 | 0 | 0 | 120 | 1 | 0.0 | 2 | 3 | fixed | Yes |

| 189 | 54 | 1 | nontypical | 192 | 283 | 0 | 2 | 195 | 0 | 0.0 | 1 | 1 | reversable | Yes |

| 190 | 69 | 1 | nonanginal | 140 | 254 | 0 | 2 | 146 | 0 | 2.0 | 2 | 3 | reversable | Yes |

| 191 | 50 | 1 | nonanginal | 129 | 196 | 0 | 0 | 163 | 0 | 0.0 | 1 | 0 | normal | No |

| 192 | 51 | 1 | asymptomatic | 140 | 298 | 0 | 0 | 122 | 1 | 4.2 | 2 | 3 | reversable | Yes |

| 194 | 62 | 0 | asymptomatic | 138 | 294 | 1 | 0 | 106 | 0 | 1.9 | 2 | 3 | normal | Yes |

| 195 | 68 | 0 | nonanginal | 120 | 211 | 0 | 2 | 115 | 0 | 1.5 | 2 | 0 | normal | No |

| 196 | 67 | 1 | asymptomatic | 100 | 299 | 0 | 2 | 125 | 1 | 0.9 | 2 | 2 | normal | Yes |

| 197 | 69 | 1 | typical | 160 | 234 | 1 | 2 | 131 | 0 | 0.1 | 2 | 1 | normal | No |

| 198 | 45 | 0 | asymptomatic | 138 | 236 | 0 | 2 | 152 | 1 | 0.2 | 2 | 0 | normal | No |

| 199 | 50 | 0 | nontypical | 120 | 244 | 0 | 0 | 162 | 0 | 1.1 | 1 | 0 | normal | No |

| 200 | 59 | 1 | typical | 160 | 273 | 0 | 2 | 125 | 0 | 0.0 | 1 | 0 | normal | Yes |

| 201 | 50 | 0 | asymptomatic | 110 | 254 | 0 | 2 | 159 | 0 | 0.0 | 1 | 0 | normal | No |

| 202 | 64 | 0 | asymptomatic | 180 | 325 | 0 | 0 | 154 | 1 | 0.0 | 1 | 0 | normal | No |

| 203 | 57 | 1 | nonanginal | 150 | 126 | 1 | 0 | 173 | 0 | 0.2 | 1 | 1 | reversable | No |

| 204 | 64 | 0 | nonanginal | 140 | 313 | 0 | 0 | 133 | 0 | 0.2 | 1 | 0 | reversable | No |

| 205 | 43 | 1 | asymptomatic | 110 | 211 | 0 | 0 | 161 | 0 | 0.0 | 1 | 0 | reversable | No |

| 206 | 45 | 1 | asymptomatic | 142 | 309 | 0 | 2 | 147 | 1 | 0.0 | 2 | 3 | reversable | Yes |

| 207 | 58 | 1 | asymptomatic | 128 | 259 | 0 | 2 | 130 | 1 | 3.0 | 2 | 2 | reversable | Yes |

| 208 | 50 | 1 | asymptomatic | 144 | 200 | 0 | 2 | 126 | 1 | 0.9 | 2 | 0 | reversable | Yes |

| 209 | 55 | 1 | nontypical | 130 | 262 | 0 | 0 | 155 | 0 | 0.0 | 1 | 0 | normal | No |

| 210 | 62 | 0 | asymptomatic | 150 | 244 | 0 | 0 | 154 | 1 | 1.4 | 2 | 0 | normal | Yes |

| 211 | 37 | 0 | nonanginal | 120 | 215 | 0 | 0 | 170 | 0 | 0.0 | 1 | 0 | normal | No |

| 212 | 38 | 1 | typical | 120 | 231 | 0 | 0 | 182 | 1 | 3.8 | 2 | 0 | reversable | Yes |

| 213 | 41 | 1 | nonanginal | 130 | 214 | 0 | 2 | 168 | 0 | 2.0 | 2 | 0 | normal | No |

| 214 | 66 | 0 | asymptomatic | 178 | 228 | 1 | 0 | 165 | 1 | 1.0 | 2 | 2 | reversable | Yes |

| 215 | 52 | 1 | asymptomatic | 112 | 230 | 0 | 0 | 160 | 0 | 0.0 | 1 | 1 | normal | Yes |

| 216 | 56 | 1 | typical | 120 | 193 | 0 | 2 | 162 | 0 | 1.9 | 2 | 0 | reversable | No |

| 217 | 46 | 0 | nontypical | 105 | 204 | 0 | 0 | 172 | 0 | 0.0 | 1 | 0 | normal | No |

| 218 | 46 | 0 | asymptomatic | 138 | 243 | 0 | 2 | 152 | 1 | 0.0 | 2 | 0 | normal | No |

| 219 | 64 | 0 | asymptomatic | 130 | 303 | 0 | 0 | 122 | 0 | 2.0 | 2 | 2 | normal | No |

| 220 | 59 | 1 | asymptomatic | 138 | 271 | 0 | 2 | 182 | 0 | 0.0 | 1 | 0 | normal | No |

| 221 | 41 | 0 | nonanginal | 112 | 268 | 0 | 2 | 172 | 1 | 0.0 | 1 | 0 | normal | No |

| 222 | 54 | 0 | nonanginal | 108 | 267 | 0 | 2 | 167 | 0 | 0.0 | 1 | 0 | normal | No |

| 223 | 39 | 0 | nonanginal | 94 | 199 | 0 | 0 | 179 | 0 | 0.0 | 1 | 0 | normal | No |

| 224 | 53 | 1 | asymptomatic | 123 | 282 | 0 | 0 | 95 | 1 | 2.0 | 2 | 2 | reversable | Yes |

| 225 | 63 | 0 | asymptomatic | 108 | 269 | 0 | 0 | 169 | 1 | 1.8 | 2 | 2 | normal | Yes |

| 226 | 34 | 0 | nontypical | 118 | 210 | 0 | 0 | 192 | 0 | 0.7 | 1 | 0 | normal | No |

| 227 | 47 | 1 | asymptomatic | 112 | 204 | 0 | 0 | 143 | 0 | 0.1 | 1 | 0 | normal | No |

| 228 | 67 | 0 | nonanginal | 152 | 277 | 0 | 0 | 172 | 0 | 0.0 | 1 | 1 | normal | No |

| 229 | 54 | 1 | asymptomatic | 110 | 206 | 0 | 2 | 108 | 1 | 0.0 | 2 | 1 | normal | Yes |

| 230 | 66 | 1 | asymptomatic | 112 | 212 | 0 | 2 | 132 | 1 | 0.1 | 1 | 1 | normal | Yes |

| 231 | 52 | 0 | nonanginal | 136 | 196 | 0 | 2 | 169 | 0 | 0.1 | 2 | 0 | normal | No |

| 232 | 55 | 0 | asymptomatic | 180 | 327 | 0 | 1 | 117 | 1 | 3.4 | 2 | 0 | normal | Yes |

| 233 | 49 | 1 | nonanginal | 118 | 149 | 0 | 2 | 126 | 0 | 0.8 | 1 | 3 | normal | Yes |

| 234 | 74 | 0 | nontypical | 120 | 269 | 0 | 2 | 121 | 1 | 0.2 | 1 | 1 | normal | No |

| 235 | 54 | 0 | nonanginal | 160 | 201 | 0 | 0 | 163 | 0 | 0.0 | 1 | 1 | normal | No |

| 236 | 54 | 1 | asymptomatic | 122 | 286 | 0 | 2 | 116 | 1 | 3.2 | 2 | 2 | normal | Yes |

| 237 | 56 | 1 | asymptomatic | 130 | 283 | 1 | 2 | 103 | 1 | 1.6 | 3 | 0 | reversable | Yes |

| 238 | 46 | 1 | asymptomatic | 120 | 249 | 0 | 2 | 144 | 0 | 0.8 | 1 | 0 | reversable | Yes |

| 239 | 49 | 0 | nontypical | 134 | 271 | 0 | 0 | 162 | 0 | 0.0 | 2 | 0 | normal | No |

| 240 | 42 | 1 | nontypical | 120 | 295 | 0 | 0 | 162 | 0 | 0.0 | 1 | 0 | normal | No |

| 241 | 41 | 1 | nontypical | 110 | 235 | 0 | 0 | 153 | 0 | 0.0 | 1 | 0 | normal | No |

| 242 | 41 | 0 | nontypical | 126 | 306 | 0 | 0 | 163 | 0 | 0.0 | 1 | 0 | normal | No |

| 243 | 49 | 0 | asymptomatic | 130 | 269 | 0 | 0 | 163 | 0 | 0.0 | 1 | 0 | normal | No |

| 244 | 61 | 1 | typical | 134 | 234 | 0 | 0 | 145 | 0 | 2.6 | 2 | 2 | normal | Yes |

| 245 | 60 | 0 | nonanginal | 120 | 178 | 1 | 0 | 96 | 0 | 0.0 | 1 | 0 | normal | No |

| 246 | 67 | 1 | asymptomatic | 120 | 237 | 0 | 0 | 71 | 0 | 1.0 | 2 | 0 | normal | Yes |

| 247 | 58 | 1 | asymptomatic | 100 | 234 | 0 | 0 | 156 | 0 | 0.1 | 1 | 1 | reversable | Yes |

| 248 | 47 | 1 | asymptomatic | 110 | 275 | 0 | 2 | 118 | 1 | 1.0 | 2 | 1 | normal | Yes |

| 249 | 52 | 1 | asymptomatic | 125 | 212 | 0 | 0 | 168 | 0 | 1.0 | 1 | 2 | reversable | Yes |

| 250 | 62 | 1 | nontypical | 128 | 208 | 1 | 2 | 140 | 0 | 0.0 | 1 | 0 | normal | No |

| 251 | 57 | 1 | asymptomatic | 110 | 201 | 0 | 0 | 126 | 1 | 1.5 | 2 | 0 | fixed | No |

| 252 | 58 | 1 | asymptomatic | 146 | 218 | 0 | 0 | 105 | 0 | 2.0 | 2 | 1 | reversable | Yes |

| 253 | 64 | 1 | asymptomatic | 128 | 263 | 0 | 0 | 105 | 1 | 0.2 | 2 | 1 | reversable | No |

| 254 | 51 | 0 | nonanginal | 120 | 295 | 0 | 2 | 157 | 0 | 0.6 | 1 | 0 | normal | No |

| 255 | 43 | 1 | asymptomatic | 115 | 303 | 0 | 0 | 181 | 0 | 1.2 | 2 | 0 | normal | No |

| 256 | 42 | 0 | nonanginal | 120 | 209 | 0 | 0 | 173 | 0 | 0.0 | 2 | 0 | normal | No |

| 257 | 67 | 0 | asymptomatic | 106 | 223 | 0 | 0 | 142 | 0 | 0.3 | 1 | 2 | normal | No |

| 258 | 76 | 0 | nonanginal | 140 | 197 | 0 | 1 | 116 | 0 | 1.1 | 2 | 0 | normal | No |

| 259 | 70 | 1 | nontypical | 156 | 245 | 0 | 2 | 143 | 0 | 0.0 | 1 | 0 | normal | No |

| 260 | 57 | 1 | nontypical | 124 | 261 | 0 | 0 | 141 | 0 | 0.3 | 1 | 0 | reversable | Yes |

| 261 | 44 | 0 | nonanginal | 118 | 242 | 0 | 0 | 149 | 0 | 0.3 | 2 | 1 | normal | No |

| 262 | 58 | 0 | nontypical | 136 | 319 | 1 | 2 | 152 | 0 | 0.0 | 1 | 2 | normal | Yes |

| 263 | 60 | 0 | typical | 150 | 240 | 0 | 0 | 171 | 0 | 0.9 | 1 | 0 | normal | No |

| 264 | 44 | 1 | nonanginal | 120 | 226 | 0 | 0 | 169 | 0 | 0.0 | 1 | 0 | normal | No |

| 265 | 61 | 1 | asymptomatic | 138 | 166 | 0 | 2 | 125 | 1 | 3.6 | 2 | 1 | normal | Yes |

| 266 | 42 | 1 | asymptomatic | 136 | 315 | 0 | 0 | 125 | 1 | 1.8 | 2 | 0 | fixed | Yes |

| 268 | 59 | 1 | nonanginal | 126 | 218 | 1 | 0 | 134 | 0 | 2.2 | 2 | 1 | fixed | Yes |

| 269 | 40 | 1 | asymptomatic | 152 | 223 | 0 | 0 | 181 | 0 | 0.0 | 1 | 0 | reversable | Yes |

| 270 | 42 | 1 | nonanginal | 130 | 180 | 0 | 0 | 150 | 0 | 0.0 | 1 | 0 | normal | No |

| 271 | 61 | 1 | asymptomatic | 140 | 207 | 0 | 2 | 138 | 1 | 1.9 | 1 | 1 | reversable | Yes |

| 272 | 66 | 1 | asymptomatic | 160 | 228 | 0 | 2 | 138 | 0 | 2.3 | 1 | 0 | fixed | No |

| 273 | 46 | 1 | asymptomatic | 140 | 311 | 0 | 0 | 120 | 1 | 1.8 | 2 | 2 | reversable | Yes |

| 274 | 71 | 0 | asymptomatic | 112 | 149 | 0 | 0 | 125 | 0 | 1.6 | 2 | 0 | normal | No |

| 275 | 59 | 1 | typical | 134 | 204 | 0 | 0 | 162 | 0 | 0.8 | 1 | 2 | normal | Yes |

| 276 | 64 | 1 | typical | 170 | 227 | 0 | 2 | 155 | 0 | 0.6 | 2 | 0 | reversable | No |

| 277 | 66 | 0 | nonanginal | 146 | 278 | 0 | 2 | 152 | 0 | 0.0 | 2 | 1 | normal | No |

| 278 | 39 | 0 | nonanginal | 138 | 220 | 0 | 0 | 152 | 0 | 0.0 | 2 | 0 | normal | No |

| 279 | 57 | 1 | nontypical | 154 | 232 | 0 | 2 | 164 | 0 | 0.0 | 1 | 1 | normal | Yes |

| 280 | 58 | 0 | asymptomatic | 130 | 197 | 0 | 0 | 131 | 0 | 0.6 | 2 | 0 | normal | No |

| 281 | 57 | 1 | asymptomatic | 110 | 335 | 0 | 0 | 143 | 1 | 3.0 | 2 | 1 | reversable | Yes |

| 282 | 47 | 1 | nonanginal | 130 | 253 | 0 | 0 | 179 | 0 | 0.0 | 1 | 0 | normal | No |

| 283 | 55 | 0 | asymptomatic | 128 | 205 | 0 | 1 | 130 | 1 | 2.0 | 2 | 1 | reversable | Yes |

| 284 | 35 | 1 | nontypical | 122 | 192 | 0 | 0 | 174 | 0 | 0.0 | 1 | 0 | normal | No |

| 285 | 61 | 1 | asymptomatic | 148 | 203 | 0 | 0 | 161 | 0 | 0.0 | 1 | 1 | reversable | Yes |

| 286 | 58 | 1 | asymptomatic | 114 | 318 | 0 | 1 | 140 | 0 | 4.4 | 3 | 3 | fixed | Yes |

| 287 | 58 | 0 | asymptomatic | 170 | 225 | 1 | 2 | 146 | 1 | 2.8 | 2 | 2 | fixed | Yes |

| 289 | 56 | 1 | nontypical | 130 | 221 | 0 | 2 | 163 | 0 | 0.0 | 1 | 0 | reversable | No |

| 290 | 56 | 1 | nontypical | 120 | 240 | 0 | 0 | 169 | 0 | 0.0 | 3 | 0 | normal | No |

| 291 | 67 | 1 | nonanginal | 152 | 212 | 0 | 2 | 150 | 0 | 0.8 | 2 | 0 | reversable | Yes |

| 292 | 55 | 0 | nontypical | 132 | 342 | 0 | 0 | 166 | 0 | 1.2 | 1 | 0 | normal | No |

| 293 | 44 | 1 | asymptomatic | 120 | 169 | 0 | 0 | 144 | 1 | 2.8 | 3 | 0 | fixed | Yes |

| 294 | 63 | 1 | asymptomatic | 140 | 187 | 0 | 2 | 144 | 1 | 4.0 | 1 | 2 | reversable | Yes |

| 295 | 63 | 0 | asymptomatic | 124 | 197 | 0 | 0 | 136 | 1 | 0.0 | 2 | 0 | normal | Yes |

| 296 | 41 | 1 | nontypical | 120 | 157 | 0 | 0 | 182 | 0 | 0.0 | 1 | 0 | normal | No |

| 297 | 59 | 1 | asymptomatic | 164 | 176 | 1 | 2 | 90 | 0 | 1.0 | 2 | 2 | fixed | Yes |

| 298 | 57 | 0 | asymptomatic | 140 | 241 | 0 | 0 | 123 | 1 | 0.2 | 2 | 0 | reversable | Yes |

| 299 | 45 | 1 | typical | 110 | 264 | 0 | 0 | 132 | 0 | 1.2 | 2 | 0 | reversable | Yes |

| 300 | 68 | 1 | asymptomatic | 144 | 193 | 1 | 0 | 141 | 0 | 3.4 | 2 | 2 | reversable | Yes |

| 301 | 57 | 1 | asymptomatic | 130 | 131 | 0 | 0 | 115 | 1 | 1.2 | 2 | 1 | reversable | Yes |

| 302 | 57 | 0 | nontypical | 130 | 236 | 0 | 2 | 174 | 0 | 0.0 | 2 | 1 | normal | Yes |

16.7.2 CarSeats data

| Sales | CompPrice | Income | Advertising | Population | Price | ShelveLoc | Age | Education | Urban | US |
|---|---|---|---|---|---|---|---|---|---|---|
| 9.50 | 138 | 73 | 11 | 276 | 120 | Bad | 42 | 17 | Yes | Yes |
| 11.22 | 111 | 48 | 16 | 260 | 83 | Good | 65 | 10 | Yes | Yes |
| 10.06 | 113 | 35 | 10 | 269 | 80 | Medium | 59 | 12 | Yes | Yes |
| 7.40 | 117 | 100 | 4 | 466 | 97 | Medium | 55 | 14 | Yes | Yes |
| 4.15 | 141 | 64 | 3 | 340 | 128 | Bad | 38 | 13 | Yes | No |
| 10.81 | 124 | 113 | 13 | 501 | 72 | Bad | 78 | 16 | No | Yes |
| 6.63 | 115 | 105 | 0 | 45 | 108 | Medium | 71 | 15 | Yes | No |
| 11.85 | 136 | 81 | 15 | 425 | 120 | Good | 67 | 10 | Yes | Yes |
| 6.54 | 132 | 110 | 0 | 108 | 124 | Medium | 76 | 10 | No | No |
| 4.69 | 132 | 113 | 0 | 131 | 124 | Medium | 76 | 17 | No | Yes |
| 9.01 | 121 | 78 | 9 | 150 | 100 | Bad | 26 | 10 | No | Yes |
| 11.96 | 117 | 94 | 4 | 503 | 94 | Good | 50 | 13 | Yes | Yes |
| 3.98 | 122 | 35 | 2 | 393 | 136 | Medium | 62 | 18 | Yes | No |
| 10.96 | 115 | 28 | 11 | 29 | 86 | Good | 53 | 18 | Yes | Yes |
| 11.17 | 107 | 117 | 11 | 148 | 118 | Good | 52 | 18 | Yes | Yes |
| 8.71 | 149 | 95 | 5 | 400 | 144 | Medium | 76 | 18 | No | No |
| 7.58 | 118 | 32 | 0 | 284 | 110 | Good | 63 | 13 | Yes | No |
| 12.29 | 147 | 74 | 13 | 251 | 131 | Good | 52 | 10 | Yes | Yes |
| 13.91 | 110 | 110 | 0 | 408 | 68 | Good | 46 | 17 | No | Yes |
| 8.73 | 129 | 76 | 16 | 58 | 121 | Medium | 69 | 12 | Yes | Yes |
| 6.41 | 125 | 90 | 2 | 367 | 131 | Medium | 35 | 18 | Yes | Yes |
| 12.13 | 134 | 29 | 12 | 239 | 109 | Good | 62 | 18 | No | Yes |
| 5.08 | 128 | 46 | 6 | 497 | 138 | Medium | 42 | 13 | Yes | No |
| 5.87 | 121 | 31 | 0 | 292 | 109 | Medium | 79 | 10 | Yes | No |
| 10.14 | 145 | 119 | 16 | 294 | 113 | Bad | 42 | 12 | Yes | Yes |
| 14.90 | 139 | 32 | 0 | 176 | 82 | Good | 54 | 11 | No | No |
| 8.33 | 107 | 115 | 11 | 496 | 131 | Good | 50 | 11 | No | Yes |
| 5.27 | 98 | 118 | 0 | 19 | 107 | Medium | 64 | 17 | Yes | No |
| 2.99 | 103 | 74 | 0 | 359 | 97 | Bad | 55 | 11 | Yes | Yes |
| 7.81 | 104 | 99 | 15 | 226 | 102 | Bad | 58 | 17 | Yes | Yes |
| 13.55 | 125 | 94 | 0 | 447 | 89 | Good | 30 | 12 | Yes | No |
| 8.25 | 136 | 58 | 16 | 241 | 131 | Medium | 44 | 18 | Yes | Yes |
| 6.20 | 107 | 32 | 12 | 236 | 137 | Good | 64 | 10 | No | Yes |
| 8.77 | 114 | 38 | 13 | 317 | 128 | Good | 50 | 16 | Yes | Yes |
| 2.67 | 115 | 54 | 0 | 406 | 128 | Medium | 42 | 17 | Yes | Yes |
| 11.07 | 131 | 84 | 11 | 29 | 96 | Medium | 44 | 17 | No | Yes |
| 8.89 | 122 | 76 | 0 | 270 | 100 | Good | 60 | 18 | No | No |
| 4.95 | 121 | 41 | 5 | 412 | 110 | Medium | 54 | 10 | Yes | Yes |
| 6.59 | 109 | 73 | 0 | 454 | 102 | Medium | 65 | 15 | Yes | No |
| 3.24 | 130 | 60 | 0 | 144 | 138 | Bad | 38 | 10 | No | No |
| 2.07 | 119 | 98 | 0 | 18 | 126 | Bad | 73 | 17 | No | No |
| 7.96 | 157 | 53 | 0 | 403 | 124 | Bad | 58 | 16 | Yes | No |
| 10.43 | 77 | 69 | 0 | 25 | 24 | Medium | 50 | 18 | Yes | No |
| 4.12 | 123 | 42 | 11 | 16 | 134 | Medium | 59 | 13 | Yes | Yes |
| 4.16 | 85 | 79 | 6 | 325 | 95 | Medium | 69 | 13 | Yes | Yes |
| 4.56 | 141 | 63 | 0 | 168 | 135 | Bad | 44 | 12 | Yes | Yes |
| 12.44 | 127 | 90 | 14 | 16 | 70 | Medium | 48 | 15 | No | Yes |
| 4.38 | 126 | 98 | 0 | 173 | 108 | Bad | 55 | 16 | Yes | No |
| 3.91 | 116 | 52 | 0 | 349 | 98 | Bad | 69 | 18 | Yes | No |
| 10.61 | 157 | 93 | 0 | 51 | 149 | Good | 32 | 17 | Yes | No |
| 1.42 | 99 | 32 | 18 | 341 | 108 | Bad | 80 | 16 | Yes | Yes |
| 4.42 | 121 | 90 | 0 | 150 | 108 | Bad | 75 | 16 | Yes | No |
| 7.91 | 153 | 40 | 3 | 112 | 129 | Bad | 39 | 18 | Yes | Yes |
| 6.92 | 109 | 64 | 13 | 39 | 119 | Medium | 61 | 17 | Yes | Yes |
| 4.90 | 134 | 103 | 13 | 25 | 144 | Medium | 76 | 17 | No | Yes |
| 6.85 | 143 | 81 | 5 | 60 | 154 | Medium | 61 | 18 | Yes | Yes |
| 11.91 | 133 | 82 | 0 | 54 | 84 | Medium | 50 | 17 | Yes | No |
| 0.91 | 93 | 91 | 0 | 22 | 117 | Bad | 75 | 11 | Yes | No |
| 5.42 | 103 | 93 | 15 | 188 | 103 | Bad | 74 | 16 | Yes | Yes |
| 5.21 | 118 | 71 | 4 | 148 | 114 | Medium | 80 | 13 | Yes | No |
| 8.32 | 122 | 102 | 19 | 469 | 123 | Bad | 29 | 13 | Yes | Yes |
| 7.32 | 105 | 32 | 0 | 358 | 107 | Medium | 26 | 13 | No | No |
| 1.82 | 139 | 45 | 0 | 146 | 133 | Bad | 77 | 17 | Yes | Yes |
| 8.47 | 119 | 88 | 10 | 170 | 101 | Medium | 61 | 13 | Yes | Yes |
| 7.80 | 100 | 67 | 12 | 184 | 104 | Medium | 32 | 16 | No | Yes |
| 4.90 | 122 | 26 | 0 | 197 | 128 | Medium | 55 | 13 | No | No |
| 8.85 | 127 | 92 | 0 | 508 | 91 | Medium | 56 | 18 | Yes | No |
| 9.01 | 126 | 61 | 14 | 152 | 115 | Medium | 47 | 16 | Yes | Yes |
| 13.39 | 149 | 69 | 20 | 366 | 134 | Good | 60 | 13 | Yes | Yes |
| 7.99 | 127 | 59 | 0 | 339 | 99 | Medium | 65 | 12 | Yes | No |
| 9.46 | 89 | 81 | 15 | 237 | 99 | Good | 74 | 12 | Yes | Yes |
| 6.50 | 148 | 51 | 16 | 148 | 150 | Medium | 58 | 17 | No | Yes |
| 5.52 | 115 | 45 | 0 | 432 | 116 | Medium | 25 | 15 | Yes | No |
| 12.61 | 118 | 90 | 10 | 54 | 104 | Good | 31 | 11 | No | Yes |
| 6.20 | 150 | 68 | 5 | 125 | 136 | Medium | 64 | 13 | No | Yes |
| 8.55 | 88 | 111 | 23 | 480 | 92 | Bad | 36 | 16 | No | Yes |
| 10.64 | 102 | 87 | 10 | 346 | 70 | Medium | 64 | 15 | Yes | Yes |
| 7.70 | 118 | 71 | 12 | 44 | 89 | Medium | 67 | 18 | No | Yes |
| 4.43 | 134 | 48 | 1 | 139 | 145 | Medium | 65 | 12 | Yes | Yes |
| 9.14 | 134 | 67 | 0 | 286 | 90 | Bad | 41 | 13 | Yes | No |
| 8.01 | 113 | 100 | 16 | 353 | 79 | Bad | 68 | 11 | Yes | Yes |
| 7.52 | 116 | 72 | 0 | 237 | 128 | Good | 70 | 13 | Yes | No |
| 11.62 | 151 | 83 | 4 | 325 | 139 | Good | 28 | 17 | Yes | Yes |
| 4.42 | 109 | 36 | 7 | 468 | 94 | Bad | 56 | 11 | Yes | Yes |
| 2.23 | 111 | 25 | 0 | 52 | 121 | Bad | 43 | 18 | No | No |
| 8.47 | 125 | 103 | 0 | 304 | 112 | Medium | 49 | 13 | No | No |
| 8.70 | 150 | 84 | 9 | 432 | 134 | Medium | 64 | 15 | Yes | No |
| 11.70 | 131 | 67 | 7 | 272 | 126 | Good | 54 | 16 | No | Yes |
| 6.56 | 117 | 42 | 7 | 144 | 111 | Medium | 62 | 10 | Yes | Yes |
| 7.95 | 128 | 66 | 3 | 493 | 119 | Medium | 45 | 16 | No | No |
| 5.33 | 115 | 22 | 0 | 491 | 103 | Medium | 64 | 11 | No | No |
| 4.81 | 97 | 46 | 11 | 267 | 107 | Medium | 80 | 15 | Yes | Yes |
| 4.53 | 114 | 113 | 0 | 97 | 125 | Medium | 29 | 12 | Yes | No |
| 8.86 | 145 | 30 | 0 | 67 | 104 | Medium | 55 | 17 | Yes | No |
| 8.39 | 115 | 97 | 5 | 134 | 84 | Bad | 55 | 11 | Yes | Yes |
| 5.58 | 134 | 25 | 10 | 237 | 148 | Medium | 59 | 13 | Yes | Yes |
| 9.48 | 147 | 42 | 10 | 407 | 132 | Good | 73 | 16 | No | Yes |
| 7.45 | 161 | 82 | 5 | 287 | 129 | Bad | 33 | 16 | Yes | Yes |
| 12.49 | 122 | 77 | 24 | 382 | 127 | Good | 36 | 16 | No | Yes |
| 4.88 | 121 | 47 | 3 | 220 | 107 | Bad | 56 | 16 | No | Yes |
| 4.11 | 113 | 69 | 11 | 94 | 106 | Medium | 76 | 12 | No | Yes |
| 6.20 | 128 | 93 | 0 | 89 | 118 | Medium | 34 | 18 | Yes | No |
| 5.30 | 113 | 22 | 0 | 57 | 97 | Medium | 65 | 16 | No | No |
| 5.07 | 123 | 91 | 0 | 334 | 96 | Bad | 78 | 17 | Yes | Yes |
| 4.62 | 121 | 96 | 0 | 472 | 138 | Medium | 51 | 12 | Yes | No |
| 5.55 | 104 | 100 | 8 | 398 | 97 | Medium | 61 | 11 | Yes | Yes |
| 0.16 | 102 | 33 | 0 | 217 | 139 | Medium | 70 | 18 | No | No |
| 8.55 | 134 | 107 | 0 | 104 | 108 | Medium | 60 | 12 | Yes | No |
| 3.47 | 107 | 79 | 2 | 488 | 103 | Bad | 65 | 16 | Yes | No |
| 8.98 | 115 | 65 | 0 | 217 | 90 | Medium | 60 | 17 | No | No |
| 9.00 | 128 | 62 | 7 | 125 | 116 | Medium | 43 | 14 | Yes | Yes |
| 6.62 | 132 | 118 | 12 | 272 | 151 | Medium | 43 | 14 | Yes | Yes |
| 6.67 | 116 | 99 | 5 | 298 | 125 | Good | 62 | 12 | Yes | Yes |
| 6.01 | 131 | 29 | 11 | 335 | 127 | Bad | 33 | 12 | Yes | Yes |
| 9.31 | 122 | 87 | 9 | 17 | 106 | Medium | 65 | 13 | Yes | Yes |
| 8.54 | 139 | 35 | 0 | 95 | 129 | Medium | 42 | 13 | Yes | No |
| 5.08 | 135 | 75 | 0 | 202 | 128 | Medium | 80 | 10 | No | No |
| 8.80 | 145 | 53 | 0 | 507 | 119 | Medium | 41 | 12 | Yes | No |
| 7.57 | 112 | 88 | 2 | 243 | 99 | Medium | 62 | 11 | Yes | Yes |
| 7.37 | 130 | 94 | 8 | 137 | 128 | Medium | 64 | 12 | Yes | Yes |
| 6.87 | 128 | 105 | 11 | 249 | 131 | Medium | 63 | 13 | Yes | Yes |
| 11.67 | 125 | 89 | 10 | 380 | 87 | Bad | 28 | 10 | Yes | Yes |
| 6.88 | 119 | 100 | 5 | 45 | 108 | Medium | 75 | 10 | Yes | Yes |
| 8.19 | 127 | 103 | 0 | 125 | 155 | Good | 29 | 15 | No | Yes |
| 8.87 | 131 | 113 | 0 | 181 | 120 | Good | 63 | 14 | Yes | No |
| 9.34 | 89 | 78 | 0 | 181 | 49 | Medium | 43 | 15 | No | No |
| 11.27 | 153 | 68 | 2 | 60 | 133 | Good | 59 | 16 | Yes | Yes |
| 6.52 | 125 | 48 | 3 | 192 | 116 | Medium | 51 | 14 | Yes | Yes |
| 4.96 | 133 | 100 | 3 | 350 | 126 | Bad | 55 | 13 | Yes | Yes |
| 4.47 | 143 | 120 | 7 | 279 | 147 | Bad | 40 | 10 | No | Yes |
| 8.41 | 94 | 84 | 13 | 497 | 77 | Medium | 51 | 12 | Yes | Yes |
| 6.50 | 108 | 69 | 3 | 208 | 94 | Medium | 77 | 16 | Yes | No |
| 9.54 | 125 | 87 | 9 | 232 | 136 | Good | 72 | 10 | Yes | Yes |
| 7.62 | 132 | 98 | 2 | 265 | 97 | Bad | 62 | 12 | Yes | Yes |
| 3.67 | 132 | 31 | 0 | 327 | 131 | Medium | 76 | 16 | Yes | No |
| 6.44 | 96 | 94 | 14 | 384 | 120 | Medium | 36 | 18 | No | Yes |
| 5.17 | 131 | 75 | 0 | 10 | 120 | Bad | 31 | 18 | No | No |
| 6.52 | 128 | 42 | 0 | 436 | 118 | Medium | 80 | 11 | Yes | No |
| 10.27 | 125 | 103 | 12 | 371 | 109 | Medium | 44 | 10 | Yes | Yes |
| 12.30 | 146 | 62 | 10 | 310 | 94 | Medium | 30 | 13 | No | Yes |
| 6.03 | 133 | 60 | 10 | 277 | 129 | Medium | 45 | 18 | Yes | Yes |
| 6.53 | 140 | 42 | 0 | 331 | 131 | Bad | 28 | 15 | Yes | No |
| 7.44 | 124 | 84 | 0 | 300 | 104 | Medium | 77 | 15 | Yes | No |
| 0.53 | 122 | 88 | 7 | 36 | 159 | Bad | 28 | 17 | Yes | Yes |
| 9.09 | 132 | 68 | 0 | 264 | 123 | Good | 34 | 11 | No | No |
| 8.77 | 144 | 63 | 11 | 27 | 117 | Medium | 47 | 17 | Yes | Yes |
| 3.90 | 114 | 83 | 0 | 412 | 131 | Bad | 39 | 14 | Yes | No |