41 Introduction to Statistical Learning

The main reference for this introduction to statistical learning is (James et al. 2013): Gareth James, Daniela Witten, Trevor Hastie, Robert Tibshirani (2013) An Introduction to Statistical Learning: with Applications in R, Springer.

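Load the required packages:

library(leaps) # best subset regression

library(ISLR) # package accompanying the reference book

library(glmnet) # ridge regression and lasso

library(tree) # regression and classification trees

library(randomForest) # random forests and bagging

library(MASS)

library(gbm) # boosting

library(e1071) # SVM

41.1 Basic concepts and general steps of statistical learning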

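41.1.1 Basic concepts and methods of statistical learning

Statistical learning is also known as data mining or machine learning. Its main purpose is to use computer algorithms to discover knowledge in large amounts of data. The rapidly growing field of data science relies on statistical learning as a key pillar. The methods are divided into supervised learning and unsupervised learning.

Unsupervised learning includes clustering, market basket analysis, principal component analysis, and so on.

Supervised learning covers the problems addressed in statistics by regression analysis and discriminant analysis; there are now many further methods such as regression trees, classification trees, random forests, lasso, support vector machines, neural networks, Bayesian networks, and ranking algorithms.

Unsupervised learning discovers patterns directly from the data once the data are given. For example, cluster analysis finds clusters and groups in the data, and market basket analysis finds items that tend to occur together (for example, customers who buy beer are also more likely to buy ham sausage).

There are many supervised learning methods. Typically, the data are split into a training sample and a test sample. The dependent variable (numeric or categorical) of the training sample is known, and a regression function is trained from the relationship between the independent variables and the dependent variable in the training sample. This function takes the independent variables as input and outputs a predicted value of the dependent variable.

The trained function may have a simple expression (for example, logistic regression), an expression with a great many parameters (for example, neural networks), or may depend on all the training samples with no closed-form expression (for example, k-nearest-neighbor classification).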

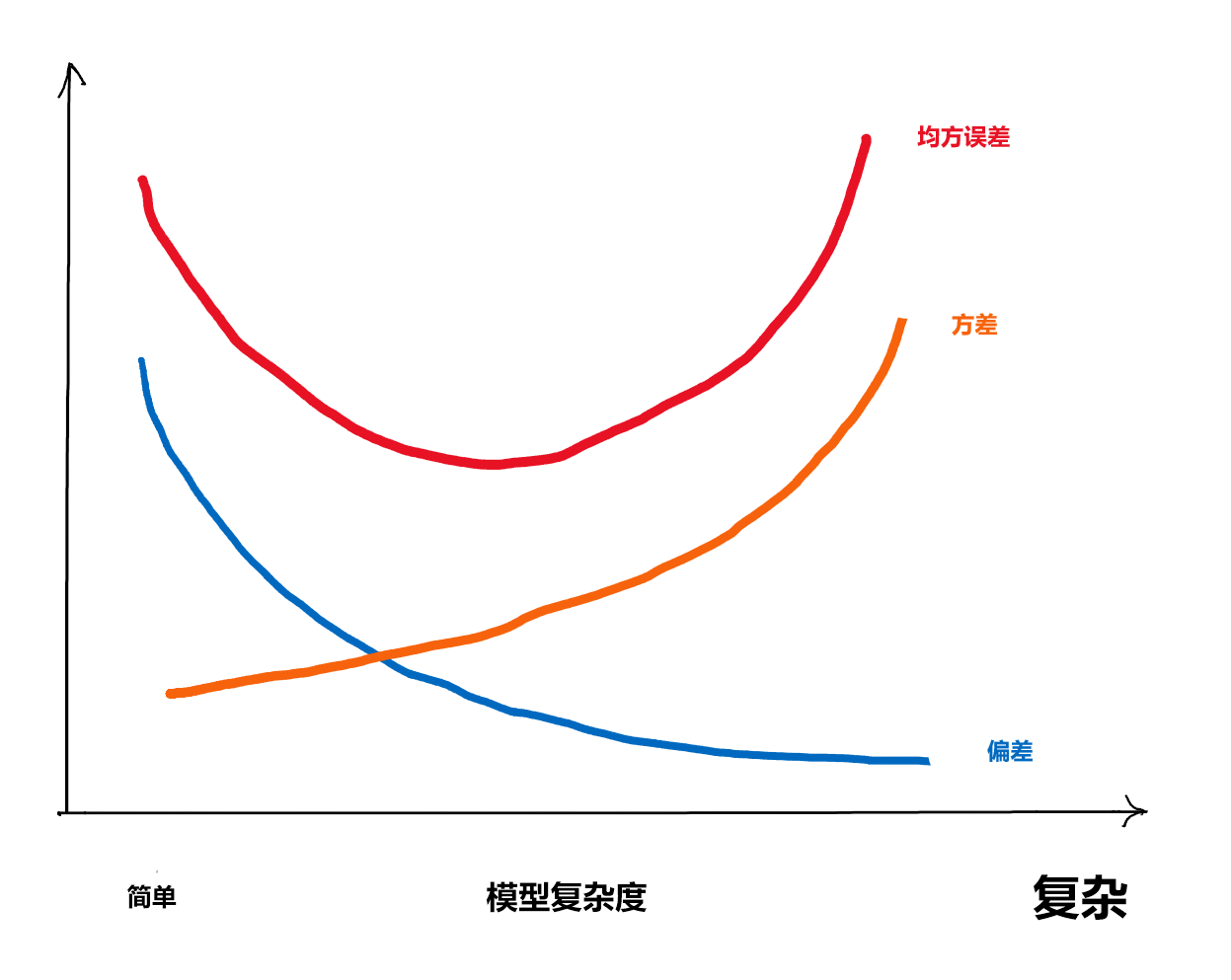

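41.1.2 Bias-variance trade-off

For regression problems, the mean squared error \(E|Ey - \hat y|^2\) is often used to measure accuracy. For classification problems, measures such as classification accuracy are often used. It is easy to see that \(E|Ey - \hat y|^2 = \text{Var}(\hat y) + (E\hat y - E y)^2\), so the mean squared error can be decomposed as \[ \text{MSE} = \text{variance} + \text{bias}^2 . \]

If the regression function is trained only to explain the training sample as well as possible, the estimate will have a large variance, which leads to large errors on the test sample; for this reason the chosen regression function should be as simple as possible.

If the chosen regression function is too simple while the true relationship between the independent and dependent variables is complex, the estimated regression function will have a large bias, which also leads to large errors on the test sample.

So in supervised learning the complexity of the regression function is a key quantity: both too complex and too simple can give poor results, and a compromise has to be found.

In linear regression, complexity is the number of independent variables; in univariate curve fitting, it is the roughness of the curve. When other criteria are similar, a simpler model is more stable and easier to interpret, which is why statistics places particular emphasis on model simplification.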
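The decomposition can be checked numerically. The following is a minimal sketch on simulated data, not taken from the text: the true function `f`, the evaluation point `x0`, the noise level and the polynomial degrees are all assumptions chosen for illustration. It refits polynomials of increasing degree on many simulated training sets and splits the error of the prediction at `x0` into variance and squared bias.

```r
set.seed(1)
f <- function(x) sin(2 * pi * x)   # hypothetical true regression function
x0 <- 0.3                          # point at which predictions are compared
n <- 30; sigma <- 0.3; n_rep <- 500

# Fit a polynomial of the given degree to one simulated training set
# and return its prediction at x0.
predict_once <- function(degree) {
  x <- runif(n)
  y <- f(x) + rnorm(n, sd = sigma)
  fit <- lm(y ~ poly(x, degree))
  unname(predict(fit, newdata = data.frame(x = x0)))
}

# Variance, squared bias and MSE of the prediction over repeated training sets.
decompose <- function(degree) {
  yhat <- replicate(n_rep, predict_once(degree))
  c(degree   = degree,
    variance = mean((yhat - mean(yhat))^2),
    bias2    = (mean(yhat) - f(x0))^2,
    mse      = mean((yhat - f(x0))^2))
}

res <- t(sapply(c(1, 3, 10), decompose))
print(round(res, 4))
```

In each row the `mse` column equals `variance + bias2`. The straight line (degree 1) is dominated by bias and the degree-10 polynomial by variance, which is the trade-off described above.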

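41.1.3 Cross-validation

Even when training (estimating) the regression function from the training sample, the model complexity needs to be chosen appropriately. Considering only the fit to the training data is not enough, because it leads to overfitting.

To measure the prediction error of a model relatively objectively, suppose the training sample has \(n\) observations. Leave out the first observation, build the model with the remaining \(n-1\) observations, then predict the dependent variable of the first observation to obtain an error; doing this for every observation gives \(n\) errors. This is called leave-one-out cross-validation.

Five-fold or ten-fold cross-validation is more commonly used. Suppose the training set has \(n\) observations. Split it into \(10\) equal parts, hold out the first part, combine the other nine parts to build the model, then predict the first part; doing this for every part also gives \(n\) errors. This is called ten-fold cross-validation.

Because the data being predicted were not used to build the model, the error estimate from cross-validation is more accurate than the training error.

The vfold_cv() function of the rsample package can generate such a partition; for each fold, analysis() and assessment() extract the model-building part and the validation part, respectively.

Machine learning functions generally include built-in tuning by cross-validation, so users do not need to partition the data themselves.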
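The ten-fold procedure can be written out by hand in a few lines of base R. This is a minimal sketch on simulated data; the data frame `d`, the linear model and the fold labels `fold_id` are assumptions for illustration, not objects from the text.

```r
set.seed(1)
n <- 100
d <- data.frame(x = runif(n))
d$y <- 1 + 2 * d$x + rnorm(n, sd = 0.5)   # simulated data, for illustration only

k <- 10
fold_id <- sample(rep(seq_len(k), length.out = n))  # random fold label for each row

sq_err <- numeric(n)
for (j in seq_len(k)) {
  held_out <- fold_id == j
  fit <- lm(y ~ x, data = d[!held_out, ])           # build the model on the other k-1 folds
  pred <- predict(fit, newdata = d[held_out, ])     # predict the held-out fold
  sq_err[held_out] <- (d$y[held_out] - pred)^2
}

cv_mse <- mean(sq_err)   # every observation contributes exactly one error
cv_mse
```

Setting `k <- n` turns the same loop into leave-one-out cross-validation.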

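41.2 Analysis of the Hitters data

Examples are used to demonstrate the various statistical learning methods.

41.2.1 Introduction

Consider the Hitters data set in the ISLR package. It contains data on 20 variables for 322 players; the variable of interest is Salary. The variables are:

## [1] "AtBat" "Hits" "HmRun" "Runs" "RBI" "Walks" "Years" "CAtBat" "CHits" "CHmRun" "CRuns" "CRBI" "CWalks" "League" "Division" "PutOuts" "Assists" "Errors" "Salary" "NewLeague"

Detailed variable information for the data set: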

## 'data.frame': 322 obs. of 20 variables:

## $ AtBat : int 293 315 479 496 321 594 185 298 323 401 ...

## $ Hits : int 66 81 130 141 87 169 37 73 81 92 ...

## $ HmRun : int 1 7 18 20 10 4 1 0 6 17 ...

## $ Runs : int 30 24 66 65 39 74 23 24 26 49 ...

## $ RBI : int 29 38 72 78 42 51 8 24 32 66 ...

## $ Walks : int 14 39 76 37 30 35 21 7 8 65 ...

## $ Years : int 1 14 3 11 2 11 2 3 2 13 ...

## $ CAtBat : int 293 3449 1624 5628 396 4408 214 509 341 5206 ...

## $ CHits : int 66 835 457 1575 101 1133 42 108 86 1332 ...

## $ CHmRun : int 1 69 63 225 12 19 1 0 6 253 ...

## $ CRuns : int 30 321 224 828 48 501 30 41 32 784 ...

## $ CRBI : int 29 414 266 838 46 336 9 37 34 890 ...

## $ CWalks : int 14 375 263 354 33 194 24 12 8 866 ...

## $ League : Factor w/ 2 levels "A","N": 1 2 1 2 2 1 2 1 2 1 ...

## $ Division : Factor w/ 2 levels "E","W": 1 2 2 1 1 2 1 2 2 1 ...

## $ PutOuts : int 446 632 880 200 805 282 76 121 143 0 ...

## $ Assists : int 33 43 82 11 40 421 127 283 290 0 ...

## $ Errors : int 20 10 14 3 4 25 7 9 19 0 ...

## $ Salary : num NA 475 480 500 91.5 750 70 100 75 1100 ...

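## $ NewLeague: Factor w/ 2 levels "A","N": 1 2 1 2 2 1 1 1 2 1 ...

We want to use Salary as the dependent variable; check its number of missing values:

## [1] 59

For simplicity, remove the observations with missing values:

## [1] 263 20

41.2.3 Selecting regression predictors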

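41.2.3.1 Best subset selection

The regsubsets() function of the leaps package computes best subset regression. For each candidate number of predictors \(\hat p\), it finds the subset of variables with the smallest residual sum of squares among all subsets of size \(\hat p\), so that only these best subsets for the different \(\hat p\) need to be compared. The comparison can use criteria such as AIC or BIC.

First run the subset search with all predictors allowed: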

regfit.full <- regsubsets(Salary ~ ., data=hit_train, nvmax=19)

reg.summary <- summary(regfit.full)

reg.summary

## Subset selection object

## Call: regsubsets.formula(Salary ~ ., data = hit_train, nvmax = 19)

## 19 Variables (and intercept)

## Forced in Forced out

## AtBat FALSE FALSE

## Hits FALSE FALSE

## HmRun FALSE FALSE

## Runs FALSE FALSE

## RBI FALSE FALSE

## Walks FALSE FALSE

## Years FALSE FALSE

## CAtBat FALSE FALSE

## CHits FALSE FALSE

## CHmRun FALSE FALSE

## CRuns FALSE FALSE

## CRBI FALSE FALSE

## CWalks FALSE FALSE

## LeagueN FALSE FALSE

## DivisionW FALSE FALSE

## PutOuts FALSE FALSE

## Assists FALSE FALSE

## Errors FALSE FALSE

## NewLeagueN FALSE FALSE

## 1 subsets of each size up to 19

## Selection Algorithm: exhaustive

## AtBat Hits HmRun Runs RBI Walks Years CAtBat CHits CHmRun CRuns CRBI CWalks LeagueN DivisionW PutOuts Assists Errors NewLeagueN

## 1 ( 1 ) " " " " " " " " " " " " " " " " " " " " " " "*" " " " " " " " " " " " " " "

## 2 ( 1 ) " " "*" " " " " " " " " " " " " " " " " " " "*" " " " " " " " " " " " " " "

## 3 ( 1 ) " " "*" " " " " " " " " " " " " " " " " " " "*" " " " " "*" " " " " " " " "

## 4 ( 1 ) " " "*" " " " " " " " " " " " " " " " " " " "*" " " " " "*" "*" " " " " " "

## 5 ( 1 ) "*" "*" " " " " " " " " " " " " " " " " " " "*" " " " " "*" "*" " " " " " "

## 6 ( 1 ) "*" "*" " " " " " " "*" " " " " " " " " " " "*" " " " " "*" "*" " " " " " "

## 7 ( 1 ) "*" "*" " " " " " " "*" " " " " " " " " " " "*" "*" " " "*" "*" " " " " " "

## 8 ( 1 ) "*" "*" " " " " " " "*" " " " " " " " " "*" "*" "*" " " "*" "*" " " " " " "

## 9 ( 1 ) "*" "*" " " " " " " "*" " " " " " " "*" "*" " " "*" " " "*" "*" "*" " " " "

## 10 ( 1 ) "*" "*" " " " " " " "*" " " "*" " " " " "*" "*" "*" " " "*" "*" "*" " " " "

## 11 ( 1 ) "*" "*" " " " " " " "*" " " "*" " " " " "*" "*" "*" "*" "*" "*" "*" " " " "

## 12 ( 1 ) "*" "*" " " "*" " " "*" " " "*" " " " " "*" "*" "*" "*" "*" "*" "*" " " " "

## 13 ( 1 ) "*" "*" " " "*" " " "*" "*" "*" " " " " "*" "*" "*" "*" "*" "*" "*" " " " "

## 14 ( 1 ) "*" "*" " " "*" "*" "*" "*" "*" " " " " "*" "*" "*" "*" "*" "*" "*" " " " "

## 15 ( 1 ) "*" "*" " " "*" "*" "*" "*" "*" " " " " "*" "*" "*" "*" "*" "*" "*" "*" " "

## 16 ( 1 ) "*" "*" " " "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" " " " "

## 17 ( 1 ) "*" "*" " " "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" " "

## 18 ( 1 ) "*" "*" " " "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*"

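## 19 ( 1 ) "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*" "*"

Here nvmax=19 allows all predictors to enter; by default regsubsets() limits the maximum subset size (nvmax=8).

Each row of the table above gives the best subset for a fixed \(\hat p\).

Compare the BIC values of these best models: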

## [1] -63.90242 -86.59469 -90.68877 -93.51559 -96.29865 -96.35699 -95.24328 -94.33547 -91.79438 -89.31463 -85.07463 -80.40798 -75.33025 -70.12122 -64.82873 -59.53306 -54.25553 -48.92352 -43.58870

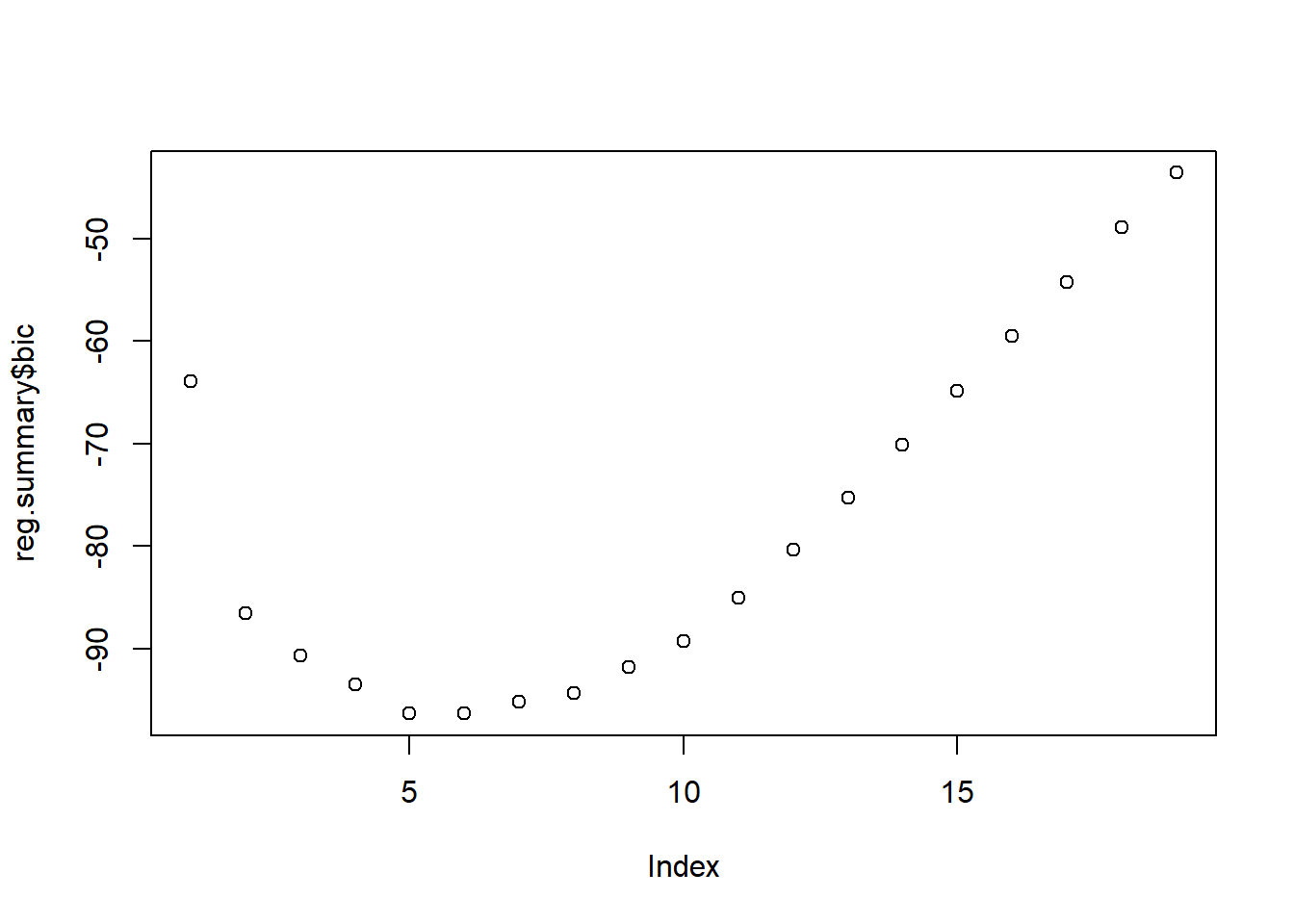

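Figure 41.1: BIC of best subset regression for the Hitters data

The BIC values at \(\hat p=5\) and \(\hat p=6\) are close and both low; take \(\hat p=6\), where BIC is smallest.

Use coef() with id=6 to get the best subset of size six:

## (Intercept) AtBat Hits Walks CRBI DivisionW PutOuts

## 149.0951521 -2.1064928 8.2070703 3.2517011 0.6351933 -136.2935330 0.2646021

This selects the predictor subset with the smallest BIC.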

41.2.3.2 Stepwise regression

After fitting the full regression with lm(), pass the result to stats::step() to run stepwise regression. For example:

##

## Call:

## lm(formula = Salary ~ ., data = hit_train)

##

## Residuals:

## Min 1Q Median 3Q Max

## -918.96 -183.16 -35.62 138.30 1799.45

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 241.67291 109.57064 2.206 0.02862 *

## AtBat -2.48494 0.76899 -3.231 0.00145 **

## Hits 8.15485 2.84403 2.867 0.00461 **

## HmRun -0.37929 7.64779 -0.050 0.96050

## Runs -2.12109 3.59273 -0.590 0.55564

## RBI 0.76668 3.11770 0.246 0.80602

## Walks 6.27568 2.18144 2.877 0.00448 **

## Years -7.18987 15.10209 -0.476 0.63457

## CAtBat -0.14891 0.16372 -0.909 0.36425

## CHits 0.23486 0.78151 0.301 0.76411

## CHmRun 0.50158 1.97716 0.254 0.80002

## CRuns 1.11476 0.92330 1.207 0.22881

## CRBI 0.70183 0.84282 0.833 0.40606

## CWalks -0.83644 0.37968 -2.203 0.02881 *

## LeagueN 47.02170 94.26262 0.499 0.61848

## DivisionW -120.60207 48.51038 -2.486 0.01379 *

## PutOuts 0.26292 0.09121 2.883 0.00440 **

## Assists 0.38272 0.26915 1.422 0.15670

## Errors -1.28251 5.36074 -0.239 0.81118

## NewLeagueN -7.16809 94.61668 -0.076 0.93969

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 336.8 on 188 degrees of freedom

## Multiple R-squared: 0.5146, Adjusted R-squared: 0.4655

## F-statistic: 10.49 on 19 and 188 DF, p-value: < 2.2e-16

## Start: AIC=2439.89

## Salary ~ AtBat + Hits + HmRun + Runs + RBI + Walks + Years +

## CAtBat + CHits + CHmRun + CRuns + CRBI + CWalks + League +

## Division + PutOuts + Assists + Errors + NewLeague

##

## Df Sum of Sq RSS AIC

## - HmRun 1 279 21327132 2437.9

## - NewLeague 1 651 21327504 2437.9

## - Errors 1 6493 21333346 2437.9

## - RBI 1 6860 21333713 2438.0

## - CHmRun 1 7301 21334153 2438.0

## - CHits 1 10245 21337098 2438.0

## - Years 1 25712 21352565 2438.1

## - League 1 28228 21355081 2438.2

## - Runs 1 39540 21366393 2438.3

## - CRBI 1 78662 21405515 2438.7

## - CAtBat 1 93836 21420689 2438.8

## - CRuns 1 165367 21492220 2439.5

## <none> 21326853 2439.9

## - Assists 1 229372 21556225 2440.1

## - CWalks 1 550572 21877425 2443.2

## - Division 1 701147 22028000 2444.6

## - Hits 1 932679 22259532 2446.8

## - Walks 1 938864 22265716 2446.8

## - PutOuts 1 942588 22269441 2446.9

## - AtBat 1 1184571 22511424 2449.1

##

## Step: AIC=2437.89

## Salary ~ AtBat + Hits + Runs + RBI + Walks + Years + CAtBat +

## CHits + CHmRun + CRuns + CRBI + CWalks + League + Division +

## PutOuts + Assists + Errors + NewLeague

##

## Df Sum of Sq RSS AIC

## - NewLeague 1 566 21327698 2435.9

## - Errors 1 6443 21333575 2436.0

## - CHmRun 1 7539 21334671 2436.0

## - CHits 1 9986 21337118 2436.0

## - RBI 1 12495 21339627 2436.0

## - Years 1 25478 21352610 2436.1

## - League 1 27950 21355082 2436.2

## - Runs 1 53429 21380561 2436.4

## - CAtBat 1 94340 21421471 2436.8

## - CRBI 1 96689 21423821 2436.8

## - CRuns 1 185367 21512499 2437.7

## <none> 21327132 2437.9

## - Assists 1 235593 21562725 2438.2

## - CWalks 1 575407 21902539 2441.4

## - Division 1 720408 22047540 2442.8

## - PutOuts 1 947076 22274208 2444.9

## - Walks 1 1002501 22329633 2445.4

## - Hits 1 1073306 22400438 2446.1

## - AtBat 1 1185325 22512457 2447.1

##

## Step: AIC=2435.9

## Salary ~ AtBat + Hits + Runs + RBI + Walks + Years + CAtBat +

## CHits + CHmRun + CRuns + CRBI + CWalks + League + Division +

## PutOuts + Assists + Errors

##

## Df Sum of Sq RSS AIC

## - Errors 1 6155 21333853 2434.0

## - CHmRun 1 7339 21335037 2434.0

## - CHits 1 9541 21337239 2434.0

## - RBI 1 12817 21340515 2434.0

## - Years 1 25398 21353097 2434.2

## - Runs 1 53335 21381033 2434.4

## - League 1 75071 21402769 2434.6

## - CAtBat 1 93812 21421510 2434.8

## - CRBI 1 98282 21425981 2434.9

## - CRuns 1 190610 21518308 2435.8

## <none> 21327698 2435.9

## - Assists 1 236010 21563708 2436.2

## - CWalks 1 577288 21904986 2439.4

## - Division 1 720061 22047759 2440.8

## - PutOuts 1 948064 22275762 2442.9

## - Walks 1 1003786 22331484 2443.5

## - Hits 1 1091940 22419639 2444.3

## - AtBat 1 1223590 22551289 2445.5

##

## Step: AIC=2433.96

## Salary ~ AtBat + Hits + Runs + RBI + Walks + Years + CAtBat +

## CHits + CHmRun + CRuns + CRBI + CWalks + League + Division +

## PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## - CHmRun 1 6724 21340577 2432.0

## - CHits 1 7824 21341677 2432.0

## - RBI 1 11220 21345072 2432.1

## - Years 1 24104 21357956 2432.2

## - Runs 1 57526 21391379 2432.5

## - League 1 70922 21404775 2432.7

## - CAtBat 1 90644 21424497 2432.8

## - CRBI 1 100984 21434837 2432.9

## - CRuns 1 201382 21535235 2433.9

## <none> 21333853 2434.0

## - Assists 1 313674 21647527 2435.0

## - CWalks 1 593539 21927392 2437.7

## - Division 1 722945 22056798 2438.9

## - PutOuts 1 942739 22276592 2440.9

## - Walks 1 1040700 22374553 2441.9

## - Hits 1 1161864 22495717 2443.0

## - AtBat 1 1281359 22615212 2444.1

##

## Step: AIC=2432.03

## Salary ~ AtBat + Hits + Runs + RBI + Walks + Years + CAtBat +

## CHits + CRuns + CRBI + CWalks + League + Division + PutOuts +

## Assists

##

## Df Sum of Sq RSS AIC

## - CHits 1 2192 21342770 2430.1

## - RBI 1 12586 21353163 2430.2

## - Years 1 24971 21365548 2430.3

## - Runs 1 63054 21403631 2430.6

## - League 1 71042 21411619 2430.7

## - CAtBat 1 86281 21426858 2430.9

## <none> 21340577 2432.0

## - Assists 1 306971 21647548 2433.0

## - CRuns 1 433335 21773912 2434.2

## - CWalks 1 631568 21972145 2436.1

## - Division 1 716579 22057157 2436.9

## - PutOuts 1 954537 22295114 2439.1

## - CRBI 1 1001899 22342476 2439.6

## - Walks 1 1036407 22376984 2439.9

## - Hits 1 1187105 22527683 2441.3

## - AtBat 1 1283747 22624325 2442.2

##

## Step: AIC=2430.05

## Salary ~ AtBat + Hits + Runs + RBI + Walks + Years + CAtBat +

## CRuns + CRBI + CWalks + League + Division + PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## - RBI 1 13190 21355960 2428.2

## - Years 1 29638 21372407 2428.3

## - League 1 72742 21415512 2428.8

## - Runs 1 81521 21424290 2428.8

## <none> 21342770 2430.1

## - CAtBat 1 230265 21573034 2430.3

## - Assists 1 307170 21649939 2431.0

## - CRuns 1 713710 22056479 2434.9

## - Division 1 715586 22058356 2434.9

## - CWalks 1 929774 22272544 2436.9

## - PutOuts 1 978714 22321484 2437.4

## - CRBI 1 1002770 22345540 2437.6

## - Walks 1 1086910 22429680 2438.4

## - AtBat 1 1599684 22942453 2443.1

## - Hits 1 1779918 23122687 2444.7

##

## Step: AIC=2428.18

## Salary ~ AtBat + Hits + Runs + Walks + Years + CAtBat + CRuns +

## CRBI + CWalks + League + Division + PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## - Years 1 26692 21382651 2426.4

## - League 1 70307 21426266 2426.9

## - Runs 1 73753 21429713 2426.9

## <none> 21355960 2428.2

## - CAtBat 1 249406 21605365 2428.6

## - Assists 1 295538 21651497 2429.0

## - CRuns 1 702284 22058244 2432.9

## - Division 1 734085 22090044 2433.2

## - CWalks 1 937348 22293308 2435.1

## - PutOuts 1 1002301 22358261 2435.7

## - Walks 1 1086003 22441962 2436.5

## - CRBI 1 1439193 22795152 2439.7

## - AtBat 1 1640165 22996124 2441.6

## - Hits 1 1787801 23143761 2442.9

##

## Step: AIC=2426.43

## Salary ~ AtBat + Hits + Runs + Walks + CAtBat + CRuns + CRBI +

## CWalks + League + Division + PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## - Runs 1 69079 21451730 2425.1

## - League 1 87548 21470199 2425.3

## <none> 21382651 2426.4

## - Assists 1 314039 21696690 2427.5

## - CAtBat 1 492567 21875218 2429.2

## - Division 1 725175 22107827 2431.4

## - CRuns 1 880113 22262764 2432.8

## - CWalks 1 988001 22370652 2433.8

## - PutOuts 1 1049648 22432299 2434.4

## - Walks 1 1079896 22462547 2434.7

## - CRBI 1 1420036 22802687 2437.8

## - AtBat 1 1614330 22996981 2439.6

## - Hits 1 1772982 23155633 2441.0

##

## Step: AIC=2425.11

## Salary ~ AtBat + Hits + Walks + CAtBat + CRuns + CRBI + CWalks +

## League + Division + PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## - League 1 113492 21565223 2424.2

## <none> 21451730 2425.1

## - Assists 1 399827 21851557 2426.9

## - CAtBat 1 428452 21880182 2427.2

## - Division 1 727359 22179089 2430.0

## - CRuns 1 811308 22263038 2430.8

## - CWalks 1 947776 22399506 2432.1

## - Walks 1 1029714 22481444 2432.9

## - PutOuts 1 1153252 22604982 2434.0

## - CRBI 1 1434607 22886337 2436.6

## - AtBat 1 1793723 23245454 2439.8

## - Hits 1 1825947 23277677 2440.1

##

## Step: AIC=2424.2

## Salary ~ AtBat + Hits + Walks + CAtBat + CRuns + CRBI + CWalks +

## Division + PutOuts + Assists

##

## Df Sum of Sq RSS AIC

## <none> 21565223 2424.2

## - CAtBat 1 366456 21931678 2425.7

## - Assists 1 423017 21988240 2426.2

## - CRuns 1 756041 22321264 2429.4

## - Division 1 762166 22327389 2429.4

## - CWalks 1 998625 22563847 2431.6

## - Walks 1 1124976 22690198 2432.8

## - PutOuts 1 1245275 22810497 2433.9

## - CRBI 1 1393594 22958817 2435.2

## - Hits 1 1785448 23350671 2438.8

## - AtBat 1 1830070 23395292 2439.2

##

## Call:

## lm(formula = Salary ~ AtBat + Hits + Walks + CAtBat + CRuns +

## CRBI + CWalks + Division + PutOuts + Assists, data = hit_train)

##

## Coefficients:

## (Intercept) AtBat Hits Walks CAtBat CRuns CRBI CWalks DivisionW PutOuts Assists

## 235.9278 -2.5863 7.7364 5.9827 -0.1210 1.2468 0.9302 -0.9100 -123.4092 0.2893 0.3770

In the end, 10 predictors are retained.
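The code that produced the trace above is not shown. A minimal sketch of the same workflow, using the built-in mtcars data as a stand-in for hit_train (an assumption made so that the example runs without extra packages), might look like this:

```r
full <- lm(mpg ~ ., data = mtcars)   # full regression on a built-in data set
sel <- step(full, trace = 0)         # backward elimination by AIC, trace suppressed
formula(sel)                         # the retained predictors

# step() never accepts a move that increases AIC, so the selected model
# has an AIC no larger than that of the full model.
c(full = AIC(full), selected = AIC(sel))

# k = log(n) replaces the AIC penalty by the BIC penalty.
sel_bic <- step(full, k = log(nrow(mtcars)), trace = 0)
length(coef(sel_bic)) - 1            # number of retained predictors under BIC
```

With no `scope` argument, step() starts from the supplied model and only removes terms, which matches the trace above, where one variable is dropped per step until no removal lowers the AIC.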

41.2.3.4 Choosing the best subset with 10-fold cross-validation

The following program assigns a fold number to each row of the data:
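The code for this step is not shown. The later loop uses `hit_fold$splits` with analysis() and assessment(), which suggests that rsample's vfold_cv() was used; that call is given only as a comment below because it is an assumption. The runnable part is a base-R equivalent on the stand-in data set mtcars. The loop also calls predict() on a regsubsets object, which, as in the ISLR lab, has to be defined by the user; the helper below is modeled on that lab and is not part of leaps.

```r
# Assumed form of the omitted step (requires the rsample package):
#   hit_fold <- rsample::vfold_cv(hit_train, v = 10)

# Base-R equivalent: give every row a fold number between 1 and 10.
set.seed(1)
d <- mtcars                                    # stand-in for hit_train
fold_id <- sample(rep(1:10, length.out = nrow(d)))
table(fold_id)                                 # fold sizes differ by at most one

# predict() method for regsubsets objects, modeled on the ISLR lab helper.
predict.regsubsets <- function(object, newdata, id, ...) {
  form <- as.formula(object$call[[2]])         # formula passed to regsubsets()
  mat <- model.matrix(form, newdata)           # design matrix for the new data
  coefi <- coef(object, id = id)               # coefficients of the best size-id model
  drop(mat[, names(coefi), drop = FALSE] %*% coefi)
}
```

Once `predict.regsubsets` is defined, `predict(best.fit, d_ass, id = i)` dispatches to it through the usual S3 mechanism.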

Next, each of the 10 folds is used once as the test set, giving the mean squared error for each subset size:

cv.errors <- matrix( as.numeric(NA), 10, 19, dimnames=list(NULL, paste(1:19)) )

for(j in 1:10){ # fold

d_ana <- analysis(hit_fold$splits[[j]]) # model-building part of fold j

d_ass <- assessment(hit_fold$splits[[j]]) # held-out part of fold j

best.fit <- regsubsets(Salary ~ ., data = d_ana, nvmax=19)

for(i in 1:19){ # subset size

pred <- predict(best.fit, d_ass, id=i)

cv.errors[j, i] <- mean( (d_ass[["Salary"]] - pred)^2 )

}

}

head(cv.errors)

## 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19

## [1,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

## [2,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

## [3,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

## [4,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

## [5,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

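## [6,] 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

cv.errors is a \(10\times 19\) matrix: each row corresponds to one fold used as the test set, each column to a subset size, and each element is a test mean squared error. Note that all rows of the output shown above are identical, which is what results when the same split (splits[[1]]) is used in every iteration; each iteration should use splits[[j]], which gives a different row for each fold.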

Averaging the 10 elements of each column gives the mean MSE for each subset size:

## 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19

## 277846.6 201416.7 292984.6 236472.5 250270.8 201979.7 197639.6 165602.5 178146.8 174549.5 178469.3 183560.7 185073.1 181778.8 182629.5 181893.4 181501.3 181587.7 181638.5

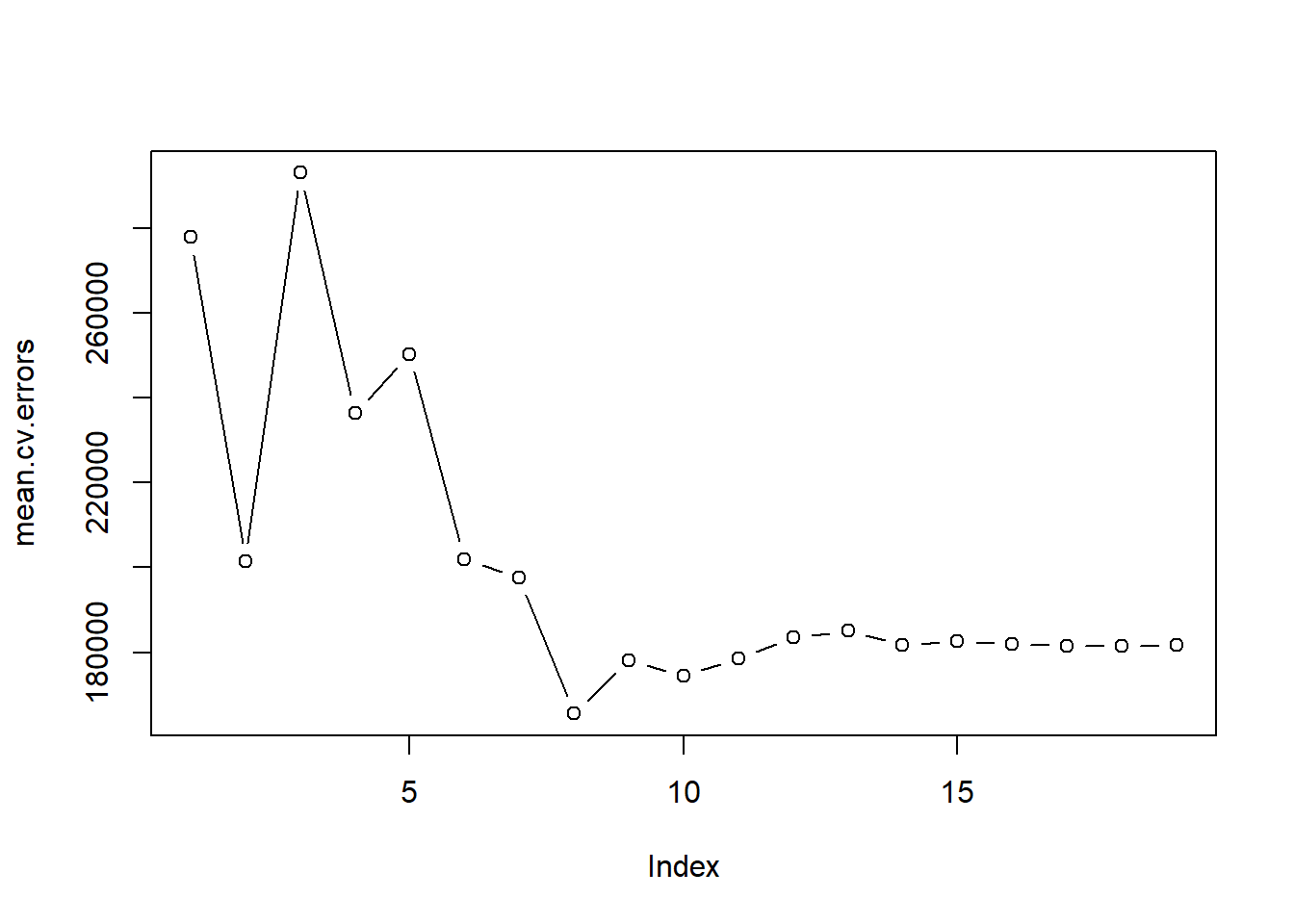

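Figure 41.2: CV mean squared error for the Hitters data

The best subset size found this way is 8. Note that users generally do not need to carry out this kind of cross-validated tuning by hand; machine learning functions usually have it built in.

After finding the best subset size this way, the model can be refitted on the full data set with the subset size fixed at 8:

## (Intercept) AtBat Hits Walks CHmRun CRuns CWalks DivisionW PutOuts

## 130.9691577 -2.1731903 7.3582935 6.0037597 1.2339718 0.9651349 -0.8323788 -117.9657795 0.2908431

In fact, a training/test split and cross-validation are often used together: set aside a fixed, relatively small test set that is not used for subset selection, choose the best subset by cross-validation on the training set, then evaluate the final model on the test set.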

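41.2.4 Ridge regression

When there are too many predictors, model complexity is high, overfitting may occur, and the model is unstable.

One remedy is to impose a quadratic penalty on large model coefficients, turning the least squares problem into a penalized least squares problem with a quadratic penalty term: \[\begin{aligned} \min\; \sum_{i=1}^n \left( y_i - \beta_0 - \beta_1 x_{i1} - \dots - \beta_p x_{ip} \right)^2 + \lambda \sum_{j=1}^p \beta_j^2 . \end{aligned}\] The resulting coefficients are shrunk toward zero compared with the ordinary least squares coefficients, but the solution is more stable and the collinearity problem is avoided.

In fact, compared with the ordinary least squares solution \(\hat{\boldsymbol\beta} = (\boldsymbol X^T \boldsymbol X)^{-1} \boldsymbol X^T \boldsymbol Y\) of the linear model \(\boldsymbol Y = \boldsymbol X \boldsymbol\beta + \boldsymbol\varepsilon\), the solution of the ridge regression problem is \[ \tilde{\boldsymbol\beta} = (\boldsymbol X^T \boldsymbol X + s \boldsymbol I)^{-1} \boldsymbol X^T \boldsymbol Y \] where \(\boldsymbol I\) is the identity matrix and \(s>0\) is related to \(\lambda\).

\(\lambda\) is called the tuning parameter; a larger \(\lambda\) corresponds to lower model complexity. A suitable choice of \(\lambda\) strikes a balance between variance and bias and thereby reduces prediction error.

Because of differing units, the data should be standardized when the predictors are not on comparable scales.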
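The closed-form solution can be tried directly in base R. The sketch below uses simulated, standardized predictors with two nearly collinear columns and a centered response, so that no intercept is needed; the data and the helper `ridge_coef()` are assumptions for illustration and do not reproduce how glmnet computes its solution.

```r
set.seed(1)
n <- 50; p <- 3
X <- scale(matrix(rnorm(n * p), n, p))       # standardized predictors
X[, 2] <- scale(X[, 1] + 0.05 * rnorm(n))    # column 2 nearly collinear with column 1
y <- drop(X %*% c(2, 0, -1) + rnorm(n))
y <- y - mean(y)                             # centering removes the intercept

# Ridge solution (X'X + s I)^{-1} X'y; s = 0 gives ordinary least squares.
ridge_coef <- function(s) {
  drop(solve(crossprod(X) + s * diag(p), crossprod(X, y)))
}

round(rbind(ols = ridge_coef(0), s_1 = ridge_coef(1), s_10 = ridge_coef(10)), 3)
```

The squared length of the coefficient vector decreases as `s` grows, and the two collinear columns, whose least squares coefficients tend to be unstable, receive more moderate values.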

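Ridge regression is computed with the R package glmnet. In glmnet(), setting alpha=0 performs ridge regression. The lambda= argument specifies a grid of tuning parameters at which the ridge regression is computed. coef() extracts the coefficient estimates for the different tuning parameters from the fit; the result is a matrix with one column per tuning parameter.

We continue to use da_hit, the Hitters data set with missing values removed.

The following program extracts the design matrix and the dependent variable:

Ridge regression involves choosing the tuning parameter \(\lambda\); for plotting, first choose a grid of \(\lambda\) values:

Compute the ridge regression on this grid using all the data; note that glmnet() accepts multiple values of \(\lambda\):

## [1] 20 100

glmnet() standardizes the data by default. The 20 rows of the coef() matrix are the intercept and the 19 coefficients, and the 100 columns are the tuning parameter values.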
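The code for these steps is not shown. A minimal sketch that is consistent with the reported dimensions is given below; the grid `10^seq(10, -2, length.out = 100)` is the one used in the ISLR lab and is an assumption here, as is building `da_hit` with na.omit(). The block only runs when the glmnet and ISLR packages are installed.

```r
if (requireNamespace("glmnet", quietly = TRUE) &&
    requireNamespace("ISLR", quietly = TRUE)) {
  da_hit <- na.omit(ISLR::Hitters)               # drop rows with missing Salary
  x <- model.matrix(Salary ~ ., da_hit)[, -1]    # design matrix without the intercept column
  y <- da_hit$Salary
  grid <- 10^seq(10, -2, length.out = 100)       # assumed grid of 100 lambda values
  ridge.mod <- glmnet::glmnet(x, y, alpha = 0, lambda = grid)
  print(dim(coef(ridge.mod)))                    # one row per coefficient, one column per lambda
}
```

model.matrix() expands the factors League, Division and NewLeague into dummy columns, which is why the fitted coefficients are named LeagueN, DivisionW and NewLeagueN.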

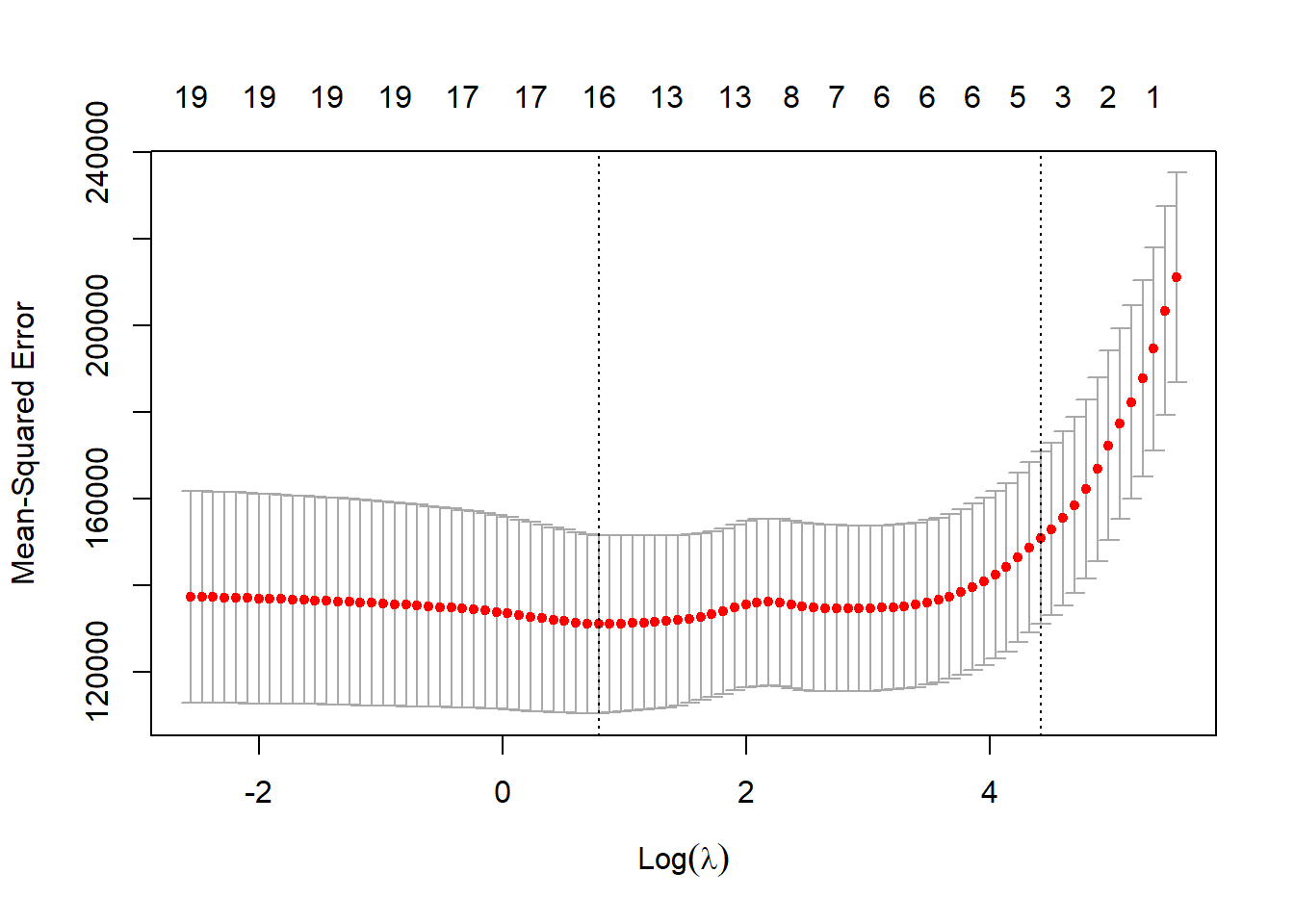

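41.2.4.1 Choosing the tuning parameter by 10-fold cross-validation

Still using the training set only, cross-validation is carried out within the training set. The cv.glmnet() function performs the cross-validation.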

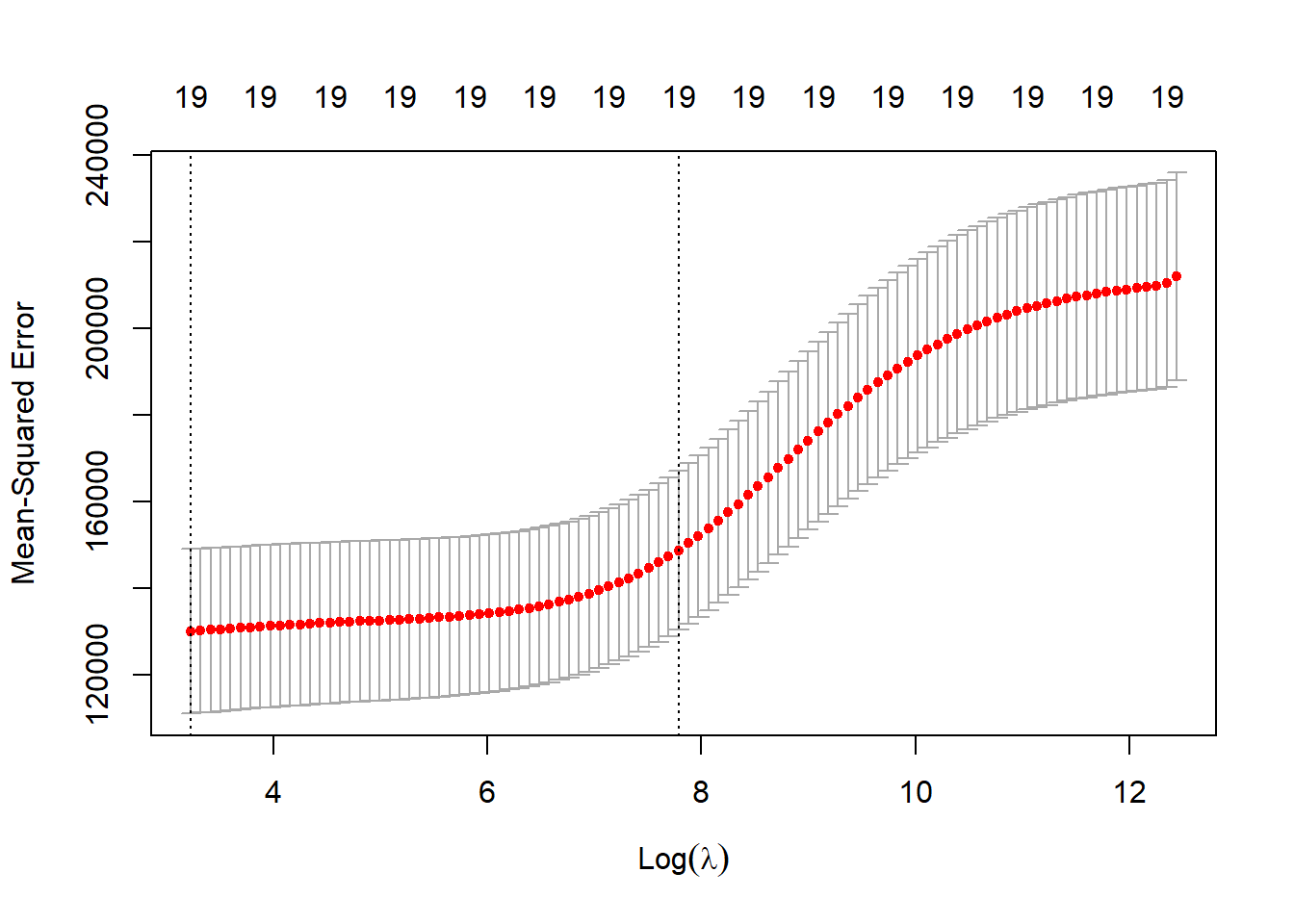

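Figure 41.3: Choosing the ridge regression parameter for the Hitters data

This gives the optimal tuning parameter \(\lambda=\) 25.2283126. Predict on the test set with the optimal tuning parameter and compute the prediction mean squared error:

ridge.pred <- predict(ridge.mod, s = bestlam, newx = model.matrix(Salary ~ ., hit_test)[,-1])

mean( (ridge.pred - hit_test$Salary)^2 )

## [1] 57954.62

Finally, fit the model on the full data set with the selected tuning parameter to obtain the corresponding ridge regression coefficient estimates: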

x <- model.matrix(Salary ~ ., da_hit)[,-1]

y <- da_hit$Salary

out <- glmnet(x, y, alpha=0)

predict(out, type='coefficients', s=bestlam)[1:20,]

## (Intercept) AtBat Hits HmRun Runs RBI Walks Years CAtBat CHits CHmRun CRuns CRBI CWalks LeagueN DivisionW PutOuts Assists Errors NewLeagueN

## 8.112693e+01 -6.815959e-01 2.772312e+00 -1.365680e+00 1.014826e+00 7.130224e-01 3.378558e+00 -9.066800e+00 -1.199478e-03 1.361029e-01 6.979958e-01 2.958896e-01 2.570711e-01 -2.789666e-01 5.321272e+01 -1.228345e+02 2.638876e-01 1.698796e-01 -3.685645e+00 -1.810510e+01

41.2.5 Lasso regression

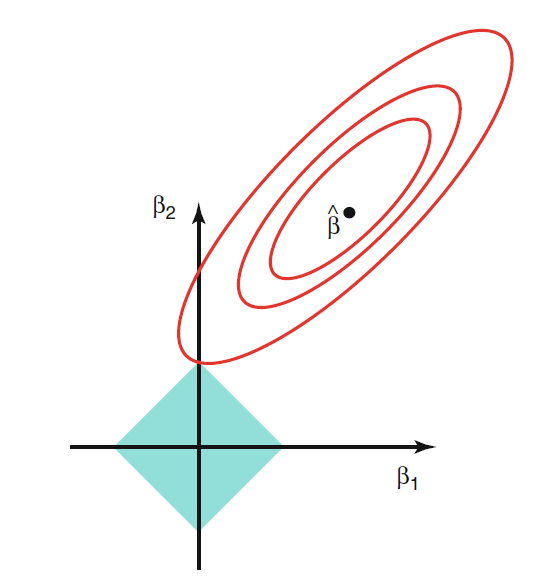

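Another penalty on the regression coefficients is the \(L_1\) penalty: \[\begin{align} \min\; \sum_{i=1}^n \left( y_i - \beta_0 - \beta_1 x_{i1} - \dots - \beta_p x_{ip} \right)^2 + \lambda \sum_{j=1}^p |\beta_j| . \tag{41.1} \end{align}\] Remarkably, with a suitable choice of the tuning parameter \(\lambda\), some regression coefficients become exactly zero, which both shrinks the coefficients in absolute value and selects a subset of important variables.

In fact, (41.1) is equivalent to the constrained minimization problem \[\begin{aligned} & \min\; \sum_{i=1}^n \left( y_i - \beta_0 - \beta_1 x_{i1} - \dots - \beta_p x_{ip} \right)^2 \quad \text{s.t.} \\ & \sum_{j=1}^p |\beta_j| \leq s \end{aligned}\] where \(s\) and \(\lambda\) are in one-to-one correspondence. The constraint region is a convex set with vertices and the objective function is quadratic, so the minimum is often attained at a vertex of the constraint region; these vertices are points at which some coordinates are zero. See Figure 41.4.

Figure 41.4: Illustration of the lasso constrained optimization problem

For each tuning parameter \(\lambda\), the corresponding solution of (41.1) is needed; denote it \(\hat{\boldsymbol\beta}(\lambda)\). Fortunately, it is not necessary to solve the minimization problem (41.1) separately for every \(\lambda\): there are clever algorithms that make the total computation comparable to solving a single least squares problem.

Usually a grid of \(\lambda\) values is chosen and the penalized regression coefficients are computed at each value. Cross-validation is used to estimate the prediction mean squared error, and the tuning parameter that minimizes the cross-validated MSE is selected (R functions generally offer this as an option).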
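Why the \(L_1\) penalty produces exact zeros is easiest to see in a special case. The sketch below assumes an orthonormal design (\(\boldsymbol X^T \boldsymbol X = \boldsymbol I\)) and no intercept, which is a simplification not made in the text. In that case problem (41.1) separates by coordinate and its solution is the soft-thresholded least squares estimate, whereas the quadratic penalty only rescales the estimate.

```r
set.seed(1)
n <- 100; p <- 5
X <- qr.Q(qr(matrix(rnorm(n * p), n, p)))    # columns are orthonormal: t(X) %*% X = I
beta <- c(3, -2, 0.3, 0, 0)                  # hypothetical true coefficients
y <- drop(X %*% beta + rnorm(n, sd = 0.5))

b_ols <- drop(crossprod(X, y))               # least squares solution when t(X) %*% X = I

# Soft thresholding: shrink by lambda / 2 and set to zero what falls below it.
soft <- function(b, lambda) sign(b) * pmax(abs(b) - lambda / 2, 0)

lambda <- 2
b_lasso <- soft(b_ols, lambda)               # minimizes RSS + lambda * sum(abs(b))
b_ridge <- b_ols / (1 + lambda)              # minimizes RSS + lambda * sum(b^2)
round(rbind(ols = b_ols, lasso = b_lasso, ridge = b_ridge), 3)

# Objective (41.1) without intercept, used to check that b_lasso is a minimizer.
obj <- function(b) sum((y - X %*% b)^2) + lambda * sum(abs(b))
c(at_ols = obj(b_ols), at_lasso = obj(b_lasso))
```

Every least squares coefficient with absolute value at most `lambda / 2` becomes exactly zero under the lasso, while the ridge coefficients are all scaled by the same factor and stay nonzero.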

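The lasso is computed with the R package glmnet. In glmnet(), setting alpha=1 performs the lasso. The lambda= argument specifies a grid of tuning parameters, and the lasso returns the results for these values. Calling plot() on the fit shows how the coefficient estimates change with the tuning parameter.

Again use glmnet() from the glmnet package to compute the lasso, specifying a grid of tuning parameters (reusing the earlier grid):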

x <- model.matrix(Salary ~ ., hit_train)[,-1]

y <- hit_train$Salary

lasso.mod <- glmnet(x, y, alpha=1, lambda=grid)

plot(lasso.mod)## Warning in regularize.values(x, y, ties, missing(ties), na.rm = na.rm): collapsing to unique 'x' values

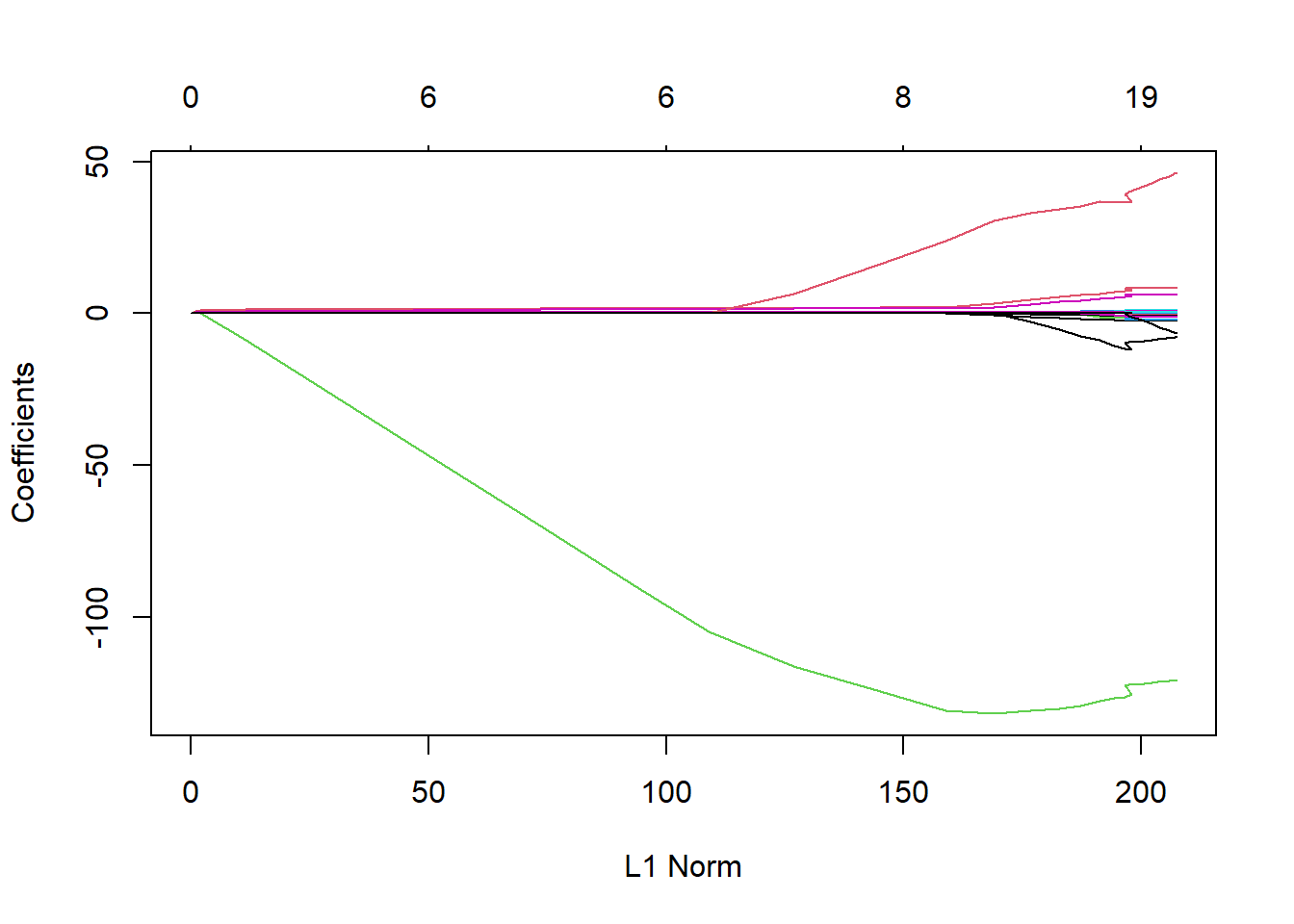

图41.5: Hitters数据lasso轨迹

对lasso结果使用plot()函数可以绘制延调节参数网格变化的各回归系数估计,横坐标不是调节参数而是调节参数对应的系数绝对值和,

可以看出随着系数绝对值和增大,实际是调节参数变小,

更多地自变量进入模型。

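41.2.5.1 Estimating the tuning parameter by cross-validation

Following the earlier split into training and test sets, use only the training data for cross-validation to estimate the optimal tuning parameter:

## [1] 2.19423

With the tuning parameter estimated, compute the prediction mean squared error on the test set: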

lasso.pred <- predict(

lasso.mod, s = bestlam,

newx = model.matrix(Salary ~ ., hit_test)[,-1])

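mean( (lasso.pred - hit_test$Salary)^2 )

## [1] 58582.15

This is slightly worse than the ridge regression result (57954.62).

To make full use of the data, fit the model on the full data set with the optimal tuning parameter obtained above: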

x <- model.matrix(Salary ~ ., da_hit)[,-1]

y <- da_hit$Salary

out <- glmnet(x, y, alpha=1, lambda=grid)

lasso.coef <- predict(

out, type='coefficients', s=bestlam)[1:20,]

lasso.coef

## (Intercept) AtBat Hits HmRun Runs RBI Walks Years CAtBat CHits CHmRun CRuns CRBI CWalks LeagueN DivisionW PutOuts Assists Errors NewLeagueN

## 1.348925e+02 -1.689582e+00 5.971182e+00 9.734402e-02 0.000000e+00 0.000000e+00 4.978211e+00 -1.019167e+01 -9.794493e-05 0.000000e+00 5.650266e-01 7.036826e-01 3.867695e-01 -5.851131e-01 3.305686e+01 -1.193420e+02 2.760478e-01 2.008473e-01 -2.277618e+00 0.000000e+00

## (Intercept) AtBat Hits HmRun Walks Years CAtBat CHmRun CRuns CRBI CWalks LeagueN DivisionW PutOuts Assists Errors

## 1.348925e+02 -1.689582e+00 5.971182e+00 9.734402e-02 4.978211e+00 -1.019167e+01 -9.794493e-05 5.650266e-01 7.036826e-01 3.867695e-01 -5.851131e-01 3.305686e+01 -1.193420e+02 2.760478e-01 2.008473e-01 -2.277618e+00

The selected subset contains 15 predictors.

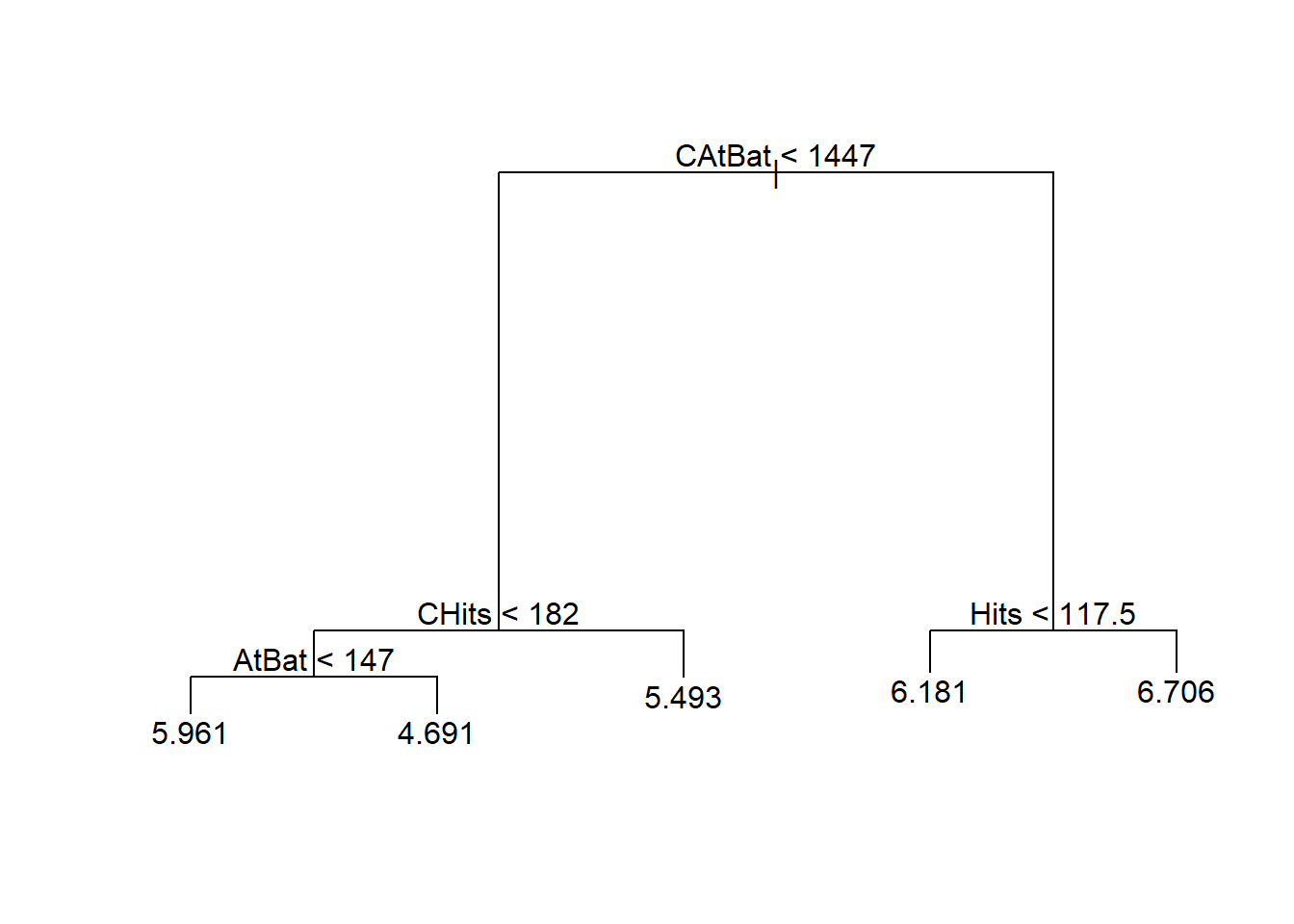

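41.2.6 A simple demonstration of regression trees

Decision tree methods partition the training set into groups hierarchically, according to the values of the predictors. Each split uses one variable, so the grouping can be represented as a binary tree. To predict the class of an observation, find the group it falls into and predict with the class of that group, that is, the class of the majority of its observations. Such a method is called a decision tree. Decision trees can be used for classification and also for regression, in which case they are called regression trees.

The advantage of decision trees is that they are easy to interpret and handle categorical predictors without extra difficulty. However, their prediction accuracy may be worse than that of other supervised learning methods.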
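The core step of growing a regression tree is the search for one split. The sketch below is a simplified illustration in base R, not the algorithm of the tree package: the helper `best_split()` and the simulated data are assumptions. For a single numeric predictor it tries every midpoint between adjacent values and keeps the cut that minimizes the residual sum of squares of the two groups.

```r
# Exhaustive search for the best split point of one numeric predictor.
best_split <- function(x, y) {
  xs <- sort(unique(x))
  cuts <- (head(xs, -1) + tail(xs, -1)) / 2     # midpoints between adjacent values
  rss <- sapply(cuts, function(cut) {
    left <- y[x < cut]
    right <- y[x >= cut]
    sum((left - mean(left))^2) + sum((right - mean(right))^2)
  })
  list(cut = cuts[which.min(rss)], rss = min(rss))
}

set.seed(1)
x <- runif(200, 0, 10)
y <- ifelse(x < 4.5, 5, 6.5) + rnorm(200, sd = 0.3)   # simulated step function with a jump at 4.5
best_split(x, y)
```

A full tree repeats this search over all predictors within each resulting group; the group means then serve as the predicted values at the leaves.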

Improvements include bagging, random forests, and boosting. These methods all combine many trees; they usually improve accuracy at the cost of interpretability.

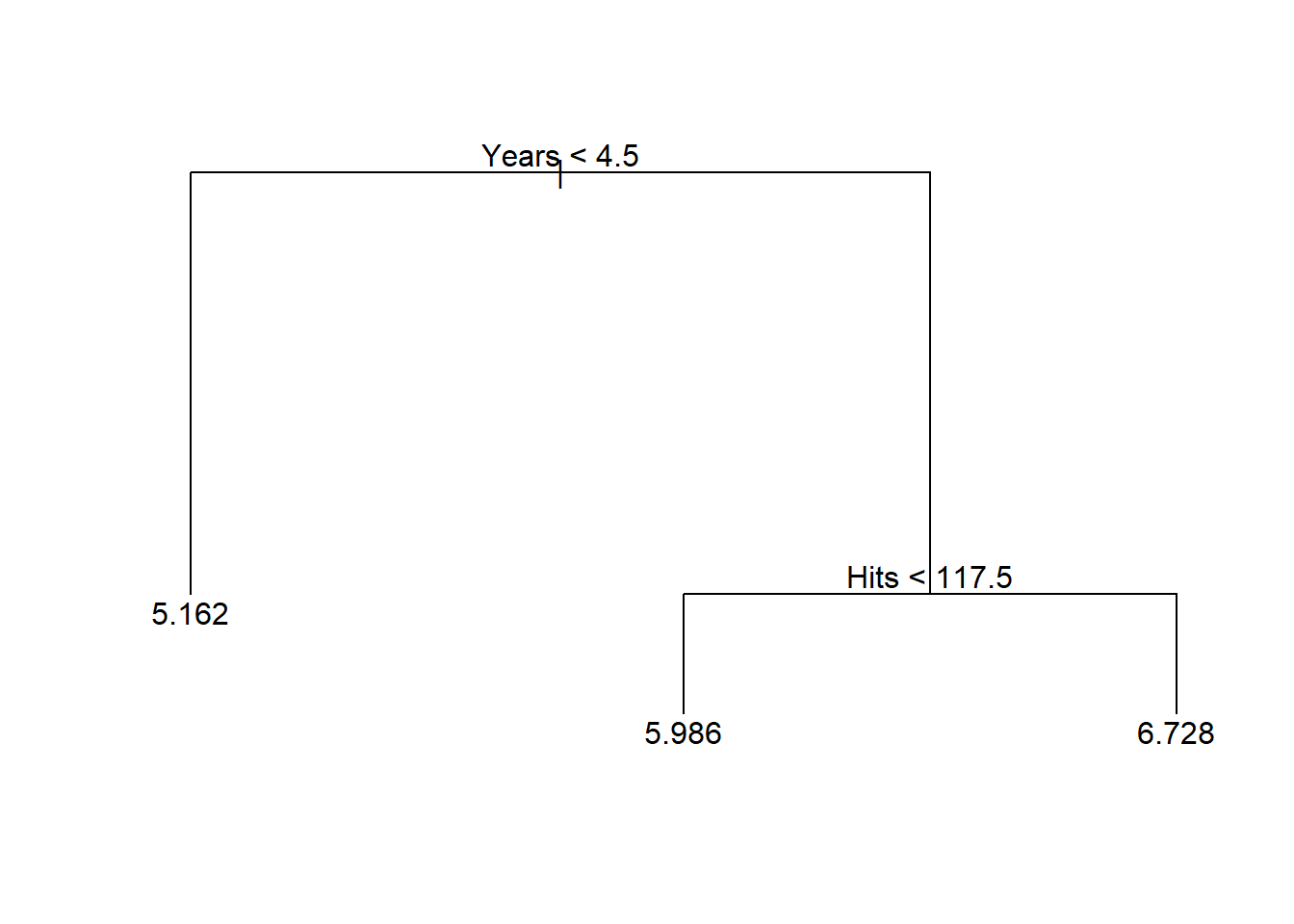

For the Hitters data, use Years and Hits as predictors to predict log(Salary).

Build the full tree:

Prune it to only 3 leaf nodes:

Display the tree:

## node), split, n, deviance, yval

## * denotes terminal node

##

## 1) root 208 161.20 5.936

## 2) Years < 4.5 72 35.07 5.162 *

## 3) Years > 4.5 136 60.05 6.346

## 6) Hits < 117.5 70 23.60 5.986 *

## 7) Hits > 117.5 66 17.75 6.728 *

Display the summary:

##

## Regression tree:

## snip.tree(tree = tr1, nodes = c(6L, 2L))

## Number of terminal nodes: 3

## Residual mean deviance: 0.3727 = 76.41 / 205

## Distribution of residuals:

## Min. 1st Qu. Median Mean 3rd Qu. Max.

## -2.2280 -0.3740 -0.0589 0.0000 0.3414 2.5010做树图:

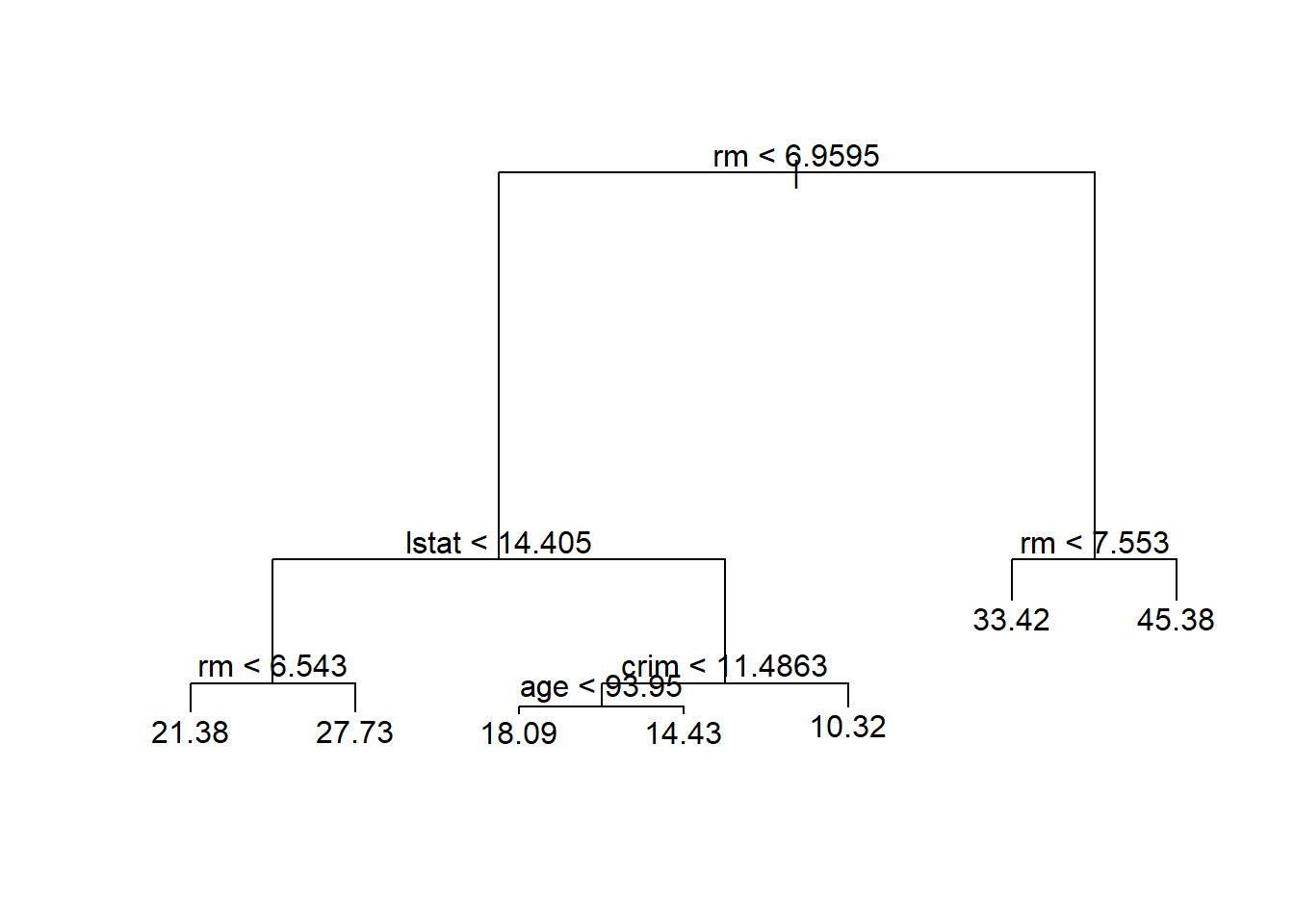

树的深度(depth)是指从根节点到最远的页节点经过的步数, 比如,上图的树的深度为2, 为了用叶结点给出因变量预测值, 最多需要2次判断。

41.2.7 Regression trees

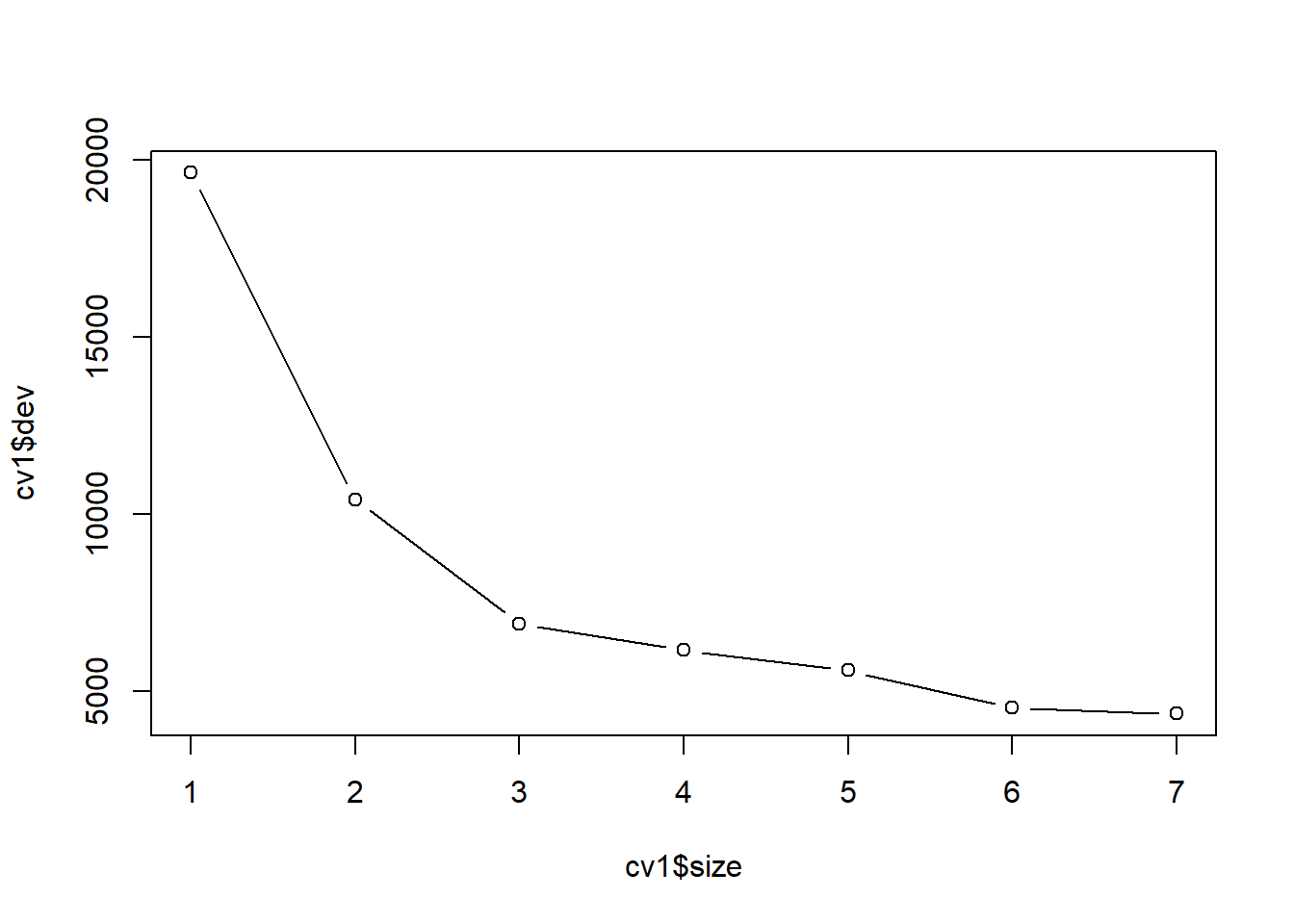

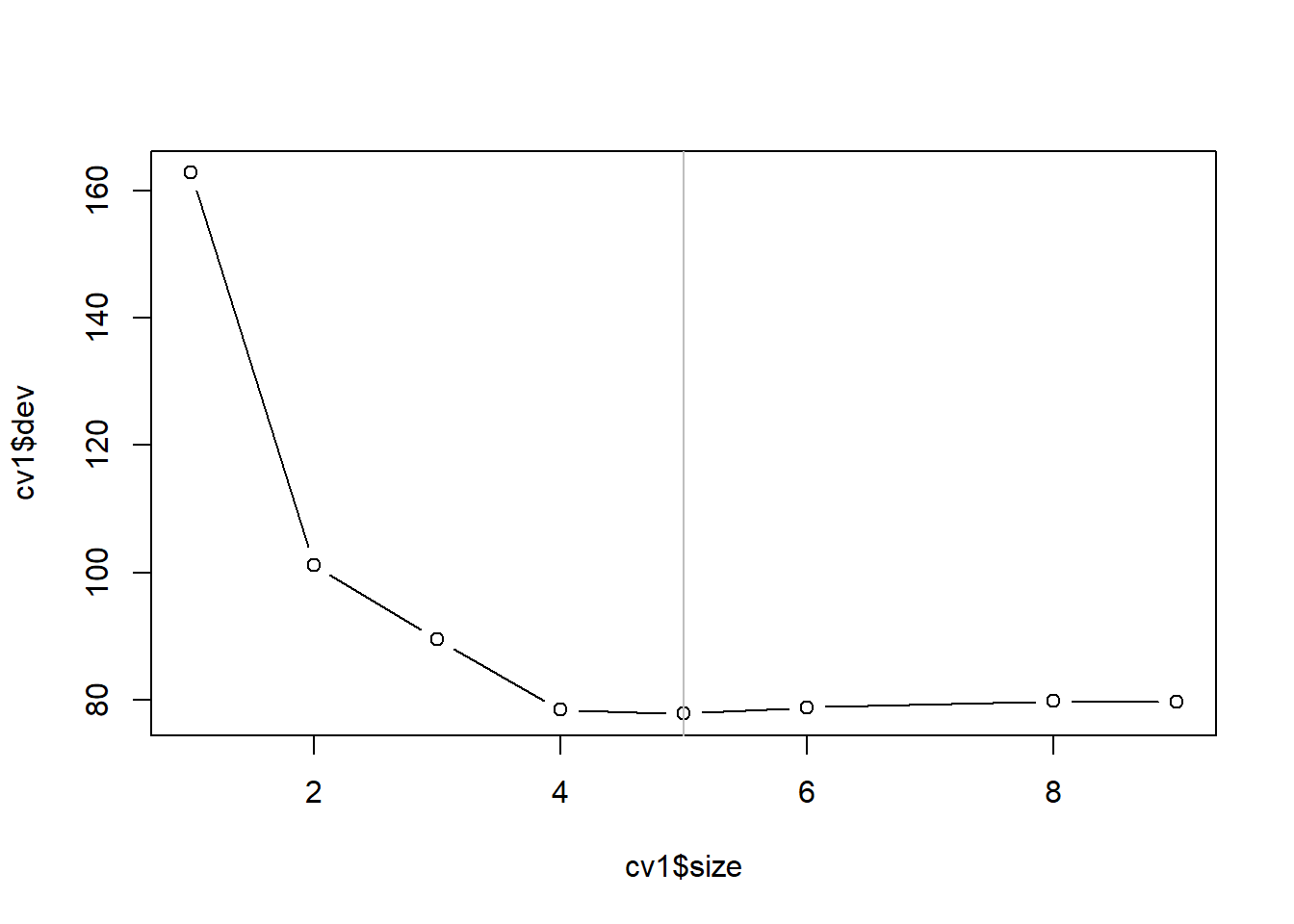

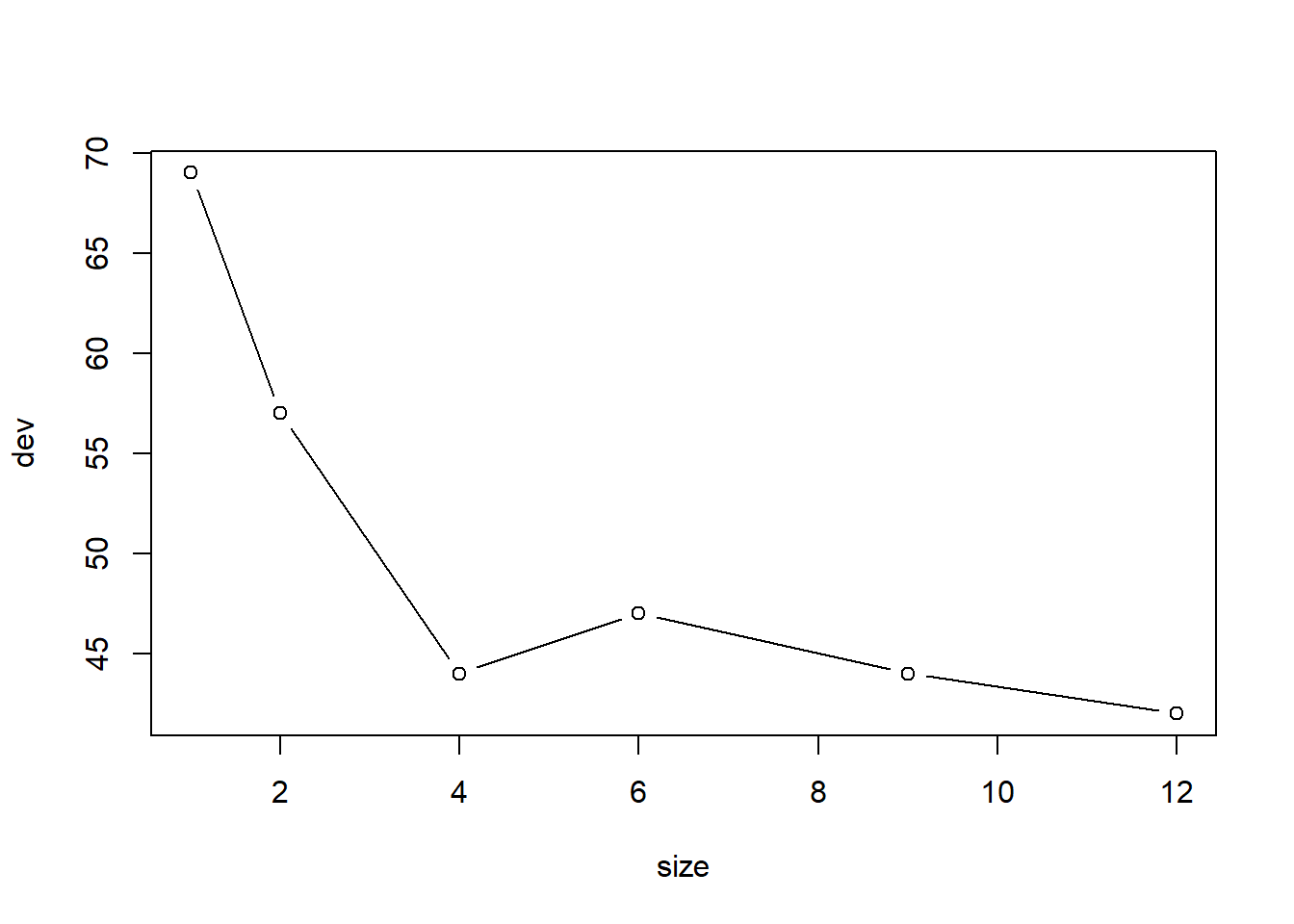

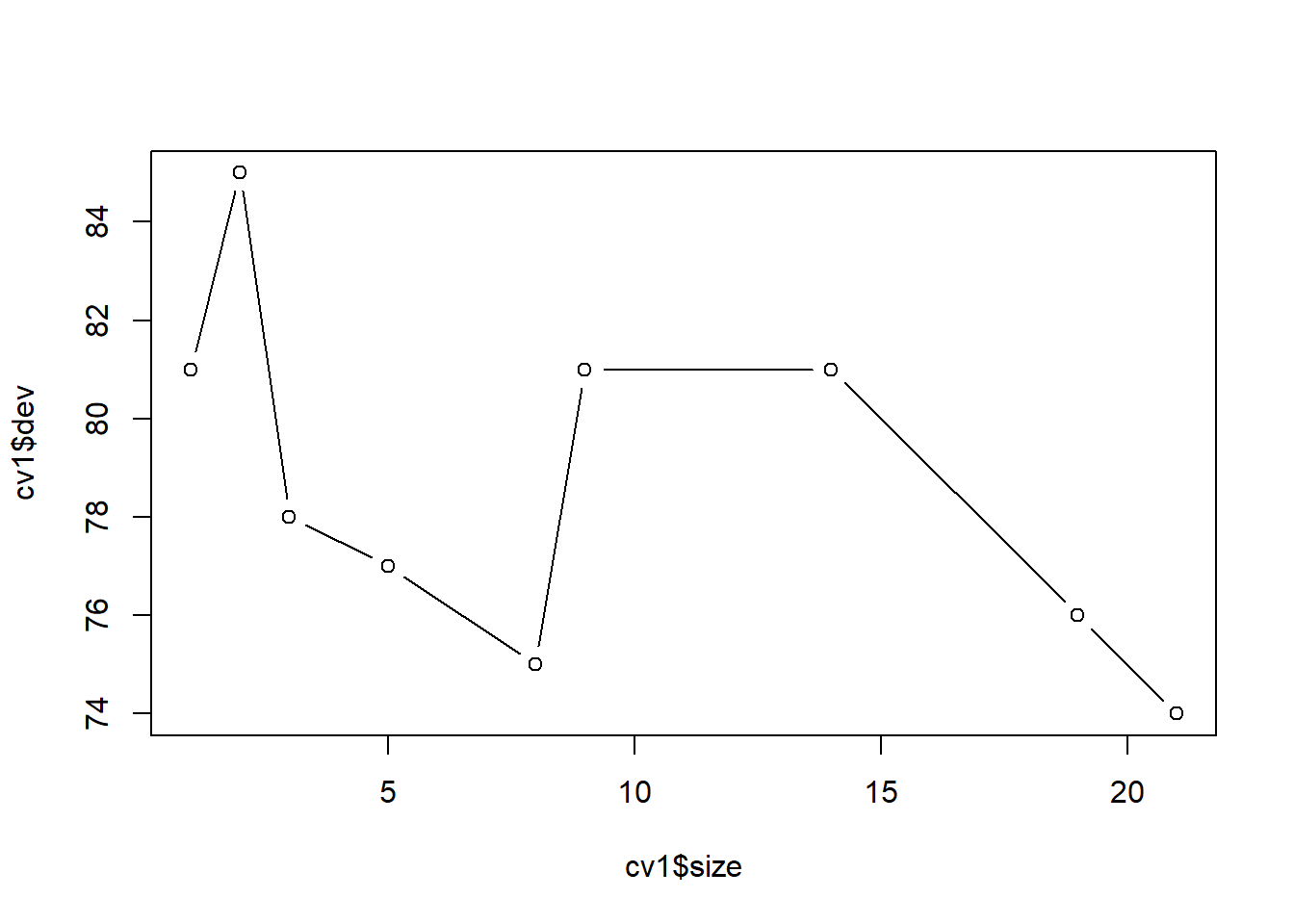

Use cross-validation to find the best size for the unpruned tree built on the training set:

## $size

## [1] 9 8 6 5 4 3 2 1

##

## $dev

## [1] 79.61217 79.76379 78.84809 77.84420 78.42855 89.51855 101.22807 162.80732

##

## $k

## [1] -Inf 2.445601 2.639571 3.186007 4.133744 8.296626 18.711912 66.037022

##

## $method

## [1] "deviance"

##

## attr(,"class")

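## [1] "prune" "tree.sequence"

plot(cv1$size, cv1$dev, type='b')

best.size <- cv1$size[which.min(cv1$dev)[1]]

abline(v=best.size, col='gray')

The best size is 5. Obtain the pruned version of the tree built on the training set:

Compute the prediction mean squared error on the test set: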

pred.test <- predict(tr1b, newdata = hit_test)

test.mse <- mean( (hit_test$Salary - exp(pred.test))^2 )

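test.mse

## [1] 72954.21

If the mean of the dependent variable in the training set is used to predict the dependent variable in the test set, the mean squared error is:

## [1] 170680.7

Build the unpruned tree using all the data:

Prune it to the subtree size obtained on the training set:

41.2.8 Bagging

Classification trees can change substantially across different training/test splits, which shows that their predictions have high variance. Using the bootstrap idea, many training sets can be drawn at random and the model results from these training sets averaged, which reduces the variance of the predictions.

The approach is to draw \(B\) training sets from one training set by sampling with replacement. Let \(\hat f^{*b}(\cdot)\) be the regression function obtained from the \(b\)-th drawn training set; the final regression function is the average of these regression functions: \[\begin{aligned} \hat f_{\text{bagging}}(x) = \frac{1}{B} \sum_{b=1}^B \hat f^{*b}(x) \end{aligned}\] This is called bagging. Bagging is very effective at improving the accuracy of classification and regression trees.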

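The steps of bagging are as follows:

- Draw \(B\) bootstrap training sets from the training set by random sampling with replacement, with \(B\) between a few hundred and a few thousand.

- For each bootstrap training set, fit an unpruned tree.

- If the dependent variable is continuous, predict a test observation with the average of the predictions from all trees.

- If the dependent variable is categorical, predict a test observation by majority vote over the classes predicted by all trees.

Bagging can also be used to improve other regression and classification methods.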
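The steps above can be sketched in base R for the regression case. To keep the example self-contained it uses a one-split tree (a "stump") as the base learner instead of an unpruned tree, and simulated data; the helpers `fit_stump()` and `predict_stump()` are assumptions for illustration and are not part of any package.

```r
set.seed(1)
n <- 100
x <- runif(n)
y <- 2 * x + rnorm(n, sd = 0.3)              # simulated training data
x_new <- seq(0.05, 0.95, length.out = 50)    # points at which to predict

# Base learner: a regression tree with a single split.
fit_stump <- function(x, y) {
  xs <- sort(unique(x))
  cuts <- (head(xs, -1) + tail(xs, -1)) / 2
  rss <- sapply(cuts, function(cut) {
    l <- y[x < cut]
    r <- y[x >= cut]
    sum((l - mean(l))^2) + sum((r - mean(r))^2)
  })
  cut <- cuts[which.min(rss)]
  list(cut = cut, left = mean(y[x < cut]), right = mean(y[x >= cut]))
}
predict_stump <- function(s, x_new) ifelse(x_new < s$cut, s$left, s$right)

B <- 200
preds <- replicate(B, {
  idx <- sample(n, replace = TRUE)           # one bootstrap training set
  predict_stump(fit_stump(x[idx], y[idx]), x_new)
})
bagged <- rowMeans(preds)                    # average of the B fitted functions

single <- predict_stump(fit_stump(x, y), x_new)
c(single = mean((single - 2 * x_new)^2), bagged = mean((bagged - 2 * x_new)^2))
```

A single stump can only take two values, while the bagged prediction varies more smoothly because the split point moves from one bootstrap sample to the next. For a categorical response, `rowMeans()` would be replaced by a majority vote over the columns of `preds`.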

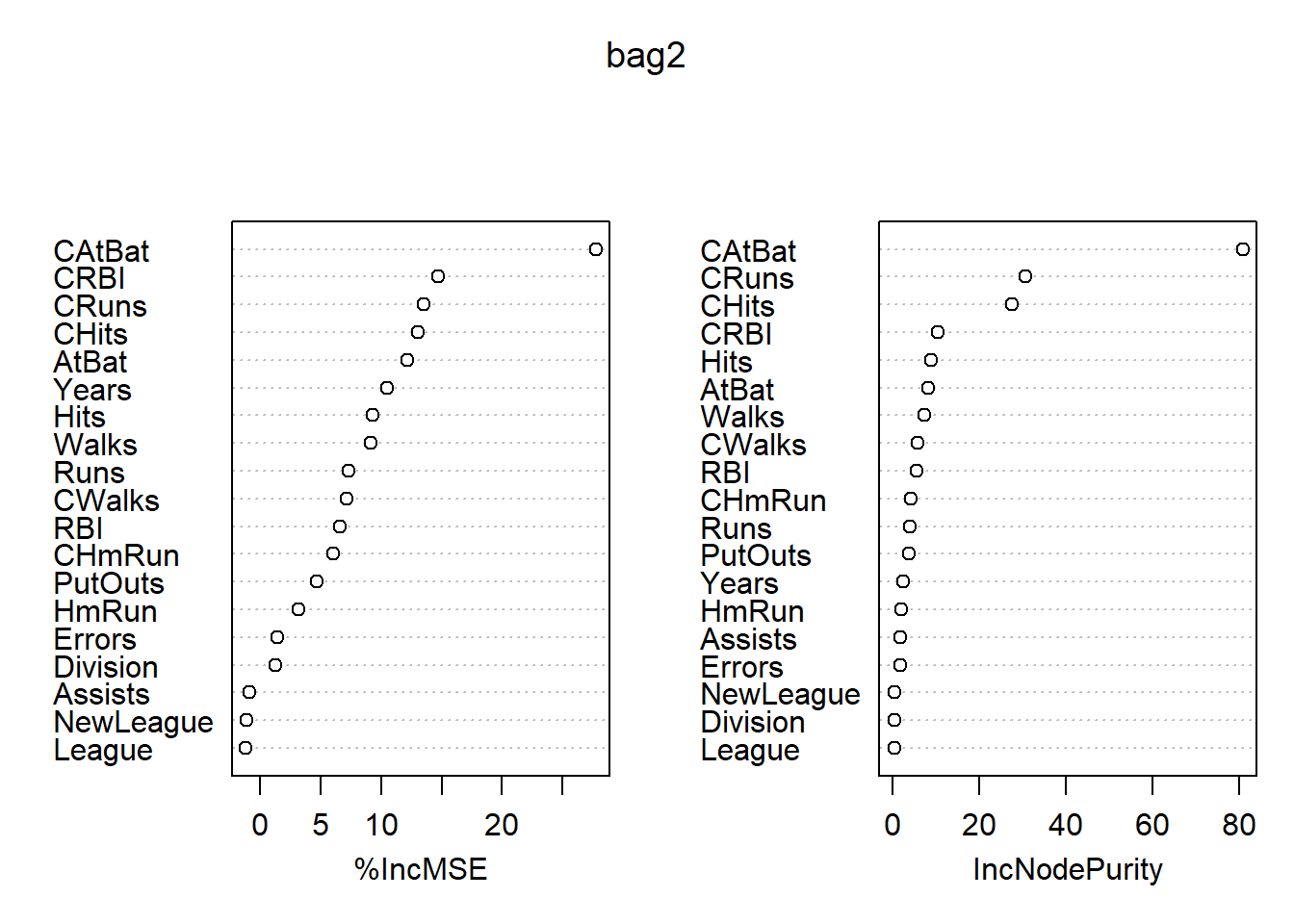

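After bagging, the model can no longer be displayed as a single tree, so interpretability is poorer. However, the importance of each predictor for prediction can be measured. In regression problems, compute the amount by which each predictor reduces the residual sum of squares, averaged over all \(B\) trees, as its importance. In classification problems, compute the amount by which each predictor reduces the Gini index, averaged over all \(B\) trees, as its importance.

Besides a test set or cross-validation, the out-of-bag prediction error can also be used. When bootstrap resampling produces multiple training sets, each bootstrap training set always leaves out some observations, about one third of them on average. For each observation, roughly \(B/3\) trees did not use it; averaging the predictions of these trees predicts that observation and gives an error. The resulting estimate of the mean squared error or misclassification rate is called the out-of-bag (OOB) estimate. Its advantage is that it needs little extra work.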
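The "about one third" figure can be checked by simulation. In the sketch below the sizes `n` and `B` are arbitrary choices for illustration. The probability that a given observation is missing from one bootstrap sample of size \(n\) is \((1 - 1/n)^n\), which tends to \(e^{-1} \approx 0.368\).

```r
set.seed(1)
n <- 208; B <- 500                     # arbitrary sizes for illustration
oob <- replicate(B, {
  idx <- sample(n, replace = TRUE)     # one bootstrap training set
  !(seq_len(n) %in% idx)               # TRUE for observations left out of it
})

mean(oob)                              # simulated share of observations left out per bootstrap set
(1 - 1 / n)^n                          # exact probability, close to exp(-1)
range(rowSums(oob))                    # number of bootstrap sets in which each observation is out-of-bag
```

Each row of `oob` marks the bootstrap sets, and hence the trees, whose predictions can be averaged to obtain the out-of-bag prediction for that observation.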

Apply bagging to the training set:

bag1 <- randomForest(

log(Salary) ~ .,

data = hit_train,

mtry=ncol(hit_train)-1,

importance=TRUE)

bag1

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = hit_train, mtry = ncol(hit_train) - 1, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 19

##

## Mean of squared residuals: 0.1997855

## % Var explained: 74.21

Note that randomForest() actually implements random forests, but setting mtry to the total number of predictors gives bagging.

Predict on the test set:

pred2 <- predict(bag1, newdata = hit_test)

test.mse2 <- mean( (hit_test$Salary - exp(pred2))^2 )

test.mse2

## [1] 40493.2

Apply bagging to the full data set:

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = da_hit, mtry = ncol(da_hit) - 1, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 19

##

## Mean of squared residuals: 0.1887251

## % Var explained: 76.04

Importance values of the variables and their plot:

## %IncMSE IncNodePurity

## AtBat 12.1847865 8.0539634

## Hits 9.3337219 8.8872063

## HmRun 3.1834789 1.9012234

## Runs 7.3505924 3.8938682

## RBI 6.5675751 5.5666456

## Walks 9.1539209 7.3512005

## Years 10.5282905 2.3558252

## CAtBat 27.7282364 80.8752219

## CHits 13.0681742 27.4949657

## CHmRun 6.0152055 4.2342280

## CRuns 13.5257425 30.6010931

## CRBI 14.7426270 10.3984912

## CWalks 7.1140115 5.6665591

## League -1.1679843 0.2463835

## Division 1.2910729 0.2785405

## PutOuts 4.7092044 3.7752935

## Assists -0.8721871 1.7583406

## Errors 1.4266974 1.6524380

## NewLeague -1.0968795 0.3831401

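Figure 41.6: Variable importance from bagging for the Hitters data

The most important predictor is CAtBat, followed by CRuns, CHits, and others.

How is variable importance computed? For tree-based methods, the purer each leaf node is (all observations in the leaf have the same label, or equal values of the dependent variable), the better the model fits. So for each variable, one can compute the improvement in the purity measure at the internal nodes where it is used as the splitting variable, and add these improvements up. For bagging, random forests, and boosting (such as GBM), the improvement each variable brings to the loss function is computed.

Different machine learning algorithms define variable importance differently; for example, generalized linear models (GLM) use the absolute value of the coefficient estimates of the standardized predictors as the importance measure.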

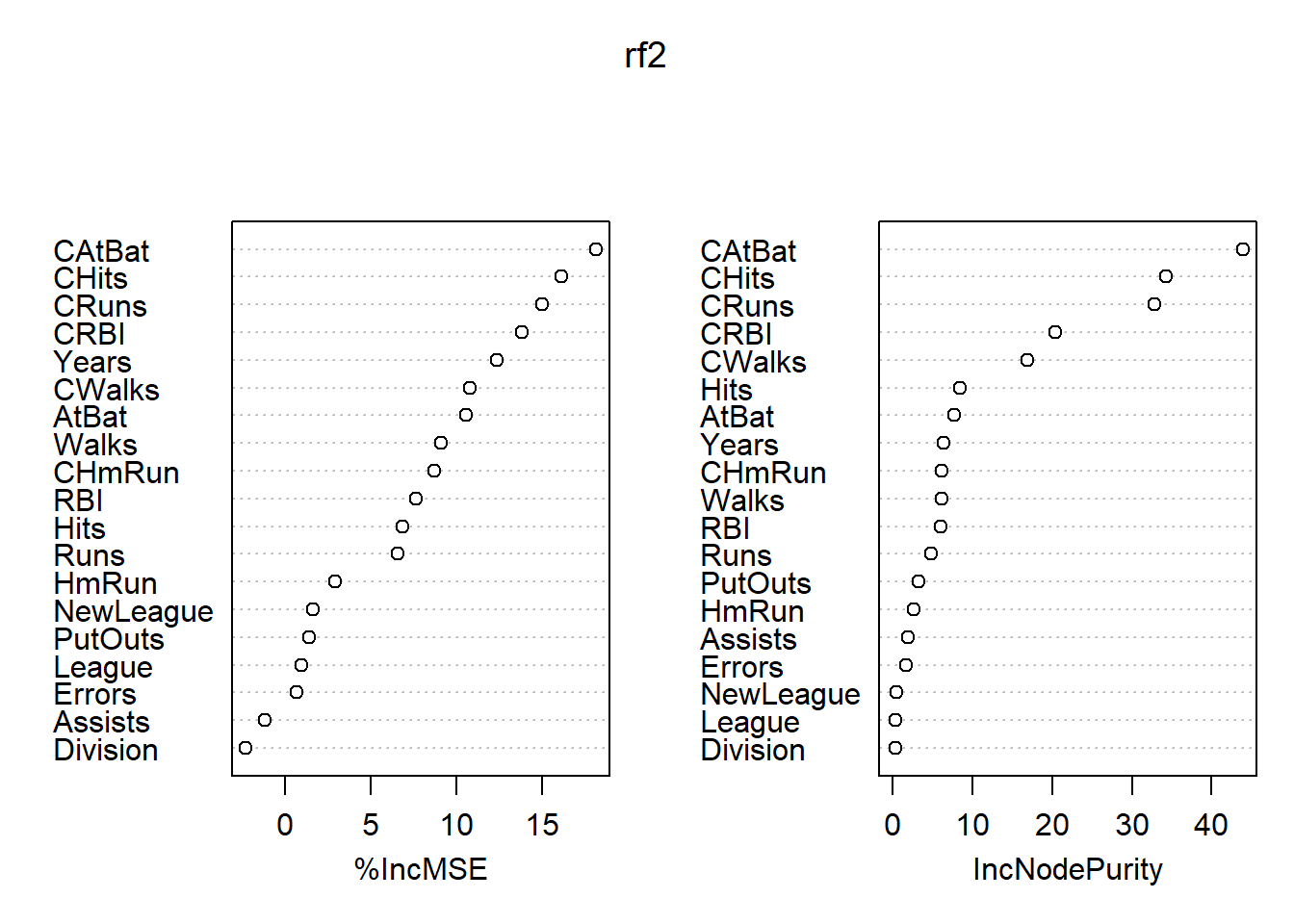

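41.2.9 Random forests

The idea of random forests is similar to bagging, but it tries to make the trees being averaged less dependent on one another. Multiple bootstrap training sets are still drawn by sampling with replacement, but when building a tree on each bootstrap training set, each split considers not all predictors but only a randomly chosen subset of them.

For classification trees, the number of predictors considered at each split is usually taken as \(m \approx \sqrt{p}\). For example, for the 13 predictors of the Heart data, only 4 randomly chosen predictors are considered at each split.

Random forests rest on the fact that averaging positively correlated samples does not reduce variance well, whereas averaging independent samples does. If there is one dominant predictor and no restriction is imposed, this predictor will always be used for the first split, making the \(B\) trees very similar. Random forests address this by restricting the subset of variables available at each split.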
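The claim about correlated averages can be made precise with a standard identity. It is stated here under a simplifying assumption that the text does not make: at a fixed point, the \(B\) tree predictions \(T_1, \dots, T_B\) each have variance \(\sigma^2\) and share a common pairwise correlation \(\rho \ge 0\).

```latex
\[
\operatorname{Var}\!\left( \frac{1}{B} \sum_{b=1}^B T_b \right)
= \frac{1}{B^2} \left[ B \sigma^2 + B(B-1) \rho \sigma^2 \right]
= \rho \sigma^2 + \frac{1-\rho}{B} \sigma^2 .
\]
```

As \(B\) grows the second term vanishes but the first remains, so adding more trees cannot push the variance below \(\rho \sigma^2\). Lowering the correlation \(\rho\) between trees, which is what restricting the candidate variables at each split aims at, is what reduces the variance further.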

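Random forests can also be used to improve other regression and classification methods.

Both bagging and random forests can be implemented with the randomForest() function of the R package randomForest. When the mtry argument of this function is set to the number of predictors, bagging is performed; with the default mtry, the random forest algorithm is performed. When running the random forest algorithm, randomForest() considers \(m \approx p/3\) predictors at each split in regression problems and \(m \approx \sqrt{p}\) in classification problems.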
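The defaults can be computed by hand. The helper below is a hypothetical illustration, not a function of the randomForest package; it follows the default documented for randomForest(), which rounds down in both cases.

```r
# Hypothetical helper reproducing the documented default of mtry in randomForest():
# regression uses max(floor(p / 3), 1), classification uses floor(sqrt(p)).
default_mtry <- function(p, regression = TRUE) {
  if (regression) max(floor(p / 3), 1) else floor(sqrt(p))
}

default_mtry(19, regression = TRUE)    # Hitters: 19 predictors, regression
default_mtry(13, regression = FALSE)   # Heart: 13 predictors, classification
```

For the 19 Hitters predictors this gives 6, in agreement with the "No. of variables tried at each split" line of the random forest output. For the 13 Heart predictors rounding down gives 3, whereas rounding \(\sqrt{13} \approx 3.6\) to the nearest integer gives 4.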

Apply the random forest method to the training set:

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = hit_train, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 6

##

## Mean of squared residuals: 0.1961343

## % Var explained: 74.69

With mtry left at its default value, the random forest algorithm is run.

Predict on the test set:

pred3 <- predict(rf1, newdata = hit_test)

test.mse3 <- mean( (hit_test$Salary - exp(pred3))^2 )

test.mse3

## [1] 39605.52

The result is close to that of bagging.

Apply random forests to the full data set:

##

## Call:

## randomForest(formula = log(Salary) ~ ., data = da_hit, importance = TRUE)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 6

##

## Mean of squared residuals: 0.1819559

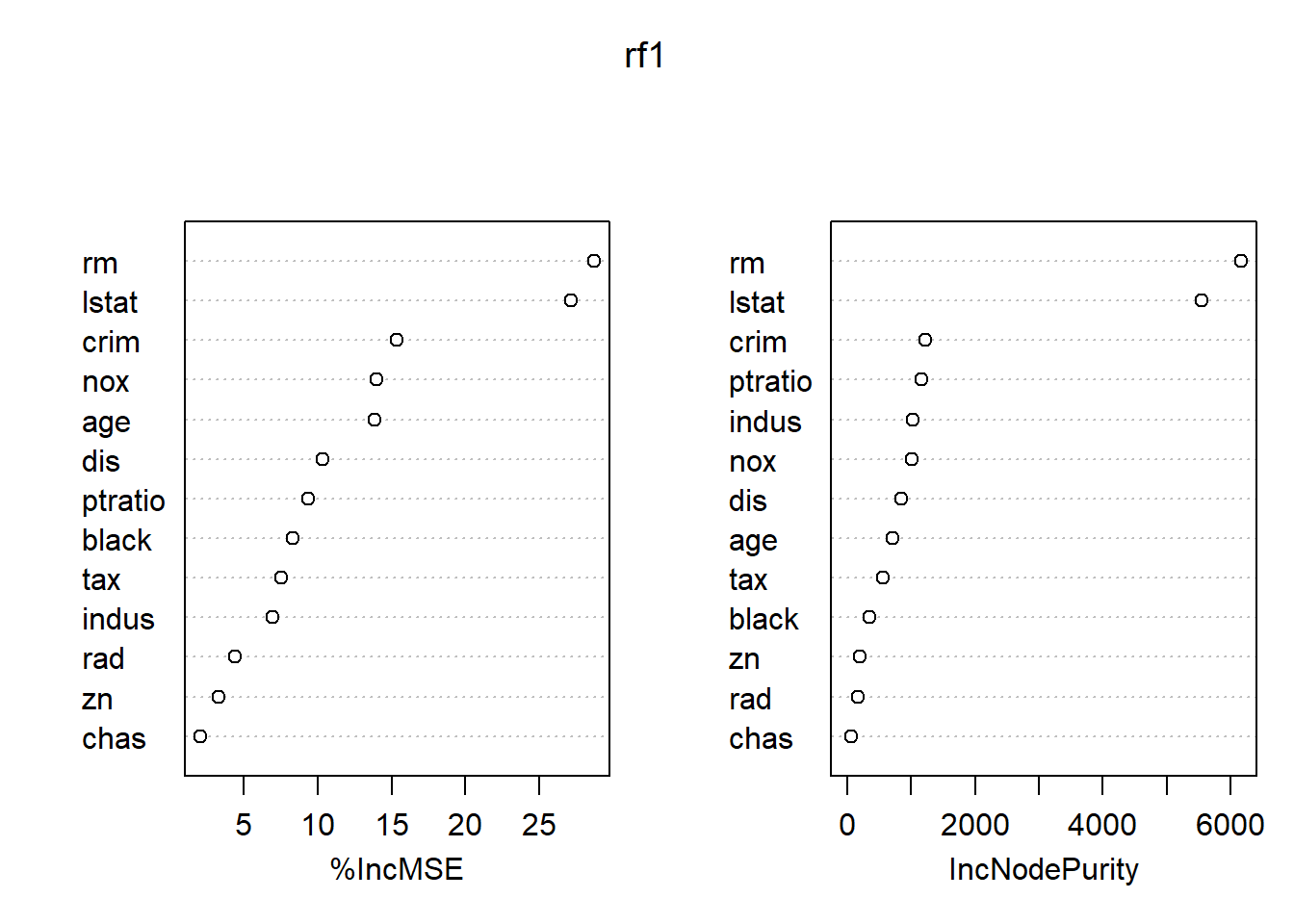

## % Var explained: 76.9各变量的重要度数值及其图形:

## %IncMSE IncNodePurity

## AtBat 10.5831938 7.6803164

## Hits 6.8534500 8.4427137

## HmRun 2.9411205 2.6021258

## Runs 6.6085935 4.7906075

## RBI 7.6427518 6.0407086

## Walks 9.1235296 6.0742818

## Years 12.3990224 6.4031367

## CAtBat 18.1391649 43.9058026

## CHits 16.1265574 34.3144001

## CHmRun 8.6922887 6.0819271

## CRuns 15.0136882 32.8197588

## CRBI 13.8506429 20.4319951

## CWalks 10.7946944 16.8511024

## League 0.9803532 0.2773488

## Division -2.2734452 0.2644631

## PutOuts 1.4260960 3.1688382

## Assists -1.1869686 1.8335868

## Errors 0.6877809 1.6121681

## NewLeague 1.6258977 0.4034615

Figure 41.7: Variable importance from random forests for the Hitters data

The most important predictors are CAtBat, CRuns, CHits, CWalks, CRBI, and others.

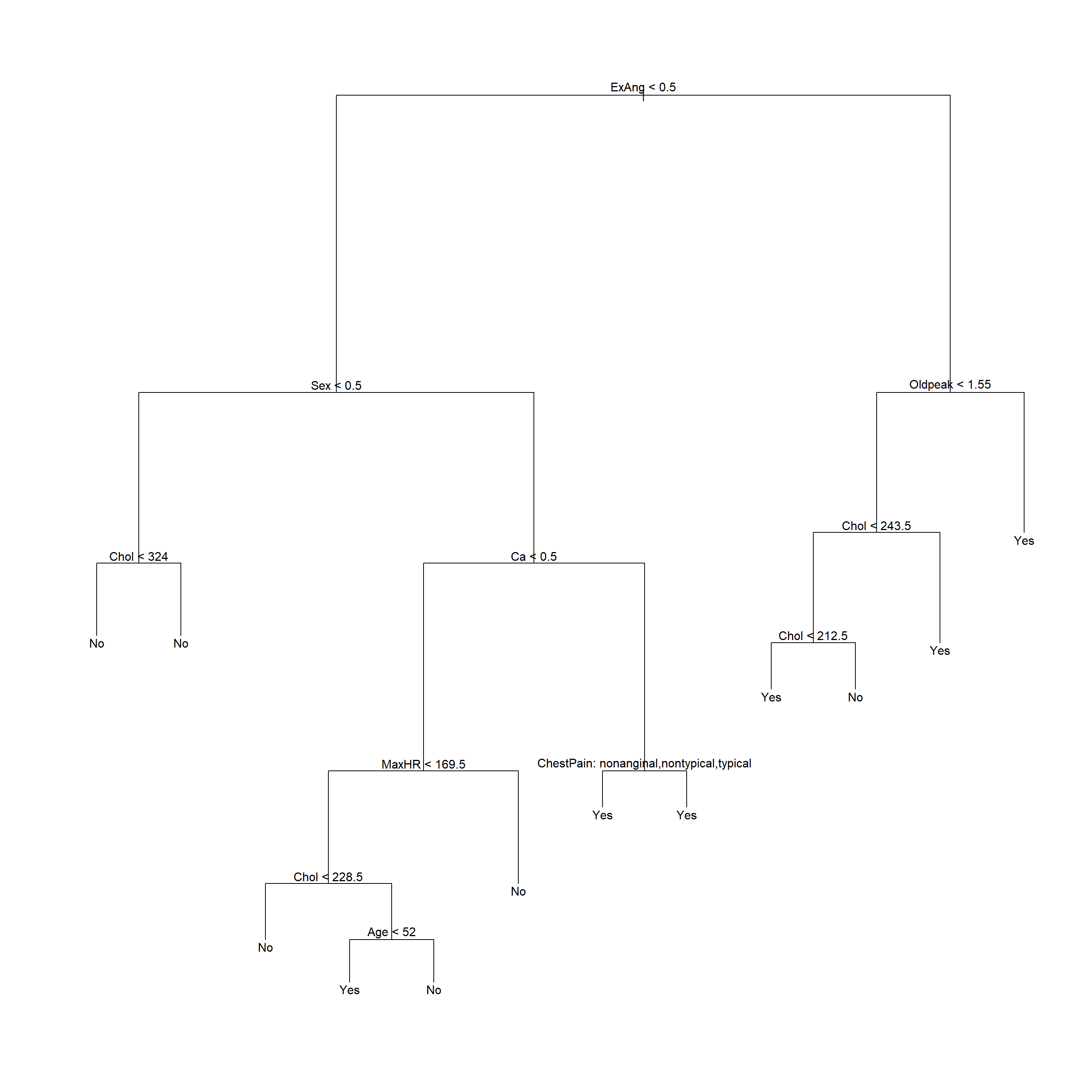

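41.3 Analysis of the Heart data

The Heart data are heart disease diagnosis data. The dependent variable AHD indicates whether the patient has heart disease, and the aim is to predict (classify) it from the predictors.

Read in the Heart data set and remove the observations with missing values: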

Heart <- read.csv(

"data/Heart.csv", header=TRUE, row.names=1,

stringsAsFactors=TRUE)

Heart <- na.omit(Heart)

str(Heart)

## 'data.frame': 297 obs. of 14 variables:

## $ Age : int 63 67 67 37 41 56 62 57 63 53 ...

## $ Sex : int 1 1 1 1 0 1 0 0 1 1 ...

## $ ChestPain: Factor w/ 4 levels "asymptomatic",..: 4 1 1 2 3 3 1 1 1 1 ...

## $ RestBP : int 145 160 120 130 130 120 140 120 130 140 ...

## $ Chol : int 233 286 229 250 204 236 268 354 254 203 ...

## $ Fbs : int 1 0 0 0 0 0 0 0 0 1 ...

## $ RestECG : int 2 2 2 0 2 0 2 0 2 2 ...

## $ MaxHR : int 150 108 129 187 172 178 160 163 147 155 ...

## $ ExAng : int 0 1 1 0 0 0 0 1 0 1 ...

## $ Oldpeak : num 2.3 1.5 2.6 3.5 1.4 0.8 3.6 0.6 1.4 3.1 ...

## $ Slope : int 3 2 2 3 1 1 3 1 2 3 ...

## $ Ca : int 0 3 2 0 0 0 2 0 1 0 ...

## $ Thal : Factor w/ 3 levels "fixed","normal",..: 1 2 3 2 2 2 2 2 3 3 ...

## $ AHD : Factor w/ 2 levels "No","Yes": 1 2 2 1 1 1 2 1 2 2 ...

## - attr(*, "na.action")= 'omit' Named int [1:6] 88 167 193 267 288 303

## ..- attr(*, "names")= chr [1:6] "88" "167" "193" "267" ...

##

## Age Min. :29.00 1st Qu.:48.00 Median :56.00 Mean :54.54 3rd Qu.:61.00 Max. :77.00

## Sex Min. :0.0000 1st Qu.:0.0000 Median :1.0000 Mean :0.6768 3rd Qu.:1.0000 Max. :1.0000

## ChestPain asymptomatic:142 nonanginal : 83 nontypical : 49 typical : 23

## RestBP Min. : 94.0 1st Qu.:120.0 Median :130.0 Mean :131.7 3rd Qu.:140.0 Max. :200.0

## Chol Min. :126.0 1st Qu.:211.0 Median :243.0 Mean :247.4 3rd Qu.:276.0 Max. :564.0

## Fbs Min. :0.0000 1st Qu.:0.0000 Median :0.0000 Mean :0.1448 3rd Qu.:0.0000 Max. :1.0000

## RestECG Min. :0.0000 1st Qu.:0.0000 Median :1.0000 Mean :0.9966 3rd Qu.:2.0000 Max. :2.0000

## MaxHR Min. : 71.0 1st Qu.:133.0 Median :153.0 Mean :149.6 3rd Qu.:166.0 Max. :202.0

## ExAng Min. :0.0000 1st Qu.:0.0000 Median :0.0000 Mean :0.3266 3rd Qu.:1.0000 Max. :1.0000

## Oldpeak Min. :0.000 1st Qu.:0.000 Median :0.800 Mean :1.056 3rd Qu.:1.600 Max. :6.200

## Slope Min. :1.000 1st Qu.:1.000 Median :2.000 Mean :1.603 3rd Qu.:2.000 Max. :3.000

## Ca Min. :0.0000 1st Qu.:0.0000 Median :0.0000 Mean :0.6768 3rd Qu.:1.0000 Max. :3.0000

## Thal fixed : 18 normal :164 reversable:115

## AHD No :160 Yes:137

Data download: Heart.csv

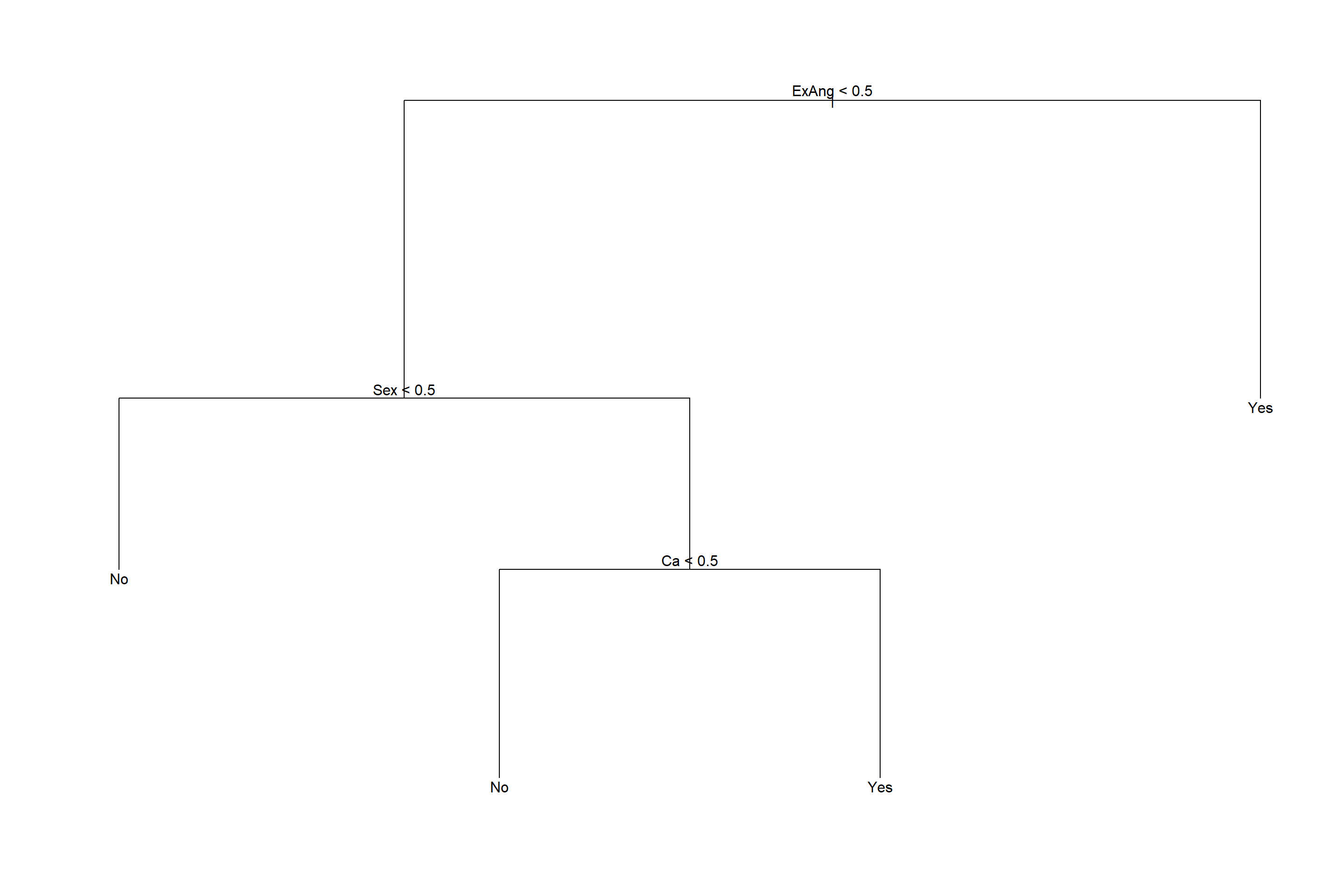

41.3.1 Classification tree

41.3.1.1 Splitting into training and test sets

Simply split the observations into a training half and a test half:

set.seed(1)

train <- sample(nrow(Heart), size=round(nrow(Heart)/2))

test <- (-train)

test.y <- Heart[test, 'AHD']

Fit an unpruned classification tree on the training set:

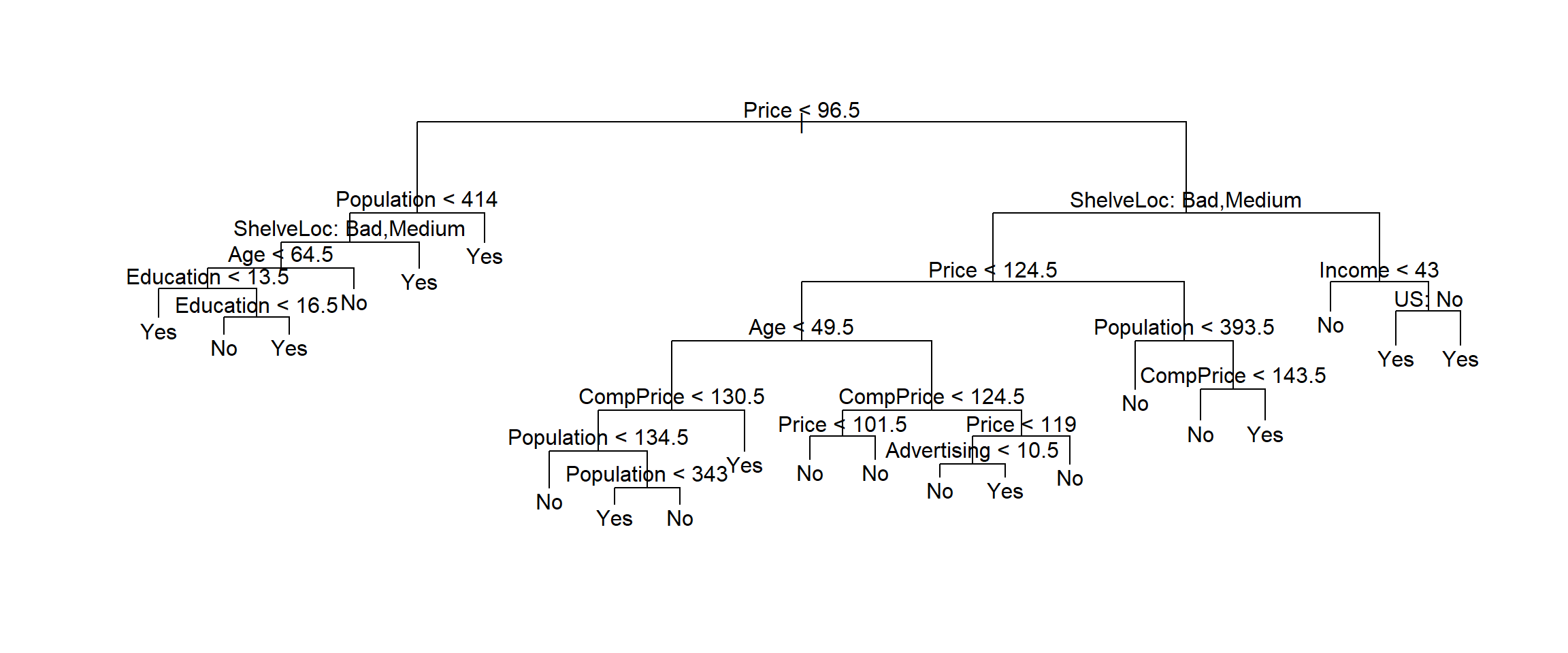

41.3.1.2 Pruning

Use cross-validation to determine the number of leaves to keep, pruning by misclassification rate:

## $size

## [1] 12 9 6 4 2 1

##

## $dev

## [1] 42 44 47 44 57 69

##

## $k

## [1] -Inf 0.000000 1.666667 3.000000 7.000000 26.000000

##

## $method

## [1] "misclass"

##

## attr(,"class")

## [1] "prune" "tree.sequence"

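The best size is 12 (42 cross-validated misclassifications). The plot shows, however, that 4 leaves already do nearly as well (44), so we take 4.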
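As a rough sketch of how the cross-validation output above can be read, the `$size` and `$dev` vectors can be compared directly. The names `cv_size`, `cv_dev`, `near_best` and `chosen_size`, and the tolerance of two extra misclassifications, are illustrative assumptions rather than part of the original analysis; the numbers are copied from the output.

```r
# Values copied from the cross-validation output above.
cv_size <- c(12, 9, 6, 4, 2, 1)       # number of leaves
cv_dev  <- c(42, 44, 47, 44, 57, 69)  # cross-validated misclassification counts

# Size with the smallest cross-validated error.
best_size <- cv_size[which.min(cv_dev)]

# Sizes whose error is within 2 misclassifications of the minimum
# (the tolerance 2 is an arbitrary choice for illustration).
near_best <- cv_size[cv_dev <= min(cv_dev) + 2]

# The smallest of these near-optimal trees.
chosen_size <- min(near_best)

c(best = best_size, chosen = chosen_size)
```

Under this assumed tolerance the rule lands on the same small tree that the text picks by eye from the plot.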

Produce the pruned tree on the training set:

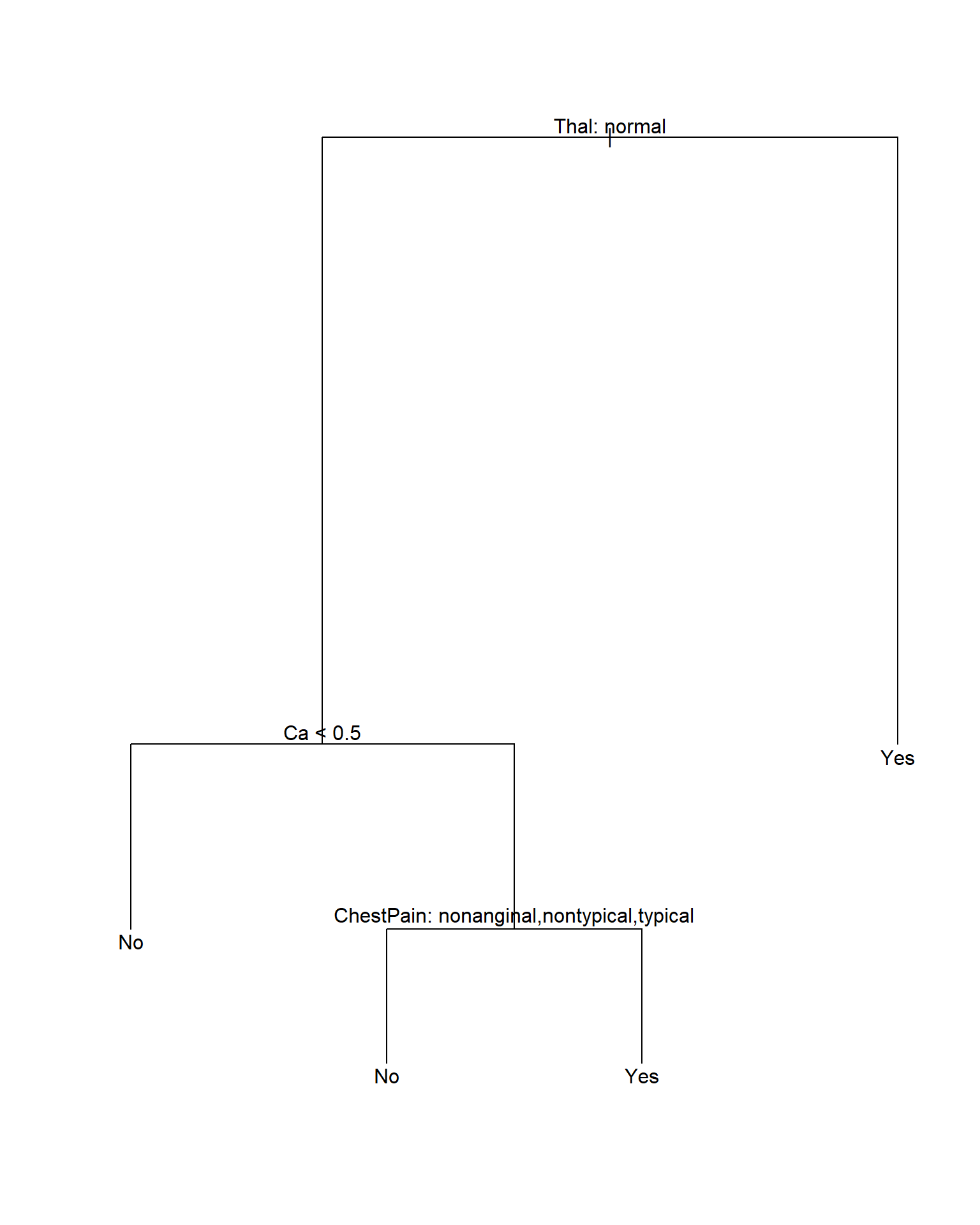

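Figure 41.8: Classification tree for the Heart data

Note that some internal nodes of the pruned tree split on a categorical predictor. At such a node, observations whose value is one of the listed categories follow the Yes branch, and observations with any other category follow the No branch.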

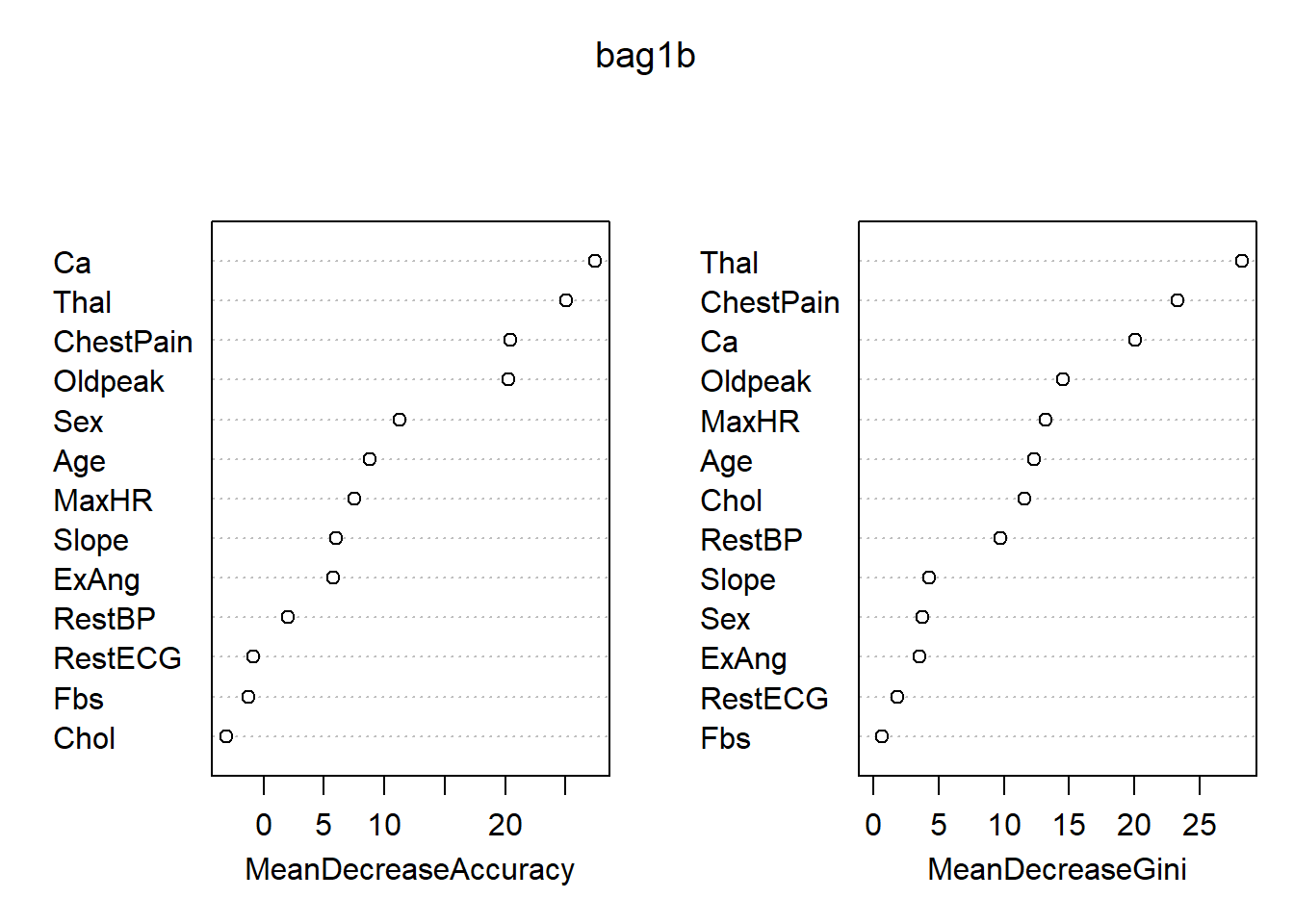

41.3.2 Bagging

Apply bagging to the training set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, mtry = 13, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 13

##

## OOB estimate of error rate: 22.3%

## Confusion matrix:

## No Yes class.error

## No 71 12 0.1445783

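## Yes 21 44 0.3230769

Note that randomForest() actually implements random forests, but when mtry is set to the total number of predictors the method reduces to bagging.

The out-of-bag error rate, 22.3%, is fairly high.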
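The out-of-bag error is computed from the observations that a given bootstrap sample leaves out. The small simulation below is a sketch showing that roughly a fraction \(e^{-1} \approx 0.368\) of the observations is out of bag for each tree. The training-set size 148 is read off the confusion matrix above (83 + 65); the seed, the number of replications and all variable names are arbitrary choices.

```r
set.seed(1)
n <- 148  # training-set size, from the confusion matrix above

# Exact probability that a given observation is absent from one bootstrap sample.
p_oob_exact <- (1 - 1 / n)^n

# Simulated out-of-bag fraction, averaged over 2000 bootstrap samples.
oob_fraction <- replicate(2000, {
  in_bag <- unique(sample(n, size = n, replace = TRUE))
  1 - length(in_bag) / n
})
p_oob_sim <- mean(oob_fraction)

c(exact = p_oob_exact, simulated = p_oob_sim, limit = exp(-1))
```

Each tree is therefore assessed on about a third of the training observations that it never saw, which is why the out-of-bag rate can serve as an error estimate without a separate test set.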

Predict on the test set:

## test.y

## pred2 No Yes

## No 66 17

## Yes 11 55

## [1] 0.1879195

The misclassification rate on the test set is about 19%.
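The error rate printed above follows directly from the confusion table. As a minimal sketch, assuming the table is re-entered by hand as a matrix (the name `conf` is illustrative):

```r
# Confusion matrix copied from the output above: rows are predictions,
# columns are the true classes.
conf <- matrix(c(66, 11, 17, 55), nrow = 2,
               dimnames = list(pred = c("No", "Yes"), truth = c("No", "Yes")))

# Misclassification rate = 1 - (correct predictions) / (all predictions).
err <- 1 - sum(diag(conf)) / sum(conf)
round(err, 7)
```

The diagonal holds the 66 + 55 correct predictions out of 149 test observations.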

Apply bagging to the full data set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, mtry = 13, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 13

##

## OOB estimate of error rate: 20.88%

## Confusion matrix:

## No Yes class.error

## No 131 29 0.1812500

## Yes 33 104 0.2408759

Importance values of the variables and the corresponding plot:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## Age 6.5766876 5.12005531 8.7542379 12.2956568

## Sex 11.2077275 4.48390165 11.2853739 3.7278623

## ChestPain 13.0268932 17.89348038 20.4292863 23.3424850

## RestBP 2.6203153 0.05626759 2.0521195 9.7650173

## Chol -0.8712348 -4.23294461 -3.0733270 11.5911988

## Fbs -0.6941335 -1.16860850 -1.2288380 0.6775051

## RestECG -1.4881617 0.23292163 -0.8772267 1.8426038

## MaxHR 7.7625054 2.34660468 7.5122314 13.2101707

## ExAng 2.7926364 5.45108497 5.7525854 3.5491718

## Oldpeak 14.8193517 14.67748373 20.2425364 14.5480191

## Slope 2.5189935 5.73789018 5.9744484 4.2777028

## Ca 23.0513399 18.01671793 27.4320740 20.0564750

## Thal 20.1968435 18.74418431 25.0618361 28.2479833

The most important variables are Thal, ChestPain and Ca.

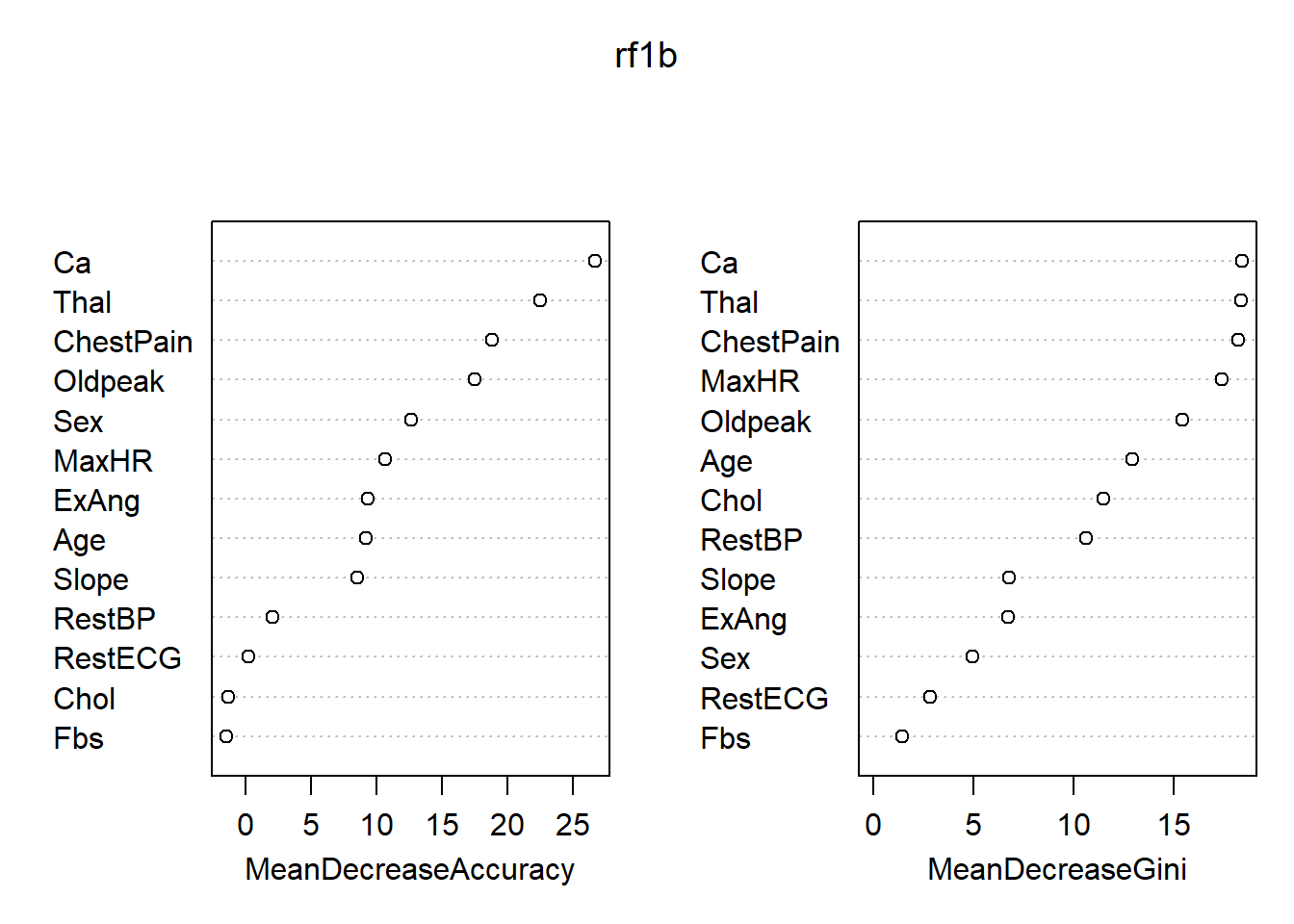

41.3.3 Random forest

Fit a random forest on the training set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 21.62%

## Confusion matrix:

## No Yes class.error

## No 71 12 0.1445783

## Yes 20 45 0.3076923

Here mtry takes its default value, which corresponds to a random forest.
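The "No. of variables tried at each split" lines in the outputs can be reproduced by hand. The sketch below assumes the defaults documented for randomForest(): the floor of the square root of the number of predictors for a factor response, and one third of the predictors (at least 1) for a numeric response. The variable names are illustrative.

```r
p <- 13  # number of predictors in the Heart data (14 variables minus AHD)

mtry_bagging        <- p                     # bagging: all predictors at each split
mtry_classification <- floor(sqrt(p))        # assumed default for a factor response
mtry_regression     <- max(floor(p / 3), 1)  # assumed default for a numeric response

c(bagging = mtry_bagging,
  classification = mtry_classification,
  regression = mtry_regression)
```

With 13 predictors the classification default is 3, in agreement with the output above.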

Predict on the test set:

## test.y

## pred3 No Yes

## No 70 16

## Yes 7 56

## [1] 0.1543624

The misclassification rate on the test set is about 15%.

Fit a random forest on the full data set:

##

## Call:

## randomForest(formula = AHD ~ ., data = Heart, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 16.5%

## Confusion matrix:

## No Yes class.error

## No 140 20 0.1250000

## Yes 29 108 0.2116788

Importance values of the variables and the corresponding plot:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## Age 7.2380857 5.4451404 9.1859647 12.908917

## Sex 10.1973138 8.0790483 12.6929315 4.938266

## ChestPain 10.4623927 16.7054395 18.8771946 18.218363

## RestBP 1.2157266 1.8875229 2.1025511 10.624864

## Chol -1.2630538 -0.4285615 -1.3028275 11.470420

## Fbs 0.4417651 -2.6574949 -1.4327524 1.418137

## RestECG -1.1149040 1.4649476 0.2220661 2.840670

## MaxHR 9.3788412 6.0542618 10.7139921 17.383623

## ExAng 3.3923281 9.8037831 9.3523828 6.715947

## Oldpeak 10.1617047 14.3372404 17.5616061 15.403935

## Slope 2.6703016 9.3147738 8.5774100 6.752552

## Ca 21.1750038 20.2285033 26.7114362 18.388524

## Thal 18.0250446 16.6731737 22.5365589 18.351175

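Figure 41.9: Variable importance from the random forest on the Heart data

The most important variables are Ca, Thal and ChestPain.

41.4 Analysis of the Carseats sales data

Carseats is a data set in the ISLR package. Its basic structure is as follows:

str(Carseats)

summary(Carseats)

Split the Sales variable into two groups according to whether it exceeds 8, store the result in the variable High, and carry out a classification analysis with High as the response.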

## [1] 400 12

41.4.1 Classification tree

41.4.1.1 全体数据的判别树

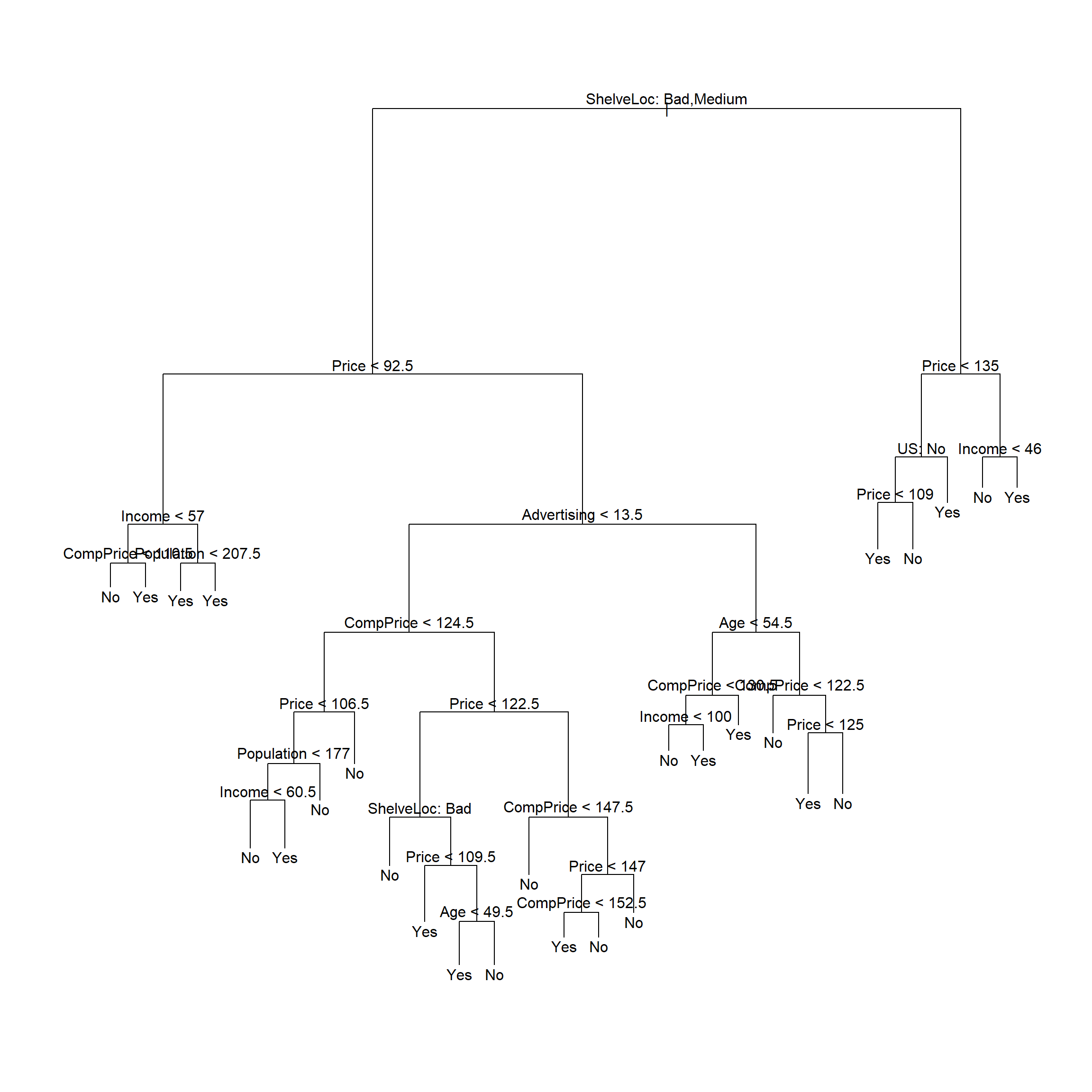

对全体数据建立未剪枝的判别树:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d)

## Variables actually used in tree construction:

## [1] "ShelveLoc" "Price" "Income" "CompPrice" "Population" "Advertising" "Age" "US"

## Number of terminal nodes: 27

## Residual mean deviance: 0.4575 = 170.7 / 373

## Misclassification error rate: 0.09 = 36 / 400

41.4.1.2 Splitting into training and test sets

Randomly assign half of the input data set to the training set and the other half to the test set:

set.seed(2)

train <- sample(nrow(d), size=round(nrow(d)/2))

test <- (-train)

test.high <- d[test, 'High']

Fit an unpruned classification tree on the training data:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d, subset = train)

## Variables actually used in tree construction:

## [1] "Price" "Population" "ShelveLoc" "Age" "Education" "CompPrice" "Advertising" "Income" "US"

## Number of terminal nodes: 21

## Residual mean deviance: 0.5543 = 99.22 / 179

## Misclassification error rate: 0.115 = 23 / 200

用未剪枝的树对测试集进行预测,并计算误判率:

## test.high

## pred2 No Yes

## No 104 33

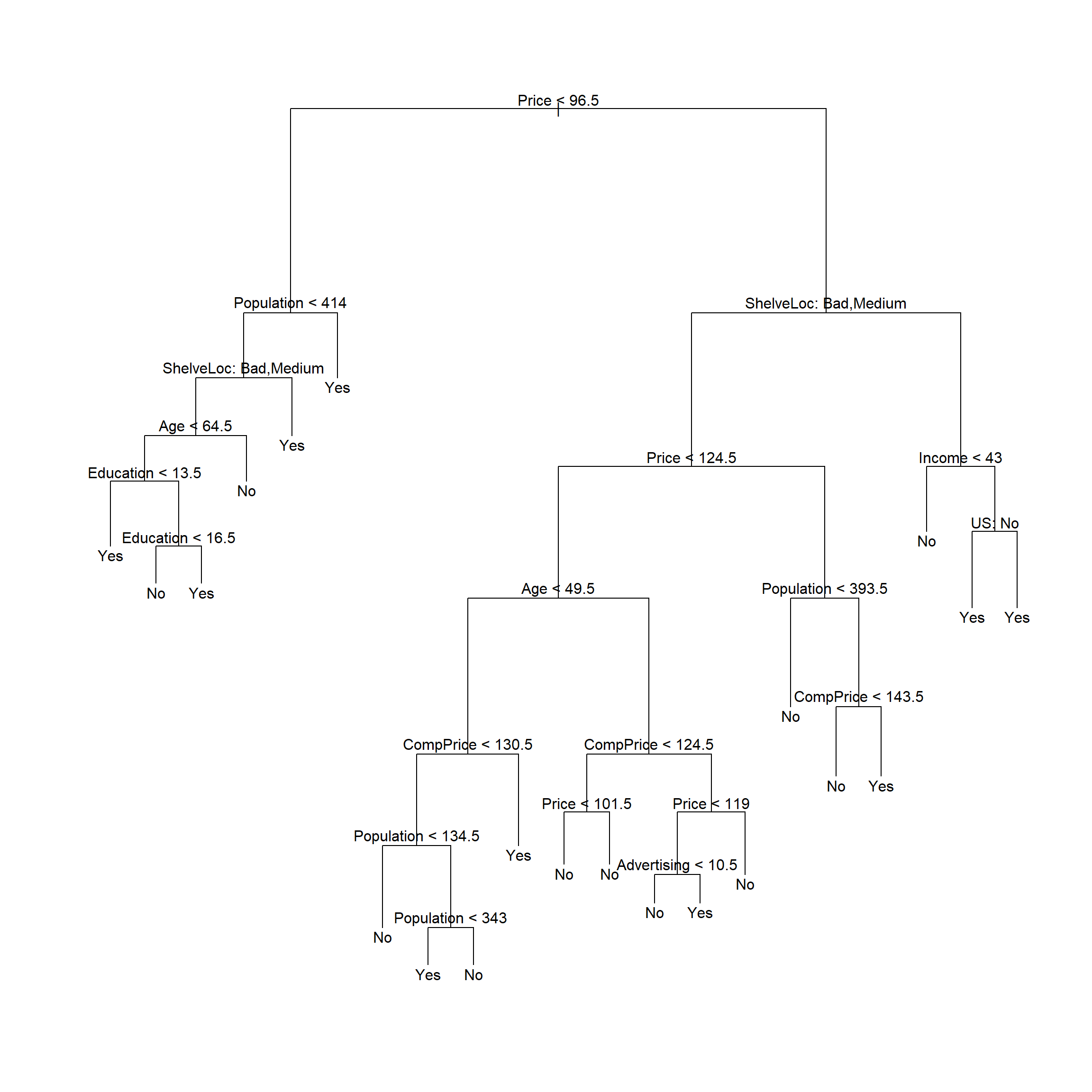

## Yes 13 50## [1] 0.2341.4.1.3 用交叉验证确定训练集的剪枝

## $size

## [1] 21 19 14 9 8 5 3 2 1

##

## $dev

## [1] 74 76 81 81 75 77 78 85 81

##

## $k

## [1] -Inf 0.0 1.0 1.4 2.0 3.0 4.0 9.0 18.0

##

## $method

## [1] "misclass"

##

## attr(,"class")

## [1] "prune" "tree.sequence"

The tree size selected automatically by cross-validation is 21.

Pruning:

##

## Classification tree:

## tree(formula = High ~ . - Sales, data = d, subset = train)

## Variables actually used in tree construction:

## [1] "Price" "Population" "ShelveLoc" "Age" "Education" "CompPrice" "Advertising" "Income" "US"

## Number of terminal nodes: 21

## Residual mean deviance: 0.5543 = 99.22 / 179

## Misclassification error rate: 0.115 = 23 / 200

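Predict the test set with the pruned tree and compute the misclassification rate:

## test.high

## pred3 No Yes

## No 104 32

## Yes 13 51

## [1] 0.225

41.4.2 Random forest

Fit a random forest on the training set: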

##

## Call:

## randomForest(formula = High ~ . - Sales, data = d, importance = TRUE, subset = train)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 25.5%

## Confusion matrix:

## No Yes class.error

## No 102 17 0.1428571

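## Yes 34 47 0.4197531

Here mtry takes its default value, which corresponds to a random forest.

Predict on the test set:

## test.high

## pred4 No Yes

## No 109 24

## Yes 8 59

## [1] 0.16

Note that the misclassification rate depends on how the data are split into training and test sets; a different split may give a quite different rate.

Fit a random forest on the full data set: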

##

## Call:

## randomForest(formula = High ~ . - Sales, data = d, importance = TRUE)

## Type of random forest: classification

## Number of trees: 500

## No. of variables tried at each split: 3

##

## OOB estimate of error rate: 18.25%

## Confusion matrix:

## No Yes class.error

## No 213 23 0.09745763

## Yes 50 114 0.30487805

Importance values of the variables and the corresponding plot:

## No Yes MeanDecreaseAccuracy MeanDecreaseGini

## CompPrice 11.0998129 5.4168875 11.65469579 21.820876

## Income 3.2897388 4.7177705 5.75865440 20.384692

## Advertising 10.8093624 16.0263308 18.31175988 23.350563

## Population -3.1872660 -1.7367798 -3.63402082 15.670307

## Price 30.0864270 28.7929995 37.44125656 43.492787

## ShelveLoc 30.2789749 33.8109594 39.67983055 30.053785

## Age 9.7116826 9.0261373 12.78808426 22.578000

## Education 0.2214031 -0.3203644 0.06365633 9.899447

## Urban 1.3826674 1.4199879 1.98859615 2.128048

## US 3.7289827 5.1909662 6.83788775 3.405420

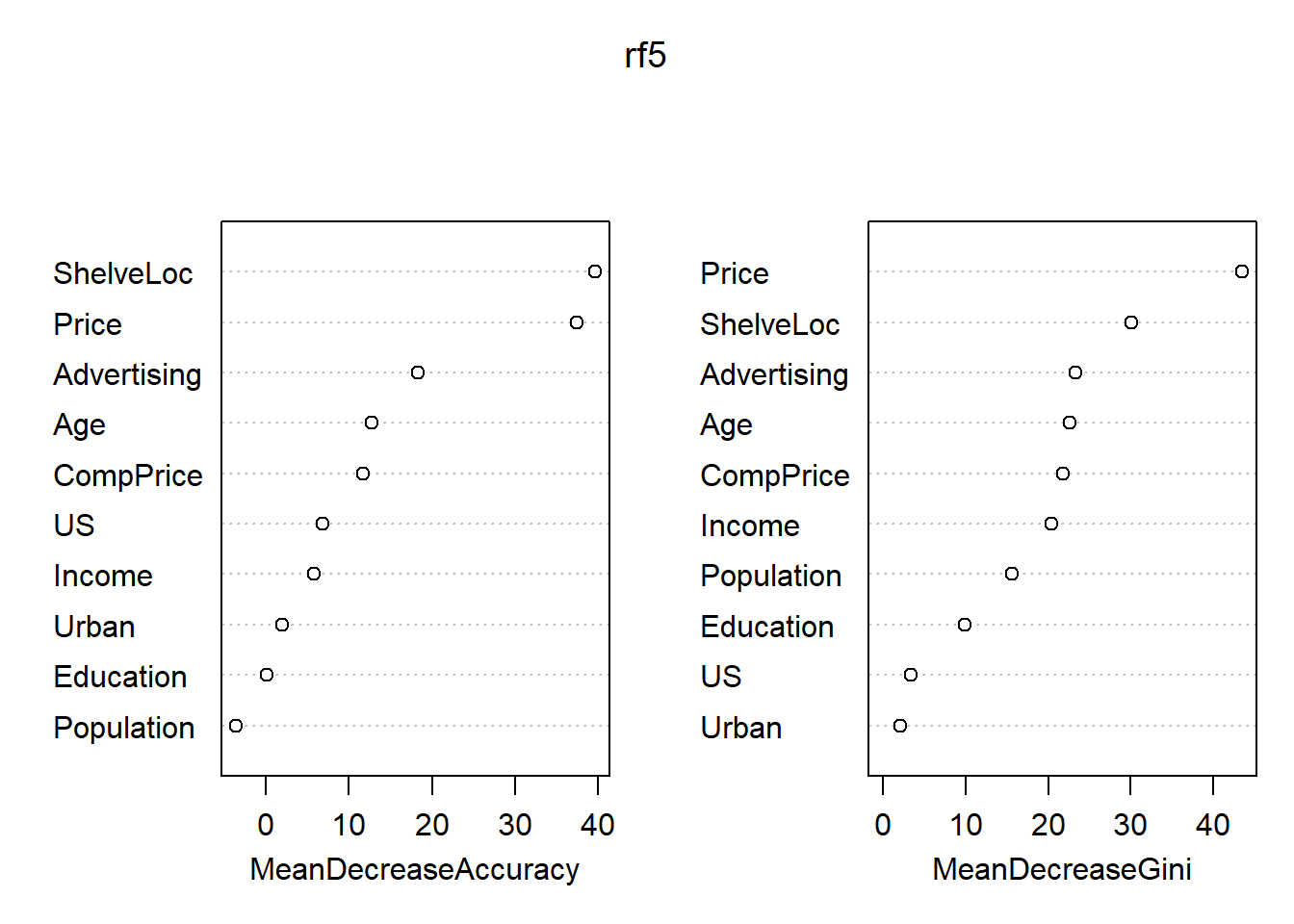

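Figure 41.10: Variable importance from the random forest on the Carseats data

The important predictors are Price and ShelveLoc, followed by Age, Advertising, CompPrice and Income.

41.5 Boston suburban housing price data

The Boston data set in the MASS package contains housing data for the suburbs of Boston. We build regression models with the median home value medv as the response. First remove missing values and store the result in the data set d:

Overview of the data set: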

## 'data.frame': 506 obs. of 14 variables:

## $ crim : num 0.00632 0.02731 0.02729 0.03237 0.06905 ...

## $ zn : num 18 0 0 0 0 0 12.5 12.5 12.5 12.5 ...

## $ indus : num 2.31 7.07 7.07 2.18 2.18 2.18 7.87 7.87 7.87 7.87 ...

## $ chas : int 0 0 0 0 0 0 0 0 0 0 ...

## $ nox : num 0.538 0.469 0.469 0.458 0.458 0.458 0.524 0.524 0.524 0.524 ...

## $ rm : num 6.58 6.42 7.18 7 7.15 ...

## $ age : num 65.2 78.9 61.1 45.8 54.2 58.7 66.6 96.1 100 85.9 ...

## $ dis : num 4.09 4.97 4.97 6.06 6.06 ...

## $ rad : int 1 2 2 3 3 3 5 5 5 5 ...

## $ tax : num 296 242 242 222 222 222 311 311 311 311 ...

## $ ptratio: num 15.3 17.8 17.8 18.7 18.7 18.7 15.2 15.2 15.2 15.2 ...

## $ black : num 397 397 393 395 397 ...

## $ lstat : num 4.98 9.14 4.03 2.94 5.33 ...

## $ medv : num 24 21.6 34.7 33.4 36.2 28.7 22.9 27.1 16.5 18.9 ...

## crim zn indus chas nox rm age dis rad tax ptratio black lstat medv

## Min. : 0.00632 Min. : 0.00 Min. : 0.46 Min. :0.00000 Min. :0.3850 Min. :3.561 Min. : 2.90 Min. : 1.130 Min. : 1.000 Min. :187.0 Min. :12.60 Min. : 0.32 Min. : 1.73 Min. : 5.00

## 1st Qu.: 0.08205 1st Qu.: 0.00 1st Qu.: 5.19 1st Qu.:0.00000 1st Qu.:0.4490 1st Qu.:5.886 1st Qu.: 45.02 1st Qu.: 2.100 1st Qu.: 4.000 1st Qu.:279.0 1st Qu.:17.40 1st Qu.:375.38 1st Qu.: 6.95 1st Qu.:17.02

## Median : 0.25651 Median : 0.00 Median : 9.69 Median :0.00000 Median :0.5380 Median :6.208 Median : 77.50 Median : 3.207 Median : 5.000 Median :330.0 Median :19.05 Median :391.44 Median :11.36 Median :21.20

## Mean : 3.61352 Mean : 11.36 Mean :11.14 Mean :0.06917 Mean :0.5547 Mean :6.285 Mean : 68.57 Mean : 3.795 Mean : 9.549 Mean :408.2 Mean :18.46 Mean :356.67 Mean :12.65 Mean :22.53

## 3rd Qu.: 3.67708 3rd Qu.: 12.50 3rd Qu.:18.10 3rd Qu.:0.00000 3rd Qu.:0.6240 3rd Qu.:6.623 3rd Qu.: 94.08 3rd Qu.: 5.188 3rd Qu.:24.000 3rd Qu.:666.0 3rd Qu.:20.20 3rd Qu.:396.23 3rd Qu.:16.95 3rd Qu.:25.00

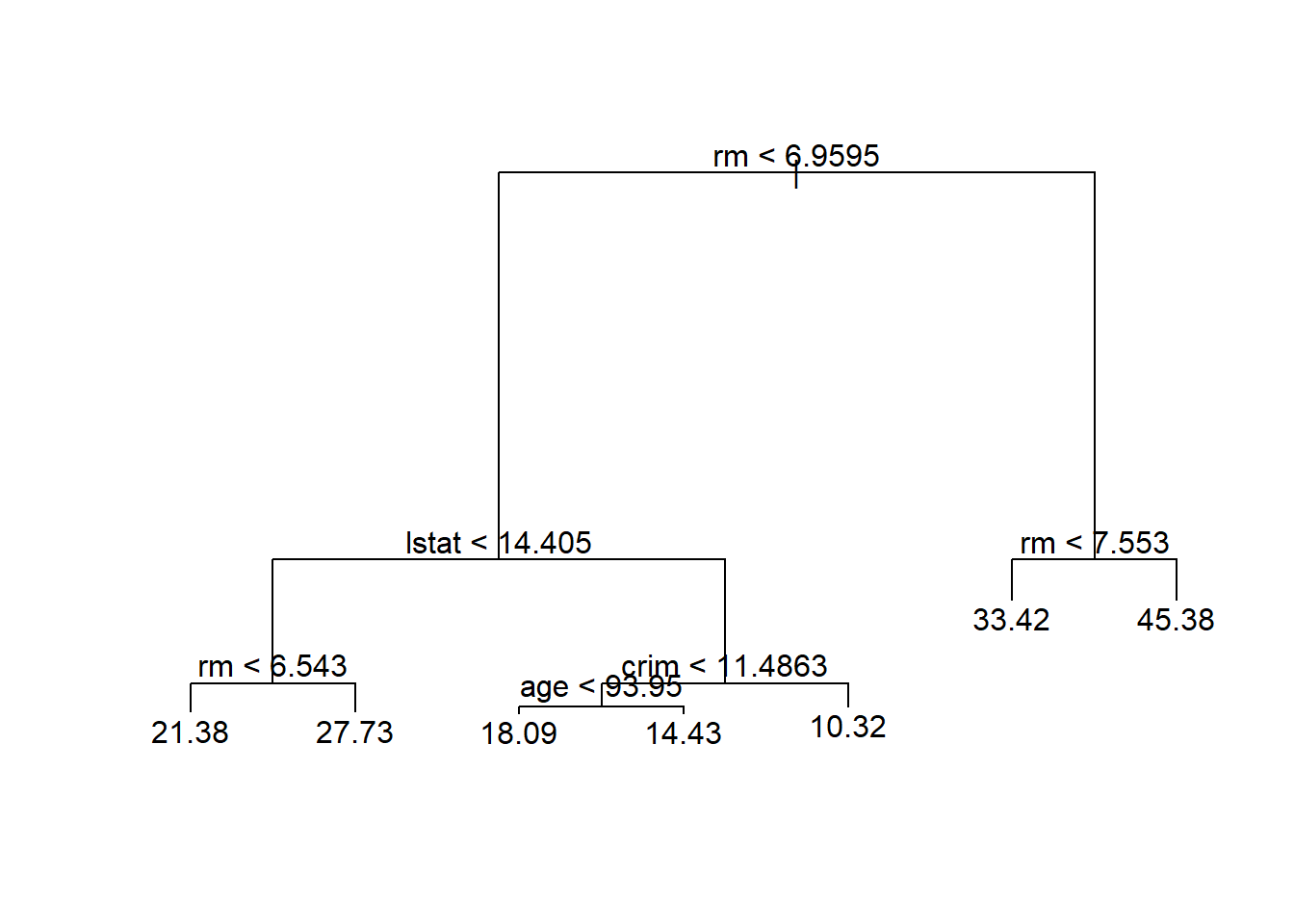

## Max. :88.97620 Max. :100.00 Max. :27.74 Max. :1.00000 Max. :0.8710 Max. :8.780 Max. :100.00 Max. :12.127 Max. :24.000 Max. :711.0 Max. :22.00 Max. :396.90 Max. :37.97 Max. :50.0041.5.1 回归树

41.5.1.1 划分训练集和测试集

对训练集建立未剪枝的树:

##

## Regression tree:

## tree(formula = medv ~ ., data = d, subset = train)

## Variables actually used in tree construction:

## [1] "rm" "lstat" "crim" "age"

## Number of terminal nodes: 7

## Residual mean deviance: 10.38 = 2555 / 246

## Distribution of residuals:

## Min. 1st Qu. Median Mean 3rd Qu. Max.

## -10.1800 -1.7770 -0.1775 0.0000 1.9230 16.5800

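Predict the test set with the unpruned tree and compute the mean squared error:

## [1] 35.28688

41.5.2 Bagging

Computed with the randomForest package. Setting the argument mtry to the number of predictors gives bagging. Fit on the training set: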

set.seed(1)

bag1 <- randomForest(

medv ~ ., data=d, subset=train,

mtry=ncol(d)-1, importance=TRUE)

bag1

##

## Call:

## randomForest(formula = medv ~ ., data = d, mtry = ncol(d) - 1, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 13

##

## Mean of squared residuals: 11.39601

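## % Var explained: 85.17

Compute the mean squared error of bagging on the test set:

## [1] 23.59273

This is a clear improvement over the single tree.

41.5.3 Random forest

Computed with the randomForest package. Leaving the argument mtry at its default gives a random forest. Fit on the training set: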

##

## Call:

## randomForest(formula = medv ~ ., data = d, importance = TRUE, subset = train)

## Type of random forest: regression

## Number of trees: 500

## No. of variables tried at each split: 4

##

## Mean of squared residuals: 10.23441

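## % Var explained: 86.69

Compute the mean squared error of the random forest on the test set:

## [1] 18.11686

This is a clear improvement over the single tree, and also somewhat better than bagging.

Importance values of the variables and the corresponding plot: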

## %IncMSE IncNodePurity

## crim 15.372334 1220.14856

## zn 3.335435 194.85945

## indus 6.964559 1021.94751

## chas 2.059298 69.68099

## nox 14.009761 1005.14707

## rm 28.693900 6162.30720

## age 13.832143 708.55138

## dis 10.317731 852.33701

## rad 4.390624 162.22597

## tax 7.536563 564.60422

## ptratio 9.333716 1163.39624

## black 8.341316 355.62445

## lstat 27.132450 5549.25088

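Figure 41.11: Variable importance from the random forest on the Boston data

41.5.4 Boosting

Boosting is another method that applies to many regression and classification problems. The idea is to fit the response with a fairly simple model, compute the residuals, and then fit another simple model with the residuals as the new response. The two regression functions are combined in a weighted sum, new residuals are computed, and these again serve as the response for the next model. Repeating this update yields a final regression function that is a weighted sum of many regression functions.

The weight is usually small, so that the fit approaches the data slowly. In statistical learning, slow learning generally gives better results.

The boosting algorithm: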

(1) On the training set, set \(r_i = y_i\) and initialize the regression function as \(\hat f(\cdot)=0\).

(2) For \(b=1,2,\dots,B\), repeat:

- (a) With the training-set predictors as inputs and \(r\) as the response, fit a small regression tree with only \(d\) splits, denoted \(\hat f_b\);

- (b) Update the regression function by adding a shrunken version of the new tree: \[\begin{aligned} \hat f(x) \leftarrow \hat f(x) + \lambda \hat f_b(x); \end{aligned}\]

- (c) Update the residuals: \[\begin{aligned} r_i \leftarrow r_i - \lambda \hat f_b(x_i). \end{aligned}\]

(3) The boosted regression function is \[\begin{aligned} \hat f(x) = \sum_{b=1}^B \lambda \hat f_b(x) . \end{aligned}\]

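The number of trees \(B\) determines how many regression functions enter the weighted sum. Too large a \(B\) can also overfit, but the problem is not serious as long as \(B\) is not too large. \(B\) can be chosen by cross-validation.

The shrinkage parameter \(\lambda\) is a small positive number that controls the learning rate. Values such as 0.01 or 0.001 are common, and the right choice depends on the problem. A very small \(\lambda\) requires a very large \(B\).

The third parameter controls the complexity of each regression function. For regression trees this is the size of the tree, expressed as its depth \(d\). With depth 1 each tree uses a single predictor and a single split. A sum of many such trees is an additive model in the individual predictors with no interaction effects, and such additive models often work well. With \(d>1\) interactions enter. For example, with \(d=2\) a tree can use two predictors, so a leaf prediction depends on at most two tests on up to two predictors. Because trees are nonlinear, a sum of many depth-2 trees can capture nonlinear pairwise interactions between predictors.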
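To make steps (1) to (3) concrete, here is a minimal base-R sketch of boosting with one-split trees (\(d=1\)) on a single predictor. It is an illustration under stated assumptions, not the gbm implementation: the helper names `fit_stump`, `predict_stump`, `boost_stumps` and `predict_boost`, the simulated sine data, and the values \(B=200\), \(\lambda=0.1\) are all made up for this example.

```r
# Fit a one-split regression tree (stump) to (x, r) by exhaustive search
# over the midpoints between neighbouring distinct x values.
fit_stump <- function(x, r) {
  xs <- sort(unique(x))
  cuts <- (head(xs, -1) + tail(xs, -1)) / 2
  best <- list(sse = Inf)
  for (s in cuts) {
    left <- x <= s
    ml <- mean(r[left])
    mr <- mean(r[!left])
    sse <- sum((r[left] - ml)^2) + sum((r[!left] - mr)^2)
    if (sse < best$sse) best <- list(sse = sse, cut = s, ml = ml, mr = mr)
  }
  best
}

predict_stump <- function(stump, x) ifelse(x <= stump$cut, stump$ml, stump$mr)

boost_stumps <- function(x, y, B = 200, lambda = 0.1) {
  r <- y                                  # step (1): r_i = y_i, f-hat = 0
  stumps <- vector("list", B)
  for (b in seq_len(B)) {
    stumps[[b]] <- fit_stump(x, r)        # step (2a): fit a small tree to r
    r <- r - lambda * predict_stump(stumps[[b]], x)  # step (2c)
  }
  list(stumps = stumps, lambda = lambda)  # step (2b) is implicit in the sum below
}

# Step (3): f-hat(x) = sum over b of lambda * f_b(x).
predict_boost <- function(fit, x) {
  Reduce(`+`, lapply(fit$stumps, function(s) fit$lambda * predict_stump(s, x)))
}

# Simulated data: a sine curve plus noise.
set.seed(1)
x <- runif(200, 0, 2 * pi)
y <- sin(x) + rnorm(200, sd = 0.2)

fit <- boost_stumps(x, y, B = 200, lambda = 0.1)
mse_boost <- mean((y - predict_boost(fit, x))^2)  # training MSE after boosting
mse_null  <- mean((y - mean(y))^2)                # training MSE of a constant fit
c(boosted = mse_boost, constant = mse_null)
```

Only training error is shown here. In practice \(B\) would be chosen on held-out data or by cross-validation, as noted above, because the training error keeps falling as \(B\) grows.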

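Use the gbm package. Its main arguments are:

interaction.depth is the depth (complexity) of each tree.

n.trees is the number of trees that are summed.

shrinkage is the learning rate, the \(\lambda\) of the algorithm.

n.minobsinnode is the minimum number of observations in each leaf. Setting it prevents a rule from being built on too few training cases.

Fit on the training set: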

set.seed(1)

bst1 <- gbm(

medv ~ .,

data=d[train,],

distribution='gaussian',

n.trees=5000,

interaction.depth=4)

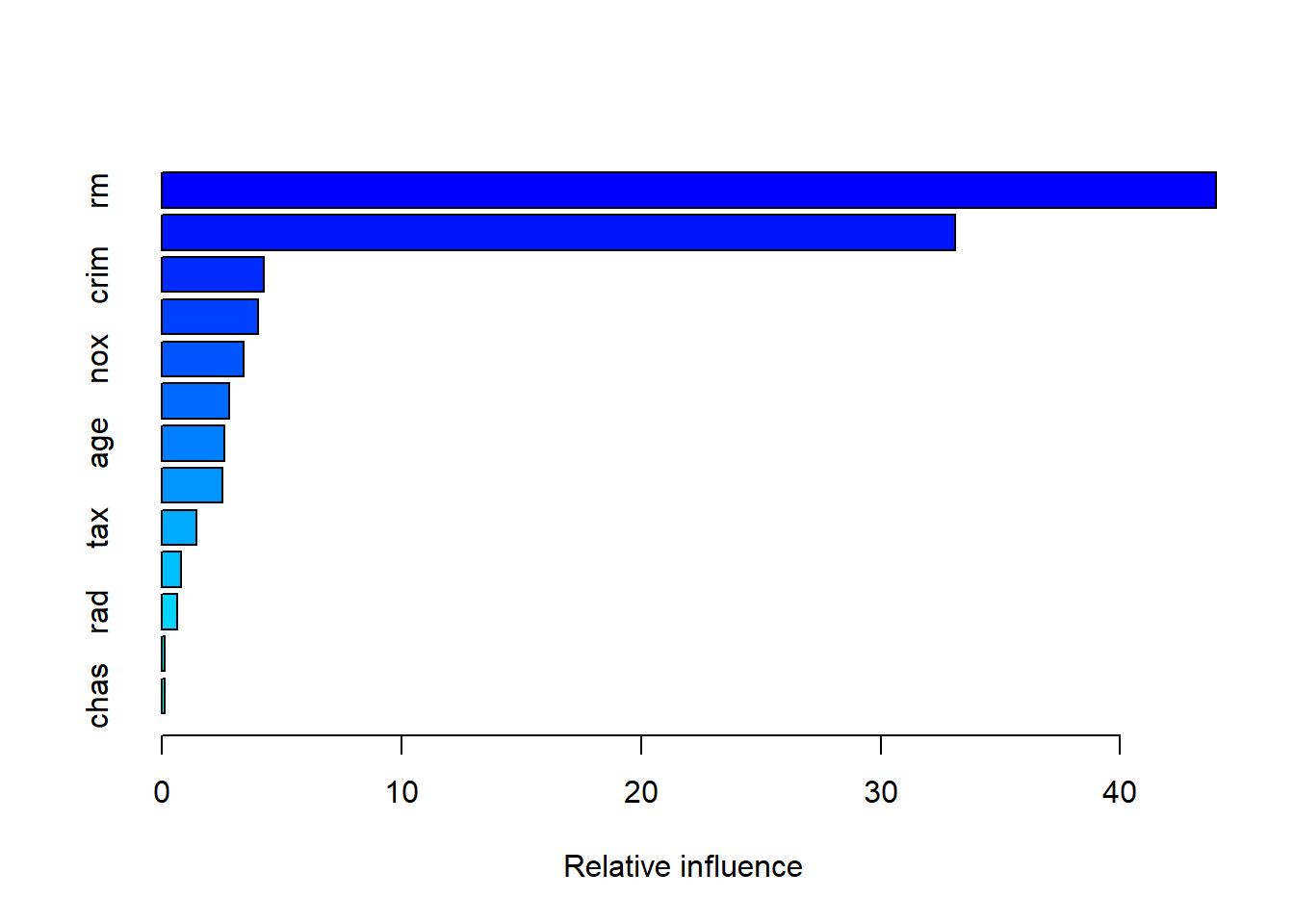

summary(bst1)

## var rel.inf

## rm rm 43.9919329

## lstat lstat 33.1216941

## crim crim 4.2604167

## dis dis 4.0111090

## nox nox 3.4353017

## black black 2.8267554

## age age 2.6113938

## ptratio ptratio 2.5403035

## tax tax 1.4565654

## indus indus 0.8008740

## rad rad 0.6546400

## zn zn 0.1446149

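## chas chas 0.1443986

rm and lstat are the most important variables.

Predict on the test set and compute the mean squared error:

## [1] 18.84709

This is close to the random forest result.

If the learning rate is increased: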

bst2 <- gbm(

medv ~ .,

data=d[train,],

distribution='gaussian',

n.trees=5000,

interaction.depth=4,

shrinkage=0.2)

yhat <- predict(

bst2,

newdata=d[test,],

n.trees=5000)

mean( (yhat - d[test, 'medv'])^2 )

## [1] 18.33455

The mean squared error improves slightly.

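41.6 Support vector machines

The support vector machine is a supervised learning method invented by computer scientists in the 1990s. It is widely applicable and gives high predictive accuracy.

The support vector machine uses Hilbert-space methods to extend a linear method to nonlinear problems. For the linear support vector classifier, the inner product on \(\mathbb R^p\) lets the linear decision function be written in the following form:

\[\begin{aligned} f(\boldsymbol x) = \beta_0 + \sum_{i=1}^n \alpha_i \langle \boldsymbol x, \boldsymbol x_i \rangle \end{aligned}\] where \(\beta_0, \alpha_1, \dots, \alpha_n\) are parameters to be determined. Estimating the parameters does not require the individual values of the \(\boldsymbol x_i\), only their pairwise inner products. Moreover, in the decision function only the \(\alpha_i\) of the support vectors are nonzero. Writing \(\mathcal S\) for the set of support vectors, the linear decision function is \[\begin{aligned} f(\boldsymbol x) = \beta_0 + \sum_{i \in \mathcal S} \alpha_i \langle \boldsymbol x, \boldsymbol x_i \rangle \end{aligned}\]

The support vector machine generalizes the inner product on \(\mathbb R^p\) to the value of a kernel function: \[\begin{aligned} K(\boldsymbol x, \boldsymbol x') \end{aligned}\] The kernel \(K(\boldsymbol x, \boldsymbol x')\), \(\boldsymbol x, \boldsymbol x' \in \mathbb R^p\), is a function that measures the similarity of two observations \(\boldsymbol x, \boldsymbol x'\). For example, taking \[\begin{aligned} K(\boldsymbol x, \boldsymbol x') = \sum_{j=1}^p x_j x_j' \end{aligned}\] recovers the linear support vector classifier.

There are many choices of kernel. For example, \[\begin{aligned} K(\boldsymbol x, \boldsymbol x') = \left\{ 1 + \sum_{j=1}^p x_j x_j' \right\}^d \end{aligned}\] where \(d>1\) is a positive integer, is called the polynomial kernel. It yields a classifier with polynomial boundaries, which in essence adds higher-order and cross-product terms to the linear support vector method.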

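After replacing the inner product by the kernel, the decision function of the classifier becomes \[\begin{aligned} f(\boldsymbol x) = \beta_0 + \sum_{i \in \mathcal S} \alpha_i K(\boldsymbol x, \boldsymbol x_i) \end{aligned}\]

Another common kernel is the radial kernel, defined as \[\begin{aligned} K(\boldsymbol x, \boldsymbol x') = \exp\left\{ - \gamma \sum_{j=1}^p (x_j - x_j')^2 \right\} \end{aligned}\] where \(\gamma\) is a positive constant. The kernel value depends only on the Euclidean distance between \(\boldsymbol x\) and \(\boldsymbol x'\): it stays constant as \(\boldsymbol x'\) moves over a hypersphere centered at \(\boldsymbol x\), and it decreases as the distance grows.

With the radial kernel, the decision function is \[\begin{aligned} f(\boldsymbol x) = \beta_0 + \sum_{i \in \mathcal S} \alpha_i \exp\left\{ - \gamma \sum_{j=1}^p (x_{j} - x_{ij})^2 \right\} \end{aligned}\] For an observation \(\boldsymbol x^*\) to be classified, if \(\boldsymbol x^*\) is far from the training observation \(\boldsymbol x_i\), then \(K(\boldsymbol x^*, \boldsymbol x_i)\) is very small and \(\boldsymbol x_i\) has essentially no influence on the classification of \(\boldsymbol x^*\). This makes the radial-kernel method strongly local: only points close to \(\boldsymbol x^*\) affect its classification.

Why compute the \(\binom{n}{2}\) pairwise kernel values instead of adding nonlinear terms directly? The reason is that the cost of computing these kernel values is fixed, whereas adding many nonlinear terms can be very expensive. Moreover, for some kernels, such as the radial kernel, the corresponding feature space is infinite-dimensional, so adding dimensions explicitly is not an option.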
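The three kernels discussed above can be written down in a few lines of base R. This is a sketch for illustration only: the function names `linear_kernel`, `poly_kernel`, `radial_kernel` and `gram`, the three toy points in `X`, and the value gamma = 0.5 are assumptions made for this example, not part of e1071.

```r
# Kernels for two numeric vectors x and z of equal length.
linear_kernel <- function(x, z) sum(x * z)
poly_kernel   <- function(x, z, d = 2) (1 + sum(x * z))^d
radial_kernel <- function(x, z, gamma = 1) exp(-gamma * sum((x - z)^2))

# Matrix of pairwise kernel values for the rows of X.
gram <- function(X, kernel, ...) {
  n <- nrow(X)
  K <- matrix(0, n, n)
  for (i in seq_len(n)) {
    for (j in seq_len(n)) {
      K[i, j] <- kernel(X[i, ], X[j, ], ...)
    }
  }
  K
}

# Three toy observations in R^2.
X <- rbind(c(0, 0), c(1, 0), c(0, 2))

K_lin <- gram(X, linear_kernel)               # equals X %*% t(X)
K_rad <- gram(X, radial_kernel, gamma = 0.5)  # decays with squared distance

K_rad
```

In `K_rad` the diagonal is 1, and the entry for the pair at distance 2 is smaller than the entry for the pair at distance 1, which is the locality property described above.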

The theory of support vector machines is based on reproducing kernel Hilbert spaces (RKHS); see (Trevor Hastie 2009), Sections 5.8 and 12.3.3.

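41.6.1 Support vector machines on the Heart data

Consider classification for the heart disease data Heart. Of the 297 observations, 207 are selected at random as the training set and the remaining 90 form the test set.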

set.seed(1)

Heart <- read.csv(

"data/Heart.csv", header=TRUE, row.names=1,

stringsAsFactors=TRUE)

d <- na.omit(Heart)

train <- sample(nrow(d), size=207)

test <- -train

d[["AHD"]] <- factor(d[["AHD"]], levels=c("No", "Yes"))

Define a function for the misclassification rate:

classifier.error <- function(truth, pred){

tab1 <- table(truth, pred)

err <- 1 - sum(diag(tab1))/sum(c(tab1))

err

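}

41.6.1.1 Linear SVM

The support vector classifier is the SVM with a polynomial kernel of degree \(d=1\).

It needs a tuning parameter cost: the larger cost is, the narrower the margin and the greater the risk of overfitting.

First try the support vector classifier with an arbitrary value cost=1: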

res.svc <- svm(AHD ~ ., data=d[train,], kernel="linear", cost=1, scale=TRUE)

fit.svc <- predict(res.svc)

summary(res.svc)

##

## Call:

## svm(formula = AHD ~ ., data = d[train, ], kernel = "linear", cost = 1, scale = TRUE)

##

##

## Parameters:

## SVM-Type: C-classification

## SVM-Kernel: linear

## cost: 1

##

## Number of Support Vectors: 79

##

## ( 38 41 )

##

##

## Number of Classes: 2

##

## Levels:

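## No Yes

Compute the fitted values and the misclassification rate:

## fitted

## truth No Yes

## No 105 9

## Yes 18 75

## SVC error rate: 0.13

The e1071 package provides the tune() function, which can select a good tuning parameter by ten-fold cross-validation on the training set.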

set.seed(101)

res.tune <- tune(svm, AHD ~ ., data=d[train,], kernel="linear", scale=TRUE,

ranges=list(cost=c(0.001, 0.01, 0.1, 1, 5, 10, 100, 1000)))

summary(res.tune)

##

## Parameter tuning of 'svm':

##

## - sampling method: 10-fold cross validation

##

## - best parameters:

## cost

## 0.1

##

## - best performance: 0.1542857

##

## - Detailed performance results:

## cost error dispersion

## 1 1e-03 0.4450000 0.08509809

## 2 1e-02 0.1695238 0.07062868

## 3 1e-01 0.1542857 0.07006458

## 4 1e+00 0.1590476 0.07793796

## 5 5e+00 0.1590476 0.08709789

## 6 1e+01 0.1590476 0.08709789

## 7 1e+02 0.1590476 0.08709789

## 8 1e+03 0.1590476 0.08709789

The best tuning parameter found is 0.1. The model fitted with it is available as res.tune$best.model:

##

## Call:

## best.tune(method = svm, train.x = AHD ~ ., data = d[train, ], ranges = list(cost = c(0.001, 0.01, 0.1, 1, 5, 10, 100, 1000)), kernel = "linear", scale = TRUE)

##

##

## Parameters:

## SVM-Type: C-classification

## SVM-Kernel: linear

## cost: 0.1

##

## Number of Support Vectors: 90

##

## ( 44 46 )

##

##

## Number of Classes: 2

##

## Levels:

## No Yes

Evaluate on the test set:

pred.svc <- predict(res.tune$best.model, newdata=d[test,])

tab1 <- table(truth=d[test,"AHD"], predict=pred.svc); tab1

## predict

## truth No Yes

## No 43 3

## Yes 11 33

## SVC error rate: 0.16

41.6.1.2 Polynomial-kernel SVM

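# Note: svm() has no `order` argument; it is absorbed by `...` and ignored,
# so the default degree=3 is used (see "degree: 3" in the summary below).
# Pass degree=2 to obtain a second-order polynomial kernel.
res.svm1 <- svm(AHD ~ ., data=d[train,], kernel="polynomial",

order=2, cost=0.1, scale=TRUE)

fit.svm1 <- predict(res.svm1)

summary(res.svm1)

##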

## Call:

## svm(formula = AHD ~ ., data = d[train, ], kernel = "polynomial", order = 2, cost = 0.1, scale = TRUE)

##

##

## Parameters:

## SVM-Type: C-classification

## SVM-Kernel: polynomial

## cost: 0.1

## degree: 3

## coef.0: 0

##

## Number of Support Vectors: 187

##

## ( 92 95 )

##

##

## Number of Classes: 2

##

## Levels:

## No Yes

## fitted

## truth No Yes

## No 114 0

## Yes 82 11

## Second-order polynomial-kernel SVM error rate: 0.4

Try to find the best value of the tuning parameter cost:

set.seed(101)

# `order` is not an svm() argument and is ignored, so the default degree=3 applies.
res.tune2 <- tune(svm, AHD ~ ., data=d[train,], kernel="polynomial",

order=2, scale=TRUE,

ranges=list(cost=c(0.001, 0.01, 0.1, 1, 5, 10, 100, 1000)))

summary(res.tune2)

##

## Parameter tuning of 'svm':

##

## - sampling method: 10-fold cross validation

##

## - best parameters:

## cost

## 5

##

## - best performance: 0.2130952

##

## - Detailed performance results:

## cost error dispersion

## 1 1e-03 0.4500000 0.08022549

## 2 1e-02 0.4500000 0.08022549

## 3 1e-01 0.4111905 0.09215957

## 4 1e+00 0.2185714 0.09094005

## 5 5e+00 0.2130952 0.09790737

## 6 1e+01 0.2180952 0.07948562

## 7 1e+02 0.2807143 0.09539966

## 8 1e+03 0.2807143 0.09539966

fit.svm2 <- predict(res.tune2$best.model)

tab1 <- table(truth=d[train,"AHD"], fitted=fit.svm2); tab1

## fitted

## truth No Yes

## No 111 3

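## Yes 4 89

## Second-order polynomial-kernel SVM with tuned cost, error rate: 0.03

Now check how the model with the tuned parameter performs on the test set:

pred.svm2 <- predict(res.tune2$best.model, d[test,])

tab1 <- table(truth=d[test,"AHD"], predict=pred.svm2); tab1

## predict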

## truth No Yes

## No 43 3

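## Yes 10 34

## Second-order polynomial-kernel SVM with tuned cost, test-set error rate: 0.14

The test-set performance is close to that of the linear method.

41.6.1.3 Radial-kernel SVM

The radial kernel requires a value for the parameter \(\gamma\). Take gamma=0.1.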

res.svm3 <- svm(AHD ~ ., data=d[train,], kernel="radial",

gamma=0.1, cost=0.1, scale=TRUE)

fit.svm3 <- predict(res.svm3)

summary(res.svm3)

##

## Call:

## svm(formula = AHD ~ ., data = d[train, ], kernel = "radial", gamma = 0.1, cost = 0.1, scale = TRUE)

##

##

## Parameters:

## SVM-Type: C-classification

## SVM-Kernel: radial

## cost: 0.1

##

## Number of Support Vectors: 179

##

## ( 89 90 )

##

##

## Number of Classes: 2

##

## Levels:

## No Yes

## fitted

## truth No Yes

## No 108 6

## Yes 26 67

## Radial-kernel (gamma=0.1, cost=0.1) SVM error rate: 0.15

Select the best cost and gamma tuning parameters:

set.seed(101)

res.tune4 <- tune(svm, AHD ~ ., data=d[train,], kernel="radial",

scale=TRUE,

ranges=list(cost=c(0.001, 0.01, 0.1, 1, 5, 10, 100, 1000),

gamma=c(0.1, 0.01, 0.001)))

summary(res.tune4)

##

## Parameter tuning of 'svm':

##

## - sampling method: 10-fold cross validation

##

## - best parameters:

## cost gamma

## 100 0.001

##

## - best performance: 0.1492857

##

## - Detailed performance results:

## cost gamma error dispersion

## 1 1e-03 0.100 0.4500000 0.08022549

## 2 1e-02 0.100 0.4500000 0.08022549

## 3 1e-01 0.100 0.2235714 0.09912346

## 4 1e+00 0.100 0.1788095 0.08490543

## 5 5e+00 0.100 0.1835714 0.06267781

## 6 1e+01 0.100 0.1835714 0.07375788

## 7 1e+02 0.100 0.1933333 0.09294732

## 8 1e+03 0.100 0.1933333 0.09294732

## 9 1e-03 0.010 0.4500000 0.08022549

## 10 1e-02 0.010 0.4500000 0.08022549

## 11 1e-01 0.010 0.3147619 0.11998992

## 12 1e+00 0.010 0.1647619 0.06992960

## 13 5e+00 0.010 0.1547619 0.07819776

## 14 1e+01 0.010 0.1547619 0.08135598

## 15 1e+02 0.010 0.2126190 0.06443790

## 16 1e+03 0.010 0.2409524 0.08621108

## 17 1e-03 0.001 0.4500000 0.08022549

## 18 1e-02 0.001 0.4500000 0.08022549

## 19 1e-01 0.001 0.4500000 0.08022549

## 20 1e+00 0.001 0.2138095 0.11215945

## 21 5e+00 0.001 0.1695238 0.07062868

## 22 1e+01 0.001 0.1840476 0.08321647

## 23 1e+02 0.001 0.1492857 0.08228019

## 24 1e+03 0.001 0.1640476 0.07494392

fit.svm4 <- predict(res.tune4$best.model)

tab1 <- table(truth=d[train,"AHD"], fitted=fit.svm4); tab1

## fitted

## truth No Yes

## No 107 7

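## Yes 18 75

## Radial-kernel SVM with tuned parameters, error rate: 0.12

Now check how the model with the tuned parameters performs on the test set:

pred.svm4 <- predict(res.tune4$best.model, d[test,])

# Tabulate pred.svm4, the radial-kernel prediction. The table printed below
# repeats the polynomial-kernel result because it was produced from pred.svm2.
tab1 <- table(truth=d[test,"AHD"], predict=pred.svm4); tab1

## predict

## truth No Yes

## No 43 3

## Yes 10 34

## Radial-kernel SVM with tuned parameters, test-set error rate: 0.14

The result is close to that of the linear method.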

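41.7 Statistical learning with the H2O package

H2O is an open-source, integrated machine learning environment. It is written in Java, supports parallel processing, and handles large data sets. The h2o extension package for R provides an interface to the H2O software, giving access to many machine learning methods through a fairly uniform interface.

H2O uses its own data format. An R data.frame or data.table can be converted to H2O's H2OFrame format with as.h2o().

The R package talks to a running H2O instance through a network service; R itself neither performs the computation nor stores the data.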

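41.7.1 Installation

If an older version of H2O is installed, uninstall it first. The h2o package also depends on the RCurl and jsonlite packages, which should be installed beforehand.

H2O requires Java, so a Java environment must be installed first. A 64-bit JRE (Java runtime environment) is sufficient; a 64-bit JDK additionally supports compiling Java source code and testing H2O. On Windows, the download link is:

H2O has to be compiled from source, so on Windows the RTools toolchain must be installed.

Download the source package of H2O from the following link:

Place it in the current working directory and install it with the following command; the installation involves compilation:

41.7.2 Starting and shutting down H2O

Start: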

library(h2o)

h2o.init(

nthreads = -1, max_mem_size = '16g',

ip = "127.0.0.1", port = 54321)

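h2o.no_progress()

Because this starts a local service, H2O should be shut down explicitly when finished:

41.7.3 Example: the Hitters data

Convert the data to H2O format:

Split into training and test sets: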

splits <- h2o.splitFrame(

data = hf_hit,

ratios = c(0.60), seed = 1234)

train <- splits[[1]]

test <- splits[[2]]

Set the predictors and the response:

Use the GBM method. First test it with manually specified tuning parameters:

gbm1 <- h2o.gbm(

y=y, x=x,

training_frame = train,

ntrees = 10,

max_depth = 2,

min_rows = 3,

learn_rate = 0.1,

distribution= "gaussian")

Display of the iteration history:

Results omitted.

Performance on the training set:

H2ORegressionMetrics: gbm

** Reported on training data. **

MSE: 98531.6

RMSE: 313.8974

MAE: 223.4093

RMSLE: 0.6764681

Mean Residual Deviance : 98531.6

The RMSE on the training set is 314.

Variable importance:

Variable Importances:

variable relative_importance scaled_importance percentage

1 CRBI 34232028.000000 1.000000 0.288900

2 CHits 22870448.000000 0.668101 0.193014

3 Walks 21664550.000000 0.632874 0.182837

4 Runs 11754988.000000 0.343392 0.099206

5 CAtBat 8579465.000000 0.250627 0.072406

6 AtBat 5039900.000000 0.147228 0.042534

7 Hits 4386617.000000 0.128144 0.037021

8 CHmRun 3640191.250000 0.106339 0.030721

9 CRuns 3588647.750000 0.104833 0.030286

10 RBI 1586647.750000 0.046350 0.013390

11 CWalks 1147642.875000 0.033525 0.009685

12 HmRun 0.000000 0.000000 0.000000

13 Years 0.000000 0.000000 0.000000

14 League 0.000000 0.000000 0.000000

15 Division 0.000000 0.000000 0.000000

16 PutOuts 0.000000 0.000000 0.000000

17 Assists 0.000000 0.000000 0.000000

18 Errors 0.000000 0.000000 0.000000

19 NewLeague 0.000000 0.000000 0.000000

The scaled_importance column of the result can serve as the measure of each variable's importance.
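The scaled_importance and percentage columns appear to be simple rescalings of relative_importance. The sketch below assumes scaled = relative / max and percentage = relative / sum, which agrees with the printed values; the vector name `rel_imp` and the derived names are illustrative, and the numbers are copied from the table above (variables with zero importance are left out).

```r
# Non-zero relative importances copied from the table above.
rel_imp <- c(CRBI = 34232028, CHits = 22870448, Walks = 21664550,
             Runs = 11754988, CAtBat = 8579465, AtBat = 5039900,
             Hits = 4386617, CHmRun = 3640191.25, CRuns = 3588647.75,
             RBI = 1586647.75, CWalks = 1147642.875)

scaled_imp <- rel_imp / max(rel_imp)  # largest importance rescaled to 1
pct_imp    <- rel_imp / sum(rel_imp)  # shares that add up to 1

round(cbind(scaled_imp, pct_imp), 6)
```

For instance, CHits has about 0.668 of the importance of CRBI, and CRBI accounts for about 28.9% of the total.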

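It can be shown as a bar chart:

Plot omitted.

Next we tune the parameters. H2O offers two tuning strategies. The first forms all combinations of the candidate values of each parameter, a full factorial design called a grid, trains one model per combination, and compares the models by cross-validation or on a validation set. The second forms the grid and then samples combinations from it uniformly at random. It accepts a time limit and finds a good model within that limit, so its grid can be denser.

For example, if parameter \(A\) can take \(0.5, 1.5\), \(B\) can take \(10, 20\), and \(C\) can take \(0.01, 0.1\), then the grid (the full design) is: \[ \begin{array}{rlll} \text{NO} & A & B & C \\ 1 & 0.5 & 10 & 0.01 \\ 2 & 0.5 & 10 & 0.1 \\ 3 & 0.5 & 20 & 0.01 \\ 4 & 0.5 & 20 & 0.1 \\ 5 & 1.5 & 10 & 0.01 \\ 6 & 1.5 & 10 & 0.1 \\ 7 & 1.5 & 20 & 0.01 \\ 8 & 1.5 & 20 & 0.1 \end{array} \]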
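The grid in the table can be generated in base R with expand.grid(). This is only a sketch of the idea, not how H2O builds its grids internally; the object name `grid` is illustrative.

```r
# expand.grid() varies its first argument fastest, so list C first and
# then reorder the columns to reproduce the row order of the table above.
grid <- expand.grid(C = c(0.01, 0.1), B = c(10, 20), A = c(0.5, 1.5))
grid <- grid[, c("A", "B", "C")]

grid
nrow(grid)  # 2 * 2 * 2 combinations
```

Each row is one parameter combination for which a model would be trained.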

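Start with a search over a small grid, comparing models by cross-validation (nfolds = 5). Only the number of trees, the maximum tree depth, the minimum number of rows per leaf and the learning rate are varied.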

time0 <- proc.time()[3]

gbm_params1 <- list(

ntrees = c(10, 20, 30),

max_depth = c(3, 5, 10),

min_rows = c(3, 5, 10),

learn_rate = c(0.01, 0.1, 0.5))

gbm_grid1 <- h2o.grid(

"gbm",

x = x,

y = y,

grid_id = "gbm_grid1",

training_frame = train,

nfolds=5,

seed = 1,

hyper_params= gbm_params1)

time_search <- paste(

round((proc.time()[3] - time0)/60), "minutes")

cat("Time used:", time_search, "\n")

gbm_gridperf1 <- h2o.getGrid(

grid_id = "gbm_grid1",

sort_by = "rmse",

decreasing = FALSE)

gbm_gridperf1@summary_table

Hyper-Parameter Search Summary: ordered by increasing rmse

learn_rate max_depth min_rows ntrees model_ids rmse

1 0.50000 10.00000 3.00000 10.00000 gbm_grid1_model_9 353.58941

2 0.50000 10.00000 3.00000 20.00000 gbm_grid1_model_36 355.47727

3 0.50000 10.00000 3.00000 30.00000 gbm_grid1_model_63 355.57809

4 0.10000 5.00000 3.00000 30.00000 gbm_grid1_model_59 356.84165

5 0.10000 10.00000 3.00000 30.00000 gbm_grid1_model_62 357.29143

---

learn_rate max_depth min_rows ntrees model_ids rmse

76 0.01000 3.00000 10.00000 10.00000 gbm_grid1_model_19 480.13855

77 0.01000 5.00000 3.00000 10.00000 gbm_grid1_model_4 480.30282

78 0.01000 5.00000 5.00000 10.00000 gbm_grid1_model_13 481.28910

79 0.01000 3.00000 5.00000 10.00000 gbm_grid1_model_10 481.49020

80 0.01000 10.00000 5.00000 10.00000 gbm_grid1_model_16 481.72192

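81 0.01000 3.00000 3.00000 10.00000 gbm_grid1_model_1 481.72711

After the grid search finishes, h2o.getGrid() retrieves the models corresponding to the grid parameters and can display them sorted by metrics such as RMSE or AUC. Individual models can be accessed through their model IDs.

The best parameter combination is: ntrees = 10; max_depth = 10; learn_rate = 0.5; min_rows = 3. Its cross-validated RMSE is 354.

The current best model:

Variable importance for this model:

Results omitted; the ranking changes considerably from that of gbm1.

Search again around the best combination, this time with the random discrete search strategy, a denser grid, and a time limit of 5 minutes: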

time0 <- proc.time()[3]

gbm_params2 <- list(

ntrees = seq(5, 50, by=5),

max_depth = seq(1, 20, by=1),

min_rows = seq(2, 20, by=1),

learn_rate = c(0.01*(5:9), 0.1*(1:5)))

search_criteria2 <- list(

strategy = "RandomDiscrete",

max_runtime_secs = 300)

gbm_grid2 <- h2o.grid(

"gbm",

x = x,

y = y,

grid_id = "gbm_grid2",

training_frame = train,

nfolds = 5,

seed = 1,

hyper_params= gbm_params2,

search_criteria = search_criteria2)

time_search <- paste(

round((proc.time()[3] - time0)/60), "minutes")

cat("Time used:", time_search, "\n")

gbm_gridperf2 <- h2o.getGrid(

grid_id = "gbm_grid2",

sort_by = "rmse",

decreasing = FALSE)

gbm_gridperf2@summary_table

Hyper-Parameter Search Summary: ordered by increasing rmse

learn_rate max_depth min_rows ntrees model_ids rmse

1 0.10000 3.00000 2.00000 30.00000 gbm_grid2_model_820 344.00923

2 0.05000 4.00000 2.00000 35.00000 gbm_grid2_model_1151 344.84749

3 0.50000 9.00000 3.00000 5.00000 gbm_grid2_model_1033 344.85596

4 0.07000 15.00000 3.00000 50.00000 gbm_grid2_model_884 346.27286

5 0.07000 18.00000 3.00000 40.00000 gbm_grid2_model_675 347.23740

---

learn_rate max_depth min_rows ntrees model_ids rmse

1353 0.05000 18.00000 6.00000 5.00000 gbm_grid2_model_1197 455.81170

1354 0.05000 2.00000 2.00000 5.00000 gbm_grid2_model_1121 456.76030

1355 0.05000 2.00000 3.00000 5.00000 gbm_grid2_model_1305 458.97621

1356 0.08000 1.00000 5.00000 5.00000 gbm_grid2_model_571 459.82785

1357 0.06000 1.00000 5.00000 5.00000 gbm_grid2_model_793 469.72791

1358 0.05000 1.00000 2.00000 5.00000 gbm_grid2_model_167 473.67680

Best parameter combination: ntrees = 30; max_depth = 3; learn_rate = 0.1; min_rows = 2.

The cross-validated RMSE is 344.
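A quick count shows why random search is used for the denser grid. The sketch below recomputes the size of the full grid from the candidate values in gbm_params2 and compares it with the 1358 models listed in the search summary; the names `n_values`, `n_grid` and `n_tried` are illustrative.

```r
# Candidate values copied from gbm_params2 above.
gbm_params2 <- list(
  ntrees     = seq(5, 50, by = 5),
  max_depth  = seq(1, 20, by = 1),
  min_rows   = seq(2, 20, by = 1),
  learn_rate = c(0.01 * (5:9), 0.1 * (1:5)))

n_values <- lengths(gbm_params2)  # number of candidate values per parameter
n_grid   <- prod(n_values)        # size of the full grid
n_tried  <- 1358                  # models listed in the search summary above

c(full_grid = n_grid, tried = n_tried, share = n_tried / n_grid)
```

The five-minute random search thus visited under 4% of the 38000 possible combinations, yet found a model with a lower cross-validated RMSE than the exhaustive search over the small grid.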

Extract the best model from the tuning results:

Use the best model found to predict on the test set and evaluate it:

H2ORegressionMetrics: gbm

MSE: 77536.85

RMSE: 278.4544

MAE: 175.6084

RMSLE: 0.5015645

Mean Residual Deviance : 77536.85测试集上的RMSE为278, 比较理想。

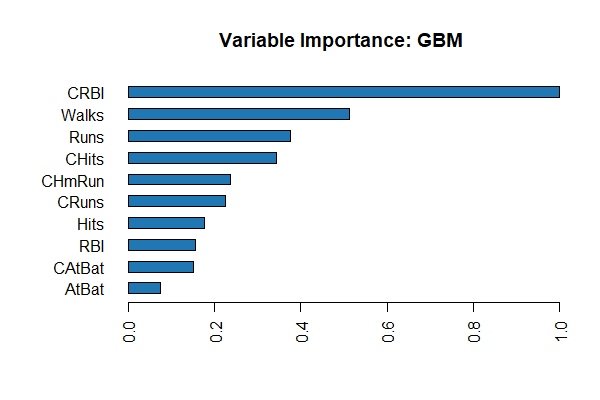

变量重要度分析:

Variable Importances:

Variable Relative Importance Scaled Importance Percentage

1 CRBI 132.466204 1.000000 0.222229

2 Walks 96.518919 0.728631 0.161923

3 CHits 59.025072 0.445586 0.099022

4 CHmRun 55.486735 0.418875 0.093086

5 Runs 50.785759 0.383387 0.085200

6 CRuns 38.727044 0.292354 0.064970

7 CAtBat 33.082905 0.249746 0.055501

8 Hits 25.749126 0.194383 0.043197

9 CWalks 20.566305 0.155257 0.034503

10 RBI 16.308211 0.123112 0.027359

11 Years 15.404481 0.116290 0.025843

12 AtBat 14.144269 0.106776 0.023729

13 Errors 14.052819 0.106086 0.023575

14 PutOuts 10.156549 0.076673 0.017039

15 HmRun 5.738121 0.043318 0.009626

16 Division 4.937515 0.037274 0.008283

17 NewLeague 1.975050 0.014910 0.003313

18 Assists 0.954782 0.007208 0.001602

Importance plot:

Plot omitted.

Predicted values of the response on the test set:

predict

1 423.0851

2 890.0124

3 160.4647

4 815.4056

5 1271.8789

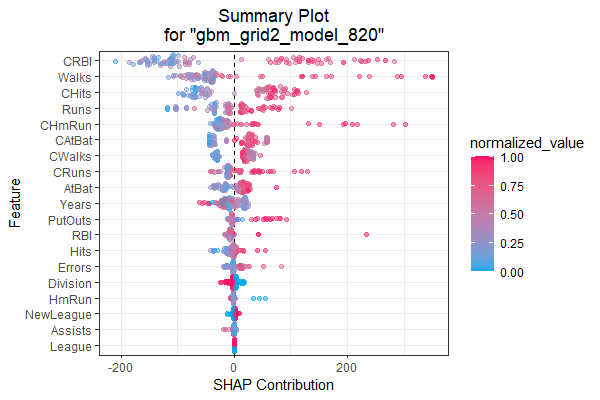

6 181.5200变量解释性分析:

这会产生多个关于每个变量的贡献的图形。 也有一些单个图形的函数, 比如SHAP概况图:

SHAP计算每个观测上每个变量的贡献值, 并对变量的总的贡献由大到小排序, 并用散点图绘制出这些贡献。 结果如:

变量重要度图:

41.7.4 AutoML

H2O提供了一个AutoML功能, 可以自动使用各个机器学习方法进行训练、参数调优、模型比较, 输出占优的多个模型。

用户仅需要指定训练数据集training_frame、因变量y、最多允许训练时间max_runtime_secs,

自变量自动选择为因变量以外的所有变量,

参数调优自动使用交叉验证方法。

示例:

library(h2o)

h2o.init()

train <- h2o.importFile("https://s3.amazonaws.com/erin-data/higgs/higgs_train_10k.csv")

test <- h2o.importFile("https://s3.amazonaws.com/erin-data/higgs/higgs_test_5k.csv")

y <- "response"

x <- setdiff(names(train), y)

# For classification the response must be a factor

train[, y] <- as.factor(train[, y])

test[, y] <- as.factor(test[, y])

# Limit the run to 5 minutes

aml <- h2o.automl(

x = x, y = y,

training_frame = train,

#max_models = 20,

max_runtime_secs = 300,

seed = 1)

# View the AutoML Leaderboard

lb <- aml@leaderboard

print(lb, n = nrow(lb))

model_id auc logloss aucpr

1 StackedEnsemble_AllModels_3_AutoML_1_20230717_82125 0.7896537 0.5492908 0.8084317

2 StackedEnsemble_AllModels_4_AutoML_1_20230717_82125 0.7888052 0.5503257 0.8076245

3 StackedEnsemble_AllModels_2_AutoML_1_20230717_82125 0.7874863 0.5515817 0.8072801

4 StackedEnsemble_AllModels_1_AutoML_1_20230717_82125 0.7867515 0.5522508 0.8069401

5 StackedEnsemble_BestOfFamily_4_AutoML_1_20230717_82125 0.7854556 0.5534061 0.8053178

6 StackedEnsemble_BestOfFamily_5_AutoML_1_20230717_82125 0.7847936 0.5542375 0.8050583

7 StackedEnsemble_BestOfFamily_3_AutoML_1_20230717_82125 0.7832484 0.5556922 0.8029427

8 StackedEnsemble_BestOfFamily_2_AutoML_1_20230717_82125 0.7819484 0.5568627 0.8017783

9 StackedEnsemble_AllModels_5_AutoML_1_20230717_82125 0.7817324 0.5638011 0.7997335

10 StackedEnsemble_BestOfFamily_1_AutoML_1_20230717_82125 0.7800970 0.5592433 0.7990314

11 GBM_grid_1_AutoML_1_20230717_82125_model_12 0.7800394 0.5595114 0.8014000

12 GBM_grid_1_AutoML_1_20230717_82125_model_9 0.7797381 0.5625036 0.7983718

13 GBM_1_AutoML_1_20230717_82125 0.7795121 0.5602557 0.7995356

14 GBM_2_AutoML_1_20230717_82125 0.7792939 0.5608256 0.7984392

15 GBM_grid_1_AutoML_1_20230717_82125_model_17 0.7790189 0.5649027 0.7959446

16 GBM_grid_1_AutoML_1_20230717_82125_model_16 0.7788996 0.5624376 0.7947606

17 GBM_5_AutoML_1_20230717_82125 0.7788048 0.5617556 0.7967867

18 GBM_grid_1_AutoML_1_20230717_82125_model_19 0.7786671 0.5639216 0.7971413

19 StackedEnsemble_BestOfFamily_6_AutoML_1_20230717_82125 0.7779028 0.5602988 0.7989296

20 GBM_grid_1_AutoML_1_20230717_82125_model_2 0.7778602 0.5646552 0.7953585

21 GBM_grid_1_AutoML_1_20230717_82125_model_14 0.7775555 0.5668371 0.7924693

22 GBM_grid_1_AutoML_1_20230717_82125_model_6 0.7772192 0.5642876 0.7954070

23 GBM_grid_1_AutoML_1_20230717_82125_model_7 0.7764426 0.5701478 0.7923477

24 GBM_3_AutoML_1_20230717_82125 0.7751876 0.5650460 0.7946101

25 GBM_4_AutoML_1_20230717_82125 0.7742870 0.5656442 0.7963992

26 GBM_grid_1_AutoML_1_20230717_82125_model_11 0.7734054 0.5716275 0.7919521

27 GBM_grid_1_AutoML_1_20230717_82125_model_3 0.7729262 0.5681808 0.7911955

28 GBM_grid_1_AutoML_1_20230717_82125_model_4 0.7705223 0.5692442 0.7890998

29 GBM_grid_1_AutoML_1_20230717_82125_model_5 0.7704555 0.5732127 0.7881083

30 XRT_1_AutoML_1_20230717_82125 0.7642216 0.5814393 0.7820797

31 DRF_1_AutoML_1_20230717_82125 0.7631956 0.5802385 0.7840833

32 GBM_grid_1_AutoML_1_20230717_82125_model_10 0.7603439 0.5805147 0.7762872

33 GBM_grid_1_AutoML_1_20230717_82125_model_8 0.7532375 0.5947734 0.7703927

34 GBM_grid_1_AutoML_1_20230717_82125_model_15 0.7532095 0.5887163 0.7719831

35 GBM_grid_1_AutoML_1_20230717_82125_model_1 0.7476579 0.5915102 0.7632106

36 GBM_grid_1_AutoML_1_20230717_82125_model_13 0.7426757 0.6044879 0.7619594

37 DeepLearning_grid_2_AutoML_1_20230717_82125_model_1 0.7297311 0.6137454 0.7358833

38 DeepLearning_grid_1_AutoML_1_20230717_82125_model_1 0.7265855 0.6634738 0.7275126

39 GBM_grid_1_AutoML_1_20230717_82125_model_18 0.7245035 0.6152842 0.7447474

40 DeepLearning_grid_3_AutoML_1_20230717_82125_model_1 0.7160532 0.6232399 0.7192921

41 DeepLearning_grid_1_AutoML_1_20230717_82125_model_2 0.7142102 0.6319313 0.7162592

42 DeepLearning_1_AutoML_1_20230717_82125 0.7081655 0.6274959 0.7123640

43 DeepLearning_grid_1_AutoML_1_20230717_82125_model_3 0.7042074 0.6544330 0.7070402

44 GLM_1_AutoML_1_20230717_82125 0.6826483 0.6385202 0.6807189

mean_per_class_error rmse mse

1 0.3281307 0.4317858 0.1864389

2 0.3212199 0.4322351 0.1868272

3 0.3315550 0.4328426 0.1873527

4 0.3280114 0.4331952 0.1876580

5 0.3354530 0.4338531 0.1882285

6 0.3293683 0.4341424 0.1884796

7 0.3363375 0.4347748 0.1890291

8 0.3316707 0.4353819 0.1895574

9 0.3214789 0.4371155 0.1910699

10 0.3486560 0.4362937 0.1903522

11 0.3367738 0.4365091 0.1905402

12 0.3330875 0.4371565 0.1911058

13 0.3275111 0.4366086 0.1906271

14 0.3278906 0.4367848 0.1907810

15 0.3285993 0.4379804 0.1918268

16 0.3347428 0.4372481 0.1911859

17 0.3343263 0.4371076 0.1910630

18 0.3363475 0.4377541 0.1916287

19 0.3301687 0.4370242 0.1909902

20 0.3337600 0.4380880 0.1919211

21 0.3236620 0.4387509 0.1925023

22 0.3248413 0.4380742 0.1919090

23 0.3332508 0.4402129 0.1937874

24 0.3302285 0.4388332 0.1925746

25 0.3456632 0.4393214 0.1930033

26 0.3288446 0.4411005 0.1945696

27 0.3228082 0.4399974 0.1935977

28 0.3497369 0.4407917 0.1942973

29 0.3286788 0.4424870 0.1957948

30 0.3474700 0.4457808 0.1987205

31 0.3492529 0.4455428 0.1985084

32 0.3560809 0.4456789 0.1986297

33 0.3445959 0.4515736 0.2039187

34 0.3537379 0.4498069 0.2023263

35 0.3594190 0.4510526 0.2034484

36 0.3540427 0.4563462 0.2082518

37 0.3674737 0.4596807 0.2113064

38 0.3713030 0.4701575 0.2210480

39 0.3957685 0.4618223 0.2132799

40 0.3822944 0.4643025 0.2155768

41 0.3894767 0.4655305 0.2167187

42 0.3786903 0.4666819 0.2177920

43 0.4008949 0.4726119 0.2233620

44 0.3972341 0.4726827 0.2234289

41.8 Appendix

41.8.1 Hitters data

| | AtBat | Hits | HmRun | Runs | RBI | Walks | Years | CAtBat | CHits | CHmRun | CRuns | CRBI | CWalks | League | Division | PutOuts | Assists | Errors | Salary | NewLeague |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| -Andy Allanson | 293 | 66 | 1 | 30 | 29 | 14 | 1 | 293 | 66 | 1 | 30 | 29 | 14 | A | E | 446 | 33 | 20 | NA | A |

| -Alan Ashby | 315 | 81 | 7 | 24 | 38 | 39 | 14 | 3449 | 835 | 69 | 321 | 414 | 375 | N | W | 632 | 43 | 10 | 475.000 | N |

| -Alvin Davis | 479 | 130 | 18 | 66 | 72 | 76 | 3 | 1624 | 457 | 63 | 224 | 266 | 263 | A | W | 880 | 82 | 14 | 480.000 | A |

| -Andre Dawson | 496 | 141 | 20 | 65 | 78 | 37 | 11 | 5628 | 1575 | 225 | 828 | 838 | 354 | N | E | 200 | 11 | 3 | 500.000 | N |

| -Andres Galarraga | 321 | 87 | 10 | 39 | 42 | 30 | 2 | 396 | 101 | 12 | 48 | 46 | 33 | N | E | 805 | 40 | 4 | 91.500 | N |

| -Alfredo Griffin | 594 | 169 | 4 | 74 | 51 | 35 | 11 | 4408 | 1133 | 19 | 501 | 336 | 194 | A | W | 282 | 421 | 25 | 750.000 | A |

| -Al Newman | 185 | 37 | 1 | 23 | 8 | 21 | 2 | 214 | 42 | 1 | 30 | 9 | 24 | N | E | 76 | 127 | 7 | 70.000 | A |

| -Argenis Salazar | 298 | 73 | 0 | 24 | 24 | 7 | 3 | 509 | 108 | 0 | 41 | 37 | 12 | A | W | 121 | 283 | 9 | 100.000 | A |

| -Andres Thomas | 323 | 81 | 6 | 26 | 32 | 8 | 2 | 341 | 86 | 6 | 32 | 34 | 8 | N | W | 143 | 290 | 19 | 75.000 | N |

| -Andre Thornton | 401 | 92 | 17 | 49 | 66 | 65 | 13 | 5206 | 1332 | 253 | 784 | 890 | 866 | A | E | 0 | 0 | 0 | 1100.000 | A |

| -Alan Trammell | 574 | 159 | 21 | 107 | 75 | 59 | 10 | 4631 | 1300 | 90 | 702 | 504 | 488 | A | E | 238 | 445 | 22 | 517.143 | A |

| -Alex Trevino | 202 | 53 | 4 | 31 | 26 | 27 | 9 | 1876 | 467 | 15 | 192 | 186 | 161 | N | W | 304 | 45 | 11 | 512.500 | N |

| -Andy VanSlyke | 418 | 113 | 13 | 48 | 61 | 47 | 4 | 1512 | 392 | 41 | 205 | 204 | 203 | N | E | 211 | 11 | 7 | 550.000 | N |

| -Alan Wiggins | 239 | 60 | 0 | 30 | 11 | 22 | 6 | 1941 | 510 | 4 | 309 | 103 | 207 | A | E | 121 | 151 | 6 | 700.000 | A |

| -Bill Almon | 196 | 43 | 7 | 29 | 27 | 30 | 13 | 3231 | 825 | 36 | 376 | 290 | 238 | N | E | 80 | 45 | 8 | 240.000 | N |

| -Billy Beane | 183 | 39 | 3 | 20 | 15 | 11 | 3 | 201 | 42 | 3 | 20 | 16 | 11 | A | W | 118 | 0 | 0 | NA | A |

| -Buddy Bell | 568 | 158 | 20 | 89 | 75 | 73 | 15 | 8068 | 2273 | 177 | 1045 | 993 | 732 | N | W | 105 | 290 | 10 | 775.000 | N |

| -Buddy Biancalana | 190 | 46 | 2 | 24 | 8 | 15 | 5 | 479 | 102 | 5 | 65 | 23 | 39 | A | W | 102 | 177 | 16 | 175.000 | A |

| -Bruce Bochte | 407 | 104 | 6 | 57 | 43 | 65 | 12 | 5233 | 1478 | 100 | 643 | 658 | 653 | A | W | 912 | 88 | 9 | NA | A |

| -Bruce Bochy | 127 | 32 | 8 | 16 | 22 | 14 | 8 | 727 | 180 | 24 | 67 | 82 | 56 | N | W | 202 | 22 | 2 | 135.000 | N |

| -Barry Bonds | 413 | 92 | 16 | 72 | 48 | 65 | 1 | 413 | 92 | 16 | 72 | 48 | 65 | N | E | 280 | 9 | 5 | 100.000 | N |

| -Bobby Bonilla | 426 | 109 | 3 | 55 | 43 | 62 | 1 | 426 | 109 | 3 | 55 | 43 | 62 | A | W | 361 | 22 | 2 | 115.000 | N |

| -Bob Boone | 22 | 10 | 1 | 4 | 2 | 1 | 6 | 84 | 26 | 2 | 9 | 9 | 3 | A | W | 812 | 84 | 11 | NA | A |

| -Bob Brenly | 472 | 116 | 16 | 60 | 62 | 74 | 6 | 1924 | 489 | 67 | 242 | 251 | 240 | N | W | 518 | 55 | 3 | 600.000 | N |

| -Bill Buckner | 629 | 168 | 18 | 73 | 102 | 40 | 18 | 8424 | 2464 | 164 | 1008 | 1072 | 402 | A | E | 1067 | 157 | 14 | 776.667 | A |

| -Brett Butler | 587 | 163 | 4 | 92 | 51 | 70 | 6 | 2695 | 747 | 17 | 442 | 198 | 317 | A | E | 434 | 9 | 3 | 765.000 | A |

| -Bob Dernier | 324 | 73 | 4 | 32 | 18 | 22 | 7 | 1931 | 491 | 13 | 291 | 108 | 180 | N | E | 222 | 3 | 3 | 708.333 | N |